Hadoop Ecosystem: Fully Distributed ZooKeeper Deployment


Author: 尹正杰 (yinzhengjie)

Copyright notice: this is an original work. Reproduction is not permitted; violations will be pursued legally.

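This deployment builds on a Hadoop high-availability (HA) setup; for how to deploy Hadoop HA, see https://www.cnblogs.com/yinzhengjie/p/9070017.html. This post combines the Hadoop HA configuration with a fully distributed ZooKeeper cluster.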

I. Distributed coordination frameworks

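1>. Benefits of distributed frameworks

a>. Reliability:

The failure of one or a few nodes does not bring down the whole cluster.

b>. Scalability:

Cluster performance can be increased by adding hosts dynamically and changing only a few configuration files.

c>. Transparency:

The complexity of the system is hidden; users see it as a single application.

2>. Drawbacks of distributed frameworks

a>. Race conditions:

Several hosts try to run an application that should be run by only one host.

b>. Deadlock:

Two processes each wait for the other to finish.

c>. Inconsistency:

Part of the data is lost.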

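II. Introduction to ZooKeeper

1>. What ZooKeeper is for

ZooKeeper was created to address these drawbacks of distributed systems. It is currently one of the most popular distributed coordination frameworks in the big-data world.

2>. ZooKeeper features

a>. Naming service:

Identifies every node in the cluster; a node can register itself and obtain a unique identifier.

b>. Configuration management:

Stores configuration files so that they can be shared.

c>. Leader election:

Elects a leader and followers according to its own algorithm.

d>. Lock and synchronization service:

While a file is being modified it is locked, which prevents several users from writing to it at the same time.

e>. Highly available data registry:

The data stored in a single node (znode) cannot exceed 1 MB by default (the limit is controlled by the jute.maxbuffer setting). The limit applies to each znode on its own, not to a node and all of its children combined.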
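ZooKeeper's documented lock recipe works roughly like this: every client creates an ephemeral, sequential znode under a common lock node; the client whose znode has the lowest sequence number holds the lock, and every other client watches only the znode immediately before its own, so a release wakes up a single waiter. The bash sketch below only simulates that ordering decision on a list of child names (the `lock-` prefix and the sample names are hypothetical); it does not connect to ZooKeeper.

```shell
#!/usr/bin/env bash
# Simulates only the ordering step of the ZooKeeper lock recipe.
# Arguments are child znode names such as lock-0000000003 (hypothetical).

# Print the child that holds the lock: the one with the lowest sequence number.
lock_holder() {
  printf '%s\n' "$@" | sort -t- -k2,2n | head -n 1
}

# Print the child that "$1" should watch: its immediate predecessor in
# sequence order. Prints nothing if "$1" already holds the lock.
watch_target() {
  local me=$1 prev="" node
  shift
  for node in $(printf '%s\n' "$@" | sort -t- -k2,2n); do
    if [ "$node" = "$me" ]; then
      [ -n "$prev" ] && echo "$prev"
      return 0
    fi
    prev=$node
  done
  return 1  # "$me" is not among the children
}

children="lock-0000000007 lock-0000000003 lock-0000000005"
lock_holder $children                    # prints lock-0000000003
watch_target lock-0000000007 $children   # prints lock-0000000005
```

Watching only the predecessor (instead of the lock holder) is the point of the recipe: when a lock is released, exactly one client is notified rather than all of them.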

3>. Architecture of the ZooKeeper deployment in this post

4>. How leader election works

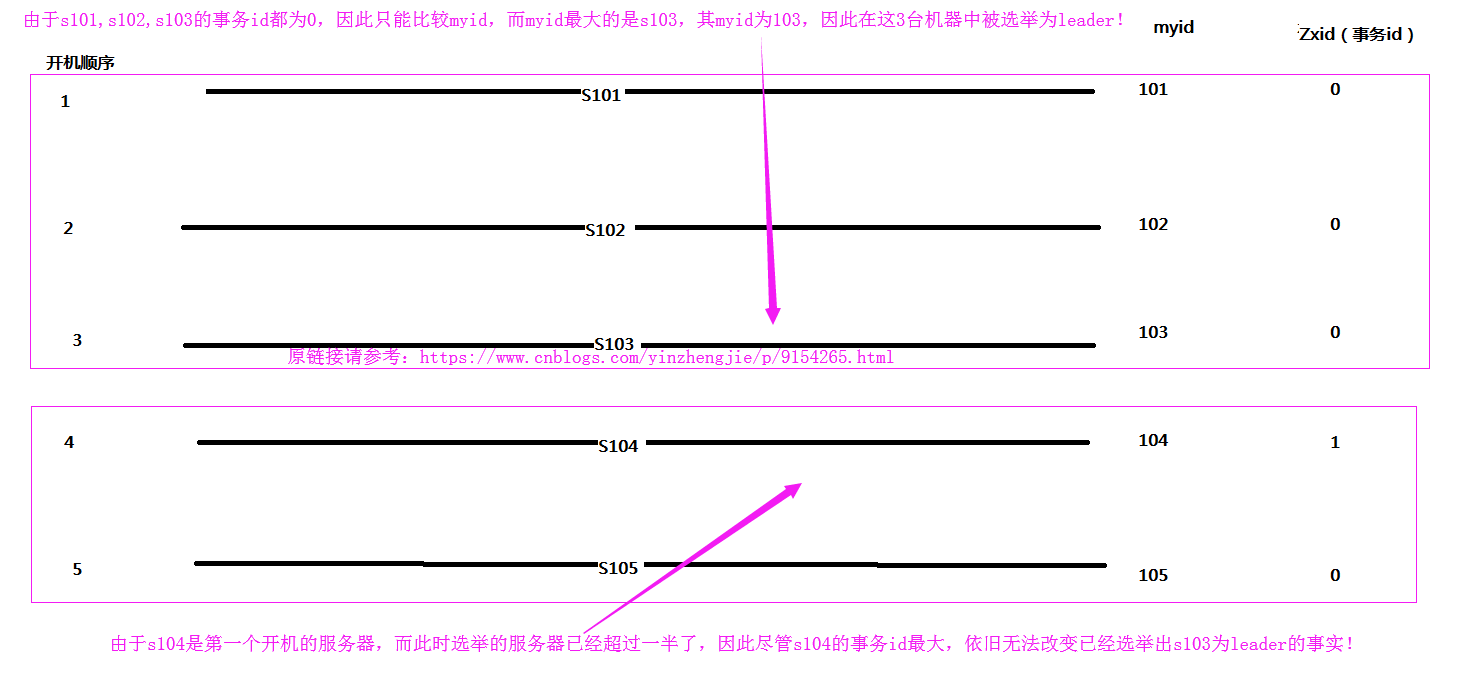

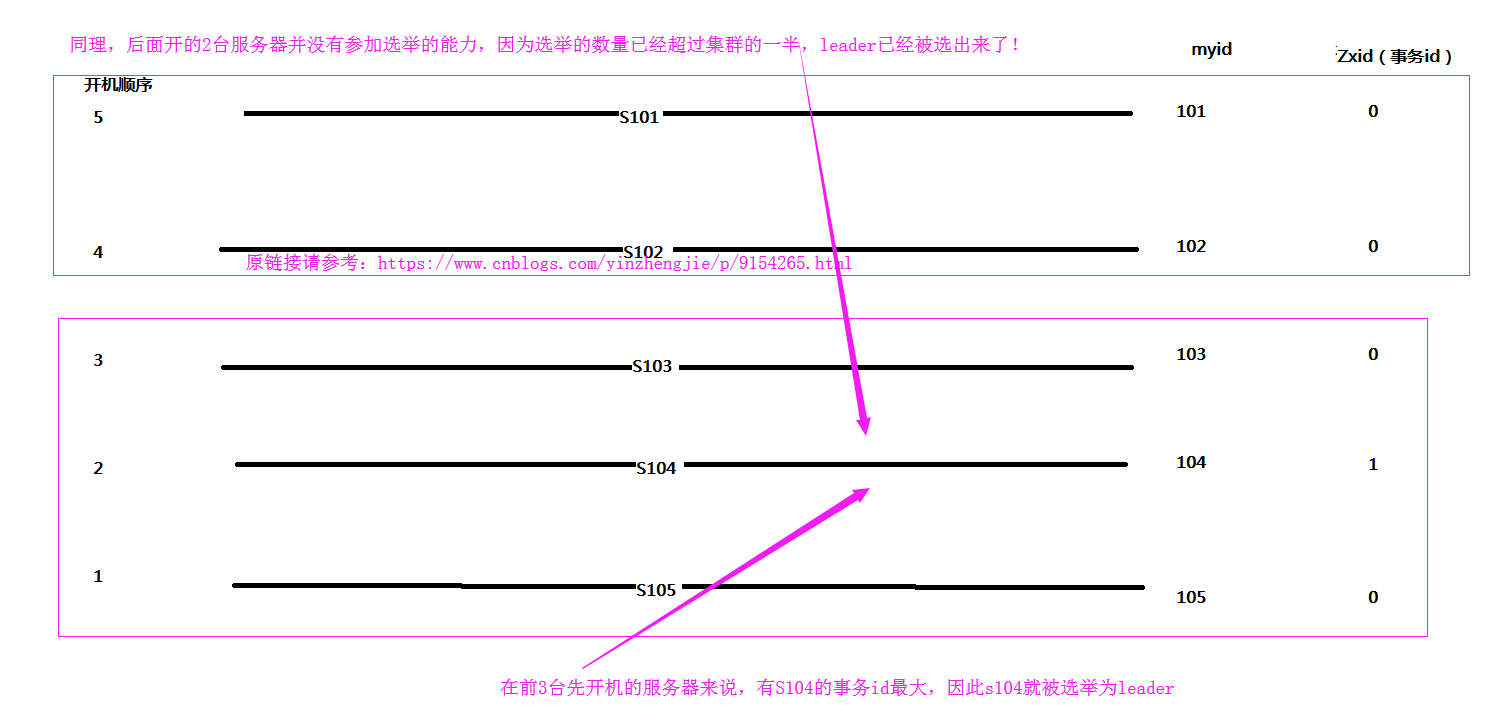

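When electing a leader, ZooKeeper first compares the transaction id (zxid); if the zxids are equal, it compares the myid. As soon as more than half of the servers in the cluster have voted for the same candidate, that candidate becomes the leader of the whole cluster.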

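To make the sentence above easier to follow, I drew two figures. Leader election when the transaction ids are equal:

Leader election when the transaction ids differ: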
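The election rule described in this subsection (compare zxid, then myid; a strict majority decides) can be simulated in a few lines of bash. This is a simplified sketch under stated assumptions: votes are given as hypothetical `zxid:myid` pairs with decimal zxids, and the election epoch that ZooKeeper's FastLeaderElection also compares is ignored.

```shell
#!/usr/bin/env bash
# Simplified leader-election comparison (the election epoch is ignored).

# Succeeds if vote A (zxid_a, myid_a) beats vote B (zxid_b, myid_b):
# the higher zxid wins; if the zxids are equal, the higher myid wins.
vote_beats() {
  local zxid_a=$1 myid_a=$2 zxid_b=$3 myid_b=$4
  if (( zxid_a != zxid_b )); then
    (( zxid_a > zxid_b ))
  else
    (( myid_a > myid_b ))
  fi
}

# Smallest number of votes that is a strict majority of n servers.
quorum_size() {
  echo $(( $1 / 2 + 1 ))
}

# Print the myid of the best vote among "zxid:myid" pairs.
elect() {
  local best="" pair
  for pair in "$@"; do
    if [ -z "$best" ] ||
       vote_beats "${pair%%:*}" "${pair##*:}" "${best%%:*}" "${best##*:}"; then
      best=$pair
    fi
  done
  echo "${best##*:}"
}

elect 5:102 5:103 5:104   # equal zxids, highest myid wins: prints 104
elect 7:102 5:103 5:104   # highest zxid wins: prints 102
quorum_size 3             # prints 2 (a 3-node ensemble tolerates 1 failure)
```

With all three votes known, s104 (the highest myid) would win. In a real cluster the servers start one after another, so the first two servers that form a majority can already elect a leader; that is likely why s103, not s104, shows up as leader in the status output later in this post.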

III. Fully distributed ZooKeeper deployment

1>. Download the ZooKeeper package

[yinzhengjie@s101 data]$ sudo yum -y install wget

[sudo] password for yinzhengjie:

Loaded plugins: fastestmirror

Repodata is over weeks old. Install yum-cron? Or run: yum makecache fast

base | 3.6 kB ::

extras | 3.4 kB ::

updates | 3.4 kB ::

(/): extras//x86_64/primary_db | kB ::

(/): updates//x86_64/primary_db | 2.7 MB ::

Determining fastest mirrors

* base: mirrors.huaweicloud.com

* extras: mirrors.huaweicloud.com

* updates: mirrors.huaweicloud.com

Resolving Dependencies

--> Running transaction check

---> Package wget.x86_64 :1.14-.el7_4. will be installed

--> Finished Dependency Resolution

Dependencies Resolved

===============================================================================================================================

Package Arch Version Repository Size

===============================================================================================================================

Installing:

wget x86_64 1.14-.el7_4. base k

Transaction Summary

===============================================================================================================================

Install 1 Package

Total download size: k

Installed size: 2.0 M

Downloading packages:

wget-1.14-.el7_4..x86_64.rpm | kB ::

Running transaction check

Running transaction test

Transaction test succeeded

Running transaction

Installing : wget-1.14-.el7_4..x86_64 /

Verifying : wget-1.14-.el7_4..x86_64 /

Installed:

wget.x86_64 :1.14-.el7_4.

Complete!

[yinzhengjie@s101 data]$


[yinzhengjie@s101 data]$ wget http://mirrors.hust.edu.cn/apache/zookeeper/zookeeper-3.4.12/zookeeper-3.4.12.tar.gz

---- ::-- http://mirrors.hust.edu.cn/apache/zookeeper/zookeeper-3.4.12/zookeeper-3.4.12.tar.gz

Resolving mirrors.hust.edu.cn (mirrors.hust.edu.cn)... 202.114.18.160

Connecting to mirrors.hust.edu.cn (mirrors.hust.edu.cn)|202.114.18.160|:... connected.

HTTP request sent, awaiting response... OK

Length: (35M) [application/octet-stream]

Saving to: ‘zookeeper-3.4.12.tar.gz’

%[=====================================================================================>] ,, 132KB/s in 4m 4s

-- :: ( KB/s) - ‘zookeeper-3.4.12.tar.gz’ saved [/]

[yinzhengjie@s101 data]$ ll

total

-rw-r--r--. yinzhengjie yinzhengjie Jun : seq

-rw-rw-r--. yinzhengjie yinzhengjie Apr : zookeeper-3.4.12.tar.gz

[yinzhengjie@s101 data]$


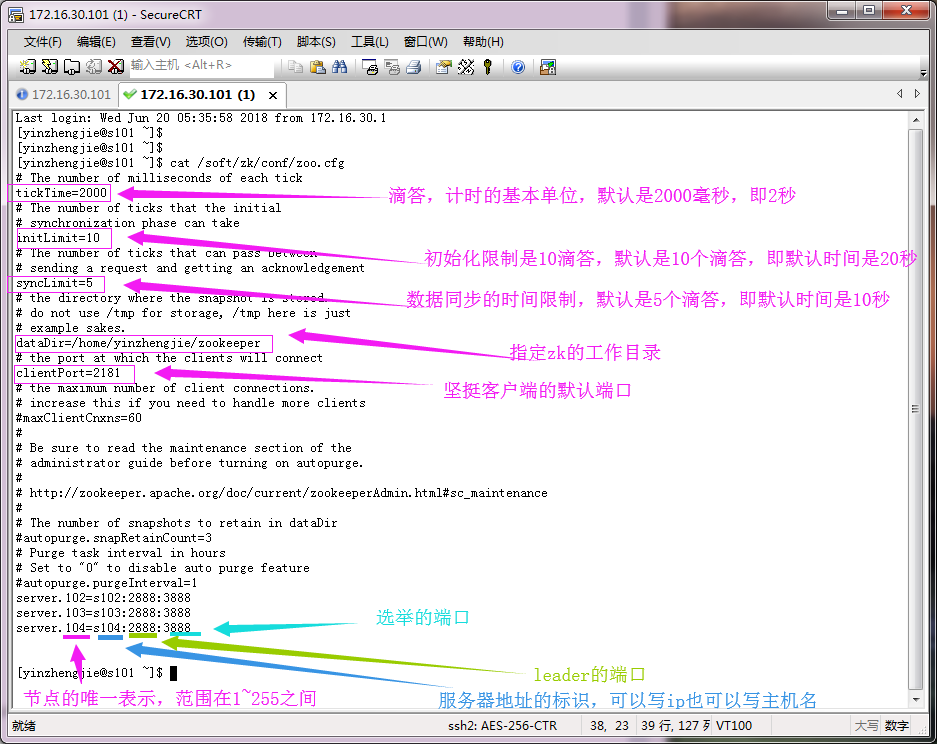

2>. Modify the configuration file

[yinzhengjie@s101 ~]$ cat /soft/zk/conf/zoo.cfg
# The number of milliseconds of each tick
tickTime=2000
# The number of ticks that the initial
# synchronization phase can take
initLimit=10
# The number of ticks that can pass between
# sending a request and getting an acknowledgement
syncLimit=5
# the directory where the snapshot is stored.
# do not use /tmp for storage, /tmp here is just
# example sakes.
dataDir=/home/yinzhengjie/zookeeper
# the port at which the clients will connect
clientPort=2181
# the maximum number of client connections.
# increase this if you need to handle more clients
#maxClientCnxns=60
#
# Be sure to read the maintenance section of the
# administrator guide before turning on autopurge.
#
# http://zookeeper.apache.org/doc/current/zookeeperAdmin.html#sc_maintenance
#
# The number of snapshots to retain in dataDir
#autopurge.snapRetainCount=3
# Purge task interval in hours
# Set to "0" to disable auto purge feature
#autopurge.purgeInterval=1
server.102=s102:2888:3888
server.103=s103:2888:3888
server.104=s104:2888:3888
[yinzhengjie@s101 ~]$
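Every server.N entry must match the number stored in that host's myid file, and in this cluster N is simply the numeric part of the hostname (s102 gives 102). A small bash helper can generate the entries so the two never drift apart. It is a hypothetical helper, and the 2888 (quorum) and 3888 (leader-election) ports are the conventional ZooKeeper defaults, assumed here.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print one zoo.cfg server.N line per host, taking N
# from the digits in the hostname (s102 gives 102) so it matches myid.
# Ports 2888 (quorum) and 3888 (leader election) are assumed defaults.
gen_server_lines() {
  local host id
  for host in "$@"; do
    id=${host//[!0-9]/}   # keep only the digits of the hostname
    echo "server.${id}=${host}:2888:3888"
  done
}

# Append the output to zoo.cfg, e.g.:
#   gen_server_lines s102 s103 s104 >> /soft/zk/conf/zoo.cfg
gen_server_lines s102 s103 s104
```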


3>. Distribute the configuration files

[yinzhengjie@s101 ~]$ more `which xrsync.sh`

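#!/bin/bash
#@author :yinzhengjie
#blog:http://www.cnblogs.com/yinzhengjie
#EMAIL:y1053419035@qq.com

# Check that the user passed an argument
if [ $# -lt 1 ];then
    echo "Please pass a file or directory path"
    exit 1
fi

# Path of the file or directory to sync
file=$1
# Name of the file (last path component)
filename=$(basename "$file")
# Parent directory
dirpath=$(dirname "$file")
# Resolve the parent directory to an absolute physical path
cd "$dirpath" || exit 1
fullpath=$(pwd -P)

# Sync the file to the DataNodes
for (( i=102;i<=105;i++ ))
do
    # Switch the terminal color to green
    tput setaf 2
    echo =========== s$i $file ===========
    # Restore the original terminal color (white/grey)
    tput setaf 7
    # Copy to the remote host
    rsync -lr "$filename" "$(whoami)"@s$i:"$fullpath"
    # Check whether the command succeeded
    if [ $? -eq 0 ];then
        echo "Command executed successfully"
    fi
done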

[yinzhengjie@s101 ~]$
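The script splits its argument into a parent directory and a name so that rsync recreates the same absolute path on each target. A dry-run variant that only prints the commands makes that visible before anything is copied; `xrsync_preview` is a hypothetical helper, and the user name and host range are passed in instead of being hard-coded.

```shell
#!/usr/bin/env bash
# Hypothetical dry run of xrsync.sh: print the rsync command for each host
# instead of running it.
# Usage: xrsync_preview <path> <user> <first host number> <last host number>
xrsync_preview() {
  local file=$1 user=$2 first=$3 last=$4
  local filename fullpath i
  filename=$(basename "$file")
  # Resolve the parent directory to an absolute, symlink-free path
  fullpath=$(cd "$(dirname "$file")" && pwd -P) || return 1
  for (( i = first; i <= last; i++ )); do
    echo "rsync -lr ${filename} ${user}@s${i}:${fullpath}"
  done
}

# On s101 this would be: xrsync_preview /soft/zk yinzhengjie 102 105
xrsync_preview /tmp yinzhengjie 102 105
```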


[yinzhengjie@s101 ~]$ xrsync.sh /soft/zk

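=========== s102 /soft/zk ===========

Command executed successfully

=========== s103 /soft/zk ===========

Command executed successfully

=========== s104 /soft/zk ===========

Command executed successfully

=========== s105 /soft/zk ===========

Command executed successfully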

[yinzhengjie@s101 ~]$


[yinzhengjie@s101 ~]$ xrsync.sh /soft/zookeeper-3.4.12/

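=========== s102 /soft/zookeeper-3.4.12/ ===========

Command executed successfully

=========== s103 /soft/zookeeper-3.4.12/ ===========

Command executed successfully

=========== s104 /soft/zookeeper-3.4.12/ ===========

Command executed successfully

=========== s105 /soft/zookeeper-3.4.12/ ===========

Command executed successfully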

[yinzhengjie@s101 ~]$


[yinzhengjie@s101 ~]$ su root

Password:

[root@s101 yinzhengjie]# xrsync.sh /etc/profile

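=========== s102 /etc/profile ===========

Command executed successfully

=========== s103 /etc/profile ===========

Command executed successfully

=========== s104 /etc/profile ===========

Command executed successfully

=========== s105 /etc/profile ===========

Command executed successfully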

[root@s101 yinzhengjie]# exit

exit

[yinzhengjie@s101 ~]$


4>. Register the ID (myid) on each ZooKeeper node

[yinzhengjie@s101 ~]$ more `which xcall.sh`

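#!/bin/bash
#@author :yinzhengjie
#blog:http://www.cnblogs.com/yinzhengjie
#EMAIL:y1053419035@qq.com

# Check that the user passed a command
if [ $# -lt 1 ];then
    echo "Please pass a command to run"
    exit 1
fi

# Command entered by the user
cmd=$@

for (( i=101;i<=105;i++ ))
do
    # Switch the terminal color to green
    tput setaf 2
    echo ============= s$i $cmd ============
    # Restore the original terminal color (white/grey)
    tput setaf 7
    # Run the command on the remote host
    ssh s$i $cmd
    # Check whether the command succeeded
    if [ $? -eq 0 ];then
        echo "Command executed successfully"
    fi
done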

[yinzhengjie@s101 ~]$


[yinzhengjie@s101 ~]$ xcall.sh mkdir ~/zookeeper

============= s101 mkdir /home/yinzhengjie/zookeeper ============

Command executed successfully

============= s102 mkdir /home/yinzhengjie/zookeeper ============

Command executed successfully

============= s103 mkdir /home/yinzhengjie/zookeeper ============

Command executed successfully

============= s104 mkdir /home/yinzhengjie/zookeeper ============

Command executed successfully

============= s105 mkdir /home/yinzhengjie/zookeeper ============

Command executed successfully

[yinzhengjie@s101 ~]$


[yinzhengjie@s101 ~]$ xcall.sh touch ~/zookeeper/myid

============= s101 touch /home/yinzhengjie/zookeeper/myid ============

Command executed successfully

============= s102 touch /home/yinzhengjie/zookeeper/myid ============

Command executed successfully

============= s103 touch /home/yinzhengjie/zookeeper/myid ============

Command executed successfully

============= s104 touch /home/yinzhengjie/zookeeper/myid ============

Command executed successfully

============= s105 touch /home/yinzhengjie/zookeeper/myid ============

Command executed successfully

[yinzhengjie@s101 ~]$


[yinzhengjie@s101 ~]$ for (( i=102;i<=104;i++ )); do ssh s$i "echo -n $i > ~/zookeeper/myid"; done

[yinzhengjie@s101 ~]$

[yinzhengjie@s102 ~]$ more /home/yinzhengjie/zookeeper/myid

102[yinzhengjie@s102 ~]$

[yinzhengjie@s103 ~]$ more /home/yinzhengjie/zookeeper/myid

103[yinzhengjie@s103 ~]$

[yinzhengjie@s104 ~]$ more /home/yinzhengjie/zookeeper/myid

104[yinzhengjie@s104 ~]$
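Each myid must correspond to a server.N line in zoo.cfg. A quick local check, run on every ZooKeeper node, catches typos before the cluster is started. The function name `check_myid` is hypothetical; the paths in the usage comment are the ones used in this post.

```shell
#!/usr/bin/env bash
# Hypothetical check: does the id in a myid file have a matching
# server.<id>= line in zoo.cfg?
# Usage: check_myid <zoo.cfg> <myid file>
check_myid() {
  local cfg=$1 myid_file=$2 id
  # echo -n writes no trailing newline; strip any whitespace anyway
  id=$(tr -d '[:space:]' < "$myid_file") || return 1
  if [ -n "$id" ] && grep -q "^server\.${id}=" "$cfg"; then
    echo "myid ${id} OK"
  else
    echo "myid '${id}' has no matching server.N line in ${cfg}" >&2
    return 1
  fi
}

# Self-contained demo with temporary files; on a real node run e.g.
#   check_myid /soft/zk/conf/zoo.cfg ~/zookeeper/myid
tmp=$(mktemp -d)
printf 'server.102=s102:2888:3888\nserver.103=s103:2888:3888\n' > "$tmp/zoo.cfg"
printf '102' > "$tmp/myid"
check_myid "$tmp/zoo.cfg" "$tmp/myid"   # prints: myid 102 OK
rm -rf "$tmp"
```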

5>. Write the ZooKeeper start script (/usr/local/bin/xzk.sh; remember to make it executable)

[yinzhengjie@s101 ~]$ more /usr/local/bin/xzk.sh

#!/bin/bash
#@author :yinzhengjie
#blog:http://www.cnblogs.com/yinzhengjie
#EMAIL:y1053419035@qq.com

# Check that exactly one argument was passed
if [ $# -ne 1 ];then
    echo "Invalid argument. Usage: $0 {start|stop|restart|status}"
    exit 1
fi

# Command entered by the user
cmd=$1

# Dispatch the command
function zookeeperManager(){
    case $cmd in
    start)
        echo "Starting service"
        remoteExecution start
        ;;
    stop)
        echo "Stopping service"
        remoteExecution stop
        ;;
    restart)
        echo "Restarting service"
        remoteExecution restart
        ;;
    status)
        echo "Checking status"
        remoteExecution status
        ;;
    *)
        echo "Invalid argument. Usage: $0 {start|stop|restart|status}"
        ;;
    esac
}

# Run zkServer.sh with the given action on every ZooKeeper node
function remoteExecution(){
    for (( i=102 ; i<=104 ; i++ )) ; do
        tput setaf 2
        echo ========== s$i zkServer.sh $1 ================
        tput setaf 7
        ssh s$i "source /etc/profile ; zkServer.sh $1"
    done
}

# Call the function
zookeeperManager

[yinzhengjie@s101 ~]$

[yinzhengjie@s101 ~]$ sudo chmod +x /usr/local/bin/xzk.sh

[yinzhengjie@s101 ~]$

6>. Start ZooKeeper

[yinzhengjie@s101 ~]$ xzk.sh start

Starting service

========== s102 zkServer.sh start ================

ZooKeeper JMX enabled by default

Using config: /soft/zk/bin/../conf/zoo.cfg

Starting zookeeper ... STARTED

========== s103 zkServer.sh start ================

ZooKeeper JMX enabled by default

Using config: /soft/zk/bin/../conf/zoo.cfg

Starting zookeeper ... STARTED

========== s104 zkServer.sh start ================

ZooKeeper JMX enabled by default

Using config: /soft/zk/bin/../conf/zoo.cfg

Starting zookeeper ... STARTED

[yinzhengjie@s101 ~]$


[yinzhengjie@s101 ~]$ xzk.sh status

Checking status

========== s102 zkServer.sh status ================

ZooKeeper JMX enabled by default

Using config: /soft/zk/bin/../conf/zoo.cfg

Mode: follower

========== s103 zkServer.sh status ================

ZooKeeper JMX enabled by default

Using config: /soft/zk/bin/../conf/zoo.cfg

Mode: leader

========== s104 zkServer.sh status ================

ZooKeeper JMX enabled by default

Using config: /soft/zk/bin/../conf/zoo.cfg

Mode: follower

[yinzhengjie@s101 ~]$
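Rather than reading the status output by eye, a short filter can confirm that the ensemble has exactly one leader and at least one follower. `check_modes` is a hypothetical helper that reads the combined output of `xzk.sh status` from stdin and relies only on the `Mode:` lines shown above.

```shell
#!/usr/bin/env bash
# Hypothetical filter for `zkServer.sh status` output read from stdin.
# Prints the counts and succeeds only with exactly one leader and at
# least one follower.
check_modes() {
  local out leaders followers
  out=$(cat)
  leaders=$(grep -c 'Mode: leader' <<< "$out")
  followers=$(grep -c 'Mode: follower' <<< "$out")
  echo "leaders=${leaders} followers=${followers}"
  [ "$leaders" -eq 1 ] && [ "$followers" -ge 1 ]
}

# On s101: xzk.sh status 2>&1 | check_modes
printf 'Mode: follower\nMode: leader\nMode: follower\n' | check_modes
# prints: leaders=1 followers=2
```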


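IV. HDFS high-availability configuration

1>. Start the ZooKeeper cluster ([yinzhengjie@s101 ~]$ xzk.sh start)

For how to start it, see "III. Fully distributed ZooKeeper deployment". The following checks whether the ZooKeeper process (QuorumPeerMain) is running on each node of the cluster: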

[yinzhengjie@s101 ~]$ xcall.sh jps

============= s101 jps ============

Jps

Command executed successfully

============= s102 jps ============

Jps

QuorumPeerMain

Command executed successfully

============= s103 jps ============

QuorumPeerMain

Jps

Command executed successfully

============= s104 jps ============

Jps

QuorumPeerMain

Command executed successfully

============= s105 jps ============

Jps

Command executed successfully

[yinzhengjie@s101 ~]$
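The same check can be scripted for the `jps` output: the filter below remembers the current host from each xcall.sh banner line and prints the hosts on which a QuorumPeerMain process is listed. `zk_hosts` is a hypothetical helper, and the sample input uses made-up PIDs.

```shell
#!/usr/bin/env bash
# Hypothetical filter for `xcall.sh jps` output read from stdin: remember the
# host named in each banner line and print it when QuorumPeerMain is listed.
zk_hosts() {
  awk '
    /=====/ { if (match($0, /s[0-9]+/)) host = substr($0, RSTART, RLENGTH); next }
    $NF == "QuorumPeerMain" { print host }
  '
}

# On s101: xcall.sh jps | zk_hosts   (expected here: s102, s103, s104)
# Sample input with made-up PIDs:
printf '%s\n' \
  '============= s101 jps ============' '2301 Jps' \
  '============= s102 jps ============' '2290 Jps' '2215 QuorumPeerMain' \
  | zk_hosts   # prints s102
```

The banner is matched without a `^` anchor because xcall.sh prints a `tput` color escape sequence in front of it.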

2>. Modify Hadoop's core configuration files

[yinzhengjie@s101 ~]$ more /soft/hadoop/etc/hadoop/hdfs-site.xml

<?xml version="1.0" encoding="UTF-8"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<property>

<name>dfs.replication</name>

<value></value>

</property>

<property>

<name>dfs.namenode.name.dir</name>

<value>/home/yinzhengjie/ha/dfs/name1,/home/yinzhengjie/ha/dfs/name2</value>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>/home/yinzhengjie/ha/dfs/data1,/home/yinzhengjie/ha/dfs/data2</value>

</property> <!-- High-availability settings -->

<property>

<name>dfs.nameservices</name>

<value>mycluster</value>

</property> <property>

<name>dfs.ha.namenodes.mycluster</name>

<value>nn1,nn2</value>

</property> <property>

<name>dfs.namenode.rpc-address.mycluster.nn1</name>

<value>s101:</value>

</property>

<property>

<name>dfs.namenode.rpc-address.mycluster.nn2</name>

<value>s105:</value>

</property> <property>

<name>dfs.namenode.http-address.mycluster.nn1</name>

<value>s101:</value>

</property>

<property>

<name>dfs.namenode.http-address.mycluster.nn2</name>

<value>s105:</value>

</property> <property>

<name>dfs.namenode.shared.edits.dir</name>

<value>qjournal://s102:8485;s103:8485;s104:8485/mycluster</value>

</property> <property>

<name>dfs.client.failover.proxy.provider.mycluster</name>

<value>org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider</value>

</property> <!-- Fencing: protect the active NameNode when a failover happens -->

<property>

<name>dfs.ha.fencing.methods</name>

<value>

sshfence

shell(/bin/true)

</value>

</property> <property>

<name>dfs.ha.fencing.ssh.private-key-files</name>

<value>/home/yinzhengjie/.ssh/id_rsa</value>

</property> <property>

<name>dfs.ha.automatic-failover.enabled</name>

<value>true</value>

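</property>

</configuration>

<!--

hdfs-site.xml: purpose of this file:

# HDFS-related settings, such as the number of block replicas, the block size and whether permission checking is enforced. Parameters defined here override the defaults in hdfs-default.xml.

dfs.replication:

# For data availability and redundancy, HDFS stores several replicas of each block on different nodes; the default is 3. In a pseudo-distributed environment with only one node, a single replica is enough, which is set through dfs.replication. This is a software-level backup.

dfs.namenode.name.dir:

# Local directories where the NameNode stores the fsimage file. The value may be a comma-separated list; the fsimage is written to every directory for redundancy. Ideally the directories sit on different disks, so that the failure of one disk does not bring the system down and the bad disk is skipped. Because HA is used here, one directory is recommended; set two if you are particularly concerned about safety.

dfs.datanode.data.dir:

# Local directories where the DataNode stores blocks. The value may be a comma-separated list (typically one directory per disk). The directories are used in rotation: one block goes to one directory, the next block to the next directory, and so on. Each block is stored only once per machine. Directories that do not exist are ignored, so they must be created beforehand.

dfs.nameservices:

# Comma-separated list of nameservices.

dfs.ha.namenodes.mycluster:

# dfs.ha.namenodes.[nameservice ID]: unique identifiers of all NameNodes in the nameservice, comma-separated. DataNodes use this list to learn about every NameNode in the cluster. In Hadoop 2.x at most two NameNodes can be configured per nameservice.

dfs.namenode.rpc-address.mycluster.nn1:

# dfs.namenode.rpc-address.[nameservice ID].[name node ID]: the RPC address each NameNode listens on.

dfs.namenode.http-address.mycluster.nn1:

# dfs.namenode.http-address.[nameservice ID].[name node ID]: the HTTP address each NameNode listens on.

dfs.namenode.shared.edits.dir:

# URI of the group of JournalNodes (JNs) that the NameNodes read from and write to; through it the NameNodes share the edit log. The format is "qjournal://host1:port1;host2:port2;host3:port3/journalId", where host1, host2 and host3 are the JournalNode addresses. Use an odd number of JournalNodes, at least 3. journalId uniquely identifies this nameservice, which lets one set of JournalNodes store the edits of several federated namespaces, each under its own journalId.

dfs.client.failover.proxy.provider.mycluster:

# Java class that HDFS clients use to locate the active NameNode.

dfs.ha.fencing.methods:

# How to handle (fence) the active NameNode when it fails; usually its process has to be killed. The method can be sshfence or shell.

dfs.ha.fencing.ssh.private-key-files:

# Private SSH key used by sshfence; it must be set when sshfence is used.

dfs.ha.automatic-failover.enabled:

# Set to true to enable automatic failover.

-->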

[yinzhengjie@s101 ~]$
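The shared-edits URI follows a fixed pattern, so it can be generated from the JournalNode host list, which also gives a place to warn about an even or too-small number of JournalNodes. `qjournal_uri` is a hypothetical helper; 8485 is the JournalNode port used in the hdfs-site.xml above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: build the dfs.namenode.shared.edits.dir value.
# Usage: qjournal_uri <journalId> <port> <host>...
qjournal_uri() {
  local journal_id=$1 port=$2 hosts
  shift 2
  if (( $# < 3 || $# % 2 == 0 )); then
    echo "warning: use an odd number of JournalNodes, at least 3" >&2
  fi
  hosts=$(printf "%s:${port};" "$@")          # "host:port;" for every host
  echo "qjournal://${hosts%;}/${journal_id}"   # drop the trailing ';'
}

qjournal_uri mycluster 8485 s102 s103 s104
# prints: qjournal://s102:8485;s103:8485;s104:8485/mycluster
```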


[yinzhengjie@s101 ~]$ more /soft/hadoop/etc/hadoop/core-site.xml

<?xml version="1.0" encoding="UTF-8"?>

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://mycluster</value>

</property>

<property>

<name>hadoop.tmp.dir</name>

<value>/home/yinzhengjie/ha</value>

</property>

<property>

<name>hadoop.http.staticuser.user</name>

<value>yinzhengjie</value>

</property> <property>

<name>ha.zookeeper.quorum</name>

<value>s102:2181,s103:2181,s104:2181</value>

</property>

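</configuration> <!-- core-site.xml: purpose of this file:

Defines system-level parameters such as the HDFS URL, Hadoop's temporary directory and the configuration used by rack-aware clusters. Parameters defined here override the defaults in core-default.xml.

fs.defaultFS:

# Default path prefix used when clients connect to HDFS. Since the nameservice ID configured above is mycluster, it can be used here as the authority part of the URI (hdfs://mycluster).

hadoop.tmp.dir:

# Hadoop's working directory.

hadoop.http.staticuser.user:

# User name used to access data through the web UI.

ha.zookeeper.quorum:

# The ZooKeeper servers (host:port) used for automatic failover. -->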

[yinzhengjie@s101 ~]$
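To double-check a value in one of these files, a small awk filter can print a property's value. This is a hypothetical helper that assumes the layout used in this post (`<name>` and `<value>` on separate lines, value after name); on a node with Hadoop installed, `hdfs getconf -confKey <key>` returns the effective value and is the more reliable option.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the <value> of a property in a Hadoop *-site.xml.
# Assumes <name> and <value> sit on separate lines, value after name.
# Usage: get_prop <property name> <file>
get_prop() {
  local key=$1 file=$2
  awk -v key="$key" '
    index($0, "<name>" key "</name>") { found = 1; next }
    found && /<value>/ {
      sub(/.*<value>/, ""); sub(/<\/value>.*/, "")
      print; exit
    }
  ' "$file"
}

# Demo on a temporary file; on s101 run e.g.
#   get_prop ha.zookeeper.quorum /soft/hadoop/etc/hadoop/core-site.xml
tmp=$(mktemp)
cat > "$tmp" <<'EOF'
<configuration>
  <property>
    <name>ha.zookeeper.quorum</name>
    <value>s102:2181,s103:2181,s104:2181</value>
  </property>
</configuration>
EOF
get_prop ha.zookeeper.quorum "$tmp"   # prints s102:2181,s103:2181,s104:2181
rm -f "$tmp"
```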


[yinzhengjie@s101 ~]$ more /soft/hadoop/etc/hadoop/yarn-site.xml

<?xml version="1.0"?>

<configuration>

<property>

<name>yarn.resourcemanager.hostname</name>

<value>s101</value>

</property>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

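</configuration> <!-- yarn-site.xml: purpose of this file:

# Mainly configures scheduler-level (YARN) parameters.

yarn.resourcemanager.hostname:

# Host name of the ResourceManager.

yarn.nodemanager.aux-services:

# Auxiliary service run by the NodeManager; mapreduce_shuffle enables the MapReduce shuffle. -->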

[yinzhengjie@s101 ~]$


[yinzhengjie@s101 ~]$ more /soft/hadoop/etc/hadoop/mapred-site.xml

<?xml version="1.0"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

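</configuration> <!--

mapred-site.xml: purpose of this file:

# MapReduce settings, such as the default number of reduce tasks and the default lower and upper memory limits for tasks. Parameters defined here override the defaults in mapred-default.xml.

mapreduce.framework.name:

# The runtime framework for MapReduce jobs. There are three choices: local (run locally), classic (the first-generation Hadoop MapReduce framework) and yarn (the second-generation framework). Here we use yarn, the current framework. -->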

[yinzhengjie@s101 ~]$


[yinzhengjie@s101 ~]$ more /soft/hadoop/etc/hadoop/slaves

#DataNode (slave) hosts

s102

s103

s104

[yinzhengjie@s101 ~]$


[yinzhengjie@s101 ~]$ more /soft/hadoop/etc/hadoop/masters

#NameNode hosts

s101

s105

[yinzhengjie@s101 ~]$


[yinzhengjie@s101 ~]$ tail -7 /soft/hadoop/sbin/start-yarn.sh

# start resourceManager

#"$bin"/yarn-daemon.sh --config $YARN_CONF_DIR start resourcemanager

"$bin"/yarn-daemons.sh --config $YARN_CONF_DIR --hosts masters start resourcemanager

# start nodeManager

"$bin"/yarn-daemons.sh --config $YARN_CONF_DIR start nodemanager

# start proxyserver

#"$bin"/yarn-daemon.sh --config $YARN_CONF_DIR start proxyserver

[yinzhengjie@s101 ~]$

Note: the stock start-yarn.sh has to be modified as shown above (the resourcemanager line uses --hosts masters); without this change it starts the ResourceManager only on the local host.

[yinzhengjie@s101 ~]$ tail -7 /soft/hadoop/sbin/stop-yarn.sh

# stop resourceManager

#"$bin"/yarn-daemon.sh --config $YARN_CONF_DIR stop resourcemanager

"$bin"/yarn-daemons.sh --config $YARN_CONF_DIR --hosts masters stop resourcemanager

# stop nodeManager

"$bin"/yarn-daemons.sh --config $YARN_CONF_DIR stop nodemanager

# stop proxy server

"$bin"/yarn-daemon.sh --config $YARN_CONF_DIR stop proxyserver

[yinzhengjie@s101 ~]$

Likewise, stop-yarn.sh has to be modified as shown above; without this change it stops the ResourceManager only on the local host.

3>. Synchronize the configuration files


[yinzhengjie@s101 ~]$ xrsync.sh /soft/hadoop/etc/

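=========== s102 /soft/hadoop/etc/ ===========

Command executed successfully

=========== s103 /soft/hadoop/etc/ ===========

Command executed successfully

=========== s104 /soft/hadoop/etc/ ===========

Command executed successfully

=========== s105 /soft/hadoop/etc/ ===========

Command executed successfully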

[yinzhengjie@s101 ~]$

4>. Format the ZK data

[yinzhengjie@s101 ~]$ hdfs zkfc -formatZK

// :: INFO tools.DFSZKFailoverController: Failover controller configured for NameNode NameNode at s101/172.16.30.101:

// :: INFO zookeeper.ZooKeeper: Client environment:zookeeper.version=3.4.-, built on // : GMT

// :: INFO zookeeper.ZooKeeper: Client environment:host.name=s101

// :: INFO zookeeper.ZooKeeper: Client environment:java.version=1.8.0_131

// :: INFO zookeeper.ZooKeeper: Client environment:java.vendor=Oracle Corporation

// :: INFO zookeeper.ZooKeeper: Client environment:java.home=/soft/jdk1.8.0_131/jre

// :: INFO zookeeper.ZooKeeper: Client environment:java.class.path=/soft/hadoop-2.7./etc/hadoop:/soft/hadoop-2.7./share/hadoop/common/lib/jaxb-impl-2.2.-.jar:/soft/hadoop-2.7./share/hadoop/common/lib/jaxb-api-2.2..jar:/soft/hadoop-2.7./share/hadoop/common/lib/stax-api-1.0-.jar:/soft/hadoop-2.7./share/hadoop/common/lib/activation-1.1.jar:/soft/hadoop-2.7./share/hadoop/common/lib/jackson-core-asl-1.9..jar:/soft/hadoop-2.7./share/hadoop/common/lib/jackson-mapper-asl-1.9..jar:/soft/hadoop-2.7./share/hadoop/common/lib/jackson-jaxrs-1.9..jar:/soft/hadoop-2.7./share/hadoop/common/lib/jackson-xc-1.9..jar:/soft/hadoop-2.7./share/hadoop/common/lib/jersey-server-1.9.jar:/soft/hadoop-2.7./share/hadoop/common/lib/asm-3.2.jar:/soft/hadoop-2.7./share/hadoop/common/lib/log4j-1.2..jar:/soft/hadoop-2.7./share/hadoop/common/lib/jets3t-0.9..jar:/soft/hadoop-2.7./share/hadoop/common/lib/httpclient-4.2..jar:/soft/hadoop-2.7./share/hadoop/common/lib/httpcore-4.2..jar:/soft/hadoop-2.7./share/hadoop/common/lib/java-xmlbuilder-0.4.jar:/soft/hadoop-2.7./share/hadoop/common/lib/commons-lang-2.6.jar:/soft/hadoop-2.7./share/hadoop/common/lib/commons-configuration-1.6.jar:/soft/hadoop-2.7./share/hadoop/common/lib/commons-digester-1.8.jar:/soft/hadoop-2.7./share/hadoop/common/lib/commons-beanutils-1.7..jar:/soft/hadoop-2.7./share/hadoop/common/lib/commons-beanutils-core-1.8..jar:/soft/hadoop-2.7./share/hadoop/common/lib/slf4j-api-1.7..jar:/soft/hadoop-2.7./share/hadoop/common/lib/slf4j-log4j12-1.7..jar:/soft/hadoop-2.7./share/hadoop/common/lib/avro-1.7..jar:/soft/hadoop-2.7./share/hadoop/common/lib/paranamer-2.3.jar:/soft/hadoop-2.7./share/hadoop/common/lib/snappy-java-1.0.4.1.jar:/soft/hadoop-2.7./share/hadoop/common/lib/commons-compress-1.4..jar:/soft/hadoop-2.7./share/hadoop/common/lib/xz-1.0.jar:/soft/hadoop-2.7./share/hadoop/common/lib/protobuf-java-2.5..jar:/soft/hadoop-2.7./share/hadoop/common/lib/gson-2.2..jar:/soft/hadoop-2.7./share/hadoop/common/lib/hadoop-auth-2.7..jar:/soft/hadoop-2.7.
/share/hadoop/common/lib/apacheds-kerberos-codec-2.0.-M15.jar:/soft/hadoop-2.7./share/hadoop/common/lib/apacheds-i18n-2.0.-M15.jar:/soft/hadoop-2.7./share/hadoop/common/lib/api-asn1-api-1.0.-M20.jar:/soft/hadoop-2.7./share/hadoop/common/lib/api-util-1.0.-M20.jar:/soft/hadoop-2.7./share/hadoop/common/lib/zookeeper-3.4..jar:/soft/hadoop-2.7./share/hadoop/common/lib/netty-3.6..Final.jar:/soft/hadoop-2.7./share/hadoop/common/lib/curator-framework-2.7..jar:/soft/hadoop-2.7./share/hadoop/common/lib/curator-client-2.7..jar:/soft/hadoop-2.7./share/hadoop/common/lib/jsch-0.1..jar:/soft/hadoop-2.7./share/hadoop/common/lib/curator-recipes-2.7..jar:/soft/hadoop-2.7./share/hadoop/common/lib/htrace-core-3.1.-incubating.jar:/soft/hadoop-2.7./share/hadoop/common/lib/junit-4.11.jar:/soft/hadoop-2.7./share/hadoop/common/lib/hamcrest-core-1.3.jar:/soft/hadoop-2.7./share/hadoop/common/lib/mockito-all-1.8..jar:/soft/hadoop-2.7./share/hadoop/common/lib/hadoop-annotations-2.7..jar:/soft/hadoop-2.7./share/hadoop/common/lib/guava-11.0..jar:/soft/hadoop-2.7./share/hadoop/common/lib/jsr305-3.0..jar:/soft/hadoop-2.7./share/hadoop/common/lib/commons-cli-1.2.jar:/soft/hadoop-2.7./share/hadoop/common/lib/commons-math3-3.1..jar:/soft/hadoop-2.7./share/hadoop/common/lib/xmlenc-0.52.jar:/soft/hadoop-2.7./share/hadoop/common/lib/commons-httpclient-3.1.jar:/soft/hadoop-2.7./share/hadoop/common/lib/commons-logging-1.1..jar:/soft/hadoop-2.7./share/hadoop/common/lib/commons-codec-1.4.jar:/soft/hadoop-2.7./share/hadoop/common/lib/commons-io-2.4.jar:/soft/hadoop-2.7./share/hadoop/common/lib/commons-net-3.1.jar:/soft/hadoop-2.7./share/hadoop/common/lib/commons-collections-3.2..jar:/soft/hadoop-2.7./share/hadoop/common/lib/servlet-api-2.5.jar:/soft/hadoop-2.7./share/hadoop/common/lib/jetty-6.1..jar:/soft/hadoop-2.7./share/hadoop/common/lib/jetty-util-6.1..jar:/soft/hadoop-2.7./share/hadoop/common/lib/jsp-api-2.1.jar:/soft/hadoop-2.7./share/hadoop/common/lib/jersey-core-1.9.jar:/soft/hadoop-2.7./share/hadoop/
common/lib/jersey-json-1.9.jar:/soft/hadoop-2.7./share/hadoop/common/lib/jettison-1.1.jar:/soft/hadoop-2.7./share/hadoop/common/hadoop-common-2.7..jar:/soft/hadoop-2.7./share/hadoop/common/hadoop-common-2.7.-tests.jar:/soft/hadoop-2.7./share/hadoop/common/hadoop-nfs-2.7..jar:/soft/hadoop-2.7./share/hadoop/hdfs:/soft/hadoop-2.7./share/hadoop/hdfs/lib/commons-codec-1.4.jar:/soft/hadoop-2.7./share/hadoop/hdfs/lib/log4j-1.2..jar:/soft/hadoop-2.7./share/hadoop/hdfs/lib/commons-logging-1.1..jar:/soft/hadoop-2.7./share/hadoop/hdfs/lib/netty-3.6..Final.jar:/soft/hadoop-2.7./share/hadoop/hdfs/lib/guava-11.0..jar:/soft/hadoop-2.7./share/hadoop/hdfs/lib/jsr305-3.0..jar:/soft/hadoop-2.7./share/hadoop/hdfs/lib/commons-cli-1.2.jar:/soft/hadoop-2.7./share/hadoop/hdfs/lib/xmlenc-0.52.jar:/soft/hadoop-2.7./share/hadoop/hdfs/lib/commons-io-2.4.jar:/soft/hadoop-2.7./share/hadoop/hdfs/lib/servlet-api-2.5.jar:/soft/hadoop-2.7./share/hadoop/hdfs/lib/jetty-6.1..jar:/soft/hadoop-2.7./share/hadoop/hdfs/lib/jetty-util-6.1..jar:/soft/hadoop-2.7./share/hadoop/hdfs/lib/jersey-core-1.9.jar:/soft/hadoop-2.7./share/hadoop/hdfs/lib/jackson-core-asl-1.9..jar:/soft/hadoop-2.7./share/hadoop/hdfs/lib/jackson-mapper-asl-1.9..jar:/soft/hadoop-2.7./share/hadoop/hdfs/lib/jersey-server-1.9.jar:/soft/hadoop-2.7./share/hadoop/hdfs/lib/asm-3.2.jar:/soft/hadoop-2.7./share/hadoop/hdfs/lib/commons-lang-2.6.jar:/soft/hadoop-2.7./share/hadoop/hdfs/lib/protobuf-java-2.5..jar:/soft/hadoop-2.7./share/hadoop/hdfs/lib/htrace-core-3.1.-incubating.jar:/soft/hadoop-2.7./share/hadoop/hdfs/lib/commons-daemon-1.0..jar:/soft/hadoop-2.7./share/hadoop/hdfs/lib/netty-all-4.0..Final.jar:/soft/hadoop-2.7./share/hadoop/hdfs/lib/xercesImpl-2.9..jar:/soft/hadoop-2.7./share/hadoop/hdfs/lib/xml-apis-1.3..jar:/soft/hadoop-2.7./share/hadoop/hdfs/lib/leveldbjni-all-1.8.jar:/soft/hadoop-2.7./share/hadoop/hdfs/hadoop-hdfs-2.7..jar:/soft/hadoop-2.7./share/hadoop/hdfs/hadoop-hdfs-2.7.-tests.jar:/soft/hadoop-2.7./share/hadoop/hdfs/hadoop-hdfs-n
fs-2.7..jar:/soft/hadoop-2.7./share/hadoop/yarn/lib/zookeeper-3.4.-tests.jar:/soft/hadoop-2.7./share/hadoop/yarn/lib/commons-lang-2.6.jar:/soft/hadoop-2.7./share/hadoop/yarn/lib/guava-11.0..jar:/soft/hadoop-2.7./share/hadoop/yarn/lib/jsr305-3.0..jar:/soft/hadoop-2.7./share/hadoop/yarn/lib/commons-logging-1.1..jar:/soft/hadoop-2.7./share/hadoop/yarn/lib/protobuf-java-2.5..jar:/soft/hadoop-2.7./share/hadoop/yarn/lib/commons-cli-1.2.jar:/soft/hadoop-2.7./share/hadoop/yarn/lib/log4j-1.2..jar:/soft/hadoop-2.7./share/hadoop/yarn/lib/jaxb-api-2.2..jar:/soft/hadoop-2.7./share/hadoop/yarn/lib/stax-api-1.0-.jar:/soft/hadoop-2.7./share/hadoop/yarn/lib/activation-1.1.jar:/soft/hadoop-2.7./share/hadoop/yarn/lib/commons-compress-1.4..jar:/soft/hadoop-2.7./share/hadoop/yarn/lib/xz-1.0.jar:/soft/hadoop-2.7./share/hadoop/yarn/lib/servlet-api-2.5.jar:/soft/hadoop-2.7./share/hadoop/yarn/lib/commons-codec-1.4.jar:/soft/hadoop-2.7./share/hadoop/yarn/lib/jetty-util-6.1..jar:/soft/hadoop-2.7./share/hadoop/yarn/lib/jersey-core-1.9.jar:/soft/hadoop-2.7./share/hadoop/yarn/lib/jersey-client-1.9.jar:/soft/hadoop-2.7./share/hadoop/yarn/lib/jackson-core-asl-1.9..jar:/soft/hadoop-2.7./share/hadoop/yarn/lib/jackson-mapper-asl-1.9..jar:/soft/hadoop-2.7./share/hadoop/yarn/lib/jackson-jaxrs-1.9..jar:/soft/hadoop-2.7./share/hadoop/yarn/lib/jackson-xc-1.9..jar:/soft/hadoop-2.7./share/hadoop/yarn/lib/guice-servlet-3.0.jar:/soft/hadoop-2.7./share/hadoop/yarn/lib/guice-3.0.jar:/soft/hadoop-2.7./share/hadoop/yarn/lib/javax.inject-.jar:/soft/hadoop-2.7./share/hadoop/yarn/lib/aopalliance-1.0.jar:/soft/hadoop-2.7./share/hadoop/yarn/lib/commons-io-2.4.jar:/soft/hadoop-2.7./share/hadoop/yarn/lib/jersey-server-1.9.jar:/soft/hadoop-2.7./share/hadoop/yarn/lib/asm-3.2.jar:/soft/hadoop-2.7./share/hadoop/yarn/lib/jersey-json-1.9.jar:/soft/hadoop-2.7./share/hadoop/yarn/lib/jettison-1.1.jar:/soft/hadoop-2.7./share/hadoop/yarn/lib/jaxb-impl-2.2.-.jar:/soft/hadoop-2.7./share/hadoop/yarn/lib/jersey-guice-1.9.jar:/soft/had
oop-2.7./share/hadoop/yarn/lib/zookeeper-3.4..jar:/soft/hadoop-2.7./share/hadoop/yarn/lib/netty-3.6..Final.jar:/soft/hadoop-2.7./share/hadoop/yarn/lib/leveldbjni-all-1.8.jar:/soft/hadoop-2.7./share/hadoop/yarn/lib/commons-collections-3.2..jar:/soft/hadoop-2.7./share/hadoop/yarn/lib/jetty-6.1..jar:/soft/hadoop-2.7./share/hadoop/yarn/hadoop-yarn-api-2.7..jar:/soft/hadoop-2.7./share/hadoop/yarn/hadoop-yarn-common-2.7..jar:/soft/hadoop-2.7./share/hadoop/yarn/hadoop-yarn-server-common-2.7..jar:/soft/hadoop-2.7./share/hadoop/yarn/hadoop-yarn-server-nodemanager-2.7..jar:/soft/hadoop-2.7./share/hadoop/yarn/hadoop-yarn-server-web-proxy-2.7..jar:/soft/hadoop-2.7./share/hadoop/yarn/hadoop-yarn-server-applicationhistoryservice-2.7..jar:/soft/hadoop-2.7./share/hadoop/yarn/hadoop-yarn-server-resourcemanager-2.7..jar:/soft/hadoop-2.7./share/hadoop/yarn/hadoop-yarn-server-tests-2.7..jar:/soft/hadoop-2.7./share/hadoop/yarn/hadoop-yarn-client-2.7..jar:/soft/hadoop-2.7./share/hadoop/yarn/hadoop-yarn-server-sharedcachemanager-2.7..jar:/soft/hadoop-2.7./share/hadoop/yarn/hadoop-yarn-applications-distributedshell-2.7..jar:/soft/hadoop-2.7./share/hadoop/yarn/hadoop-yarn-applications-unmanaged-am-launcher-2.7..jar:/soft/hadoop-2.7./share/hadoop/yarn/hadoop-yarn-registry-2.7..jar:/soft/hadoop-2.7./share/hadoop/mapreduce/lib/protobuf-java-2.5..jar:/soft/hadoop-2.7./share/hadoop/mapreduce/lib/avro-1.7..jar:/soft/hadoop-2.7./share/hadoop/mapreduce/lib/jackson-core-asl-1.9..jar:/soft/hadoop-2.7./share/hadoop/mapreduce/lib/jackson-mapper-asl-1.9..jar:/soft/hadoop-2.7./share/hadoop/mapreduce/lib/paranamer-2.3.jar:/soft/hadoop-2.7./share/hadoop/mapreduce/lib/snappy-java-1.0.4.1.jar:/soft/hadoop-2.7./share/hadoop/mapreduce/lib/commons-compress-1.4..jar:/soft/hadoop-2.7./share/hadoop/mapreduce/lib/xz-1.0.jar:/soft/hadoop-2.7./share/hadoop/mapreduce/lib/hadoop-annotations-2.7..jar:/soft/hadoop-2.7./share/hadoop/mapreduce/lib/commons-io-2.4.jar:/soft/hadoop-2.7./share/hadoop/mapreduce/lib/jersey-core-
1.9.jar:/soft/hadoop-2.7./share/hadoop/mapreduce/lib/jersey-server-1.9.jar:/soft/hadoop-2.7./share/hadoop/mapreduce/lib/asm-3.2.jar:/soft/hadoop-2.7./share/hadoop/mapreduce/lib/log4j-1.2..jar:/soft/hadoop-2.7./share/hadoop/mapreduce/lib/netty-3.6..Final.jar:/soft/hadoop-2.7./share/hadoop/mapreduce/lib/leveldbjni-all-1.8.jar:/soft/hadoop-2.7./share/hadoop/mapreduce/lib/guice-3.0.jar:/soft/hadoop-2.7./share/hadoop/mapreduce/lib/javax.inject-.jar:/soft/hadoop-2.7./share/hadoop/mapreduce/lib/aopalliance-1.0.jar:/soft/hadoop-2.7./share/hadoop/mapreduce/lib/jersey-guice-1.9.jar:/soft/hadoop-2.7./share/hadoop/mapreduce/lib/guice-servlet-3.0.jar:/soft/hadoop-2.7./share/hadoop/mapreduce/lib/junit-4.11.jar:/soft/hadoop-2.7./share/hadoop/mapreduce/lib/hamcrest-core-1.3.jar:/soft/hadoop-2.7./share/hadoop/mapreduce/hadoop-mapreduce-client-core-2.7..jar:/soft/hadoop-2.7./share/hadoop/mapreduce/hadoop-mapreduce-client-common-2.7..jar:/soft/hadoop-2.7./share/hadoop/mapreduce/hadoop-mapreduce-client-shuffle-2.7..jar:/soft/hadoop-2.7./share/hadoop/mapreduce/hadoop-mapreduce-client-app-2.7..jar:/soft/hadoop-2.7./share/hadoop/mapreduce/hadoop-mapreduce-client-hs-2.7..jar:/soft/hadoop-2.7./share/hadoop/mapreduce/hadoop-mapreduce-client-jobclient-2.7..jar:/soft/hadoop-2.7./share/hadoop/mapreduce/hadoop-mapreduce-client-hs-plugins-2.7..jar:/soft/hadoop-2.7./share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7..jar:/soft/hadoop-2.7./share/hadoop/mapreduce/hadoop-mapreduce-client-jobclient-2.7.-tests.jar:/contrib/capacity-scheduler/*.jar

18/06/20 08:35:18 INFO zookeeper.ZooKeeper: Client environment:java.library.path=/soft/hadoop-2.7.3/lib/native

18/06/20 08:35:18 INFO zookeeper.ZooKeeper: Client environment:java.io.tmpdir=/tmp

18/06/20 08:35:18 INFO zookeeper.ZooKeeper: Client environment:java.compiler=<NA>

18/06/20 08:35:18 INFO zookeeper.ZooKeeper: Client environment:os.name=Linux

18/06/20 08:35:18 INFO zookeeper.ZooKeeper: Client environment:os.arch=amd64

18/06/20 08:35:18 INFO zookeeper.ZooKeeper: Client environment:os.version=3.10.0-327.el7.x86_64

18/06/20 08:35:18 INFO zookeeper.ZooKeeper: Client environment:user.name=yinzhengjie

18/06/20 08:35:18 INFO zookeeper.ZooKeeper: Client environment:user.home=/home/yinzhengjie

18/06/20 08:35:18 INFO zookeeper.ZooKeeper: Client environment:user.dir=/home/yinzhengjie

18/06/20 08:35:18 INFO zookeeper.ZooKeeper: Initiating client connection, connectString=s102:2181,s103:2181,s104:2181 sessionTimeout=5000 watcher=org.apache.hadoop.ha.ActiveStandbyElector$WatcherWithClientRef@74fe5c40

18/06/20 08:35:18 INFO zookeeper.ClientCnxn: Opening socket connection to server s104/172.16.30.104:2181. Will not attempt to authenticate using SASL (unknown error)

18/06/20 08:35:18 INFO zookeeper.ClientCnxn: Socket connection established to s104/172.16.30.104:2181, initiating session

18/06/20 08:35:18 INFO zookeeper.ClientCnxn: Session establishment complete on server s104/172.16.30.104:2181, sessionid = 0x680000ca40a10000, negotiated timeout = 5000

18/06/20 08:35:18 INFO ha.ActiveStandbyElector: Session connected.

18/06/20 08:35:18 INFO ha.ActiveStandbyElector: Successfully created /hadoop-ha/mycluster in ZK.

18/06/20 08:35:18 INFO zookeeper.ZooKeeper: Session: 0x680000ca40a10000 closed

18/06/20 08:35:18 INFO zookeeper.ClientCnxn: EventThread shut down

[yinzhengjie@s101 ~]$

[yinzhengjie@s101 ~]$ echo $?

0

[yinzhengjie@s101 ~]$

Initialize the HA state in ZooKeeper ([yinzhengjie@s101 ~]$ hdfs zkfc -formatZK)
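The step above is confirmed with `echo $?`. As an extra check, you can also look for the `Successfully created /hadoop-ha/... in ZK.` line that appears in the output. The sketch below assumes you saved the console output to a log file. The file name formatZK.log and the function name are hypothetical.

```shell
#!/bin/bash
# Sketch: decide whether "hdfs zkfc -formatZK" succeeded by inspecting its saved log.
# Assumption (hypothetical file name): the output was captured with
#   set -o pipefail; hdfs zkfc -formatZK 2>&1 | tee formatZK.log
# Hadoop's console logging goes to stderr, hence the 2>&1.
formatzk_succeeded() {
    local log=$1
    # ActiveStandbyElector prints this line once the parent znode exists in ZooKeeper
    grep -q 'ActiveStandbyElector: Successfully created /hadoop-ha/.* in ZK\.' "$log"
}
```

Without `pipefail`, `$?` after a pipe reports tee's status rather than hdfs's. To inspect the znode directly, open `zkCli.sh -server s102:2181` and run `ls /hadoop-ha`. You should see `mycluster` listed.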

5>.Start HDFS

[yinzhengjie@s101 ~]$ start-dfs.sh

Starting namenodes on [s101 s105]

s101: starting namenode, logging to /soft/hadoop-2.7.3/logs/hadoop-yinzhengjie-namenode-s101.out

s105: starting namenode, logging to /soft/hadoop-2.7.3/logs/hadoop-yinzhengjie-namenode-s105.out

s104: starting datanode, logging to /soft/hadoop-2.7.3/logs/hadoop-yinzhengjie-datanode-s104.out

s102: starting datanode, logging to /soft/hadoop-2.7.3/logs/hadoop-yinzhengjie-datanode-s102.out

s103: starting datanode, logging to /soft/hadoop-2.7.3/logs/hadoop-yinzhengjie-datanode-s103.out

Starting journal nodes [s102 s103 s104]

s104: starting journalnode, logging to /soft/hadoop-2.7.3/logs/hadoop-yinzhengjie-journalnode-s104.out

s103: starting journalnode, logging to /soft/hadoop-2.7.3/logs/hadoop-yinzhengjie-journalnode-s103.out

s102: starting journalnode, logging to /soft/hadoop-2.7.3/logs/hadoop-yinzhengjie-journalnode-s102.out

Starting ZK Failover Controllers on NN hosts [s101 s105]

s101: starting zkfc, logging to /soft/hadoop-2.7.3/logs/hadoop-yinzhengjie-zkfc-s101.out

s105: starting zkfc, logging to /soft/hadoop-2.7.3/logs/hadoop-yinzhengjie-zkfc-s105.out

[yinzhengjie@s101 ~]$

Start HDFS ([yinzhengjie@s101 ~]$ start-dfs.sh)
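After start-dfs.sh, confirm that each host is running the daemons its role needs. The sketch below compares one host's `jps` output against a list of expected process names. The function name is hypothetical. The per-role lists in the usage note are taken from the start-dfs.sh output above.

```shell
#!/bin/bash
# Sketch: report which expected Java daemons are missing from a host's jps output.
# jps prints "<pid> <MainClassName>" per line; only the last field is compared.
missing_daemons() {
    local jps_output=$1; shift
    local name missing=()
    for name in "$@"; do
        # -x: the whole field must match, so "NameNode" does not match "SecondaryNameNode"
        if ! awk '{print $NF}' <<< "$jps_output" | grep -qx "$name"; then
            missing+=("$name")
        fi
    done
    # Print missing names space-separated; succeed only if nothing is missing
    echo "${missing[*]}"
    [ ${#missing[@]} -eq 0 ]
}
```

Usage: run `missing_daemons "$(ssh s101 jps)" NameNode DFSZKFailoverController` for the NameNode hosts (s101, s105). For s102 through s104, run `missing_daemons "$(ssh s102 jps)" QuorumPeerMain JournalNode DataNode`. start-dfs.sh reported starting a DataNode on s102, s103 and s104, so DataNode belongs in their expected list. This check is worth running because the jps listing in the next step shows no DataNode on those hosts.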

6>.Verify Hadoop high availability

[yinzhengjie@s101 ~]$ more `which xcall.sh`

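#!/bin/bash
#@author :yinzhengjie
#blog:http://www.cnblogs.com/yinzhengjie
#EMAIL:y1053419035@qq.com

#Check whether the user passed an argument
if [ $# -lt 1 ];then
        echo "Please enter an argument"
        exit 1
fi

#Capture the command entered by the user
cmd=$@

for (( i=101;i<=105;i++ ))
do
        #Turn the terminal text green
        tput setaf 2
        echo ============= s$i $cmd ============
        #Restore the terminal's original color (white/gray)
        tput setaf 7
        #Run the command remotely
        ssh s$i $cmd
        #Check whether the command succeeded
        if [ $? == 0 ];then
                echo "Command executed successfully"
        fi
done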

[yinzhengjie@s101 ~]$

View the xcall.sh helper script ([yinzhengjie@s101 ~]$ more `which xcall.sh`)
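xcall.sh hard-codes the host range s101..s105. It also always exits with status 0, even when a remote command fails. The sketch below is a hypothetical variant of xcall.sh, not the author's script. It takes the range as arguments and returns non-zero if any host fails. REMOTE_CMD is also a hypothetical variable: it defaults to ssh and can be set to `echo` for a dry run. The tput coloring is omitted.

```shell
#!/bin/bash
# Sketch of a more reusable xcall: host numbers are arguments instead of being
# hard-coded, and the return status reflects remote failures.
# REMOTE_CMD (hypothetical) defaults to ssh; set REMOTE_CMD=echo for a dry run.
xcall() {
    if [ $# -lt 3 ]; then
        echo "usage: xcall FIRST LAST command [args...]" >&2
        return 2
    fi
    local first=$1 last=$2 i rc=0
    shift 2
    for (( i=first; i<=last; i++ )); do
        echo "============= s$i $* ============"
        if ${REMOTE_CMD:-ssh} "s$i" "$@"; then
            echo "Command executed successfully"
        else
            echo "Command failed on s$i" >&2
            rc=1
        fi
    done
    return $rc
}
```

For example, `xcall 101 105 jps` reproduces the loop below. `xcall 102 104 jps` limits the run to the ZooKeeper/JournalNode hosts.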

[yinzhengjie@s101 ~]$ xcall.sh jps

============= s101 jps ============

NameNode

Jps

DFSZKFailoverController

Command executed successfully

============= s102 jps ============

QuorumPeerMain

Jps

JournalNode

Command executed successfully

============= s103 jps ============

Jps

QuorumPeerMain

JournalNode

Command executed successfully

============= s104 jps ============

QuorumPeerMain

Jps

JournalNode

Command executed successfully

============= s105 jps ============

DFSZKFailoverController

NameNode

Jps

Command executed successfully

[yinzhengjie@s101 ~]$
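jps only shows that the processes exist. It does not show which NameNode is active. `hdfs haadmin -getServiceState <namenode-id>` prints `active` or `standby` for each NameNode. The sketch below assumes the IDs listed in dfs.ha.namenodes.mycluster are nn1 and nn2. Substitute whatever your hdfs-site.xml defines. The function name is hypothetical.

```shell
#!/bin/bash
# Sketch: verify that exactly one NameNode reports "active".
# Assumption: the NameNode IDs in dfs.ha.namenodes.mycluster are nn1 and nn2.
# Reads one state per line on stdin, e.g. the output of
#   for nn in nn1 nn2; do hdfs haadmin -getServiceState "$nn"; done
exactly_one_active() {
    local active
    # grep -c prints 0 (and exits 1) when nothing matches; the count is what matters
    active=$(grep -cx 'active')
    echo "active NameNodes: $active"
    [ "$active" -eq 1 ]
}
```

To exercise failover, stop the active NameNode on its host with `hadoop-daemon.sh stop namenode`. Then repeat the check: with automatic failover, the ZKFC should promote the standby.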

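五.The most heavy-handed fix for beginners who run into trouble (this brute-force approach suits a test cluster like mine; if your architecture matches mine, it works for you too)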

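Tip: if you are going to re-format the cluster, rename the old data directories first. If you neither delete nor rename them, the VERSION files left in those directories still carry the clusterID of the previous cluster. That ID no longer matches the newly formatted NameNode. As a result, your DataNode or NameNode processes may fail to start, or may die shortly after starting!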
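Following the tip above, here is a minimal sketch for moving an old data directory aside instead of deleting it. The function name is hypothetical. The usage note assumes all HDFS data lives under ~/ha on every host. The log below shows the NameNode directories under /home/yinzhengjie/ha/dfs, but the DataNode and JournalNode locations are an assumption. Stop all daemons before running it.

```shell
#!/bin/bash
# Sketch: rename an old data directory with a timestamp suffix before re-formatting,
# so nothing is lost and old VERSION files cannot confuse the new cluster.
# Prints the new path on success.
backup_dir() {
    local dir=${1%/}
    if [ ! -d "$dir" ]; then
        echo "not a directory: $dir" >&2
        return 1
    fi
    local target="$dir.bak.$(date +%Y%m%d%H%M%S)"
    mv -- "$dir" "$target" && echo "$target"
}
```

Usage, assuming the layout above: `for h in s101 s102 s103 s104 s105; do ssh $h "$(declare -f backup_dir); backup_dir ~/ha"; done`. The `~/ha` is left unexpanded locally and resolved by each remote shell.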

[yinzhengjie@s101 ~]$ hadoop-daemons.sh start journalnode

s103: starting journalnode, logging to /soft/hadoop-2.7.3/logs/hadoop-yinzhengjie-journalnode-s103.out

s102: starting journalnode, logging to /soft/hadoop-2.7.3/logs/hadoop-yinzhengjie-journalnode-s102.out

s104: starting journalnode, logging to /soft/hadoop-2.7.3/logs/hadoop-yinzhengjie-journalnode-s104.out

[yinzhengjie@s101 ~]$

Start the JournalNode processes ([yinzhengjie@s101 ~]$ hadoop-daemons.sh start journalnode)
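Formatting the NameNode with a quorum journal requires the JournalNodes to be reachable. Note the "Re-format filesystem in QJM" prompt in the next step. hadoop-daemons.sh returns as soon as the daemons are launched, so it can help to wait for their ports first. The sketch below uses bash's /dev/tcp redirection. It assumes the JournalNodes listen on the default RPC port 8485 (dfs.journalnode.rpc-address). The port is not visible in the log, so adjust it if your hdfs-site.xml differs. The function name is hypothetical.

```shell
#!/bin/bash
# Sketch: wait until host:port accepts TCP connections, using bash's /dev/tcp.
# Assumption: JournalNodes use the default RPC port 8485; adjust to your config.
wait_for_port() {
    local host=$1 port=$2 tries=${3:-30} n
    for (( n=1; n<=tries; n++ )); do
        # timeout guards against hosts that silently drop packets
        if timeout 2 bash -c "exec 3<>/dev/tcp/$host/$port" 2>/dev/null; then
            return 0
        fi
        # Sleep only between attempts, not after the last one
        (( n < tries )) && sleep 1
    done
    echo "$host:$port not reachable after $tries attempt(s)" >&2
    return 1
}
```

Usage before formatting: `for h in s102 s103 s104; do wait_for_port $h 8485 || exit 1; done`.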

[yinzhengjie@s101 ~]$ hdfs namenode -format

// :: INFO namenode.NameNode: STARTUP_MSG:

/************************************************************

STARTUP_MSG: Starting NameNode

STARTUP_MSG: host = s101/172.16.30.101

STARTUP_MSG: args = [-format]

STARTUP_MSG: version = 2.7.3

STARTUP_MSG: classpath = /soft/hadoop-2.7.3/etc/hadoop:/soft/hadoop-2.7.3/share/hadoop/common/lib/jaxb-impl-2.2.3-1.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/jaxb-api-2.2.2.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/stax-api-1.0-2.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/activation-1.1.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/jackson-core-asl-1.9.13.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/jackson-mapper-asl-1.9.13.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/jackson-jaxrs-1.9.13.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/jackson-xc-1.9.13.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/jersey-server-1.9.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/asm-3.2.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/log4j-1.2.17.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/jets3t-0.9.0.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/httpclient-4.2.5.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/httpcore-4.2.5.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/java-xmlbuilder-0.4.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/commons-lang-2.6.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/commons-configuration-1.6.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/commons-digester-1.8.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/commons-beanutils-1.7.0.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/commons-beanutils-core-1.8.0.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/slf4j-api-1.7.10.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/slf4j-log4j12-1.7.10.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/avro-1.7.4.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/paranamer-2.3.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/snappy-java-1.0.4.1.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/commons-compress-1.4.1.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/xz-1.0.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/protobuf-java-2.5.0.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/gson-2.2.4.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/hadoop-auth-2.7.3.jar:
/soft/hadoop-2.7.3/share/hadoop/common/lib/apacheds-kerberos-codec-2.0.0-M15.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/apacheds-i18n-2.0.0-M15.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/api-asn1-api-1.0.0-M20.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/api-util-1.0.0-M20.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/zookeeper-3.4.6.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/netty-3.6.2.Final.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/curator-framework-2.7.1.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/curator-client-2.7.1.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/jsch-0.1.42.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/curator-recipes-2.7.1.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/htrace-core-3.1.0-incubating.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/junit-4.11.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/hamcrest-core-1.3.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/mockito-all-1.8.5.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/hadoop-annotations-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/guava-11.0.2.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/jsr305-3.0.0.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/commons-cli-1.2.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/commons-math3-3.1.1.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/xmlenc-0.52.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/commons-httpclient-3.1.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/commons-logging-1.1.3.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/commons-codec-1.4.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/commons-io-2.4.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/commons-net-3.1.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/commons-collections-3.2.2.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/servlet-api-2.5.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/jetty-6.1.26.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/jetty-util-6.1.26.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/jsp-api-2.1.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/jersey-core-1.9.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/jersey-json-1.9.jar:/soft/hadoop-2.7.3/share/hadoop/common/lib/jettison-1.1.jar:/soft/hadoop-2.7.3/share/hadoop/common/hadoop-common-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/common/hadoop-common-2.7.3-tests.jar:/soft/hadoop-2.7.3/share/hadoop/common/hadoop-nfs-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/commons-codec-1.4.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/log4j-1.2.17.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/commons-logging-1.1.3.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/netty-3.6.2.Final.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/guava-11.0.2.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/jsr305-3.0.0.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/commons-cli-1.2.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/xmlenc-0.52.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/commons-io-2.4.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/servlet-api-2.5.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/jetty-6.1.26.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/jetty-util-6.1.26.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/jersey-core-1.9.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/jackson-core-asl-1.9.13.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/jackson-mapper-asl-1.9.13.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/jersey-server-1.9.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/asm-3.2.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/commons-lang-2.6.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/protobuf-java-2.5.0.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/htrace-core-3.1.0-incubating.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/commons-daemon-1.0.13.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/netty-all-4.0.23.Final.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/xercesImpl-2.9.1.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/xml-apis-1.3.04.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/leveldbjni-all-1.8.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/hadoop-hdfs-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/hadoop-hdfs-2.7.3-tests.jar:/soft/hadoop-2.7.3/share/hadoop/hdfs/hadoop-hdfs-nfs-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/zookeeper-3.4.6-tests.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/commons-lang-2.6.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/guava-11.0.2.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/jsr305-3.0.0.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/commons-logging-1.1.3.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/protobuf-java-2.5.0.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/commons-cli-1.2.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/log4j-1.2.17.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/jaxb-api-2.2.2.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/stax-api-1.0-2.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/activation-1.1.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/commons-compress-1.4.1.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/xz-1.0.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/servlet-api-2.5.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/commons-codec-1.4.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/jetty-util-6.1.26.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/jersey-core-1.9.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/jersey-client-1.9.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/jackson-core-asl-1.9.13.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/jackson-mapper-asl-1.9.13.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/jackson-jaxrs-1.9.13.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/jackson-xc-1.9.13.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/guice-servlet-3.0.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/guice-3.0.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/javax.inject-1.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/aopalliance-1.0.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/commons-io-2.4.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/jersey-server-1.9.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/asm-3.2.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/jersey-json-1.9.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/jettison-1.1.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/jaxb-impl-2.2.3-1.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/jersey-guice-1.9.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/zookeeper-3.4.6.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/netty-3.6.2.Final.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/leveldbjni-all-1.8.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/commons-collections-3.2.2.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/jetty-6.1.26.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-api-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-common-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-server-common-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-server-nodemanager-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-server-web-proxy-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-server-applicationhistoryservice-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-server-resourcemanager-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-server-tests-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-client-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-server-sharedcachemanager-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-applications-distributedshell-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-applications-unmanaged-am-launcher-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-registry-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/protobuf-java-2.5.0.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/avro-1.7.4.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/jackson-core-asl-1.9.13.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/jackson-mapper-asl-1.9.13.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/paranamer-2.3.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/snappy-java-1.0.4.1.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/commons-compress-1.4.1.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/xz-1.0.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/hadoop-annotations-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/commons-io-2.4.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/jersey-core-1.9.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/jersey-server-1.9.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/asm-3.2.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/log4j-1.2.17.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/netty-3.6.2.Final.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/leveldbjni-all-1.8.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/guice-3.0.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/javax.inject-1.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/aopalliance-1.0.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/jersey-guice-1.9.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/guice-servlet-3.0.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/junit-4.11.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/hamcrest-core-1.3.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/hadoop-mapreduce-client-core-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/hadoop-mapreduce-client-common-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/hadoop-mapreduce-client-shuffle-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/hadoop-mapreduce-client-app-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/hadoop-mapreduce-client-hs-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/hadoop-mapreduce-client-jobclient-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/hadoop-mapreduce-client-hs-plugins-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.3.jar:/soft/hadoop-2.7.3/share/hadoop/mapreduce/hadoop-mapreduce-client-jobclient-2.7.3-tests.jar:/contrib/capacity-scheduler/*.jar

STARTUP_MSG: build = https://git-wip-us.apache.org/repos/asf/hadoop.git -r baa91f7c6bc9cb92be5982de4719c1c8af91ccff; compiled by 'root' on 2016-08-18T01:41Z

STARTUP_MSG: java = 1.8.0_131

************************************************************/

// :: INFO namenode.NameNode: registered UNIX signal handlers for [TERM, HUP, INT]

// :: INFO namenode.NameNode: createNameNode [-format]

// :: WARN common.Util: Path /home/yinzhengjie/ha/dfs/name1 should be specified as a URI in configuration files. Please update hdfs configuration.

// :: WARN common.Util: Path /home/yinzhengjie/ha/dfs/name2 should be specified as a URI in configuration files. Please update hdfs configuration.

// :: WARN common.Util: Path /home/yinzhengjie/ha/dfs/name1 should be specified as a URI in configuration files. Please update hdfs configuration.

// :: WARN common.Util: Path /home/yinzhengjie/ha/dfs/name2 should be specified as a URI in configuration files. Please update hdfs configuration.

Formatting using clusterid: CID-f4979818-1b00--9ce6-6a84ab5a27e7

// :: INFO namenode.FSNamesystem: No KeyProvider found.

// :: INFO namenode.FSNamesystem: fsLock is fair:true

// :: INFO blockmanagement.DatanodeManager: dfs.block.invalidate.limit=

// :: INFO blockmanagement.DatanodeManager: dfs.namenode.datanode.registration.ip-hostname-check=true

// :: INFO blockmanagement.BlockManager: dfs.namenode.startup.delay.block.deletion.sec is set to :::00.000

// :: INFO blockmanagement.BlockManager: The block deletion will start around Jun ::

// :: INFO util.GSet: Computing capacity for map BlocksMap

// :: INFO util.GSet: VM type = -bit

// :: INFO util.GSet: 2.0% max memory MB = 17.8 MB

// :: INFO util.GSet: capacity = ^ = entries

// :: INFO blockmanagement.BlockManager: dfs.block.access.token.enable=false

// :: INFO blockmanagement.BlockManager: defaultReplication =

// :: INFO blockmanagement.BlockManager: maxReplication =

// :: INFO blockmanagement.BlockManager: minReplication =

// :: INFO blockmanagement.BlockManager: maxReplicationStreams =

// :: INFO blockmanagement.BlockManager: replicationRecheckInterval =

// :: INFO blockmanagement.BlockManager: encryptDataTransfer = false

// :: INFO blockmanagement.BlockManager: maxNumBlocksToLog =

// :: INFO namenode.FSNamesystem: fsOwner = yinzhengjie (auth:SIMPLE)

// :: INFO namenode.FSNamesystem: supergroup = supergroup

// :: INFO namenode.FSNamesystem: isPermissionEnabled = true

// :: INFO namenode.FSNamesystem: Determined nameservice ID: mycluster

// :: INFO namenode.FSNamesystem: HA Enabled: true

// :: INFO namenode.FSNamesystem: Append Enabled: true

// :: INFO util.GSet: Computing capacity for map INodeMap

// :: INFO util.GSet: VM type = -bit

// :: INFO util.GSet: 1.0% max memory MB = 8.9 MB

// :: INFO util.GSet: capacity = ^ = entries

// :: INFO namenode.FSDirectory: ACLs enabled? false

// :: INFO namenode.FSDirectory: XAttrs enabled? true

// :: INFO namenode.FSDirectory: Maximum size of an xattr:

// :: INFO namenode.NameNode: Caching file names occuring more than times

// :: INFO util.GSet: Computing capacity for map cachedBlocks

// :: INFO util.GSet: VM type = -bit

// :: INFO util.GSet: 0.25% max memory MB = 2.2 MB

// :: INFO util.GSet: capacity = ^ = entries

// :: INFO namenode.FSNamesystem: dfs.namenode.safemode.threshold-pct = 0.9990000128746033

// :: INFO namenode.FSNamesystem: dfs.namenode.safemode.min.datanodes =

// :: INFO namenode.FSNamesystem: dfs.namenode.safemode.extension =

// :: INFO metrics.TopMetrics: NNTop conf: dfs.namenode.top.window.num.buckets =

// :: INFO metrics.TopMetrics: NNTop conf: dfs.namenode.top.num.users =

// :: INFO metrics.TopMetrics: NNTop conf: dfs.namenode.top.windows.minutes = ,,

// :: INFO namenode.FSNamesystem: Retry cache on namenode is enabled

// :: INFO namenode.FSNamesystem: Retry cache will use 0.03 of total heap and retry cache entry expiry time is millis

// :: INFO util.GSet: Computing capacity for map NameNodeRetryCache

// :: INFO util.GSet: VM type = -bit

// :: INFO util.GSet: 0.029999999329447746% max memory MB = 273.1 KB

// :: INFO util.GSet: capacity = ^ = entries

Re-format filesystem in Storage Directory /home/yinzhengjie/ha/dfs/name1 ? (Y or N) y

Re-format filesystem in Storage Directory /home/yinzhengjie/ha/dfs/name2 ? (Y or N) y

Re-format filesystem in QJM to [172.16.30.102:, 172.16.30.103:, 172.16.30.104:] ? (Y or N) y

// :: INFO namenode.FSImage: Allocated new BlockPoolId: BP--172.16.30.101-

// :: INFO common.Storage: Storage directory /home/yinzhengjie/ha/dfs/name1 has been successfully formatted.

// :: INFO common.Storage: Storage directory /home/yinzhengjie/ha/dfs/name2 has been successfully formatted.

// :: INFO namenode.FSImageFormatProtobuf: Saving image file /home/yinzhengjie/ha/dfs/name2/current/fsimage.ckpt_0000000000000000000 using no compression

// :: INFO namenode.FSImageFormatProtobuf: Saving image file /home/yinzhengjie/ha/dfs/name1/current/fsimage.ckpt_0000000000000000000 using no compression

// :: INFO namenode.FSImageFormatProtobuf: Image file /home/yinzhengjie/ha/dfs/name2/current/fsimage.ckpt_0000000000000000000 of size bytes saved in seconds.

// :: INFO namenode.FSImageFormatProtobuf: Image file /home/yinzhengjie/ha/dfs/name1/current/fsimage.ckpt_0000000000000000000 of size bytes saved in seconds.

// :: INFO namenode.NNStorageRetentionManager: Going to retain images with txid >=

// :: INFO util.ExitUtil: Exiting with status

// :: INFO namenode.NameNode: SHUTDOWN_MSG:

/************************************************************

SHUTDOWN_MSG: Shutting down NameNode at s101/172.16.30.101

************************************************************/

[yinzhengjie@s101 ~]$

Format the NameNode (hdfs namenode -format)
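The format above generated a new clusterID ("Formatting using clusterid: ..."). That ID is written to each NameNode storage directory's current/VERSION file. A DataNode whose VERSION still holds an older clusterID will not join the cluster, which is the problem the tip at the start of this section warns about. The sketch below compares the clusterID in two VERSION files. The function names are hypothetical, and the DataNode path in the usage note is an assumption. Use the directory from your dfs.datanode.data.dir.

```shell
#!/bin/bash
# Sketch: extract the clusterID from an HDFS VERSION file and compare two of them.
cluster_id() {
    # VERSION is a Java properties file containing a line like clusterID=CID-...
    sed -n 's/^clusterID=//p' "$1"
}

same_cluster() {
    local a b
    a=$(cluster_id "$1") || return 2
    b=$(cluster_id "$2") || return 2
    if [ -n "$a" ] && [ "$a" = "$b" ]; then
        echo "clusterID match: $a"
    else
        echo "clusterID mismatch: '$a' vs '$b'" >&2
        return 1
    fi
}
```

Usage (DataNode path hypothetical): `scp s102:~/ha/dfs/data/current/VERSION /tmp/dn.VERSION && same_cluster ~/ha/dfs/name1/current/VERSION /tmp/dn.VERSION`.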

[yinzhengjie@s101 ha]$ scp -r ~/ha yinzhengjie@s105:~

VERSION % .2KB/s :

seen_txid % .0KB/s :

fsimage_0000000000000000000.md5 % .1KB/s :

fsimage_0000000000000000000 % .4KB/s :

VERSION % .2KB/s :

seen_txid % .0KB/s :

fsimage_0000000000000000000.md5 % .1KB/s :

fsimage_0000000000000000000 % .4KB/s :

[yinzhengjie@s101 ha]$

Sync the working directory on s101 to s105 ([yinzhengjie@s101 ha]$ scp -r ~/ha yinzhengjie@s105:~)
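After the copy, both NameNodes must start from identical metadata. The sketch below fingerprints a directory tree so you can compare the two copies. The function name is hypothetical, and it relies on GNU find, sort and xargs. Hadoop's HA documentation also describes an alternative to copying by hand: run `hdfs namenode -bootstrapStandby` on the standby NameNode.

```shell
#!/bin/bash
# Sketch: fingerprint a directory tree so two copies can be compared.
# Hashes every regular file (sorted by relative path), then hashes that list,
# so file names and contents both contribute to the result.
dir_digest() {
    ( cd -- "$1" && find . -type f -print0 | LC_ALL=C sort -z | xargs -0 -r md5sum | md5sum | cut -d' ' -f1 )
}
```

Usage: `diff <(dir_digest ~/ha) <(ssh s105 "$(declare -f dir_digest); dir_digest ~/ha") && echo "s105 copy matches"`.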

[yinzhengjie@s101 ~]$ xcall.sh jps

============= s101 jps ============

NameNode

Jps

DFSZKFailoverController

Command executed successfully

============= s102 jps ============

QuorumPeerMain

Jps

JournalNode

Command executed successfully

============= s103 jps ============

Jps

QuorumPeerMain

JournalNode

Command executed successfully

============= s104 jps ============

QuorumPeerMain

Jps

JournalNode

Command executed successfully

============= s105 jps ============

DFSZKFailoverController

NameNode

Jps

Command executed successfully

[yinzhengjie@s101 ~]$

Check the service status ([yinzhengjie@s101 ~]$ xcall.sh jps)
