LSA、LDA

Latent semantic analysis (LSA) is a technique in natural language processing, in particular distributional semantics, of analyzing relationships between a set of documents and the terms they contain by producing a set of concepts related to the documents and terms. LSA assumes that words that are close in meaning will occur in similar pieces of text (the distributional hypothesis). A matrix containing word counts per paragraph (rows represent unique words and columns represent each paragraph) is constructed from a large piece of text and a mathematical technique called singular value decomposition (SVD) is used to reduce the number of rows while preserving the similarity structure among columns. Words are then compared by taking the cosine of the angle between the two vectors (or the dot product between the normalizations of the two vectors) formed by any two rows. Values close to 1 represent very similar words while values close to 0 represent very dissimilar words.

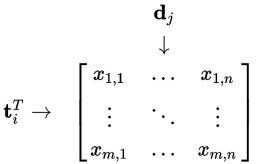

Occurrence matrix:

LSA can use a term-document matrix which describes the occurrences of terms in documents; it is a sparse matrix whose rows correspond to terms and whose columns correspond to documents. A typical example of the weighting of the elements of the matrix is tf-idf (term frequency–inverse document frequency): the weight of an element of the matrix is proportional to the number of times the terms appear in each document, where rare terms are upweighted to reflect their relative importance.

Rank lowering(矩阵降维):

After the construction of the occurrence matrix, LSA finds a low-rank approximation to the term-document matrix. There could be various reasons for these approximations:

- The original term-document matrix is presumed too large for the computing resources; in this case, the approximated low rank matrix is interpreted as an approximation (a "least and necessary evil").

- The original term-document matrix is presumed noisy: for example, anecdotal instances of terms are to be eliminated. From this point of view, the approximated matrix is interpreted as a de-noisified matrix(a better matrix than the original).

- The original term-document matrix is presumed overly sparse relative to the "true" term-document matrix. That is, the original matrix lists only the words actually in each document, whereas we might be interested in all words related to each document—generally a much larger set due to synonymy.

Derivation

Let $X$ be a matrix where element $ (i,j)$ describes the occurrence of term $ i $ in document $j$ (this can be, for example, the frequency). $X$ will look like this:

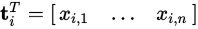

Now a row in this matrix will be a vector corresponding to a term, giving its relation to each document:

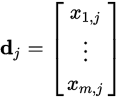

Likewise, a column in this matrix will be a vector corresponding to a document, giving its relation to each term:

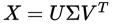

Now, from the theory of linear algebra, there exists a decomposition of $X$ such that $U$ and $ V $ are orthogonal matrices(正交矩阵) and $\Sigma$ is a diagonal matrix(对角矩阵). This is called a singular value decomposition (SVD):

The values  are called the singular values, and

are called the singular values, and  and

and  the left and right singular vectors. Notice the only part of

the left and right singular vectors. Notice the only part of  that contributes to

that contributes to

is the  row. Let this row vector be called

row. Let this row vector be called  . Likewise, the only part of

. Likewise, the only part of  that contributes to

that contributes to  is the

is the  column,

column, . These are not theeigenvectors,but depend on all the eigenvectors.

. These are not theeigenvectors,but depend on all the eigenvectors.

https://en.wikipedia.org/wiki/Latent_semantic_analysis

LSA、LDA的更多相关文章

- 京东商品评论的分类预测与LSA、LDA建模

(一)数据准备 1.爬取京东自营店kindle阅读器的评价数据,对数据进行预处理,使用机器学习算法对评价文本进行舆情分析,预测某用户对本商品的评价是好评还是差评.通过数据分析与模型分析,推测出不同型号 ...

- 文本情感分析(一):基于词袋模型(VSM、LSA、n-gram)的文本表示

现在自然语言处理用深度学习做的比较多,我还没试过用传统的监督学习方法做分类器,比如SVM.Xgboost.随机森林,来训练模型.因此,用Kaggle上经典的电影评论情感分析题,来学习如何用传统机器学习 ...

- 四大机器学习降维算法:PCA、LDA、LLE、Laplacian Eigenmaps

四大机器学习降维算法:PCA.LDA.LLE.Laplacian Eigenmaps 机器学习领域中所谓的降维就是指采用某种映射方法,将原高维空间中的数据点映射到低维度的空间中.降维的本质是学习一个映 ...

- 特征向量、特征值以及降维方法(PCA、SVD、LDA)

一.特征向量/特征值 Av = λv 如果把矩阵看作是一个运动,运动的方向叫做特征向量,运动的速度叫做特征值.对于上式,v为A矩阵的特征向量,λ为A矩阵的特征值. 假设:v不是A的速度(方向) 结果如 ...

- 我是这样一步步理解--主题模型(Topic Model)、LDA

1. LDA模型是什么 LDA可以分为以下5个步骤: 一个函数:gamma函数. 四个分布:二项分布.多项分布.beta分布.Dirichlet分布. 一个概念和一个理念:共轭先验和贝叶斯框架. 两个 ...

- 一口气讲完 LSA — PlSA —LDA在自然语言处理中的使用

自然语言处理之LSA LSA(Latent Semantic Analysis), 潜在语义分析.试图利用文档中隐藏的潜在的概念来进行文档分析与检索,能够达到比直接的关键词匹配获得更好的效果. LSA ...

- 【转】四大机器学习降维算法:PCA、LDA、LLE、Laplacian Eigenmaps

最近在找降维的解决方案中,发现了下面的思路,后面可以按照这思路进行尝试下: 链接:http://www.36dsj.com/archives/26723 引言 机器学习领域中所谓的降维就是指采用某种映 ...

- 降维算法整理--- PCA、KPCA、LDA、MDS、LLE 等

转自github: https://github.com/heucoder/dimensionality_reduction_alo_codes 网上关于各种降维算法的资料参差不齐,同时大部分不提供源 ...

- Word Embedding/RNN/LSTM

Word Embedding Word Embedding是一种词的向量表示,比如,对于这样的"A B A C B F G"的一个序列,也许我们最后能得到:A对应的向量为[0.1 ...

随机推荐

- (64)zabbix正则表达式应用

概述 在前面的<zabbix low-level discovery>一文中有filter一项,用于从结果中筛选出你想要的结果,比如我们在filter中填入^ext|^reiserfs则表 ...

- 如何用纯 CSS 创作一个均衡器 loader 动画

效果预览 在线演示 按下右侧的"点击预览"按钮可以在当前页面预览,点击链接可以全屏预览. https://codepen.io/comehope/pen/oybWBy 可交互视频教 ...

- mcu读写调式

拿仿真SPIS为例: 对于其他外设(UART.SPIM.I2S.I2C...)都是一个道理. 当MCU写时:主要对一个寄存器进行写,此寄存器是外设的入口(基本都会做并转串逻辑). spis_tx_da ...

- 数据结构( Pyhon 语言描述 ) — — 第7章:栈

栈概览 栈是线性集合,遵从后进先出原则( Last - in first - out , LIFO )原则 栈常用的操作包括压入( push ) 和弹出( pop ) 栈的应用 将中缀表达式转换为后缀 ...

- Python 基本数据类型 (二) - 字符串

str.expandtabs([tabsize]): str类型的expandtabs函数,有一个可选参数tabsize(制表符大小) 详细来说,expandtabs的意思就是,将字符串中的制表符\t ...

- centos 服务器配置

安装防火墙 安装Apache 安装MySQL 安装PHP 安装JDK 安装Tomcat 服务器上搭建Apache +MySQL+PHP +JDK +Tomcat环境,用的是Linux Centos7. ...

- chromedriver 下载

https://sites.google.com/a/chromium.org/chromedriver/downloads 百度网盘链接:https://pan.baidu.com/s/1nwL ...

- 学习笔记3——WordPress文件目录结构详解

**********根目录********** 1.index.php:WordPress核心索引文件,即博客输出文件.2.license.txt:WordPress GPL许可证文件.3.my-ha ...

- Opencv学习笔记——视频高斯模糊并分别输出

用两个窗口进行对比 #include "stdafx.h" #include <iostream> #include <opencv2/core/core.hpp ...

- bzoj1853: [Scoi2010]幸运数字 dp+容斥原理

在中国,很多人都把6和8视为是幸运数字!lxhgww也这样认为,于是他定义自己的“幸运号码”是十进制表示中只包含数字6和8的那些号码,比如68,666,888都是“幸运号码”!但是这种“幸运号码”总是 ...