CNN Basics (2)

Pooling

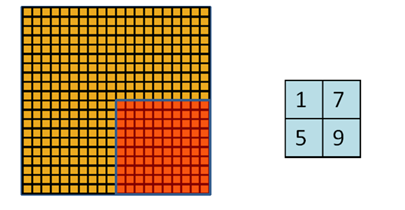

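Pooling addresses the problem that the output of a convolution layer has too many dimensions.

It is applied on top of the convolved features, over non-overlapping regions.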

A good introduction to CNNs is available here:

http://www.wildml.com/2015/11/understanding-convolutional-neural-networks-for-nlp/

Pooling also reduces the output dimensionality but (hopefully) keeps the most salient information.

By performing the max operation you are keeping information about whether or not the feature appeared in the sentence, but you are losing information about where exactly it appeared.

You are losing global information about locality (where in a sentence something happens), but you are keeping local information captured by your filters, like "not amazing" being very different from "amazing not".

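Local filters can learn word-order information such as "not amazing" versus "amazing not" (that is, they can tell the two apart), which a bag-of-words model cannot. Max pooling still preserves this information; it only loses the exact position where the pattern occurred.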
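The point about max pooling keeping order information but dropping position can be illustrated with a toy sketch. Here `bigram_feature_map` is a hypothetical stand-in for a learned convolution filter (it simply outputs 1.0 wherever an exact bigram starts), and the sentences are made up for illustration.

```python
def bigram_feature_map(tokens, bigram):
    """Toy 'filter': 1.0 at every position where the exact bigram starts."""
    return [1.0 if (tokens[i], tokens[i + 1]) == bigram else 0.0
            for i in range(len(tokens) - 1)]


def max_over_time(feature_map):
    """Max pooling over the whole sentence: keeps 'did it fire?', drops 'where?'."""
    return max(feature_map)


target = ("not", "amazing")
early = "not amazing at all really".split()
late = "the movie was not amazing".split()
reversed_order = "the movie was amazing not".split()

pooled_early = max_over_time(bigram_feature_map(early, target))
pooled_late = max_over_time(bigram_feature_map(late, target))
pooled_reversed = max_over_time(bigram_feature_map(reversed_order, target))

print(pooled_early, pooled_late, pooled_reversed)  # 1.0 1.0 0.0
```

The first two sentences give the same pooled value even though the bigram sits at different positions, while the reversed word order gives a different value.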

There are two aspects of this computation worth paying attention to: Location Invariance and Compositionality. Let's say you want to classify whether or not there's an elephant in an image. Because you are sliding your filters over the whole image you don't really care where the elephant occurs. In practice, pooling also gives you invariance to translation, rotation and scaling, but more on that later. The second key aspect is (local) compositionality. Each filter composes a local patch of lower-level features into higher-level representation. That's why CNNs are so powerful in Computer Vision. It makes intuitive sense that you build edges from pixels, shapes from edges, and more complex objects from shapes.

Source: <http://www.wildml.com/2015/11/understanding-convolutional-neural-networks-for-nlp/>

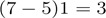

Optional parameters for conv and pooling

PADDING

You can see how wide convolution is useful, or even necessary, when you have a large filter relative to the input size. In the above, the narrow convolution yields an output of size $(7-5)+1=3$, and a wide convolution an output of size $(7+2\cdot 4-5)+1=11$. More generally, the formula for the output size is $n_{out}=(n_{in}+2\cdot n_{padding}-n_{filter})+1$.

Source: <http://www.wildml.com/2015/11/understanding-convolutional-neural-networks-for-nlp/>
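As a quick check of the output-size rule for a stride-1 convolution, n_out = (n_in + 2 * n_padding - n_filter) + 1, here is a minimal sketch. The helper name `conv_output_size` and the sizes used (input 7, filter 5, padding 4) are illustrative choices, not part of any library.

```python
def conv_output_size(n_in, n_filter, n_padding=0):
    """Output length of a stride-1 convolution with n_padding zeros on each side."""
    return (n_in + 2 * n_padding - n_filter) + 1


narrow = conv_output_size(n_in=7, n_filter=5)             # no padding
wide = conv_output_size(n_in=7, n_filter=5, n_padding=4)  # n_filter - 1 zeros per side

print(narrow, wide)  # 3 11
```

With padding of `n_filter - 1` on each side, every input element is covered by the filter in every possible alignment, which is why the wide output is larger than the input.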

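Narrow convolution corresponds to TensorFlow's VALID padding.

Zero-padding, the idea behind wide convolution, is what TensorFlow's SAME padding does: it pads the input with zeros so that, at stride 1, the output keeps the same size as the input. (The fully wide convolution above pads more than SAME does, so its output is larger than the input.)

A detailed explanation follows below.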

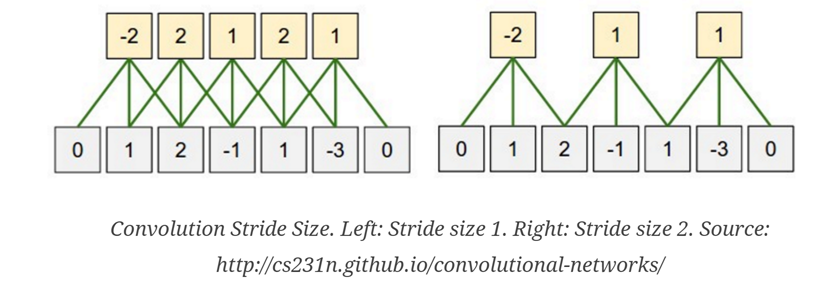

STRIDE

This one is easy to understand.

The stride is the distance the window moves at each step. For pooling, the stride usually equals the filter size.
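A small sketch of what the stride does to window placement, assuming no padding. The helper `window_starts` is hypothetical and only lists where each window begins along one dimension.

```python
def window_starts(n_in, size, stride):
    """Start index of every full window when sliding without padding."""
    return list(range(0, n_in - size + 1, stride))


non_overlapping = window_starts(8, size=2, stride=2)  # stride equals window size
overlapping = window_starts(8, size=2, stride=1)      # stride smaller than window size

print(non_overlapping)  # [0, 2, 4, 6]
print(overlapping)      # [0, 1, 2, 3, 4, 5, 6]
```

When the stride equals the window size, as is typical for pooling, the windows tile the input without overlap, so the output is roughly `n_in / size` long.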


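TensorFlow offers two padding options, SAME and VALID. Convolution and pooling share the same padding algorithm. The TensorFlow convolution documentation summarizes the difference between SAME and VALID:

For SAME padding, the output height and width are ceil(in_size / stride).

For VALID padding, the output height and width are ceil((in_size - filter_size + 1) / stride).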
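The output sizes under the two padding schemes can be sketched as follows, assuming the rule documented by TensorFlow: SAME gives ceil(in_size / stride) and VALID gives ceil((in_size - filter_size + 1) / stride). The helper `output_size` is a hypothetical name used for illustration; it works on one spatial dimension at a time.

```python
import math


def output_size(in_size, filter_size, stride, padding):
    """Output length along one spatial dimension for 'SAME' or 'VALID' padding."""
    if padding == "SAME":
        return math.ceil(in_size / stride)
    if padding == "VALID":
        return math.ceil((in_size - filter_size + 1) / stride)
    raise ValueError("padding must be 'SAME' or 'VALID'")


# 2x3 input, 2x2 window, stride 2 (a pooling-style setting)
pool_valid = (output_size(2, 2, 2, "VALID"), output_size(3, 2, 2, "VALID"))
pool_same = (output_size(2, 2, 2, "SAME"), output_size(3, 2, 2, "SAME"))

# 4x4 input, 2x2 filter, stride 1 (a convolution-style setting)
conv_valid = (output_size(4, 2, 1, "VALID"), output_size(4, 2, 1, "VALID"))
conv_same = (output_size(4, 2, 1, "SAME"), output_size(4, 2, 1, "SAME"))

print(pool_valid, pool_same)  # (1, 1) (1, 2)
print(conv_valid, conv_same)  # (3, 3) (4, 4)
```

Note that with SAME padding the filter size does not affect the output size at all; only the stride does.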

Examples

In [2]:

import tensorflow as tf

x = tf.constant([[1., 2., 3.],
                 [4., 5., 6.]])
x = tf.reshape(x, [1, 2, 3, 1])  # give a shape accepted by tf.nn.max_pool

# 2x2 max pooling with stride 2 under both padding schemes
valid_pad = tf.nn.max_pool(x, [1, 2, 2, 1], [1, 2, 2, 1], padding='VALID')
same_pad = tf.nn.max_pool(x, [1, 2, 2, 1], [1, 2, 2, 1], padding='SAME')

print(valid_pad.get_shape() == [1, 1, 1, 1])  # valid_pad is [5.]
print(same_pad.get_shape() == [1, 1, 2, 1])   # same_pad is [5., 6.]

sess = tf.InteractiveSession()  # TensorFlow 1.x graph-mode session, used by eval() below
sess.run(tf.initialize_all_variables())  # not strictly required: this graph has no variables

print(valid_pad.eval())
print(same_pad.eval())

Output:

True
True
[[[[ 5.]]]]
[[[[ 5.]
   [ 6.]]]]
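To make the padding behavior in this pooling example concrete without running TensorFlow, here is a pure-Python sketch. It is not TensorFlow's implementation: `max_pool_2d` is a hypothetical helper, and the SAME branch assumes the convention that any odd amount of padding puts the extra row or column at the bottom or right, with padded positions ignored when taking the max.

```python
import math


def max_pool_2d(image, size, stride, padding):
    """Max-pool a 2-D list of numbers; padding is 'VALID' or 'SAME'."""
    rows, cols = len(image), len(image[0])
    if padding == "VALID":
        out_rows = (rows - size) // stride + 1
        out_cols = (cols - size) // stride + 1
        pad_top = pad_left = 0
    else:  # 'SAME': output size depends only on the stride
        out_rows = math.ceil(rows / stride)
        out_cols = math.ceil(cols / stride)
        pad_top = max((out_rows - 1) * stride + size - rows, 0) // 2
        pad_left = max((out_cols - 1) * stride + size - cols, 0) // 2
    pooled = []
    for r in range(out_rows):
        row = []
        for c in range(out_cols):
            top, left = r * stride - pad_top, c * stride - pad_left
            # Clip the window to the image so padded positions are ignored.
            window = [image[i][j]
                      for i in range(max(top, 0), min(top + size, rows))
                      for j in range(max(left, 0), min(left + size, cols))]
            row.append(max(window))
        pooled.append(row)
    return pooled


small = [[1., 2., 3.],
         [4., 5., 6.]]

print(max_pool_2d(small, size=2, stride=2, padding="VALID"))  # [[5.0]]
print(max_pool_2d(small, size=2, stride=2, padding="SAME"))   # [[5.0, 6.0]]
```

With VALID, the third column is dropped because no full 2x2 window fits there; with SAME, a partial window covers it and contributes the 6.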

In [7]:

x = tf.constant([[1., 2., 3., 4.],
                 [4., 5., 6., 7.],
                 [8., 9., 10., 11.],
                 [12., 13., 14., 15.]])
x = tf.reshape(x, [1, 4, 4, 1])  # give a shape accepted by tf.nn.max_pool

valid_pad = tf.nn.max_pool(x, [1, 2, 2, 1], [1, 2, 2, 1], padding='VALID')
same_pad = tf.nn.max_pool(x, [1, 2, 2, 1], [1, 2, 2, 1], padding='SAME')

# The 4x4 input divides evenly into 2x2 windows, so both paddings give the same result
print(valid_pad.get_shape())
print(same_pad.get_shape())

# sess = tf.InteractiveSession()  # already created in the first cell
# sess.run(tf.initialize_all_variables())

print(valid_pad.eval())
print(same_pad.eval())

Output:

(1, 2, 2, 1)
(1, 2, 2, 1)
[[[[ 5.]
   [ 7.]]
  [[ 13.]
   [ 15.]]]]
[[[[ 5.]
   [ 7.]]
  [[ 13.]
   [ 15.]]]]

In [8]:

x = tf.constant([[1., 2., 3., 4.],
                 [4., 5., 6., 7.],
                 [8., 9., 10., 11.],
                 [12., 13., 14., 15.]])
x = tf.reshape(x, [1, 4, 4, 1])  # give a shape accepted by tf.nn.conv2d

W = tf.constant([[1., 0.],
                 [0., 1.]])
W = tf.reshape(W, [2, 2, 1, 1])  # [filter_height, filter_width, in_channels, out_channels]

valid_pad = tf.nn.conv2d(x, W, strides=[1, 1, 1, 1], padding='VALID')
same_pad = tf.nn.conv2d(x, W, strides=[1, 1, 1, 1], padding='SAME')

print(valid_pad.get_shape())
print(same_pad.get_shape())

# sess = tf.InteractiveSession()  # already created in the first cell
# sess.run(tf.initialize_all_variables())

print(valid_pad.eval())
print(same_pad.eval())

Output:

(1, 3, 3, 1)
(1, 4, 4, 1)
[[[[ 6.]
   [ 8.]
   [ 10.]]
  [[ 13.]
   [ 15.]
   [ 17.]]
  [[ 21.]
   [ 23.]
   [ 25.]]]]
[[[[ 6.]
   [ 8.]
   [ 10.]
   [ 4.]]
  [[ 13.]
   [ 15.]
   [ 17.]
   [ 7.]]
  [[ 21.]
   [ 23.]
   [ 25.]
   [ 11.]]
  [[ 12.]
   [ 13.]
   [ 14.]
   [ 15.]]]]
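The extra row and column in the SAME result come from zeros padded at the bottom and right. A pure-Python sketch of a stride-1, single-channel convolution shows this; it is not TensorFlow's implementation. `conv2d` here is a hypothetical helper that, like `tf.nn.conv2d`, does not flip the kernel, and its SAME branch assumes that when the total padding is odd the extra zero goes at the bottom or right.

```python
def conv2d(image, kernel, padding):
    """Stride-1 2-D convolution (no kernel flip) on lists; 'VALID' or 'SAME'."""
    rows, cols = len(image), len(image[0])
    k_rows, k_cols = len(kernel), len(kernel[0])
    if padding == "VALID":
        out_rows, out_cols = rows - k_rows + 1, cols - k_cols + 1
        pad_top = pad_left = 0
    else:  # 'SAME': output has the input's size at stride 1
        out_rows, out_cols = rows, cols
        pad_top, pad_left = (k_rows - 1) // 2, (k_cols - 1) // 2

    def pixel(i, j):
        # Positions outside the image read as zero (zero padding).
        return image[i][j] if 0 <= i < rows and 0 <= j < cols else 0.0

    return [[sum(kernel[a][b] * pixel(r - pad_top + a, c - pad_left + b)
                 for a in range(k_rows) for b in range(k_cols))
             for c in range(out_cols)]
            for r in range(out_rows)]


image = [[1., 2., 3., 4.],
         [4., 5., 6., 7.],
         [8., 9., 10., 11.],
         [12., 13., 14., 15.]]
kernel = [[1., 0.],
          [0., 1.]]

valid_out = conv2d(image, kernel, "VALID")
same_out = conv2d(image, kernel, "SAME")

print(valid_out[0])  # [6.0, 8.0, 10.0]
print(same_out[0])   # [6.0, 8.0, 10.0, 4.0]
print(same_out[3])   # [12.0, 13.0, 14.0, 15.0]
```

In the last column and last row the lower-right kernel weight lands on a padded zero, so the output there is just the input value itself.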

In [9]:

x = tf.constant([[1., 2., 3.],
                 [4., 5., 6.]])
x = tf.reshape(x, [1, 2, 3, 1])  # give a shape accepted by tf.nn.conv2d

W = tf.constant([[1., 0.],
                 [0., 1.]])
W = tf.reshape(W, [2, 2, 1, 1])  # [filter_height, filter_width, in_channels, out_channels]

valid_pad = tf.nn.conv2d(x, W, strides=[1, 1, 1, 1], padding='VALID')
same_pad = tf.nn.conv2d(x, W, strides=[1, 1, 1, 1], padding='SAME')

print(valid_pad.get_shape())
print(same_pad.get_shape())

# sess = tf.InteractiveSession()  # already created in the first cell
# sess.run(tf.initialize_all_variables())

print(valid_pad.eval())
print(same_pad.eval())

Output:

(1, 1, 2, 1)
(1, 2, 3, 1)
[[[[ 6.]
   [ 8.]]]]
[[[[ 6.]
   [ 8.]
   [ 3.]]
  [[ 4.]
   [ 5.]
   [ 6.]]]]
