Installing a ZooKeeper + Kafka cluster

vi ~/.bashrc

export ZOOKEEPER_HOME=/usr/local/zk

export PATH=$PATH:$ZOOKEEPER_HOME/bin

source ~/.bashrc

cd /usr/local/zk/conf

cp zoo_sample.cfg zoo.cfg

vi zoo.cfg

Change:

dataDir=/usr/local/zk/data

Add:

server.0=eshop-cache01:2888:3888

server.1=eshop-cache02:2888:3888

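server.2=eshop-cache03:2888:3888

On each node, also create a file named myid under dataDir that contains only that node's number (0, 1, or 2), matching its server.N entry above.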

import java.util.HashSet;
import java.util.Set;

import org.apache.ibatis.session.SqlSessionFactory;
import org.apache.tomcat.jdbc.pool.DataSource;
import org.mybatis.spring.SqlSessionFactoryBean;
import org.mybatis.spring.annotation.MapperScan;
import org.springframework.boot.SpringApplication;
import org.springframework.boot.autoconfigure.EnableAutoConfiguration;
import org.springframework.boot.autoconfigure.SpringBootApplication;
import org.springframework.boot.context.embedded.ServletListenerRegistrationBean;
import org.springframework.boot.context.properties.ConfigurationProperties;
import org.springframework.context.annotation.Bean;
import org.springframework.context.annotation.ComponentScan;
import org.springframework.core.io.support.PathMatchingResourcePatternResolver;
import org.springframework.jdbc.datasource.DataSourceTransactionManager;
import org.springframework.transaction.PlatformTransactionManager;

import com.roncoo.eshop.cache.listener.InitListener;

import redis.clients.jedis.HostAndPort;
import redis.clients.jedis.JedisCluster;

// Application entry point and bean configuration
@EnableAutoConfiguration
@SpringBootApplication
@ComponentScan
@MapperScan("com.roncoo.eshop.cache.mapper")
public class Application {

    // Connection pool, configured from the spring.datasource.* properties
    @Bean
    @ConfigurationProperties(prefix="spring.datasource")
    public DataSource dataSource() {
        return new DataSource();
    }

    // MyBatis session factory; mapper XML files are loaded from classpath:/mybatis/
    @Bean
    public SqlSessionFactory sqlSessionFactoryBean() throws Exception {
        SqlSessionFactoryBean sqlSessionFactoryBean = new SqlSessionFactoryBean();
        sqlSessionFactoryBean.setDataSource(dataSource());
        PathMatchingResourcePatternResolver resolver = new PathMatchingResourcePatternResolver();
        sqlSessionFactoryBean.setMapperLocations(resolver.getResources("classpath:/mybatis/*.xml"));
        return sqlSessionFactoryBean.getObject();
    }

    @Bean
    public PlatformTransactionManager transactionManager() {
        return new DataSourceTransactionManager(dataSource());
    }

    // Redis cluster client; the nodes listed here are only the seed nodes
    @Bean
    public JedisCluster JedisClusterFactory() {
        Set<HostAndPort> jedisClusterNodes = new HashSet<HostAndPort>();
        jedisClusterNodes.add(new HostAndPort("192.168.31.19", 7003));
        jedisClusterNodes.add(new HostAndPort("192.168.31.19", 7004));
        jedisClusterNodes.add(new HostAndPort("192.168.31.227", 7006));
        JedisCluster jedisCluster = new JedisCluster(jedisClusterNodes);
        return jedisCluster;
    }

    // Registers InitListener so it runs when the servlet context starts
    @SuppressWarnings({ "rawtypes", "unchecked" })
    @Bean
    public ServletListenerRegistrationBean servletListenerRegistrationBean() {
        ServletListenerRegistrationBean servletListenerRegistrationBean =
                new ServletListenerRegistrationBean();
        servletListenerRegistrationBean.setListener(new InitListener());
        return servletListenerRegistrationBean;
    }

    public static void main(String[] args) {
        SpringApplication.run(Application.class, args);
    }

}

import javax.servlet.ServletContext;
import javax.servlet.ServletContextEvent;
import javax.servlet.ServletContextListener;

import org.springframework.context.ApplicationContext;
import org.springframework.web.context.support.WebApplicationContextUtils;

import com.roncoo.eshop.kafka.KafkaConsumer;
import com.roncoo.eshop.spring.SpringContext;

/**
 * Listener that runs at system startup
 */
public class InitListener implements ServletContextListener {

    @Override
    public void contextInitialized(ServletContextEvent sce) {
        ServletContext sc = sce.getServletContext();
        ApplicationContext context = WebApplicationContextUtils.getWebApplicationContext(sc);
        // Expose the Spring context to classes that Spring does not manage
        SpringContext.setApplicationContext(context);

        // Start consuming the "cache-message" topic in a background thread
        new Thread(new KafkaConsumer("cache-message")).start();
    }

    @Override
    public void contextDestroyed(ServletContextEvent sce) {
    }

}

import org.springframework.cache.annotation.EnableCaching;
import org.springframework.cache.ehcache.EhCacheCacheManager;
import org.springframework.cache.ehcache.EhCacheManagerFactoryBean;
import org.springframework.context.annotation.Bean;
import org.springframework.context.annotation.Configuration;
import org.springframework.core.io.ClassPathResource;

// Cache configuration class
@Configuration
@EnableCaching
public class CacheConfiguration {

    // Builds the Ehcache CacheManager from ehcache.xml on the classpath
    @Bean
    public EhCacheManagerFactoryBean ehCacheManagerFactoryBean() {
        EhCacheManagerFactoryBean ehCacheManagerFactoryBean =
                new EhCacheManagerFactoryBean();
        ehCacheManagerFactoryBean.setConfigLocation(new ClassPathResource("ehcache.xml"));
        ehCacheManagerFactoryBean.setShared(true);
        return ehCacheManagerFactoryBean;
    }

    // Adapts the Ehcache CacheManager to Spring's cache abstraction
    @Bean
    public EhCacheCacheManager ehCacheCacheManager(EhCacheManagerFactoryBean ehCacheManagerFactoryBean) {
        return new EhCacheCacheManager(ehCacheManagerFactoryBean.getObject());
    }

}

import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.Properties;

import kafka.consumer.Consumer;
import kafka.consumer.ConsumerConfig;
import kafka.consumer.KafkaStream;
import kafka.javaapi.consumer.ConsumerConnector;

// Kafka consumer
public class KafkaConsumer implements Runnable {

    private ConsumerConnector consumerConnector;
    private String topic;

    public KafkaConsumer(String topic) {
        this.consumerConnector = Consumer.createJavaConsumerConnector(
                createConsumerConfig());
        this.topic = topic;
    }

    @SuppressWarnings("rawtypes")
    public void run() {
        // Request a single stream for the topic
        Map<String, Integer> topicCountMap = new HashMap<String, Integer>();
        topicCountMap.put(topic, 1);

        Map<String, List<KafkaStream<byte[], byte[]>>> consumerMap =
                consumerConnector.createMessageStreams(topicCountMap);
        List<KafkaStream<byte[], byte[]>> streams = consumerMap.get(topic);

        // Hand each stream to its own message-processing thread
        for (KafkaStream stream : streams) {
            new Thread(new KafkaMessageProcessor(stream)).start();
        }
    }

    // Create the Kafka consumer config
    private static ConsumerConfig createConsumerConfig() {
        Properties props = new Properties();
        props.put("zookeeper.connect", "192.168.31.187:2181,192.168.31.19:2181,192.168.31.227:2181");
        props.put("group.id", "eshop-cache-group");
        props.put("zookeeper.session.timeout.ms", "40000");
        props.put("zookeeper.sync.time.ms", "200");
        props.put("auto.commit.interval.ms", "1000");
        return new ConsumerConfig(props);
    }

}

import com.alibaba.fastjson.JSONObject;
import com.roncoo.eshop.model.ProductInfo;
import com.roncoo.eshop.model.ShopInfo;
import com.roncoo.eshop.service.CacheService;
import com.roncoo.eshop.spring.SpringContext;

import kafka.consumer.ConsumerIterator;
import kafka.consumer.KafkaStream;

// Kafka message-processing thread
@SuppressWarnings("rawtypes")
public class KafkaMessageProcessor implements Runnable {

    private KafkaStream kafkaStream;
    private CacheService cacheService;

    public KafkaMessageProcessor(KafkaStream kafkaStream) {
        this.kafkaStream = kafkaStream;
        // This class is not a Spring bean, so look the service up by name
        this.cacheService = (CacheService) SpringContext.getApplicationContext()
                .getBean("cacheService");
    }

    @SuppressWarnings("unchecked")
    public void run() {
        ConsumerIterator<byte[], byte[]> it = kafkaStream.iterator();
        while (it.hasNext()) {
            String message = new String(it.next().message());

            // First convert the message into a JSON object
            JSONObject messageJSONObject = JSONObject.parseObject(message);

            // Extract the identifier of the service the message belongs to
            String serviceId = messageJSONObject.getString("serviceId");

            // Dispatch on the service: product info or shop info
            if ("productInfoService".equals(serviceId)) {
                processProductInfoChangeMessage(messageJSONObject);
            } else if ("shopInfoService".equals(serviceId)) {
                processShopInfoChangeMessage(messageJSONObject);
            }
        }
    }

    // Handle a product-info change message
    private void processProductInfoChangeMessage(JSONObject messageJSONObject) {
        // Extract the product ID
        Long productId = messageJSONObject.getLong("productId");

        // Call the product info service's interface.
        // The call is simulated here; a real one would look like getProductInfo?productId=1.
        // The product info service would normally query the database for productId=1 and return the result.
        String productInfoJSON = "{\"id\": 1, \"name\": \"iphone7 phone\", \"price\": 5599, \"pictureList\":\"a.jpg,b.jpg\", \"specification\": \"iphone7 specifications\", \"service\": \"iphone7 after-sales service\", \"color\": \"red,white,black\", \"size\": \"5.5\", \"shopId\": 1}";
        ProductInfo productInfo = JSONObject.parseObject(productInfoJSON, ProductInfo.class);

        cacheService.saveProductInfo2LocalCache(productInfo);
        System.out.println("===================product info just saved to the local cache: " + cacheService.getProductInfoFromLocalCache(productId));
        cacheService.saveProductInfo2ReidsCache(productInfo);
    }

    // Handle a shop-info change message
    private void processShopInfoChangeMessage(JSONObject messageJSONObject) {
        // Extract the product ID and the shop ID
        Long productId = messageJSONObject.getLong("productId");
        Long shopId = messageJSONObject.getLong("shopId");

        // Simulated response from the shop info service
        String shopInfoJSON = "{\"id\": 1, \"name\": \"Xiao Wang's phone shop\", \"level\": 5, \"goodCommentRate\":0.99}";
        ShopInfo shopInfo = JSONObject.parseObject(shopInfoJSON, ShopInfo.class);

        cacheService.saveShopInfo2LocalCache(shopInfo);
        System.out.println("===================shop info just saved to the local cache: " + cacheService.getShopInfoFromLocalCache(shopId));
        cacheService.saveShopInfo2ReidsCache(shopInfo);
    }

}
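
The serviceId dispatch in run() can also be expressed as a lookup table, which keeps the if/else chain from growing as more services are added. The following is only a sketch under stated assumptions: MessageDispatcher and its methods are hypothetical names that do not appear in the original code, and a plain Map stands in for the parsed fastjson JSONObject.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.function.Consumer;

// Hypothetical helper (not part of the original project): routes a parsed
// message to the handler registered for its "serviceId" field.
class MessageDispatcher {

    private final Map<String, Consumer<Map<String, Object>>> handlers = new HashMap<>();

    // Register the handler for one service, e.g. "productInfoService".
    void register(String serviceId, Consumer<Map<String, Object>> handler) {
        handlers.put(serviceId, handler);
    }

    // Returns true if a handler was found and invoked, false if the
    // serviceId is missing or unknown (the message is then ignored).
    boolean dispatch(Map<String, Object> message) {
        Consumer<Map<String, Object>> handler = handlers.get(message.get("serviceId"));
        if (handler == null) {
            return false;
        }
        handler.accept(message);
        return true;
    }

    public static void main(String[] args) {
        MessageDispatcher dispatcher = new MessageDispatcher();
        dispatcher.register("productInfoService",
                m -> System.out.println("product changed: " + m.get("productId")));
        dispatcher.register("shopInfoService",
                m -> System.out.println("shop changed: " + m.get("shopId")));

        // Stand-in for a message such as {"serviceId": "productInfoService", "productId": 1}
        Map<String, Object> message = new HashMap<>();
        message.put("serviceId", "productInfoService");
        message.put("productId", 1L);
        dispatcher.dispatch(message);
    }
}
```

Unknown service identifiers are dropped silently here, which mirrors the behavior of the if/else version above.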

// Product info
public class ProductInfo {

    private Long id;
    private String name;
    private Double price;

    public ProductInfo() {
    }

    public ProductInfo(Long id, String name, Double price) {
        this.id = id;
        this.name = name;
        this.price = price;
    }

    public Long getId() {
        return id;
    }

    public void setId(Long id) {
        this.id = id;
    }

    public String getName() {
        return name;
    }

    public void setName(String name) {
        this.name = name;
    }

    public Double getPrice() {
        return price;
    }

    public void setPrice(Double price) {
        this.price = price;
    }

}

// Shop info
public class ShopInfo {

    private Long id;
    private String name;
    private Integer level;
    private Double goodCommentRate;

    public Long getId() {
        return id;
    }

    public void setId(Long id) {
        this.id = id;
    }

    public String getName() {
        return name;
    }

    public void setName(String name) {
        this.name = name;
    }

    public Integer getLevel() {
        return level;
    }

    public void setLevel(Integer level) {
        this.level = level;
    }

    public Double getGoodCommentRate() {
        return goodCommentRate;
    }

    public void setGoodCommentRate(Double goodCommentRate) {
        this.goodCommentRate = goodCommentRate;
    }

    @Override
    public String toString() {
        return "ShopInfo [id=" + id + ", name=" + name + ", level=" + level
                + ", goodCommentRate=" + goodCommentRate + "]";
    }

}

import com.roncoo.eshop.model.ProductInfo;
import com.roncoo.eshop.model.ShopInfo;

// Cache service interface
public interface CacheService {

    // Save product info to the local cache
    public ProductInfo saveLocalCache(ProductInfo productInfo);

    // Get product info from the local cache
    public ProductInfo getLocalCache(Long id);

    // Save product info to the local ehcache cache
    public ProductInfo saveProductInfo2LocalCache(ProductInfo productInfo);

    // Get product info from the local ehcache cache
    public ProductInfo getProductInfoFromLocalCache(Long productId);

    // Save shop info to the local ehcache cache
    public ShopInfo saveShopInfo2LocalCache(ShopInfo shopInfo);

    // Get shop info from the local ehcache cache
    public ShopInfo getShopInfoFromLocalCache(Long shopId);

    // Save product info to redis
    public void saveProductInfo2ReidsCache(ProductInfo productInfo);

    // Save shop info to redis
    public void saveShopInfo2ReidsCache(ShopInfo shopInfo);

}

import javax.annotation.Resource;

import org.springframework.cache.annotation.CachePut;
import org.springframework.cache.annotation.Cacheable;
import org.springframework.stereotype.Service;

import com.alibaba.fastjson.JSONObject;
import com.roncoo.eshop.model.ProductInfo;
import com.roncoo.eshop.model.ShopInfo;
import com.roncoo.eshop.service.CacheService;

import redis.clients.jedis.JedisCluster;

// Cache service implementation
@Service("cacheService")
public class CacheServiceImpl implements CacheService {

    public static final String CACHE_NAME = "local";

    @Resource
    private JedisCluster jedisCluster;

    // Save product info to the local cache
    @CachePut(value = CACHE_NAME, key = "'key_'+#productInfo.getId()")
    public ProductInfo saveLocalCache(ProductInfo productInfo) {
        return productInfo;
    }

    // Get product info from the local cache.
    // On a hit Spring returns the cached value and this body never runs.
    @Cacheable(value = CACHE_NAME, key = "'key_'+#id")
    public ProductInfo getLocalCache(Long id) {
        return null;
    }

    // Save product info to the local ehcache cache
    @CachePut(value = CACHE_NAME, key = "'product_info_'+#productInfo.getId()")
    public ProductInfo saveProductInfo2LocalCache(ProductInfo productInfo) {
        return productInfo;
    }

    // Get product info from the local ehcache cache
    @Cacheable(value = CACHE_NAME, key = "'product_info_'+#productId")
    public ProductInfo getProductInfoFromLocalCache(Long productId) {
        return null;
    }

    // Save shop info to the local ehcache cache
    @CachePut(value = CACHE_NAME, key = "'shop_info_'+#shopInfo.getId()")
    public ShopInfo saveShopInfo2LocalCache(ShopInfo shopInfo) {
        return shopInfo;
    }

    // Get shop info from the local ehcache cache
    @Cacheable(value = CACHE_NAME, key = "'shop_info_'+#shopId")
    public ShopInfo getShopInfoFromLocalCache(Long shopId) {
        return null;
    }

    // Save product info to redis
    public void saveProductInfo2ReidsCache(ProductInfo productInfo) {
        String key = "product_info_" + productInfo.getId();
        jedisCluster.set(key, JSONObject.toJSONString(productInfo));
    }

    // Save shop info to redis
    public void saveShopInfo2ReidsCache(ShopInfo shopInfo) {
        String key = "shop_info_" + shopInfo.getId();
        jedisCluster.set(key, JSONObject.toJSONString(shopInfo));
    }

}
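
The service above writes each object under the same key (for example product_info_1) to both the local cache and redis. A minimal sketch of how the two levels might cooperate follows. It rests on several assumptions: plain HashMaps stand in for Ehcache and the redis cluster, TwoLevelCacheSketch and its methods are hypothetical names, and the read path that falls back to redis is an illustration rather than something the original service implements.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical illustration: in-memory maps stand in for Ehcache and redis.
class TwoLevelCacheSketch {

    private final Map<String, String> localCache = new HashMap<>(); // stand-in for Ehcache
    private final Map<String, String> redis = new HashMap<>();      // stand-in for the redis cluster

    // Same key format as the service above: "product_info_" + id.
    static String productKey(long productId) {
        return "product_info_" + productId;
    }

    // Write path: store the JSON in the local cache first, then in redis.
    void saveProduct(long productId, String productJson) {
        String key = productKey(productId);
        localCache.put(key, productJson);
        redis.put(key, productJson);
    }

    // Simulates the local entry expiring or being evicted.
    void evictLocal(long productId) {
        localCache.remove(productKey(productId));
    }

    // Assumed read path: try the local cache, fall back to redis,
    // and repopulate the local cache on a redis hit.
    String getProduct(long productId) {
        String key = productKey(productId);
        String value = localCache.get(key);
        if (value != null) {
            return value;
        }
        value = redis.get(key);
        if (value != null) {
            localCache.put(key, value);
        }
        return value;
    }

    public static void main(String[] args) {
        TwoLevelCacheSketch cache = new TwoLevelCacheSketch();
        cache.saveProduct(1L, "{\"id\": 1, \"name\": \"iphone7\"}");
        cache.evictLocal(1L);
        // Served from the redis stand-in, then cached locally again.
        System.out.println(cache.getProduct(1L));
    }
}
```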

import org.springframework.context.ApplicationContext;

/**
 * Holder for the Spring application context
 */
public class SpringContext {

    private static ApplicationContext applicationContext;

    public static ApplicationContext getApplicationContext() {
        return applicationContext;
    }

    public static void setApplicationContext(ApplicationContext applicationContext) {
        SpringContext.applicationContext = applicationContext;
    }

}

import javax.annotation.Resource;

import org.springframework.stereotype.Controller;
import org.springframework.web.bind.annotation.RequestMapping;
import org.springframework.web.bind.annotation.ResponseBody;

import com.roncoo.eshop.model.ProductInfo;
import com.roncoo.eshop.service.CacheService;

@Controller
public class CacheController {

    @Resource
    private CacheService cacheService;

    @RequestMapping("/testPutCache")
    @ResponseBody
    public String testPutCache(ProductInfo productInfo) {
        cacheService.saveLocalCache(productInfo);
        return "success";
    }

    @RequestMapping("/testGetCache")
    @ResponseBody
    public ProductInfo testGetCache(Long id) {
        return cacheService.getLocalCache(id);
    }

}

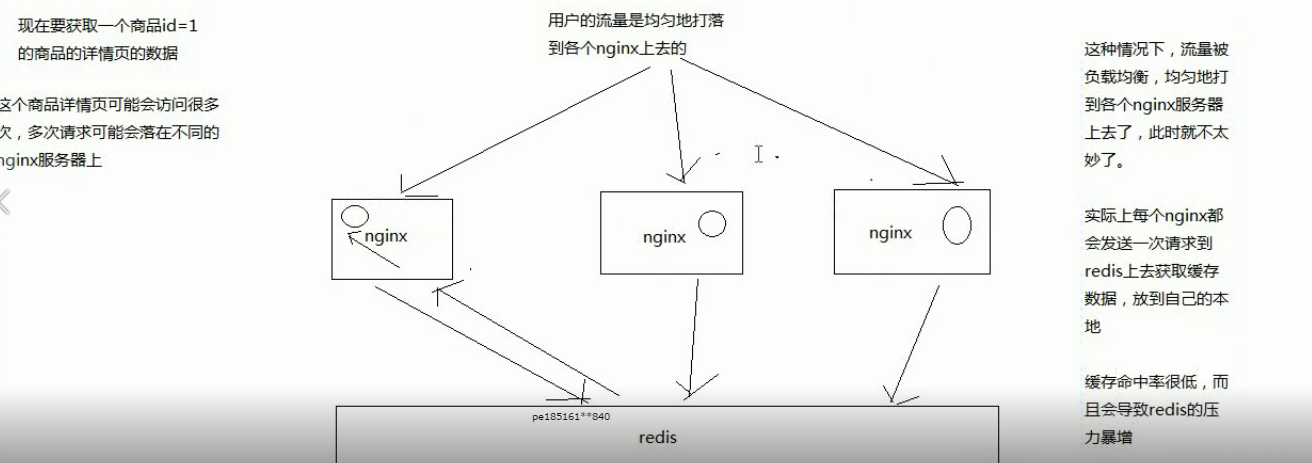

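How to improve the cache hit rate:

Use two layers of nginx: a distribution layer and an application layer.

The distribution-layer nginx holds the traffic-routing logic and policy. It can apply rules you define yourself, for example hashing on productId and then taking the result modulo the number of backend nginx servers.

This routes every request for a given product to the same backend nginx server, so that product's data is fetched from redis only once and all later requests are served from that nginx server's local cache.

The backend nginx servers are called application servers; the front-most nginx server is called the distribution server.

This greatly raises the hit rate of the nginx local cache layer, greatly reduces the load on the redis backend, and improves performance.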
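
The hash-and-modulo rule can be sketched in a few lines. In a real deployment this logic would live in the distribution-layer nginx configuration rather than in Java; the version below only illustrates the rule itself. ProductRouter and the backend names app-nginx-1 and app-nginx-2 are hypothetical.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Hypothetical illustration of the routing rule: hash(productId) mod backend count.
class ProductRouter {

    private final List<String> backends;

    ProductRouter(List<String> backends) {
        if (backends.isEmpty()) {
            throw new IllegalArgumentException("at least one backend is required");
        }
        this.backends = new ArrayList<>(backends);
    }

    // Always returns the same backend for the same productId,
    // as long as the backend list does not change.
    String route(long productId) {
        // floorMod keeps the index non-negative even when the hash is negative.
        int index = Math.floorMod(Long.hashCode(productId), backends.size());
        return backends.get(index);
    }

    public static void main(String[] args) {
        ProductRouter router = new ProductRouter(Arrays.asList("app-nginx-1", "app-nginx-2"));
        for (long productId = 1; productId <= 4; productId++) {
            System.out.println("productId=" + productId + " -> " + router.route(productId));
        }
    }
}
```

One consequence of plain modulo routing is that adding or removing a backend changes the mapping for most products, so the local caches have to warm up again after the backend count changes.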
