Understanding Convolutions【转】

Understanding Convolutions

In a previous post, we built up an understanding of convolutional neural networks, without referring to any significant mathematics. To go further, however, we need to understand convolutions.

If we just wanted to understand convolutional neural networks, it might suffice to roughly understand convolutions. But the aim of this series is to bring us to the frontier of convolutional neural networks and explore new options. To do that, we’re going to need to understand convolutions very deeply.

Thankfully, with a few examples, convolution becomes quite a straightforward idea.

Lessons from a Dropped Ball

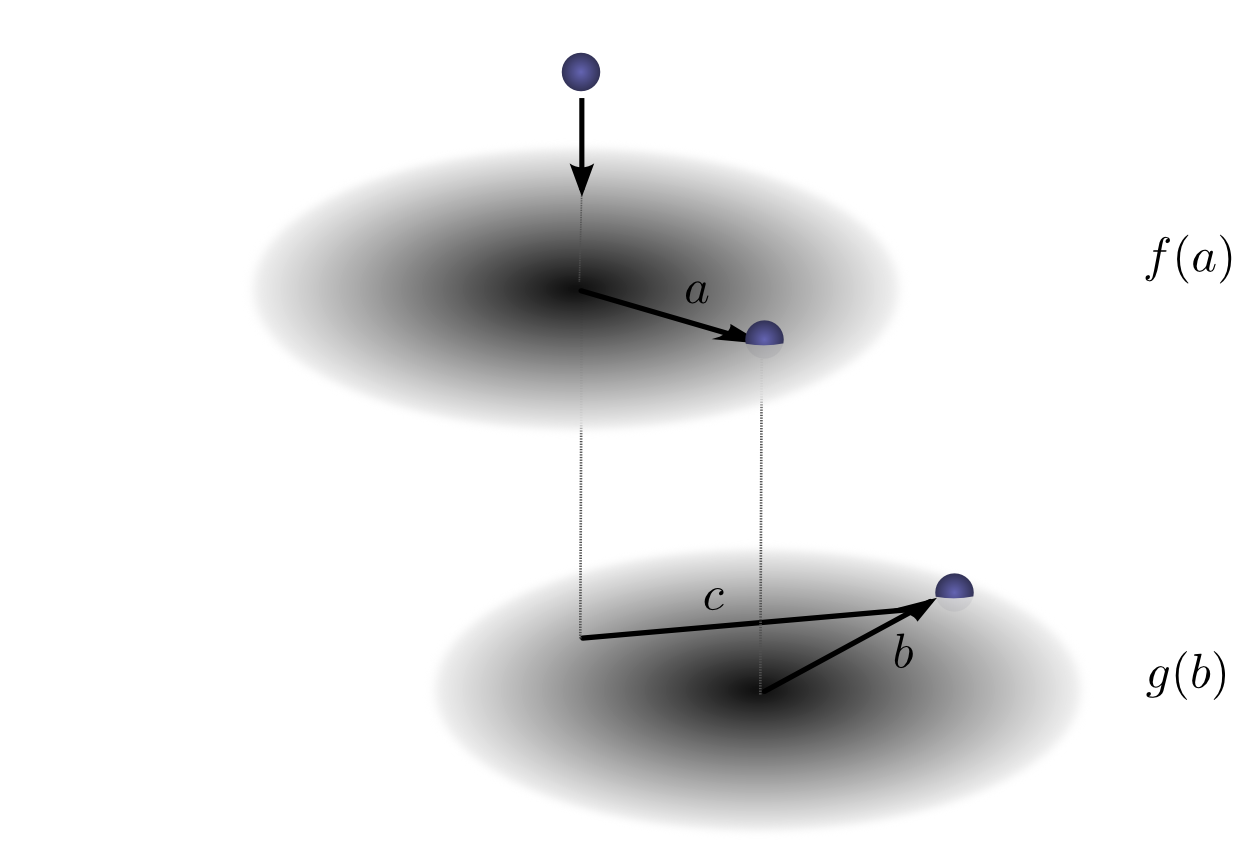

Imagine we drop a ball from some height onto the ground, where it only has one dimension of motion. How likely is it that a ball will go a distance c if you drop it and then drop it again from above the point at which it landed?

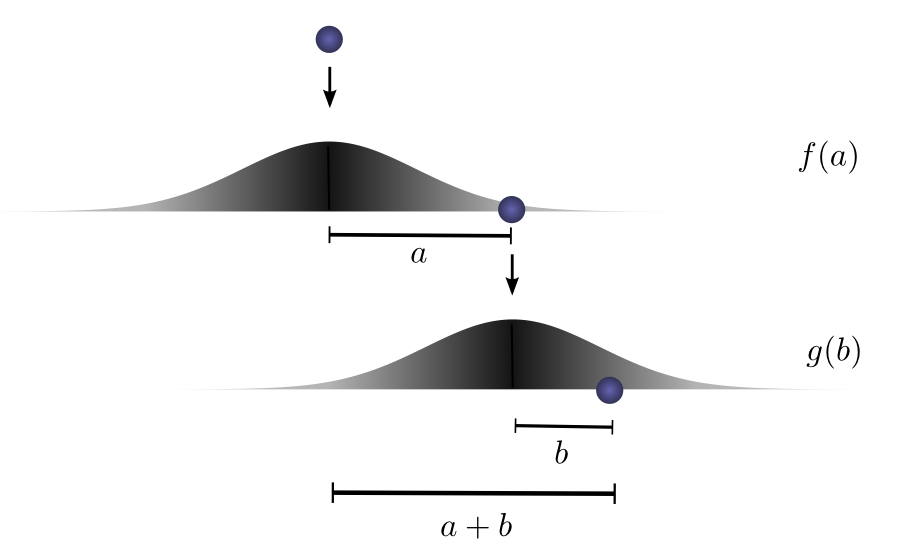

Let’s break this down. After the first drop, it will land a units away from the starting point with probability f(a), where f is the probability distribution.

Now after this first drop, we pick the ball up and drop it from another height above the point where it first landed. The probability of the ball rolling b units away from the new starting point is g(b), where g may be a different probability distribution if it’s dropped from a different height.

If we fix the result of the first drop so we know the ball went distance a, for the ball to go a total distance c, the distance traveled in the second drop is also fixed at b, where a+b=c. So the probability of this happening is simply f(a)⋅g(b).1

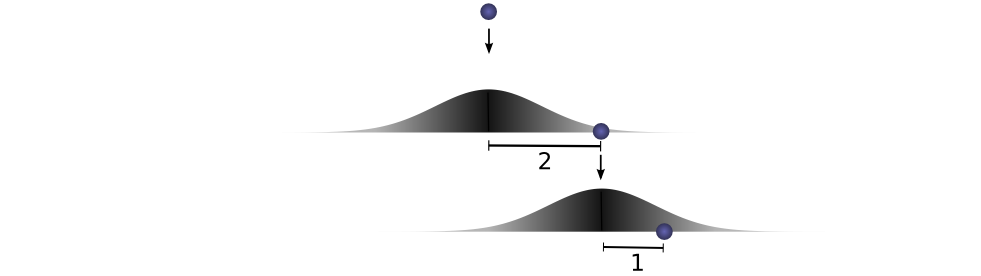

Let’s think about this with a specific discrete example. We want the total distance c to be 3. If the first time it rolls, a=2, the second time it must roll b=1 in order to reach our total distance a+b=3. The probability of this is f(2)⋅g(1).

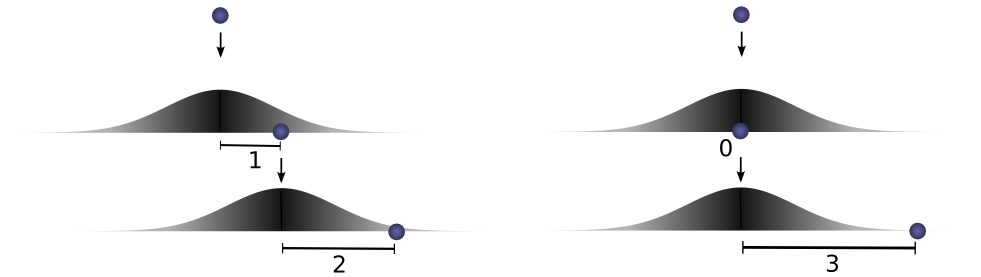

However, this isn’t the only way we could get to a total distance of 3. The ball could roll 1 units the first time, and 2 the second. Or 0 units the first time and all 3 the second. It could go any aand b, as long as they add to 3.

The probabilities are f(1)⋅g(2) and f(0)⋅g(3), respectively.

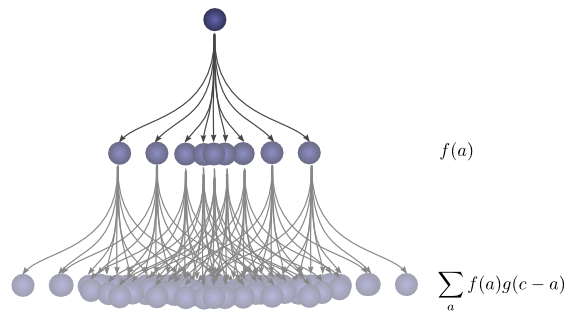

In order to find the total likelihood of the ball reaching a total distance of c, we can’t consider only one possible way of reaching c. Instead, we consider all the possible ways of partitioning cinto two drops a and b and sum over the probability of each way.

We already know that the probability for each case of a+b=c is simply f(a)⋅g(b). So, summing over every solution to a+b=c, we can denote the total likelihood as:

Turns out, we’re doing a convolution! In particular, the convolution of f and g, evluated at c is defined:

If we substitute b=c−a, we get:

This is the standard definition2 of convolution.

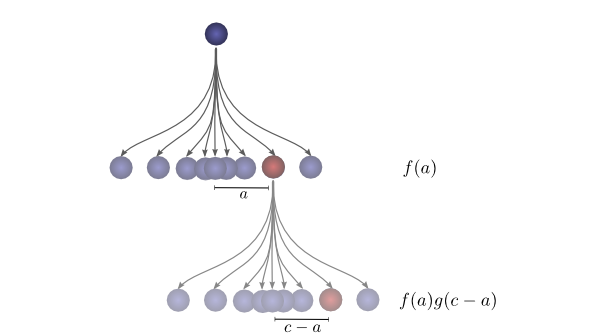

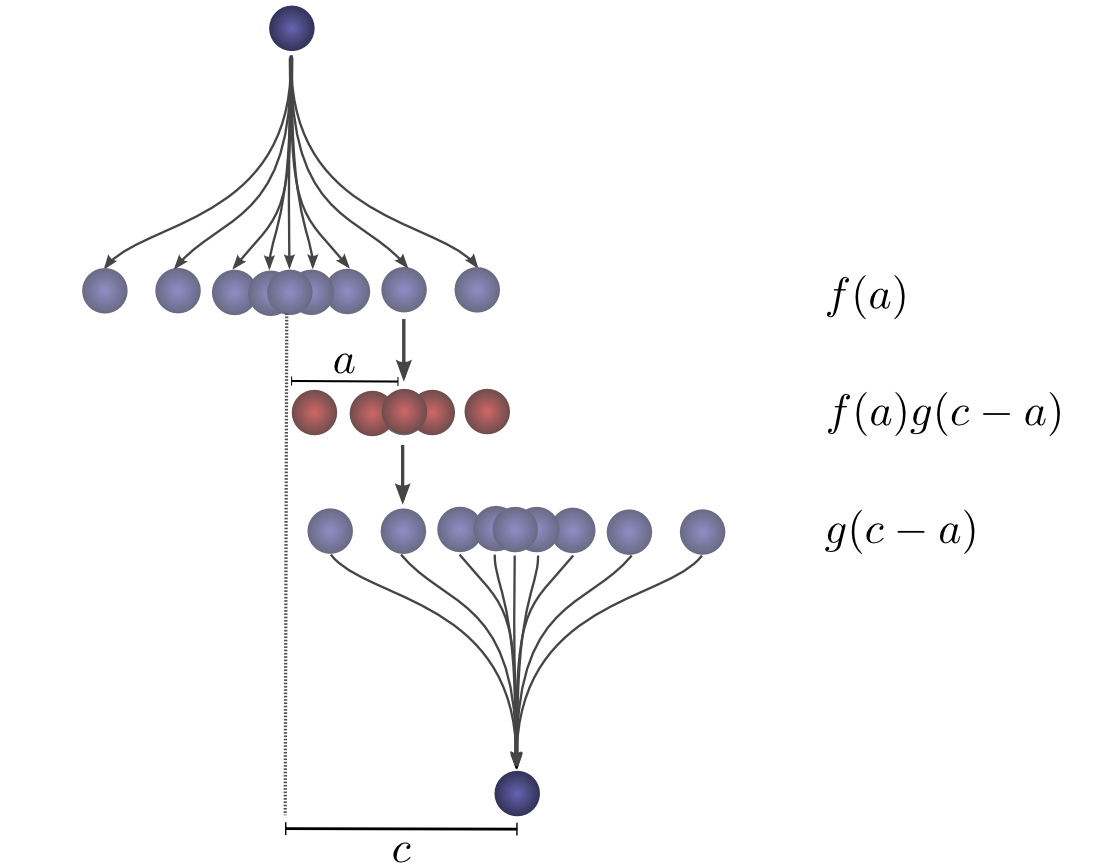

To make this a bit more concrete, we can think about this in terms of positions the ball might land. After the first drop, it will land at an intermediate position a with probability f(a). If it lands at a, it has probability g(c−a) of landing at a position c.

To get the convolution, we consider all intermediate positions.

Visualizing Convolutions

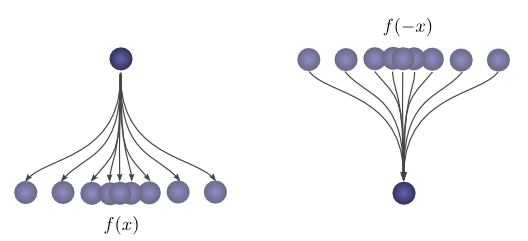

There’s a very nice trick that helps one think about convolutions more easily.

First, an observation. Suppose the probability that a ball lands a certain distance x from where it started is f(x). Then, afterwards, the probability given that it started a distance x from where it landed is f(−x).

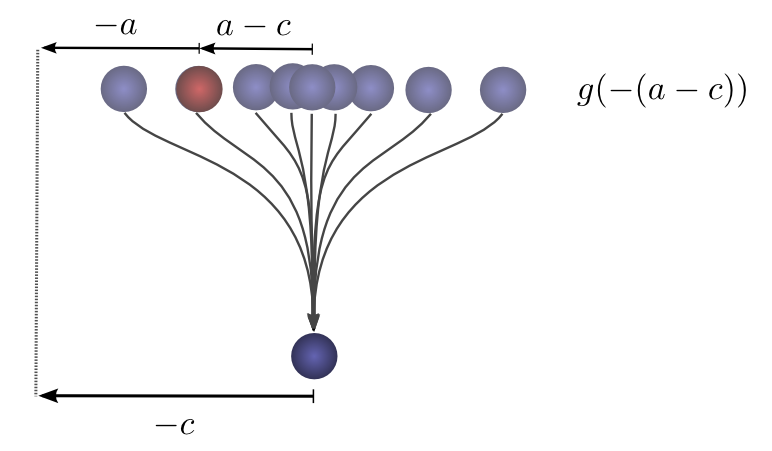

If we know the ball lands at a position c after the second drop, what is the probability that the previous position was a?

So the probability that the previous position was a is g(−(a−c))=g(c−a).

Now, consider the probability each intermediate position contributes to the ball finally landing atc. We know the probability of the first drop putting the ball into the intermediate position a is f(a). We also know that the probability of it having been in a, if it lands at c is g(c−a).

Summing over the as, we get the convolution.

The advantage of this approach is that it allows us to visualize the evaluation of a convolution at a value c in a single picture. By shifting the bottom half shifting around, we can evaluate the convolution at other values of c. This allows us to understand the convolution as a whole.

For example, we can see that it peaks when the distributions align.

And shrinks as the intersection between the distributions gets smaller.

By using this trick in an animation, it really becomes possible to visually understand convolutions.

Below, we’re able to visualize the convolution of two box functions:

Armed with this perspective, a lot of things become more intuitive.

Let’s consider a non-probabilistic example. Convolutions are sometimes used in audio manipulation. For example, one might use a function with two spikes in it, but zero everywhere else, to create an echo. As our double-spiked function slides, one spike hits a point in time first, adding that signal to the output sound, and later, another spike follows, adding a second, delayed copy.

Higher Dimensional Convolutions

Convolutions are an extremely general idea. We can also use them in a higher number of dimensions.

Let’s consider our example of a falling ball again. Now, as it falls, it’s position shifts not only in one dimension, but in two.

Convolution is the same as before:

Except, now a, b and c are vectors. To be more explicit,

Or in the standard definition:

Just like one-dimensional convolutions, we can think of a two-dimensional convolution as sliding one function on top of another, multiplying and adding.

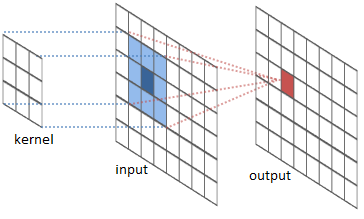

One common application of this is image processing. We can think of images as two-dimensional functions. Many important image transformations are convolutions where you convolve the image function with a very small, local function called a “kernel.”

The kernel slides to every position of the image and computes a new pixel as a weighted sum of the pixels it floats over.

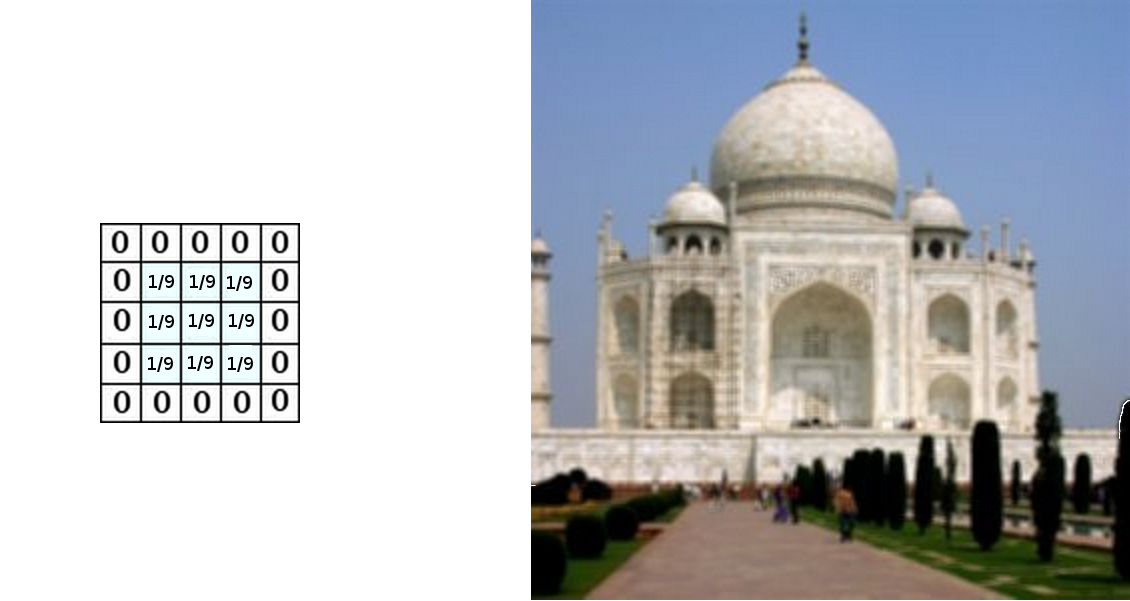

For example, by averaging a 3x3 box of pixels, we can blur an image. To do this, our kernel takes the value 1/9 on each pixel in the box,

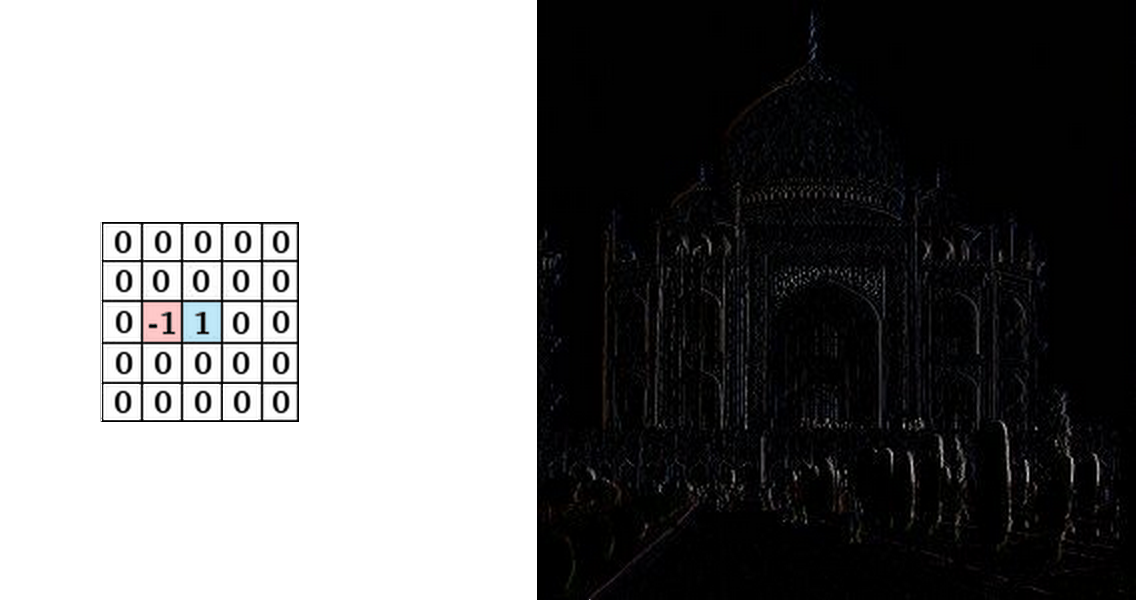

We can also detect edges by taking the values −1 and 1 on two adjacent pixels, and zero everywhere else. That is, we subtract two adjacent pixels. When side by side pixels are similar, this is gives us approximately zero. On edges, however, adjacent pixels are very different in the direction perpendicular to the edge.

The gimp documentation has many other examples.

Convolutional Neural Networks

So, how does convolution relate to convolutional neural networks?

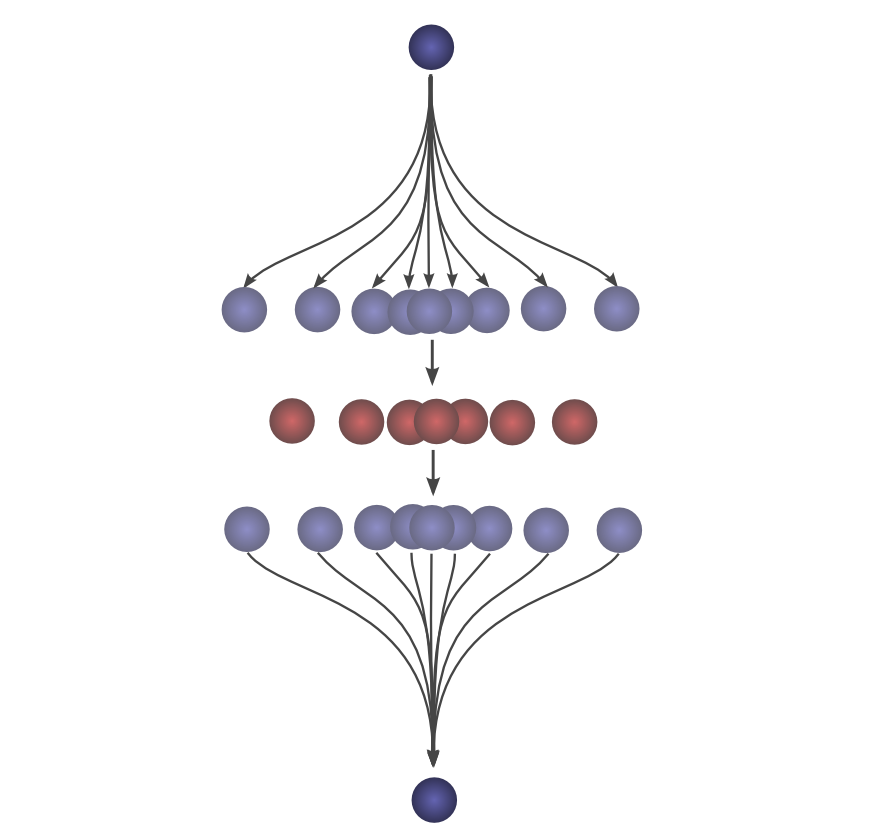

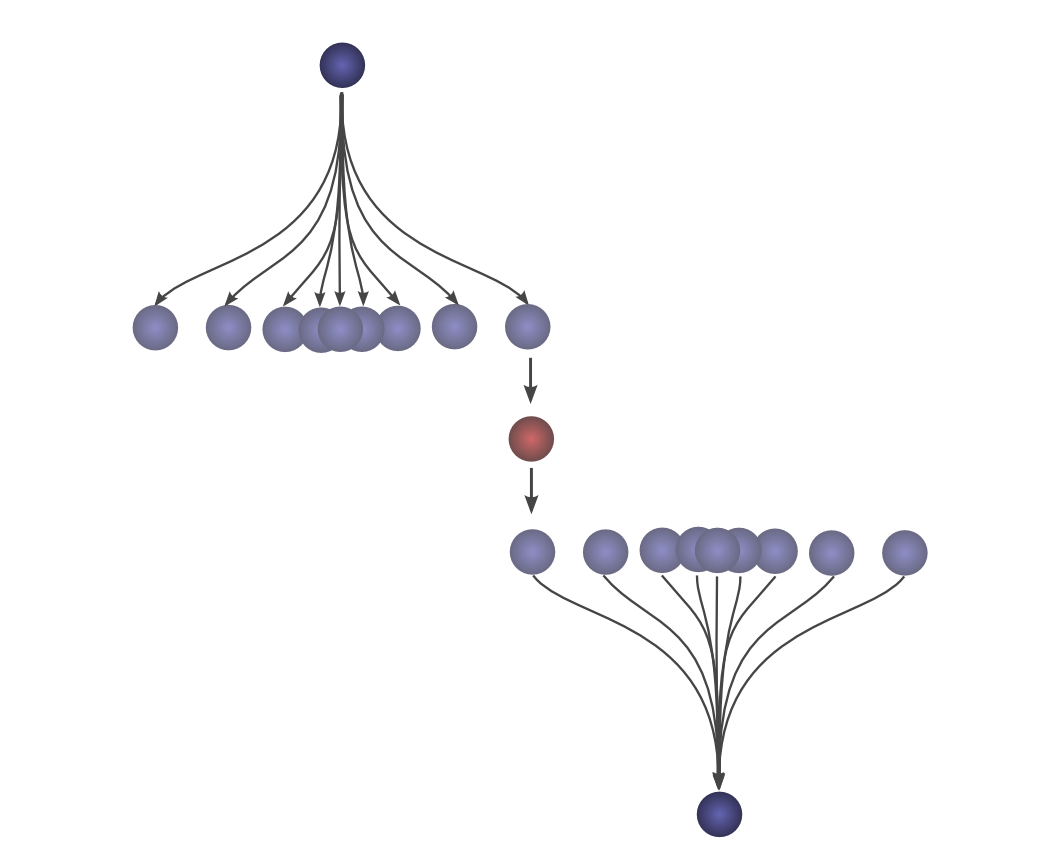

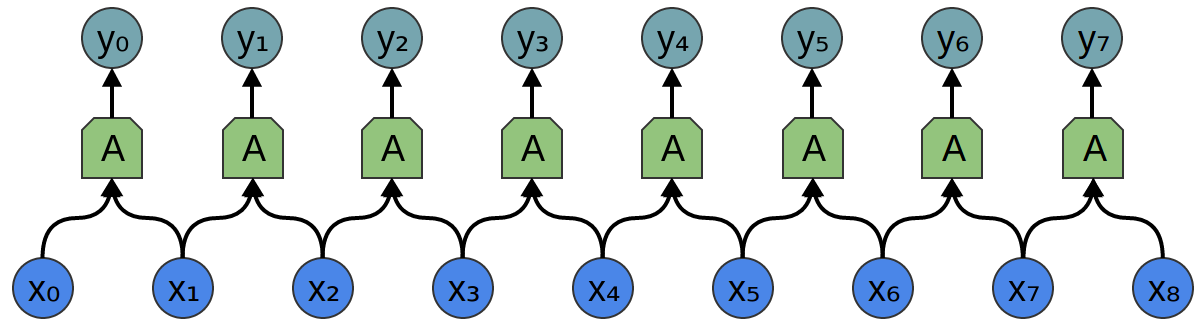

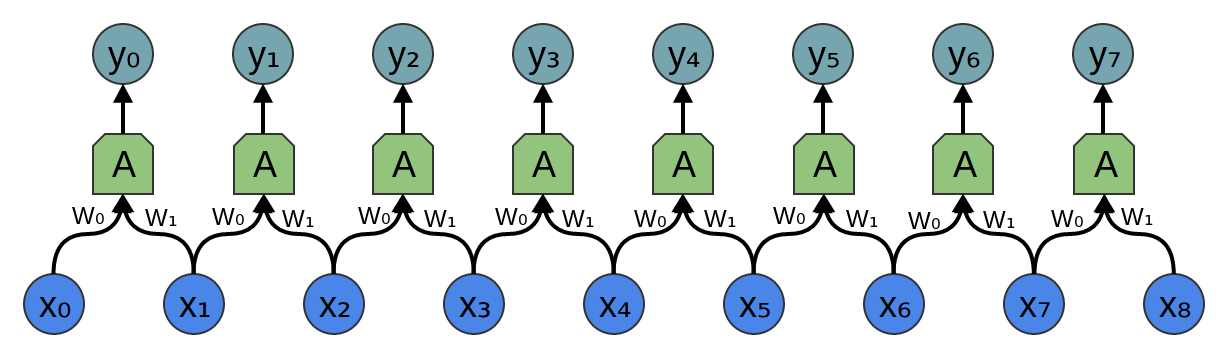

Consider a 1-dimensional convolutional layer with inputs {xn} and outputs {yn}, like we discussed in the previous post:

As we observed, we can describe the outputs in terms of the inputs:

Generally, A would be multiple neurons. But suppose it is a single neuron for a moment.

Recall that a typical neuron in a neural network is described by:

Where x0, x1… are the inputs. The weights, w0, w1, … describe how the neuron connects to its inputs. A negative weight means that an input inhibits the neuron from firing, while a positive weight encourages it to. The weights are the heart of the neuron, controlling its behavior.3 Saying that multiple neurons are identical is the same thing as saying that the weights are the same.

It’s this wiring of neurons, describing all the weights and which ones are identical, that convolution will handle for us.

Typically, we describe all the neurons in a layers at once, rather than individually. The trick is to have a weight matrix, W:

For example, we get:

Each row of the matrix describes the weights connect a neuron to its inputs.

Returning to the convolutional layer, though, because there are multiple copies of the same neuron, many weights appear in multiple positions.

Which corresponds to the equations:

So while, normally, a weight matrix connects every input to every neuron with different weights:

The matrix for a convolutional layer like the one above looks quite different. The same weights appear in a bunch of positions. And because neurons don’t connect to many possible inputs, there’s lots of zeros.

Multiplying by the above matrix is the same thing as convolving with [...0,w1,w0,0...]. The function sliding to different positions corresponds to having neurons at those positions.

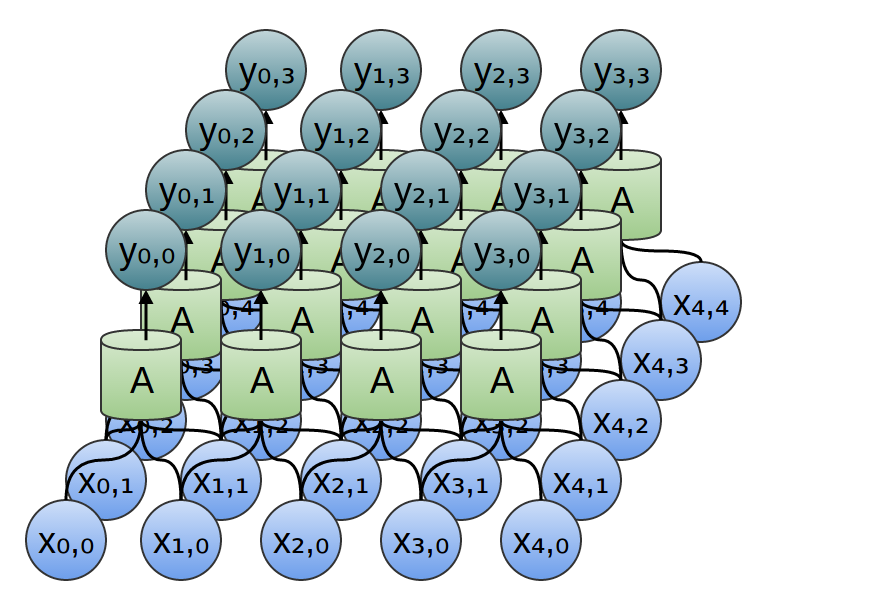

What about two-dimensional convolutional layers?

The wiring of a two dimensional convolutional layer corresponds to a two-dimensional convolution.

Consider our example of using a convolution to detect edges in an image, above, by sliding a kernel around and applying it to every patch. Just like this, a convolutional layer will apply a neuron to every patch of the image.

Conclusion

We introduced a lot of mathematical machinery in this blog post, but it may not be obvious what we gained. Convolution is obviously a useful tool in probability theory and computer graphics, but what do we gain from phrasing convolutional neural networks in terms of convolutions?

The first advantage is that we have some very powerful language for describing the wiring of networks. The examples we’ve dealt with so far haven’t been complicated enough for this benefit to become clear, but convolutions will allow us to get rid of huge amounts of unpleasant book-keeping for us.

Secondly, convolutions come with significant implementational advantages. Many libraries provide highly efficient convolution routines. Further, while convolution naively appears to be an O(n2) operation, using some rather deep mathematical insights, it is possible to create a O(nlog(n)) implementation. We will discuss this in much greater detail in a future post.

In fact, the use of highly-efficient parallel convolution implementations on GPUs has been essential to recent progress in computer vision.

Next Posts in this Series

This post is part of a series on convolutional neural networks and their generalizations. The first two posts will be review for those familiar with deep learning, while later ones should be of interest to everyone. To get updates, subscribe to my RSS feed!

Please comment below or on the side. Pull requests can be made on github.

Acknowledgments

I’m extremely grateful to Eliana Lorch, for extensive discussion of convolutions and help writing this post.

I’m also grateful to Michael Nielsen and Dario Amodei for their comments and support.

We want the probability of the ball rolling a units the first time and also rolling b units the second time. The distributions P(A)=f(a) and P(b)=g(b) are independent, with both distributions centered at 0. So P(a,b)=P(a)∗P(b)=f(a)⋅g(b).↩

The non-standard definition, which I haven’t previously seen, seems to have a lot of benefits. In future posts, we will find this definition very helpful because it lends itself to generalization to new algebraic structures. But it also has the advantage that it makes a lot of algebraic properties of convolutions really obvious.

For example, convolution is a commutative operation. That is, f∗g=g∗f. Why?

∑a+b=cf(a)⋅g(b) = ∑b+a=cg(b)⋅f(a)Convolution is also associative. That is, (f∗g)∗h=f∗(g∗h). Why?

∑(a+b)+c=d(f(a)⋅g(b))⋅h(c) = ∑a+(b+c)=df(a)⋅(g(b)⋅h(c))There’s also the bias, which is the “threshold” for whether the neuron fires, but it’s much simpler and I don’t want to clutter this section talking about it.↩

Understanding Convolutions【转】的更多相关文章

- Understanding Convolutions

http://colah.github.io/posts/2014-07-Understanding-Convolutions/ Posted on July 13, 2014 neural netw ...

- 【机器学习Machine Learning】资料大全

昨天总结了深度学习的资料,今天把机器学习的资料也总结一下(友情提示:有些网站需要"科学上网"^_^) 推荐几本好书: 1.Pattern Recognition and Machi ...

- 【转】自学成才秘籍!机器学习&深度学习经典资料汇总

小编都深深的震惊了,到底是谁那么好整理了那么多干货性的书籍.小编对此人表示崇高的敬意,小编不是文章的生产者,只是文章的搬运工. <Brief History of Machine Learn ...

- 机器学习(Machine Learning)&深度学习(Deep Learning)资料

<Brief History of Machine Learning> 介绍:这是一篇介绍机器学习历史的文章,介绍很全面,从感知机.神经网络.决策树.SVM.Adaboost到随机森林.D ...

- 机器学习(Machine Learning)&深入学习(Deep Learning)资料

<Brief History of Machine Learning> 介绍:这是一篇介绍机器学习历史的文章,介绍很全面,从感知机.神经网络.决策树.SVM.Adaboost 到随机森林. ...

- 机器学习(Machine Learning)&深度学习(Deep Learning)资料【转】

转自:机器学习(Machine Learning)&深度学习(Deep Learning)资料 <Brief History of Machine Learning> 介绍:这是一 ...

- 机器学习&深度学习经典资料汇总,data.gov.uk大量公开数据

<Brief History of Machine Learning> 介绍:这是一篇介绍机器学习历史的文章,介绍很全面,从感知机.神经网络.决策树.SVM.Adaboost到随机森林.D ...

- 机器学习、NLP、Python和Math最好的150余个教程(建议收藏)

编辑 | MingMing 尽管机器学习的历史可以追溯到1959年,但目前,这个领域正以前所未有的速度发展.最近,我一直在网上寻找关于机器学习和NLP各方面的好资源,为了帮助到和我有相同需求的人,我整 ...

- 近200篇机器学习&深度学习资料分享(含各种文档,视频,源码等)(1)

原文:http://developer.51cto.com/art/201501/464174.htm 编者按:本文收集了百来篇关于机器学习和深度学习的资料,含各种文档,视频,源码等.而且原文也会不定 ...

随机推荐

- Mysql启停以及恢复备份恢复数据库

1.mysql启停 进入cmd 输入如下命令 net stop mysql(自己起的mysql名称) -------停 net strat mysql ---------------------- ...

- 编程作业—C++初探 简单的学生信息处理程序实现

简单的学生信息处理程序实现 来源: POJ (Coursera声明:在POJ上完成的习题将不会计入Coursera的最后成绩.) 注意: 总时间限制: 1000ms 内存限制: 65536kB 描述 ...

- Win7系统下VS2008安装SP1补丁解决JQuery无智能提示的问题

jQuery在vs2008中的智能提示 1 安装VS2008SP1补丁 要确保您的vs2008已经打了sp1补丁,在vs2008的帮助里的关于,要是安装了sp1,会出现“版本 3.5 sp1”,没安 ...

- 2014年第五届蓝桥杯试题C/C++程序设计B组——李白打酒

题目描述: 标题:李白打酒 话说大诗人李白,一生好饮.幸好他从不开车. 一天,他提着酒壶,从家里出来,酒壶中有酒2斗.他边走边唱: 无事街上走,提壶去打酒. 逢店加一倍,遇花喝一斗. 这一路上,他一共 ...

- ADO .NET 链接 增删改查

ADO.NET: 数据访问技术 就是将C#和MSSQL连接起来的一个纽带 可以通过ADO.NET将内存中的临时数据写入到数据库中也可以将数据库中的数据提取到内存中供程序调用 所有数据访问技术的基础 连 ...

- Knockout.Js官网学习(event绑定、submit绑定)

event绑定 event绑定在DOM元素上添加指定的事件句柄以便元素被触发的时候执行定义的JavaScript 函数.大部分情况下是用在keypress,mouseover和mouseout上. 简 ...

- 分析MapReduce执行过程

分析MapReduce执行过程 MapReduce运行的时候,会通过Mapper运行的任务读取HDFS中的数据文件,然后调用自己的方法,处理数据,最后输出. Reducer任务会接收Mapper任务输 ...

- python匿名函数(lambda)

简单来说,编程中提到的 lambda 表达式,通常是在需要一个函数,但是又不想费神去命名一个函数的场合下使用,也就是指匿名函数 当我们在传入函数时,有些时候,不需要显式地定义函数,直接传入匿名函数更方 ...

- Github上LeakCanary编译报错CreateProcess error=2的解决方法

现象说明: 从github上拉下LeakCanary编译时报错 CreateProcess error=2, ϵͳÕҲ»µ½ָ¶ 原因分析: 该现象是由于Windows中Gradle调用命令未加cmd ...

- linux log find 查询

常用的日志查询命令:find 1.从根目录开始查找所有扩展名为.log的文本文件,并找出包含”ERROR”的行: find / -type f -name "*.log" | xa ...