kubernetes实践之一:kubernetes二进制包安装

kubernetes二进制部署

1、环境规划

|

软件 |

版本 |

|

Linux操作系统 |

CentOS Linux release 7.6.1810 (Core) |

|

Kubernetes |

1.9 |

|

Docker |

18.09.3 |

|

etcd |

3.3.10 |

|

角色 |

IP |

组件 |

推荐配置 |

|

k8s_master etcd01 |

192.168.1.153 |

kube-apiserver kube-controller-manager kube-scheduler etcd |

CPU 2核+ 2G内存+ |

|

k8s_node01 etcd02 |

192.168.1.154 |

kubelet kube-proxy docker flannel etcd |

|

|

k8s_node02 etcd03 |

192.168.1.155 |

kubelet kube-proxy docker flannel etcd |

2、 单Master集群架构

3、 系统常规参数配置

3.1 关闭selinux

sed -i 's/SELINUX=enforcing/SELINUX=disabled/' /etc/selinux/config

setenforce 0

3.2 文件数调整

sed -i '/* soft nproc 4096/d' /etc/security/limits.d/20-nproc.conf

echo '* - nofile 65536' >> /etc/security/limits.conf

echo '* soft nofile 65535' >> /etc/security/limits.conf

echo '* hard nofile 65535' >> /etc/security/limits.conf

echo 'fs.file-max = 65536' >> /etc/sysctl.conf

3.3 防火墙关闭

systemctl disable firewalld.service

systemctl stop firewalld.service

3.4 常用工具安装及时间同步

yum -y install vim telnet iotop openssh-clients openssh-server ntp net-tools.x86_64 wget

ntpdate time.windows.com

3.5 hosts文件配置(3个节点)

vim /etc/hosts

192.168.1.153 k8s_master

192.168.1.154 k8s_node01

192.168.1.155 k8s_node02

3.6 服务器之间免密钥登录

ssh-keygen

ssh-copy-id -i ~/.ssh/id_rsa.pub root@192.168.1.154

ssh-copy-id -i ~/.ssh/id_rsa.pub root@192.168.1.155

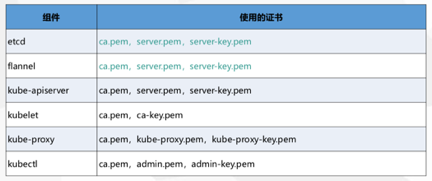

4、 自签ssl证书

4.1 etcd生成证书

|

cfssl.sh |

etcd-cert.sh |

etcd.sh |

4.1.1 安装cfssl工具(cfssl.sh)

cd /home/k8s_install/ssl_etcd

chmod +x cfssl.sh

./cfssl.sh

内容如下:

curl -L https://pkg.cfssl.org/R1.2/cfssl_linux-amd64 -o /usr/local/bin/cfssl

curl -L https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64 -o /usr/local/bin/cfssljson

curl -L https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64 -o /usr/local/bin/cfssl-certinfo

chmod +x /usr/local/bin/cfssl /usr/local/bin/cfssljson /usr/local/bin/cfssl-certinfo

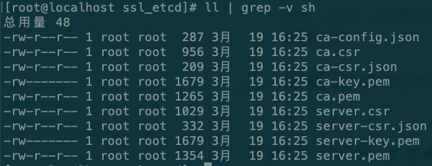

4.1.2 生成etcd 自签ca证书(etcd-cert.sh)

chmod +x etcd-cert.sh

./etcd-cert.sh

内容如下:

cat > ca-config.json <<EOF

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"www": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

EOF

cat > ca-csr.json <<EOF

{

"CN": "etcd CA",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Beijing",

"ST": "Beijing"

}

]

}

EOF

cfssl gencert -initca ca-csr.json | cfssljson -bare ca –

#-----------------------

cat > server-csr.json <<EOF

{

"CN": "etcd",

"hosts": [

"192.168.1.153",

"192.168.1.154",

"192.168.1.155",

"192.168.1.156",

"192.168.1.157"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing"

}

]

}

EOF

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=www server-csr.json | cfssljson -bare server

注意:hosts一定要包含所有节点,可以多部署几个预留节点以便后续扩容,否则还需要重新生成

4.1.3 etcd二进制包安装

#存放配置文件,可执行文件,证书文件

mkdir /opt/etcd/{cfg,bin,ssl} -p

#ssl 证书切记复制到/opt/etcd/ssl/

cp {ca,server-key,server}.pem /opt/etcd/ssl/

#部署etcd以及增加etcd服务(etcd.sh)

cd /home/k8s_install/soft/

tar -zxvf etcd-v3.3.10-linux-amd64.tar.gz

cd etcd-v3.3.10-linux-amd64

mv etcd etcdctl /opt/etcd/bin/

cd /home/k8s_install/ssl_etcd

chmod +x etcd.sh

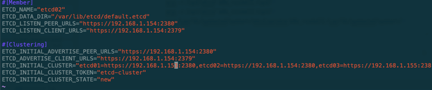

参数说明:1.etcd名称 2.本机ip 3.其他两个etcd名称以及地址

./etcd.sh etcd01 192.168.1.153 etcd02=https://192.168.1.154:2380,etcd03=https://192.168.1.155:2380

执行后会卡住实际是在等待其他两个节点加入

其他两个node节点部署etcd:

scp -r /opt/etcd/ k8s_node01:/opt/

scp -r /opt/etcd/ k8s_node02:/opt/

scp /usr/lib/systemd/system/etcd.service k8s_node01:/usr/lib/systemd/system/

scp /usr/lib/systemd/system/etcd.service k8s_node02:/usr/lib/systemd/system/

#修改node节点配置文件(2个节点都需要更改)

ssh k8s_node01

vim /opt/etcd/cfg/etcd

ETCD_NAME以及ip地址

systemctl daemon-reload

systemctl start etcd.service

etcd.sh脚本内容如下:

#!/bin/bash

ETCD_NAME=$1

ETCD_IP=$2

ETCD_CLUSTER=$3

WORK_DIR=/opt/etcd

cat <<EOF >$WORK_DIR/cfg/etcd

#[Member]

ETCD_NAME="${ETCD_NAME}"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://${ETCD_IP}:2380"

ETCD_LISTEN_CLIENT_URLS="https://${ETCD_IP}:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://${ETCD_IP}:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://${ETCD_IP}:2379"

ETCD_INITIAL_CLUSTER="etcd01=https://${ETCD_IP}:2380,${ETCD_CLUSTER}"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

EOF

cat <<EOF >/usr/lib/systemd/system/etcd.service

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target

[Service]

Type=notify

EnvironmentFile=${WORK_DIR}/cfg/etcd

ExecStart=${WORK_DIR}/bin/etcd \

--name=\${ETCD_NAME} \

--data-dir=\${ETCD_DATA_DIR} \

--listen-peer-urls=\${ETCD_LISTEN_PEER_URLS} \

--listen-client-urls=\${ETCD_LISTEN_CLIENT_URLS},http://127.0.0.1:2379 \

--advertise-client-urls=\${ETCD_ADVERTISE_CLIENT_URLS} \

--initial-advertise-peer-urls=\${ETCD_INITIAL_ADVERTISE_PEER_URLS} \

--initial-cluster=\${ETCD_INITIAL_CLUSTER} \

--initial-cluster-token=\${ETCD_INITIAL_CLUSTER_TOKEN} \

--initial-cluster-state=new \

--cert-file=${WORK_DIR}/ssl/server.pem \

--key-file=${WORK_DIR}/ssl/server-key.pem \

--peer-cert-file=${WORK_DIR}/ssl/server.pem \

--peer-key-file=${WORK_DIR}/ssl/server-key.pem \

--trusted-ca-file=${WORK_DIR}/ssl/ca.pem \

--peer-trusted-ca-file=${WORK_DIR}/ssl/ca.pem

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable etcd

systemctl restart etcd

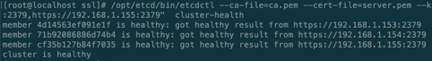

4.1.4 查看etcd集群健康情况

cd /opt/etcd/ssl

/opt/etcd/bin/etcdctl --ca-file=ca.pem --cert-file=server.pem --key-file=server-key.pem --endpoints="https://192.168.1.153:2379,https://192.168.1.154:2379,https://192.168.1.155:2379" cluster-health

5、 安装Docker(node 节点)

5.1 安装依赖包

yum install -y yum-utils \ device-mapper-persistent-data \ lvm2

5.2 配置官方源(替换为阿里源)

yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

5.3 更新并安装Docker-CE

yum makecache fast

yum install docker-ce -y

5.4 配置docker加速器

curl -sSL https://get.daocloud.io/daotools/set_mirror.sh | sh -s http://f1361db2.m.daocloud.io

5.5 启动docker

systemctl restart docker.service

systemctl enable docker.service

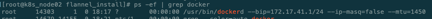

6、部署Flannel网络

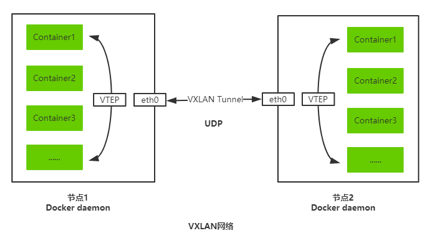

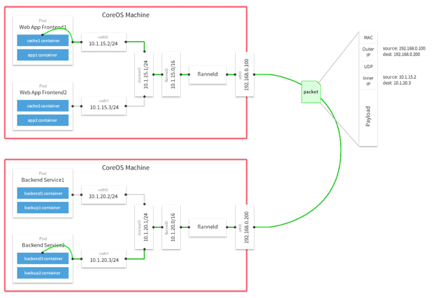

Overlay Network:覆盖网络,在基础网络上叠加的一种虚拟网络技术模式,该网络中的主机通过虚拟链路连接起来。 VXLAN:将源数据包封装到UDP中,并使用基础网络的IP/MAC作为外层报文头进行封装,然后在以太网上传输,到达目的地后由隧道端点解封装并将数据发送给目标地址。 Flannel:是Overlay网络的一种,也是将源数据包封装在另一种网络包里面进行路由转发和通信,目前已经支持UDP、VXLAN、AWS VPC和GCE路由等数据转发方式。 多主机容器网络通信其他主流方案:隧道方案( Weave、OpenvSwitch ),路由方案(Calico)等。

6.1 写入分配的子网段到etcd,供flanneld使用(master)

/opt/etcd/bin/etcdctl --ca-file=/opt/etcd/ssl/ca.pem --cert-file=/opt/etcd/ssl/server.pem --key-file=/opt/etcd/ssl/server-key.pem --endpoints=https://192.168.1.153:2379,https://192.168.1.154:2379,https://192.168.1.155:2379 set /coreos.com/network/config '{ "Network": "172.17.0.0/16", "Backend": {"Type": "vxlan"}}'

6.2 二进制包安装Flannel(node节点 flannel.sh)

下载地址:https://github.com/coreos/flannel/releases/download/

#

mkdir /opt/kubernetes/{bin,cfg,ssl} -p

cd /home/k8s_install/flannel_install/

tar -zxvf flannel-v0.10.0-linux-amd64.tar.gz

mv {flanneld,mk-docker-opts.sh} /opt/kubernetes/bin/

cd /home/k8s_install/flannel_install

chmod +x flannel.sh

chmod +x /opt/kubernetes/bin/{flanneld,mk-docker-opts.sh}

./flannel.sh https://192.168.1.153:2379,https://192.168.1.154:2379,https://192.168.1.155:2379

脚本内容如下:

#!/bin/bash

ETCD_ENDPOINTS=${1:-"http://127.0.0.1:2379"}

cat <<EOF >/opt/kubernetes/cfg/flanneld

FLANNEL_OPTIONS="--etcd-endpoints=${ETCD_ENDPOINTS} \

-etcd-cafile=/opt/etcd/ssl/ca.pem \

-etcd-certfile=/opt/etcd/ssl/server.pem \

-etcd-keyfile=/opt/etcd/ssl/server-key.pem"

EOF

cat <<EOF >/usr/lib/systemd/system/flanneld.service

[Unit]

Description=Flanneld overlay address etcd agent

After=network-online.target network.target

Before=docker.service

[Service]

Type=notify

EnvironmentFile=/opt/kubernetes/cfg/flanneld

ExecStart=/opt/kubernetes/bin/flanneld --ip-masq \$FLANNEL_OPTIONS

ExecStartPost=/opt/kubernetes/bin/mk-docker-opts.sh -k DOCKER_NETWORK_OPTIONS -d /run/flannel/subnet.env

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

cat <<EOF >/usr/lib/systemd/system/docker.service

[Unit]

Description=Docker Application Container Engine

Documentation=https://docs.docker.com

After=network-online.target firewalld.service

Wants=network-online.target

[Service]

Type=notify

EnvironmentFile=/run/flannel/subnet.env

ExecStart=/usr/bin/dockerd \$DOCKER_NETWORK_OPTIONS

ExecReload=/bin/kill -s HUP \$MAINPID

LimitNOFILE=infinity

LimitNPROC=infinity

LimitCORE=infinity

TimeoutStartSec=0

Delegate=yes

KillMode=process

Restart=on-failure

StartLimitBurst=3

StartLimitInterval=60s

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable flanneld

systemctl restart flanneld

systemctl restart docker

6.3 查看flannel是否部署成功

7、部署Master组件

mkdir /opt/kubernetes/{bin,cfg,ssl} -p

cd /home/k8s_install/k8s_master_componet

tar -zxvf kubernetes-server-linux-amd64.tar.gz

cp -r kubernetes/server/bin/{kube-scheduler,kube-controller-manager,kube-apiserver} /opt/kubernetes/bin

cp kubernetes/server/bin/kubectl /usr/bin/

7.1 Kube-apiserver部署

chmod +x apiserver.sh

./apiserver.sh 192.168.1.153 https://192.168.1.153:2379,https://192.168.1.154:2379,https://192.168.1.155:2379

脚本内容:

#!/bin/bash

MASTER_ADDRESS=$1

ETCD_SERVERS=$2

cat <<EOF >/opt/kubernetes/cfg/kube-apiserver

KUBE_APISERVER_OPTS="--logtostderr=true \\

--v=4 \\

--etcd-servers=${ETCD_SERVERS} \\

--bind-address=${MASTER_ADDRESS} \\

--secure-port=6443 \\

--advertise-address=${MASTER_ADDRESS} \\

--allow-privileged=true \\

--service-cluster-ip-range=10.0.0.0/24 \\

--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,ResourceQuota,NodeRestriction \\

--authorization-mode=RBAC,Node \\

--kubelet-https=true \\

--enable-bootstrap-token-auth \\

--token-auth-file=/opt/kubernetes/cfg/token.csv \\

--service-node-port-range=30000-50000 \\

--tls-cert-file=/opt/kubernetes/ssl/server.pem \\

--tls-private-key-file=/opt/kubernetes/ssl/server-key.pem \\

--client-ca-file=/opt/kubernetes/ssl/ca.pem \\

--service-account-key-file=/opt/kubernetes/ssl/ca-key.pem \\

--etcd-cafile=/opt/etcd/ssl/ca.pem \\

--etcd-certfile=/opt/etcd/ssl/server.pem \\

--etcd-keyfile=/opt/etcd/ssl/server-key.pem"

EOF

cat <<EOF >/usr/lib/systemd/system/kube-apiserver.service

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/opt/kubernetes/cfg/kube-apiserver

ExecStart=/opt/kubernetes/bin/kube-apiserver \$KUBE_APISERVER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable kube-apiserver

systemctl restart kube-apiserver

7.1.1 生成kube-apiserver自签证书(k8s-cert.sh)

chmod +x k8s-cert.sh

./k8s-cert.sh

cp ca.pem server.pem ca-key.pem server-key.pem /opt/kubernetes/ssl/

脚本内容如下:

cat > ca-config.json <<EOF

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"kubernetes": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

EOF

cat > ca-csr.json <<EOF

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Beijing",

"ST": "Beijing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

cfssl gencert -initca ca-csr.json | cfssljson -bare ca –

#-----------------------

cat > server-csr.json <<EOF

{

"CN": "kubernetes",

"hosts": [

"10.0.0.1",

"127.0.0.1",

"192.168.1.153",

"192.168.1.154",

"192.168.1.155",

"192.168.1.156",

"192.168.1.157",

"192.168.1.158",

"192.168.1.159",

"192.168.1.160",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes server-csr.json | cfssljson -bare server

#-----------------------

cat > admin-csr.json <<EOF

{

"CN": "admin",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "system:masters",

"OU": "System"

}

]

}

EOF

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes admin-csr.json | cfssljson -bare admin

#-----------------------

cat > kube-proxy-csr.json <<EOF

{

"CN": "system:kube-proxy",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

备注:hosts中尽量把未来可拓展的node加进来,之后就不用在重新生成自签证书了.

7.1.2 生成token证书(kube_token.sh)

sh kube_token.sh

mv token.csv /opt/kubernetes/cfg/

脚本内容如下(kube_token.sh):

# 创建 TLS Bootstrapping Token

BOOTSTRAP_TOKEN=$(head -c 16 /dev/urandom | od -An -t x | tr -d ' ')

cat > token.csv <<EOF

${BOOTSTRAP_TOKEN},kubelet-bootstrap,10001,"system:kubelet-bootstrap"

EOF

7.1.3 部署kube-apiserver配置文件及服务

cd /home/k8s_install/k8s_master_componet

chmod +x apiserver.sh

./apiserver.sh 192.168.1.153 https://192.168.1.153:2379,https://192.168.1.154:2379,https://192.168.1.155:2379

脚本内容如下(apiserver.sh):

#!/bin/bash

MASTER_ADDRESS=$1

ETCD_SERVERS=$2

cat <<EOF >/opt/kubernetes/cfg/kube-apiserver

KUBE_APISERVER_OPTS="--logtostderr=true \\

--v=4 \\

--etcd-servers=${ETCD_SERVERS} \\

--bind-address=${MASTER_ADDRESS} \\

--secure-port=6443 \\

--advertise-address=${MASTER_ADDRESS} \\

--allow-privileged=true \\

--service-cluster-ip-range=10.0.0.0/24 \\

--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,ResourceQuota,NodeRestriction \\

--authorization-mode=RBAC,Node \\

--kubelet-https=true \\

--enable-bootstrap-token-auth \\

--token-auth-file=/opt/kubernetes/cfg/token.csv \\

--service-node-port-range=30000-50000 \\

--tls-cert-file=/opt/kubernetes/ssl/server.pem \\

--tls-private-key-file=/opt/kubernetes/ssl/server-key.pem \\

--client-ca-file=/opt/kubernetes/ssl/ca.pem \\

--service-account-key-file=/opt/kubernetes/ssl/ca-key.pem \\

--etcd-cafile=/opt/etcd/ssl/ca.pem \\

--etcd-certfile=/opt/etcd/ssl/server.pem \\

--etcd-keyfile=/opt/etcd/ssl/server-key.pem"

EOF

cat <<EOF >/usr/lib/systemd/system/kube-apiserver.service

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/opt/kubernetes/cfg/kube-apiserver

ExecStart=/opt/kubernetes/bin/kube-apiserver \$KUBE_APISERVER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable kube-apiserver

systemctl restart kube-apiserver

7.2 kube-controller-manager部署

7.2.1 部署controller-manager配置文件及服务

cd /home/k8s_install/k8s_master_componet

chmod +x controller-manager.sh

./controller-manager.sh 127.0.0.1

脚本内容如下:

#!/bin/bash

MASTER_ADDRESS=$1

cat <<EOF >/opt/kubernetes/cfg/kube-controller-manager

KUBE_CONTROLLER_MANAGER_OPTS="--logtostderr=true \\

--v=4 \\

--master=${MASTER_ADDRESS}:8080 \\

--leader-elect=true \\

--address=127.0.0.1 \\

--service-cluster-ip-range=10.0.0.0/24 \\

--cluster-name=kubernetes \\

--cluster-signing-cert-file=/opt/kubernetes/ssl/ca.pem \\

--cluster-signing-key-file=/opt/kubernetes/ssl/ca-key.pem \\

--root-ca-file=/opt/kubernetes/ssl/ca.pem \\

--service-account-private-key-file=/opt/kubernetes/ssl/ca-key.pem \\

--experimental-cluster-signing-duration=87600h0m0s"

EOF

cat <<EOF >/usr/lib/systemd/system/kube-controller-manager.service

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/opt/kubernetes/cfg/kube-controller-manager

ExecStart=/opt/kubernetes/bin/kube-controller-manager \$KUBE_CONTROLLER_MANAGER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable kube-controller-manager

systemctl restart kube-controller-manager

7.3 Kube-scheduler部署

7.3.1 部署Kube-scheduler配置文件及服务

cd /home/k8s_install/k8s_master_componet

chmod +x scheduler.sh

./scheduler.sh 127.0.0.1

脚本内容如下:

#!/bin/bash

MASTER_ADDRESS=$1

cat <<EOF >/opt/kubernetes/cfg/kube-controller-manager

KUBE_CONTROLLER_MANAGER_OPTS="--logtostderr=true \\

--v=4 \\

--master=${MASTER_ADDRESS}:8080 \\

--leader-elect=true \\

--address=127.0.0.1 \\

--service-cluster-ip-range=10.0.0.0/24 \\

--cluster-name=kubernetes \\

--cluster-signing-cert-file=/opt/kubernetes/ssl/ca.pem \\

--cluster-signing-key-file=/opt/kubernetes/ssl/ca-key.pem \\

--root-ca-file=/opt/kubernetes/ssl/ca.pem \\

--service-account-private-key-file=/opt/kubernetes/ssl/ca-key.pem \\

--experimental-cluster-signing-duration=87600h0m0s"

EOF

cat <<EOF >/usr/lib/systemd/system/kube-controller-manager.service

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/opt/kubernetes/cfg/kube-controller-manager

ExecStart=/opt/kubernetes/bin/kube-controller-manager \$KUBE_CONTROLLER_MANAGER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable kube-controller-manager

systemctl restart kube-controller-manager

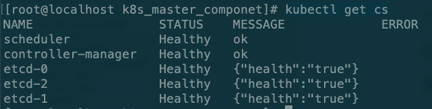

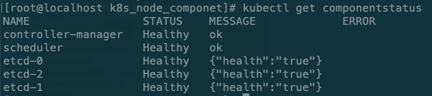

7.4 查看Master状态

kubectl get cs

8、部署Node组件

8.1 将kubelet-bootstrap用户绑定到系统集群角色(master执行)

kubectl create clusterrolebinding kubelet-bootstrap --clusterrole=system:node-bootstrapper --user=kubelet-bootstrap

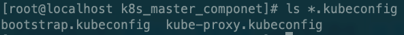

8.2 创建kubeconfig文件(存放连接apiserver认证信息,master执行)

cd /home/k8s_install/k8s_master_componet

chmod +x kubeconfig.sh

#执行之前需要保证BOOTSTRAP_TOKEN正确修改脚本BOOTSTRAP_TOKEN

cat /opt/kubernetes/cfg/token.csv

./kubeconfig.sh 192.168.1.153 /home/k8s_install/k8s_master_componet/

参数说明:param1:apiserver地址 param2:生成证书目录

脚本说明:

APISERVER=$1

SSL_DIR=$2

BOOTSTRAP_TOKEN=18a5ee2b7525d343e112807ba5f101ae

# 创建kubelet bootstrapping kubeconfig

export KUBE_APISERVER="https://$APISERVER:6443"

# 设置集群参数

kubectl config set-cluster kubernetes \

--certificate-authority=$SSL_DIR/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=bootstrap.kubeconfig

# 设置客户端认证参数

kubectl config set-credentials kubelet-bootstrap \

--token=${BOOTSTRAP_TOKEN} \

--kubeconfig=bootstrap.kubeconfig

# 设置上下文参数

kubectl config set-context default \

--cluster=kubernetes \

--user=kubelet-bootstrap \

--kubeconfig=bootstrap.kubeconfig

# 设置默认上下文

kubectl config use-context default --kubeconfig=bootstrap.kubeconfig

#----------------------

# 创建kube-proxy kubeconfig文件

kubectl config set-cluster kubernetes \

--certificate-authority=$SSL_DIR/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=kube-proxy.kubeconfig

kubectl config set-credentials kube-proxy \

--client-certificate=$SSL_DIR/kube-proxy.pem \

--client-key=$SSL_DIR/kube-proxy-key.pem \

--embed-certs=true \

--kubeconfig=kube-proxy.kubeconfig

kubectl config set-context default \

--cluster=kubernetes \

--user=kube-proxy \

--kubeconfig=kube-proxy.kubeconfig

kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig

8.3 复制kubeconfig文件到node节点

scp -r *.kubeconfig k8s_node01:/opt/kubernetes/cfg/

scp -r *.kubeconfig k8s_node02:/opt/kubernetes/cfg/

8.4 部署kubelet组件

#复制包到node节点

scp kubelet kube-proxy k8s_node01:/opt/kubernetes/bin/

cd /home/k8s_install/k8s_node_componet

chmod +x kubelet.sh

./kubelet.sh 192.168.1.154

脚本如下:

#!/bin/bash

NODE_ADDRESS=$1

DNS_SERVER_IP=${2:-"10.0.0.2"}

cat <<EOF >/opt/kubernetes/cfg/kubelet

KUBELET_OPTS="--logtostderr=true \\

--v=4 \\

--hostname-override=${NODE_ADDRESS} \\

--kubeconfig=/opt/kubernetes/cfg/kubelet.kubeconfig \\

--bootstrap-kubeconfig=/opt/kubernetes/cfg/bootstrap.kubeconfig \\

--config=/opt/kubernetes/cfg/kubelet.config \\

--cert-dir=/opt/kubernetes/ssl \\

--pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google-containers/pause-amd64:3.0"

EOF

cat <<EOF >/opt/kubernetes/cfg/kubelet.config

kind: KubeletConfiguration

apiVersion: kubelet.config.k8s.io/v1beta1

address: ${NODE_ADDRESS}

port: 10250

readOnlyPort: 10255

cgroupDriver: cgroupfs

clusterDNS:

- ${DNS_SERVER_IP}

clusterDomain: cluster.local.

failSwapOn: false

authentication:

anonymous:

enabled: true

EOF

cat <<EOF >/usr/lib/systemd/system/kubelet.service

[Unit]

Description=Kubernetes Kubelet

After=docker.service

Requires=docker.service

[Service]

EnvironmentFile=/opt/kubernetes/cfg/kubelet

ExecStart=/opt/kubernetes/bin/kubelet \$KUBELET_OPTS

Restart=on-failure

KillMode=process

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable kubelet

systemctl restart kubelet

8.5 部署kube-proxy组件

cd /home/k8s_install/k8s_node_componet

chmod +x proxy.sh

./proxy.sh 192.168.1.154

脚本如下:

#!/bin/bash

NODE_ADDRESS=$1

cat <<EOF >/opt/kubernetes/cfg/kube-proxy

KUBE_PROXY_OPTS="--logtostderr=true \\

--v=4 \\

--hostname-override=${NODE_ADDRESS} \\

--cluster-cidr=10.0.0.0/24 \\

--proxy-mode=ipvs \\

--kubeconfig=/opt/kubernetes/cfg/kube-proxy.kubeconfig"

EOF

cat <<EOF >/usr/lib/systemd/system/kube-proxy.service

[Unit]

Description=Kubernetes Proxy

After=network.target

[Service]

EnvironmentFile=-/opt/kubernetes/cfg/kube-proxy

ExecStart=/opt/kubernetes/bin/kube-proxy \$KUBE_PROXY_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable kube-proxy

systemctl restart kube-proxy

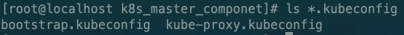

8.6 master授权node请求签名

kubectl get csr

kubectl certificate approve node-csr-aCTdx_2qXRajXlHrIlg2uPddhpexw8NRHP5EEtPllps

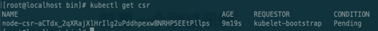

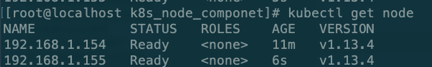

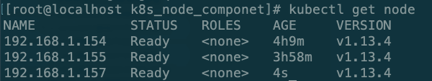

9、查询集群状态

# kubectl get node

# kubectl get componentstatus

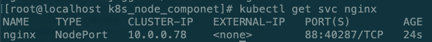

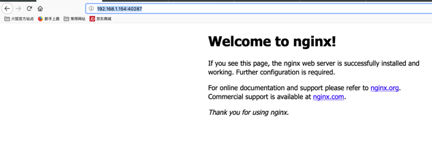

10、启动一个测试示例

# kubectl create deployment nginx --image=nginx

# kubectl get pod -o wide

# kubectl expose deployment nginx --port=88 --target-port=80 --type=NodePort

# kubectl get svc nginx

授权:

kubectl create clusterrolebinding cluster-system-anonymous --clusterrole=cluster-admin --user=system:anonymous

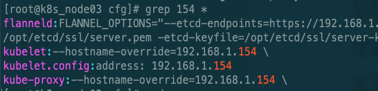

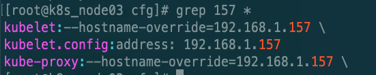

11、新增一个node节点步骤

本文以157为例子

11.1 参照三章系统常规参数配置

更新hosts(所有节点)

vim /etc/hosts

192.168.1.153 k8s_master

192.168.1.154 k8s_node01

192.168.1.155 k8s_node02

192.168.1.157 k8s_node03

11.2 安装Docker 参照第五章

11.3 从node01复制文件到node03

scp -r /opt/kubernetes/ k8s_node03:/opt/

scp /usr/lib/systemd/system/{kubelet,kube-proxy}.service k8s_node03:/usr/lib/systemd/system/

11.3.1 替换node节点ip

cd /opt/kubernetes/cfg

需要修改:kubelet,kubelet.config,kube-proxy文件ip

11.3.2 删除node01认证信息

rm -rf /opt/kubernetes/ssl/*

11.3.3 启动kubelet,kube-proxy服务

systemctl start kubelet.service

systemctl enable kubelet.service

systemctl start kube-proxy.service

systemctl enable kube-proxy.service

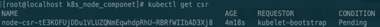

11.3.4 master授权新加入node

# kubectl get csr

kubectl certificate approve node-csr-tE3KOFUjDDu1VLUZQNmEqwhdpRhU-RBRfWIIbAD3Xj8

12、K8s高可用搭建

环境规划:

|

角色 |

IP |

组件 |

推荐配置 |

|

k8s_master etcd01 |

192.168.1.153 |

kube-apiserver kube-controller-manager kube-scheduler etcd |

CPU 2核+ 2G内存+ |

|

k8s_master02 |

192.168.1.157 |

kube-apiserver kube-controller-manager kube-scheduler etcd |

|

|

k8s_node01 etcd02 |

192.168.1.154 |

kubelet kube-proxy docker flannel etcd |

|

|

k8s_node02 etcd03 |

192.168.1.155 |

kubelet kube-proxy docker flannel etcd |

|

|

Load Balancer (Master) |

192.168.1.158 192.168.1.160 (VIP) |

Nginx L4 |

|

|

Load Balancer (Backup) |

192.168.1.159 |

Nginx L4 |

多master集群架构

12.1 高可用LB环境搭建(keepalived+nginx)

nginx安装:

rpm -Uvh http://nginx.org/packages/centos/7/noarch/RPMS/nginx-release-centos-7-0.el7.ngx.noarch.rpm

yum install -y nginx

keepalived安装:

yum -y install keepalived

nginx配置增加stream模块:

vim /etc/nginx/nginx.conf

stream {

log_format main '$remote_addr $upstream_addr - [$time_local] $status $upstream_bytes_sent';

access_log /var/log/nginx/k8s-access.log main;

upstream k8s-apiserver {

server 192.168.1.153:6443;

server 192.168.1.157:6443;

}

server {

listen 6443;

proxy_pass k8s-apiserver;

}

}

service nginx start

keepalived配置:

! Configuration File for keepalived

global_defs {

notification_email {

acassen@firewall.loc

failover@firewall.loc

sysadmin@firewall.loc

}

notification_email_from Alexandre.Cassen@firewall.loc

smtp_server 127.0.0.1

smtp_connect_timeout 30

router_id NGINX_MASTER

}

vrrp_script check_nginx {

script "/etc/nginx/check_nginx.sh"

}

vrrp_instance VI_1 {

state MASTER

interface enp0s8

virtual_router_id 51 # VRRP 路由 ID实例,每个实例是唯一的

priority 100 # 优先级,备服务器设置 90

advert_int 1 # 指定VRRP 心跳包通告间隔时间,默认1秒

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

192.168.1.160/24

}

track_script {

check_nginx

}

}

keepalived检查nginx状态脚本:

vim /etc/nginx/check_nginx.sh

count=$(ps -ef |grep nginx |egrep -cv "grep|$$")

if [ "$count" -eq 0 ];then

/etc/init.d/keepalived stop

fi

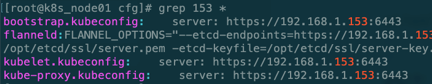

12.2 高可用Master02搭建

复制master01文件:

scp -r /opt/kubernetes/ k8s_master02:/opt/

scp -r /opt/etcd/ k8s_master02:/opt/

scp /usr/bin/kubectl k8s_master02:/usr/bin/

scp /usr/lib/systemd/system/kube-* k8s_master02:/usr/lib/systemd/system

修改配置文件(master02):

vim /opt/kubernetes/cfg/kube-apiserver

--bind-address=192.168.1.157 \

--advertise-address=192.168.1.157 \

启动Master服务:

systemctl start kube-apiserver

systemctl enable kube-apiserver

systemctl start kube-scheduler

systemctl enable kube-scheduler

systemctl start kube-controller-manager

systemctl enable kube-controller-manager

# kubectl get node

NAME STATUS ROLES AGE VERSION

192.168.1.154 Ready <none> 6h15m v1.13.4

192.168.1.155 Ready <none> 6h4m v1.13.4

12.3 Node重新指向Load Balancer

cd /opt/kubernetes/cfg

需要修改bootstrap.kubeconfig,kubelet.kubeconfig,kube-proxy.kubeconfig为VIP 192.168.1.160

重新启动服务:

systemctl restart kubelet

systemctl restart kube-proxy

12.4 测试高可用部署是否成功:

重新创建kubeconfig文件(存放连接apiserver认证信息,master01执行)

cd /home/k8s_install/k8s_master_componet/

./kubelet_new.sh

会生成一个config文件

脚本如下:

kubectl config set-cluster kubernetes --server=https://192.168.1.160:6443 --embed-certs=true --certificate-authority=ca.pem --kubeconfig=config

kubectl config set-credentials cluster-admin --certificate-authority=ca.pem --embed-certs=true --client-key=admin-key.pem --client-certificate=admin.pem --kubeconfig=config

kubectl config set-context default --cluster=kubernetes --user=cluster-admin --kubeconfig=config

kubectl config use-context default --kubeconfig=config

node01节点执行(把config,kubectl复制到node01节点):

scp /usr/bin/kubectl k8s_node01:/usr/bin/

scp config k8s_node01:/root/

kubectl --kubeconfig=/root/config get node

NAME STATUS ROLES AGE VERSION

192.168.1.154 Ready <none> 7h7m v1.13.4

192.168.1.155 Ready <none> 6h56m v1.13.4

备注:二进制包及部署脚本下载地址

链接:https://pan.baidu.com/s/1wr4y84hakxV8kncQ_ogCGw 密码:pp6c

由于博客园排版问题有需要word可以自行文档下载地址:

链接:https://pan.baidu.com/s/1b2CHnhAW45fxxf8qW-rEvw 密码:i1nc

新增HA脚本下载地址:

链接:https://pan.baidu.com/s/11lkHP1vslHBDZpR49MUYqQ 密码:ktew

kubernetes实践之一:kubernetes二进制包安装的更多相关文章

- MySQL5.7单实例二进制包安装方法

MySQL5.7单实例二进制包安装方法 一.环境 OS: CentOS release 6.9 (Final)MySQL: mysql-5.7.20-linux-glibc2.12-x86_64.ta ...

- 二进制包安装MySQL数据库

1.1二进制包安装MySQL数据库 1.1.1 安装前准备(规范) [root@Mysql_server ~]# mkdir -p /home/zhurui/tools ##创建指定工具包存放路径 [ ...

- Mysql 通用二进制包安装

通用二进制包安装 注意:这里有严格的平台问题: 使用时:centos5.5版本 (类似Windows下的绿色包) 下载(mirrors.sohu.com/mysql) 直接使用tar 解压到指 ...

- MySQL二进制包安装

mysql的安装有多种方法,这里就介绍一下二进制包安装. [root@node1 ~]# tar xvf mysql-5.7.27-linux-glibc2.12-x86_64.tar [root@n ...

- MySQL二进制包安装及启动问题排查

环境部署:VMware10.0+CentOS6.9(64位)+MySQL5.7.19(64位)一.操作系统调整 # 更改时区 .先查看时区 [root@localhost ~]# date -R Tu ...

- liunx系统二进制包安装编译mysql数据库

liunx系统二进制包安装编译mysql数据库 # 解压二进制压缩包 [root@localhost ~]# tar xf mysql-5.5.32-linux2.6-x86_64.tar.gz -C ...

- CentOS8.1操作系下使用通用二进制包安装MySQL8.0(实践整理自MySQL官方)

写在前的的话: 在IT技术日新月异的今天,老司机也可能在看似熟悉的道路上翻车,甚至是大型翻车现场!自己一个人开车过去翻个车不可怕,可怕的是带着整个团队甚至是整个公司一起翻车山崖下,解决办法就是:新出现 ...

- [Mongodb] Tarball二进制包安装过程

一.缘由: 用在线安装的方式安装mongodb,诚然很方便.但文件过于分散,如果在单机多实例的情况下,就不方便管理. 对于数据库的管理,我习惯将所有数据(配置)文件放在一个地方,方便查找区分. 二.解 ...

- mysql二进制包安装与配置实战记录

导读 一般中小型网站的开发都选择 MySQL 作为网站数据库,由于其社区版的性能卓越,搭配 PHP .Linux和 Apache 可组成良好的开发环境,经过多年的web技术发展,在业内被广泛使用的一种 ...

随机推荐

- TensorFlow图像处理API

TensorFlow提供了一些常用的图像处理接口,可以让我们方便的对图像数据进行操作,以下首先给出一段显示原始图片的代码,然后在此基础上,实践TensorFlow的不同API. 显示原始图片 impo ...

- python爬虫入门(三)XPATH和BeautifulSoup4

XML和XPATH 用正则处理HTML文档很麻烦,我们可以先将 HTML文件 转换成 XML文档,然后用 XPath 查找 HTML 节点或元素. XML 指可扩展标记语言(EXtensible Ma ...

- Python进阶开发之网络编程,socket实现在线聊天机器人

系列文章 √第一章 元类编程,已完成 ; √第二章 网络编程,已完成 ; 本文目录 什么是socket?创建socket客户端创建socket服务端socket工作流程图解socket公共函数汇总实战 ...

- arcEngine开发之根据点坐标创建Shp图层

思路 根据点坐标创建Shapefile文件大致思路是这样的: (1)创建表的工作空间,通过 IField.IFieldsEdit.IField 等接口创建属性字段,添加到要素集中. (2)根据获取点的 ...

- css绝对底部的实现方法

最近发现公司做的好多管理系统也存在这样的问题,当页面不够长的时候,页尾也跟着跑到了页面中部,这样确实感觉视觉体验不太好,没有研究之前还真不知道还能用css实现,主要利用min-height;paddi ...

- Zookeeper vs etcd vs Consul

Zookeeper vs etcd vs Consul [编者的话]本文对比了Zookeeper.etcd和Consul三种服务发现工具,探讨了最佳的服务发现解决方案,仅供参考. 如果使用预定义的端口 ...

- Debian9桌面设置

本文由荒原之梦原创,原文链接:http://zhaokaifeng.com/?p=665 新安装的Debian9桌面上啥都没有,就像这样: 图 1 虽然很简洁,但是用着不是很方便,下面我们就通过一些设 ...

- sql server按符号截取字符串

http://www.360doc.com/content/12/0626/13/1912775_220523992.shtml

- 超实用的JavaScript代码段 Item5 --图片滑动效果实现

先上图 鼠标滑过那张图,显示完整的哪张图,移除则复位: 简单的CSS加JS操作DOM实现: <!doctype html> <html> <head> <me ...

- javascript项目实战---ajax实现无刷新分页

分页: limit 偏移量,长度; limit 0,7; 第一页 limit 7,7; 第二页 limit 14,7; 第三页 每页信息条数:7 信息总条数:select count(*) from ...