matlab(7) Regularized logistic regression: mapFeature (expanding the feature set) and costFunctionReg

Excerpt from ex2_reg.m

%% =========== Part 1: Regularized Logistic Regression ============

% In this part, you are given a dataset with data points that are not

% linearly separable. However, you would still like to use logistic

% regression to classify the data points.

%

% To do so, you introduce more features to use -- in particular, you add

% polynomial features to our data matrix (similar to polynomial

% regression).

%

% Add Polynomial Features

% Note that mapFeature also adds a column of ones for us, so the intercept

% term is handled

X = mapFeature(X(:,1), X(:,2)); % calls mapFeature(X1, X2), defined in mapFeature.m below

% mapFeature maps the two features x1 and x2 to all polynomial terms up to degree 6 (28 features).

% This allows a more complex decision boundary, but it can also lead to overfitting, depending on the value of λ.

% After the call, X is a 118x28 matrix (118 examples, 28 features, including the leading column of ones)

% Initialize fitting parameters

initial_theta = zeros(size(X, 2), 1); % initial_theta is 28x1

% Set regularization parameter lambda to 1

lambda = 1; % λ = 1. With λ = 0 there is no regularization and the model overfits; with λ = 100 there is too much regularization and the model underfits.

% Compute and display initial cost and gradient for regularized logistic

% regression

[cost, grad] = costFunctionReg(initial_theta, X, y, lambda); % calls costFunctionReg(theta, X, y, lambda), defined in costFunctionReg.m

fprintf('Cost at initial theta (zeros): %f\n', cost); % prints the cost at the initial theta (all zeros)

fprintf('\nProgram paused. Press enter to continue.\n');

pause;
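As a sanity check on the value printed above: when theta is all zeros, the sigmoid returns 0.5 for every example and the regularization term vanishes, so the cost equals log(2) ≈ 0.693 regardless of the data. The sketch below illustrates this with a small made-up dataset (the values of `X` and `y` are hypothetical, not taken from ex2data2.txt) and an inline sigmoid instead of the course's `sigmoid.m`.

```matlab
% Hypothetical toy data: first column is the intercept term
X = [1 0.5 -1.2; 1 -0.3 0.8; 1 1.1 0.4; 1 -0.7 -0.9];
y = [1; 0; 1; 0];

theta = zeros(size(X, 2), 1);   % same initialization as initial_theta
lambda = 1;
m = length(y);

h = 1 ./ (1 + exp(-X * theta)); % inline sigmoid; every entry is 0.5 here

% Regularized cost; theta(1) is excluded from the penalty
J = (1/m) * (-y' * log(h) - (1 - y)' * log(1 - h)) ...
    + lambda/(2*m) * sum(theta(2:end).^2);

fprintf('Cost at zero theta: %f (log(2) = %f)\n', J, log(2));
```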

mapFeature.m

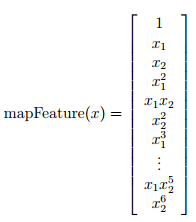

function out = mapFeature(X1, X2)

% MAPFEATURE Feature mapping function to polynomial features

%

% MAPFEATURE(X1, X2) maps the two input features

% to polynomial features (all terms up to the sixth degree) used in the regularization exercise.

%

% Returns a new feature array with more features, comprising

% X1, X2, X1.^2, X1.*X2, X2.^2, X1.^3, etc.

%

% Inputs X1, X2 must be the same size

%

degree = 6; %map the features into all polynomial terms of x1 and x2 up to the sixth power

out = ones(size(X1(:,1)));

for i = 1:degree

    for j = 0:i

        out(:, end+1) = (X1.^(i-j)).*(X2.^j);

    end

end

end
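The figure of 28 features follows from the loop structure: for each total degree i from 1 to 6 there are i + 1 terms, plus the column of ones, which gives (6 + 1)(6 + 2)/2 = 28. The following sketch repeats the same loop inline on a few made-up sample values (`x1` and `x2` are hypothetical) and records the exponent pair of each column; the `exponents` bookkeeping is added for illustration and is not part of mapFeature.m.

```matlab
degree = 6;
x1 = [0.5; -1; 2];      % hypothetical sample values
x2 = [1.5; 0.25; -3];

out = ones(size(x1));   % column of ones (intercept term)
exponents = [0 0];      % [power of x1, power of x2] for each column

for i = 1:degree
    for j = 0:i
        out(:, end+1) = (x1.^(i-j)) .* (x2.^j);
        exponents(end+1, :) = [i-j, j];
    end
end

numFeatures = size(out, 2);
expected = (degree + 1) * (degree + 2) / 2;
fprintf('Columns generated: %d, closed form: %d\n', numFeatures, expected);
```

The first columns come out in the order 1, x1, x2, x1^2, x1*x2, x2^2, which matches the order of the rows in `exponents`.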

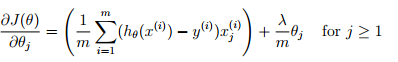

costFunctionReg.m

function [J, grad] = costFunctionReg(theta, X, y, lambda)

%COSTFUNCTIONREG Compute cost and gradient for logistic regression with regularization

% J = COSTFUNCTIONREG(theta, X, y, lambda) computes the cost of using

% theta as the parameter for regularized logistic regression and the

% gradient of the cost w.r.t. the parameters.

% Initialize some useful values

m = length(y); % number of training examples

% You need to return the following variables correctly

J = 0;

grad = zeros(size(theta));

% ====================== YOUR CODE HERE ======================

% Instructions: Compute the cost of a particular choice of theta.

% You should set J to the cost.

% Compute the partial derivatives and set grad to the partial

% derivatives of the cost w.r.t. each parameter in theta

J = 1/m*(-1*y'*log(sigmoid(X*theta)) - (ones(1,m)-y')*log(ones(m,1)-sigmoid(X*theta)))...

+ lambda/(2*m) * (theta(2:end,:))' * theta(2:end,:); %Note that you should not regularize the parameter θ0.

% The expression above is the regularized cost function.

grad = 1/m * (X' * (sigmoid(X*theta) - y)) + (lambda/m)*theta; % regularized gradient for every parameter

grad(1) = 1/m * (X(:,1))' * (sigmoid(X*theta) - y); % overwrite the θ0 component without the regularization term

% Note that you should not regularize the parameter θ0.

% =============================================================

end
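For reference, the code in costFunctionReg.m corresponds to the standard regularized logistic regression formulas, written out here as a reconstruction from the code itself. The notation is an assumption: $h_\theta(x) = 1/(1 + e^{-\theta^T x})$ is the sigmoid hypothesis, $m$ is the number of training examples, $n$ is the number of non-intercept features, and $\theta_0$ corresponds to `theta(1)` in MATLAB indexing.

```latex
J(\theta) = \frac{1}{m} \sum_{i=1}^{m} \left[ -y^{(i)} \log h_\theta(x^{(i)})
            - \left(1 - y^{(i)}\right) \log\left(1 - h_\theta(x^{(i)})\right) \right]
            + \frac{\lambda}{2m} \sum_{j=1}^{n} \theta_j^2

\frac{\partial J}{\partial \theta_0} = \frac{1}{m} \sum_{i=1}^{m}
            \left( h_\theta(x^{(i)}) - y^{(i)} \right) x_0^{(i)}

\frac{\partial J}{\partial \theta_j} = \frac{1}{m} \sum_{i=1}^{m}
            \left( h_\theta(x^{(i)}) - y^{(i)} \right) x_j^{(i)}
            + \frac{\lambda}{m} \theta_j \qquad (j \ge 1)
```

The penalty sum starts at $j = 1$, which is why the code uses `theta(2:end,:)` in the cost and recomputes `grad(1)` without the `(lambda/m)*theta` term.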
