Wu Yuxiong, Python Machine Learning: the KNN Algorithm (1)

import numpy as np

import operator as op

from os import listdir def classify0(inX, dataSet, labels, k):

dataSetSize = dataSet.shape[0]

diffMat = np.tile(inX, (dataSetSize,1)) - dataSet

sqDiffMat = diffMat**2

sqDistances = sqDiffMat.sum(axis=1)

distances = sqDistances**0.5

sortedDistIndicies = distances.argsort()

classCount={}

for i in range(k):

voteIlabel = labels[sortedDistIndicies[i]]

classCount[voteIlabel] = classCount.get(voteIlabel,0) + 1

sortedClassCount = sorted(classCount.items(), key=op.itemgetter(1), reverse=True)

return sortedClassCount[0][0] def createDataSet():

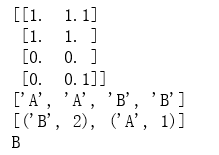

group = np.array([[1.0,1.1],[1.0,1.0],[0,0],[0,0.1]])

labels = ['A','A','B','B']

return group, labels data,labels = createDataSet()

print(data)

print(labels) test = np.array([[0,0.5]])

result = classify0(test,data,labels,3)

print(result)
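
The same vote can be written more compactly. The sketch below is an assumed alternative, not the code from this post: it relies on NumPy broadcasting instead of `np.tile` and on `collections.Counter` for the tally. The name `knn_classify` is made up for illustration.

```python
from collections import Counter

import numpy as np


def knn_classify(query, train, labels, k):
    """Hypothetical variant of classify0: majority vote among the k nearest rows."""
    query = np.asarray(query, dtype=float).reshape(1, -1)
    train = np.asarray(train, dtype=float)
    # Broadcasting subtracts the query from every training row at once
    distances = np.sqrt(((train - query) ** 2).sum(axis=1))
    nearest = np.argsort(distances)[:k]
    votes = Counter(labels[i] for i in nearest)
    return votes.most_common(1)[0][0]


group = np.array([[1.0, 1.1], [1.0, 1.0], [0, 0], [0, 0.1]])
labels = ['A', 'A', 'B', 'B']
print(knn_classify([0, 0.5], group, labels, 3))  # B
```

For the query `[0, 0.5]` the three nearest rows are the two `B` points and one `A` point, so the vote is `B`, the same answer `classify0` gives.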

import numpy as np

import operator as op

from os import listdir def classify0(inX, dataSet, labels, k):

dataSetSize = dataSet.shape[0]

diffMat = np.tile(inX, (dataSetSize,1)) - dataSet

sqDiffMat = diffMat**2

sqDistances = sqDiffMat.sum(axis=1)

distances = sqDistances**0.5

sortedDistIndicies = distances.argsort()

classCount={}

for i in range(k):

voteIlabel = labels[sortedDistIndicies[i]]

classCount[voteIlabel] = classCount.get(voteIlabel,0) + 1

sortedClassCount = sorted(classCount.items(), key=op.itemgetter(1), reverse=True)

return sortedClassCount[0][0] def file2matrix(filename):

fr = open(filename)

returnMat = []

classLabelVector = [] #prepare labels return

for line in fr.readlines():

line = line.strip()

listFromLine = line.split('\t')

returnMat.append([float(listFromLine[0]),float(listFromLine[1]),float(listFromLine[2])])

classLabelVector.append(int(listFromLine[-1]))

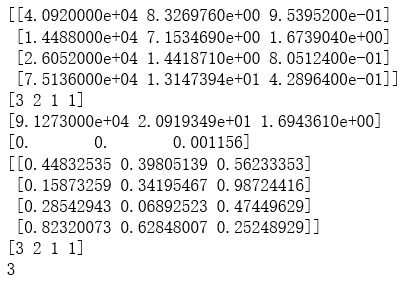

return np.array(returnMat),np.array(classLabelVector) trainData,trainLabel = file2matrix("D:\\LearningResource\\machinelearninginaction\\Ch02\\datingTestSet2.txt")

print(trainData[0:4])

print(trainLabel[0:4]) def autoNorm(dataSet):

minVals = dataSet.min(0)

maxVals = dataSet.max(0)

ranges = maxVals - minVals

normDataSet = np.zeros(np.shape(dataSet))

m = dataSet.shape[0]

normDataSet = dataSet - np.tile(minVals, (m,1))

normDataSet = normDataSet/np.tile(ranges, (m,1)) #element wise divide

return normDataSet, ranges, minVals normDataSet, ranges, minVals = autoNorm(trainData)

print(ranges)

print(minVals)

print(normDataSet[0:4])

print(trainLabel[0:4]) testData = np.array([[0.5,0.3,0.5]])

result = classify0(testData, normDataSet, trainLabel, 5)

print(result)
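
The normalization step can also be expressed with broadcasting. This is a minimal sketch under assumptions, not the post's code: the function name `min_max_normalize`, the guard for constant columns, and the sample rows are all made up for illustration.

```python
import numpy as np


def min_max_normalize(data):
    """Hypothetical broadcasting version of autoNorm; returns (normalized, ranges, mins)."""
    data = np.asarray(data, dtype=float)
    mins = data.min(axis=0)
    ranges = data.max(axis=0) - mins
    # Assumption: a constant column is mapped to 0 instead of dividing by zero
    safe_ranges = np.where(ranges == 0, 1.0, ranges)
    return (data - mins) / safe_ranges, ranges, mins


# Made-up rows with very different column scales
sample = np.array([[30000.0, 9.0, 0.5],
                   [10000.0, 3.0, 1.5],
                   [20000.0, 6.0, 1.0]])
normed, ranges, mins = min_max_normalize(sample)
print(normed)

# A new query must be scaled with the training minimums and ranges
query = (np.array([15000.0, 4.5, 0.75]) - mins) / ranges
print(query)
```

Without scaling, the column with the largest numeric range would dominate the Euclidean distance, which is why the query has to go through the same transformation as the training rows.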

import numpy as np

import operator as op

from os import listdir def classify0(inX, dataSet, labels, k):

dataSetSize = dataSet.shape[0]

diffMat = np.tile(inX, (dataSetSize,1)) - dataSet

sqDiffMat = diffMat**2

sqDistances = sqDiffMat.sum(axis=1)

distances = sqDistances**0.5

sortedDistIndicies = distances.argsort()

classCount={}

for i in range(k):

voteIlabel = labels[sortedDistIndicies[i]]

classCount[voteIlabel] = classCount.get(voteIlabel,0) + 1

sortedClassCount = sorted(classCount.items(), key=op.itemgetter(1), reverse=True)

return sortedClassCount[0][0] def file2matrix(filename):

fr = open(filename)

returnMat = []

classLabelVector = [] #prepare labels return

for line in fr.readlines():

line = line.strip()

listFromLine = line.split('\t')

returnMat.append([float(listFromLine[0]),float(listFromLine[1]),float(listFromLine[2])])

classLabelVector.append(listFromLine[-1])

return np.array(returnMat),np.array(classLabelVector) def autoNorm(dataSet):

minVals = dataSet.min(0)

maxVals = dataSet.max(0)

ranges = maxVals - minVals

normDataSet = np.zeros(np.shape(dataSet))

m = dataSet.shape[0]

normDataSet = dataSet - np.tile(minVals, (m,1))

normDataSet = normDataSet/np.tile(ranges, (m,1)) #element wise divide

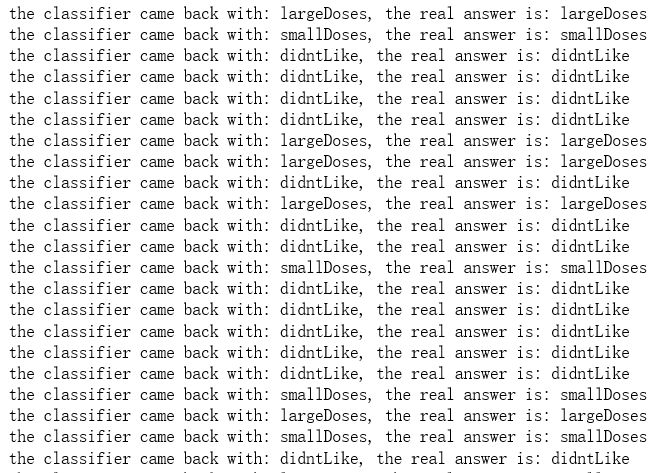

return normDataSet, ranges, minVals normDataSet, ranges, minVals = autoNorm(trainData) def datingClassTest():

hoRatio = 0.10 #hold out 10%

datingDataMat,datingLabels = file2matrix("D:\\LearningResource\\machinelearninginaction\\Ch02\\datingTestSet.txt")

normMat, ranges, minVals = autoNorm(datingDataMat)

m = normMat.shape[0]

numTestVecs = int(m*hoRatio)

errorCount = 0.0

for i in range(numTestVecs):

classifierResult = classify0(normMat[i,:],normMat[numTestVecs:m,:],datingLabels[numTestVecs:m],3)

print(('the classifier came back with: %s, the real answer is: %s') % (classifierResult, datingLabels[i]))

if (classifierResult != datingLabels[i]):

errorCount += 1.0

print(('the total error rate is: %f') % (errorCount/float(numTestVecs)))

print(errorCount) datingClassTest()
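
The hold-out test above needs the data file on a local drive. As a rough sketch of the same evaluation that runs without it, the example below uses synthetic clusters as a stand-in for the dating data. Everything here is an assumption for illustration: the helper names `knn_predict` and `holdout_error_rate`, the cluster centers, the labels `'a'` and `'b'`, and the random seed.

```python
from collections import Counter

import numpy as np


def knn_predict(query, train, labels, k):
    """Majority vote among the k nearest training rows (Euclidean distance)."""
    distances = np.sqrt(((train - query) ** 2).sum(axis=1))
    nearest = np.argsort(distances)[:k]
    return Counter(labels[i] for i in nearest).most_common(1)[0][0]


def holdout_error_rate(data, labels, ratio=0.10, k=3):
    """Use the first `ratio` share of rows as the test set, the rest as training."""
    n_test = int(len(data) * ratio)
    errors = 0
    for i in range(n_test):
        predicted = knn_predict(data[i], data[n_test:], labels[n_test:], k)
        if predicted != labels[i]:
            errors += 1
    return errors / n_test


# Synthetic stand-in data: two well-separated clusters with made-up labels
rng = np.random.default_rng(0)
points = np.vstack([rng.normal(0.0, 0.5, size=(100, 3)),
                    rng.normal(10.0, 0.5, size=(100, 3))])
names = np.array(['a'] * 100 + ['b'] * 100)
order = rng.permutation(len(points))  # shuffle so the hold-out rows mix both classes
points, names = points[order], names[order]
print(holdout_error_rate(points, names))
```

Shuffling before the split matters when the file is sorted by class, since taking the first 10% of a sorted file would test only one class.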

import numpy as np

import operator as op

from os import listdir def classify0(inX, dataSet, labels, k):

dataSetSize = dataSet.shape[0]

diffMat = np.tile(inX, (dataSetSize,1)) - dataSet

sqDiffMat = diffMat**2

sqDistances = sqDiffMat.sum(axis=1)

distances = sqDistances**0.5

sortedDistIndicies = distances.argsort()

classCount={}

for i in range(k):

voteIlabel = labels[sortedDistIndicies[i]]

classCount[voteIlabel] = classCount.get(voteIlabel,0) + 1

sortedClassCount = sorted(classCount.items(), key=op.itemgetter(1), reverse=True)

return sortedClassCount[0][0] def file2matrix(filename):

fr = open(filename)

returnMat = []

classLabelVector = [] #prepare labels return

for line in fr.readlines():

line = line.strip()

listFromLine = line.split('\t')

returnMat.append([float(listFromLine[0]),float(listFromLine[1]),float(listFromLine[2])])

classLabelVector.append(listFromLine[-1])

return np.array(returnMat),np.array(classLabelVector) def autoNorm(dataSet):

minVals = dataSet.min(0)

maxVals = dataSet.max(0)

ranges = maxVals - minVals

normDataSet = np.zeros(np.shape(dataSet))

m = dataSet.shape[0]

normDataSet = dataSet - np.tile(minVals, (m,1))

normDataSet = normDataSet/np.tile(ranges, (m,1)) #element wise divide

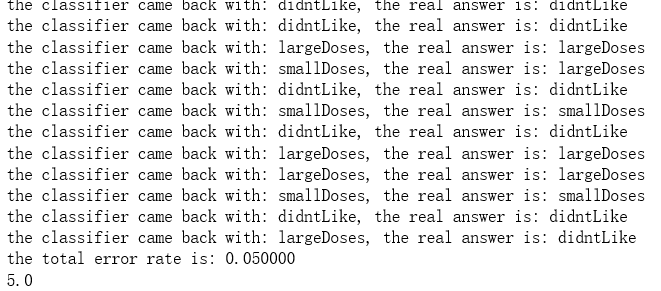

return normDataSet, ranges, minVals normDataSet, ranges, minVals = autoNorm(trainData) def datingClassTest():

hoRatio = 0.10 #hold out 10%

datingDataMat,datingLabels = file2matrix("D:\\LearningResource\\machinelearninginaction\\Ch02\\datingTestSet.txt")

normMat, ranges, minVals = autoNorm(datingDataMat)

m = normMat.shape[0]

numTestVecs = int(m*hoRatio)

errorCount = 0.0

for i in range(numTestVecs):

classifierResult = classify0(normMat[i,:],normMat[numTestVecs:m,:],datingLabels[numTestVecs:m],3)

print(('the classifier came back with: %s, the real answer is: %s') % (classifierResult, datingLabels[i]))

if (classifierResult != datingLabels[i]):

errorCount += 1.0

print(('the total error rate is: %f') % (errorCount/float(numTestVecs)))

print(errorCount) datingClassTest()


import numpy as np

import operator as op

from os import listdir def classify0(inX, dataSet, labels, k):

dataSetSize = dataSet.shape[0]

diffMat = np.tile(inX, (dataSetSize,1)) - dataSet

sqDiffMat = diffMat**2

sqDistances = sqDiffMat.sum(axis=1)

distances = sqDistances**0.5

sortedDistIndicies = distances.argsort()

classCount={}

for i in range(k):

voteIlabel = labels[sortedDistIndicies[i]]

classCount[voteIlabel] = classCount.get(voteIlabel,0) + 1

sortedClassCount = sorted(classCount.items(), key=op.itemgetter(1), reverse=True)

return sortedClassCount[0][0] def file2matrix(filename):

fr = open(filename)

returnMat = []

classLabelVector = [] #prepare labels return

for line in fr.readlines():

line = line.strip()

listFromLine = line.split('\t')

returnMat.append([float(listFromLine[0]),float(listFromLine[1]),float(listFromLine[2])])

classLabelVector.append(int(listFromLine[-1]))

return np.array(returnMat),np.array(classLabelVector) def autoNorm(dataSet):

minVals = dataSet.min(0)

maxVals = dataSet.max(0)

ranges = maxVals - minVals

normDataSet = np.zeros(np.shape(dataSet))

m = dataSet.shape[0]

normDataSet = dataSet - np.tile(minVals, (m,1))

normDataSet = normDataSet/np.tile(ranges, (m,1)) #element wise divide

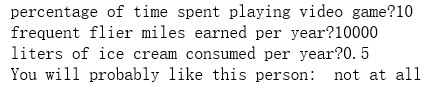

return normDataSet, ranges, minVals def classifyPerson():

resultList = ["not at all", "in samll doses", "in large doses"]

percentTats = float(input("percentage of time spent playing video game?"))

ffMiles = float(input("frequent flier miles earned per year?"))

iceCream = float(input("liters of ice cream consumed per year?"))

testData = np.array([percentTats,ffMiles,iceCream])

trainData,trainLabel = file2matrix("D:\\LearningResource\\machinelearninginaction\\Ch02\\datingTestSet2.txt")

normDataSet, ranges, minVals = autoNorm(trainData)

result = classify0((testData-minVals)/ranges, normDataSet, trainLabel, 3)

print("You will probably like this person: ",resultList[result-1]) classifyPerson()


import numpy as np

import operator as op

from os import listdir def classify0(inX, dataSet, labels, k):

dataSetSize = dataSet.shape[0]

diffMat = np.tile(inX, (dataSetSize,1)) - dataSet

sqDiffMat = diffMat**2

sqDistances = sqDiffMat.sum(axis=1)

distances = sqDistances**0.5

sortedDistIndicies = distances.argsort()

classCount={}

for i in range(k):

voteIlabel = labels[sortedDistIndicies[i]]

classCount[voteIlabel] = classCount.get(voteIlabel,0) + 1

sortedClassCount = sorted(classCount.items(), key=op.itemgetter(1), reverse=True)

return sortedClassCount[0][0] def img2vector(filename):

returnVect = []

fr = open(filename)

for i in range(32):

lineStr = fr.readline()

for j in range(32):

returnVect.append(int(lineStr[j]))

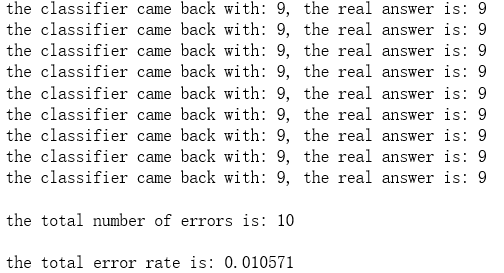

return np.array([returnVect]) def handwritingClassTest():

hwLabels = []

trainingFileList = listdir('D:\\LearningResource\\machinelearninginaction\\Ch02\\trainingDigits') #load the training set

m = len(trainingFileList)

trainingMat = np.zeros((m,1024))

for i in range(m):

fileNameStr = trainingFileList[i]

fileStr = fileNameStr.split('.')[0] #take off .txt

classNumStr = int(fileStr.split('_')[0])

hwLabels.append(classNumStr)

trainingMat[i,:] = img2vector('D:\\LearningResource\\machinelearninginaction\\Ch02\\trainingDigits\\%s' % fileNameStr)

testFileList = listdir('D:\\LearningResource\\machinelearninginaction\\Ch02\\testDigits') #iterate through the test set

mTest = len(testFileList)

errorCount = 0.0

for i in range(mTest):

fileNameStr = testFileList[i]

fileStr = fileNameStr.split('.')[0] #take off .txt

classNumStr = int(fileStr.split('_')[0])

vectorUnderTest = img2vector('D:\\LearningResource\\machinelearninginaction\\Ch02\\testDigits\\%s' % fileNameStr)

classifierResult = classify0(vectorUnderTest, trainingMat, hwLabels, 3)

print("the classifier came back with: %d, the real answer is: %d" % (classifierResult, classNumStr))

if (classifierResult != classNumStr):

errorCount += 1.0

print("\nthe total number of errors is: %d" % errorCount)

print("\nthe total error rate is: %f" % (errorCount/float(mTest))) handwritingClassTest()
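
To try the image-to-vector step without the digit folders, the sketch below writes a throwaway 32x32 text image to a temporary file and reads it back. It is an illustration under assumptions: `load_digit` is a hypothetical variant of `img2vector`, and the vertical-bar pattern is made up.

```python
import os
import tempfile

import numpy as np


def load_digit(path, size=32):
    """Hypothetical variant of img2vector: read a size x size grid of 0/1 characters."""
    with open(path) as fr:
        rows = [[int(ch) for ch in line.strip()[:size]] for line in fr][:size]
    return np.array(rows).reshape(1, size * size)


# Build a throwaway 32x32 "image": a vertical bar four columns wide
lines = ["0" * 14 + "1" * 4 + "0" * 14 for _ in range(32)]
with tempfile.NamedTemporaryFile("w", suffix=".txt", delete=False) as tmp:
    tmp.write("\n".join(lines) + "\n")
vector = load_digit(tmp.name)
os.remove(tmp.name)
print(vector.shape, int(vector.sum()))  # (1, 1024) 128
```

Each of the 32 rows contributes four ones, so the flattened vector has 1024 entries that sum to 128.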
