Distributed Deep Learning

安利一下刘铁岩老师的《分布式机器学习》这本书

以及一个大神的blog:

https://zhuanlan.zhihu.com/p/29032307

https://zhuanlan.zhihu.com/p/30976469

分布式深度学习原理

在很多教程中都有介绍DL training的原理。我们来简单回顾一下:

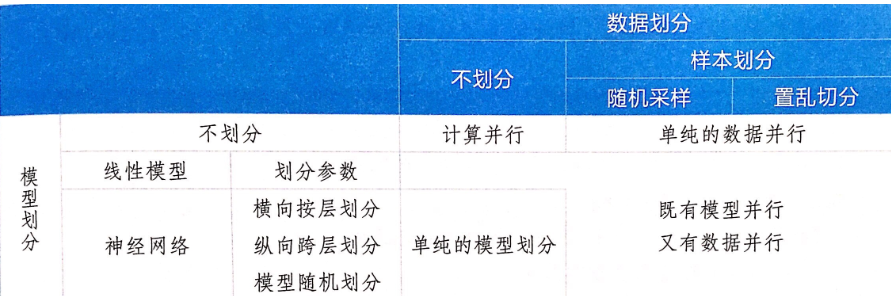

那么如果scale太大,需要分布式呢?分布式机器学习大致有以下几个思路:

- 对于计算量太大的场景(计算并行),可以多线程/多节点并行计算。常用的一个算法就是同步随机梯度下降(synchronous stochastic gradient descent),含义大致相当于K个(K是节点数)mini-batch SGD [ch6.2]

- 对于训练数据太多的场景(数据并行,也是最主要的场景),需要将数据划分到多个节点上训练。每个节点先用本地的数据先训练出一个子模型,同时和其他节点保持通信(比如更新参数)以保证最终可以有效整合来自各个节点的训练结果,并得到全局的ML模型。 [ch6.3]

- 对于模型太大的场景,需要把模型(例如NN中的不同层)划分到不同节点上进行训练。此时不同节点之间可能需要频繁的sync。 [ch6.4]

它们可以总结为下图:

以数据并行为例,整个pipeline如下:

- 划分数据到不同节点

- 每个节点单机训练

- 节点之间的通信以及整个拓扑结构设计 【ch7】

- 多个训练好的子模型的聚合 【ch8】

Distributed DL model

目前工业界常见的Distributed DL方法有以下三种:【ch7.3】

1. PyTorch: AllReduce Model

MPI is a common method of distributed computing framework to implement distributed machine learning system. The main idea is to use AllReduce API to synchronize message and it also supports operations which satisfy Reduce rules. The common polymerization method for machine learning models is addition and average, so AllReduce logic is suitable to deal with it. The standard API of AllReduce have various implemented methods.

AllReduce mode is simple and convenient which is beneficial for paralleling training in synchronization algorithm. Till now, there are many deep learning systems still use it to complete communication function in distributed training, such as gloo communication library from Caffe2, DeepSpeech system in Baidu and NCCL communication library in Nvidia.

However, AllReduce can only support synchronizing communication and the logic of all working nodes are same which means every working node should handle completed model. It is unsuitable for large scale model.

Limitation of AllReduce:

When working nodes in system is increasing and the computing is unbalance, the training speed is decided by the slowest node in this system; once a working node does not work, the whole system has to stop.

Also, when the number of parameters of models in machine learning task is too large, it will exceed the memory capacity of single machine.

2. MXNet: Parameter Server Model

In the parameter server framework, all nodes in system are divided into worker and server logically. The main task of each worker is to take charge of local training task and communicate with parameter server through server interface. In this way, they can obtain latest model parameters from parameter server or send latest local training model to parameter server. With this parameter server, machine learning can be synchronous or asynchronous, or even mixed.

3. TensorFlow: Dataflow Model

Computational graph model in TensorFlow: Computation is described as a directed acyclic data flow graph. The nodes in the figure represent compute nodes and the edges represent data flow.

Distributed machine learning system based on data flow draws on the flexibility of DAG-based big data processing system, it describes the computing task as a directed acyclic data flow graph. The nodes in the figure represent the operations on the data and the edges in the figure represent the dependencies of the operation.

The system automatically provides distributed execution of the dataflow graph, so the user cares about how to design the appropriate dataflow graph to represent the algorithmic logic that is to be executed.

Below, it will take a data flow diagram representing the data flow system in TensorFlow as an example to introduce a typical data flow diagram.

分布式机器学习算法

【ch9】

Distributed Deep Learning的更多相关文章

- (转)分布式深度学习系统构建 简介 Distributed Deep Learning

HOME ABOUT CONTACT SUBSCRIBE VIA RSS DEEP LEARNING FOR ENTERPRISE Distributed Deep Learning, Part ...

- 英特尔深度学习框架BigDL——a distributed deep learning library for Apache Spark

BigDL: Distributed Deep Learning on Apache Spark What is BigDL? BigDL is a distributed deep learning ...

- CoRR 2018 | Horovod: Fast and Easy Distributed Deep Learning in Tensorflow

将深度学习模型的训练从单GPU扩展到多GPU主要面临以下问题:(1)训练框架必须支持GPU间的通信,(2)用户必须更改大量代码以使用多GPU进行训练.为了克服这些问题,本文提出了Horovod,它通过 ...

- Install PaddlePaddle (Parallel Distributed Deep Learning)

Step 1: Install docker on your linux system (My linux is fedora) https://docs.docker.com/engine/inst ...

- NeurIPS 2017 | TernGrad: Ternary Gradients to Reduce Communication in Distributed Deep Learning

在深度神经网络的分布式训练中,梯度和参数同步时的网络开销是一个瓶颈.本文提出了一个名为TernGrad梯度量化的方法,通过将梯度三值化为\({-1, 0, 1}\)来减少通信量.此外,本文还使用逐层三 ...

- 【深度学习Deep Learning】资料大全

最近在学深度学习相关的东西,在网上搜集到了一些不错的资料,现在汇总一下: Free Online Books by Yoshua Bengio, Ian Goodfellow and Aaron C ...

- (转) Awesome Deep Learning

Awesome Deep Learning Table of Contents Free Online Books Courses Videos and Lectures Papers Tutori ...

- 机器学习(Machine Learning)&深度学习(Deep Learning)资料

<Brief History of Machine Learning> 介绍:这是一篇介绍机器学习历史的文章,介绍很全面,从感知机.神经网络.决策树.SVM.Adaboost到随机森林.D ...

- 机器学习(Machine Learning)&深入学习(Deep Learning)资料

<Brief History of Machine Learning> 介绍:这是一篇介绍机器学习历史的文章,介绍很全面,从感知机.神经网络.决策树.SVM.Adaboost 到随机森林. ...

随机推荐

- jQery Datatables回调函数中文

Datatables——回调函数 ------------------------------------------------- fnCookieCallback:还没有使用过 $(documen ...

- BZOJ 4821: [Sdoi2017]相关分析 线段树 + 卡精

考试的时候切掉了,然而卡精 + 有一个地方忘开 $long long$,完美挂掉 $50$pts. 把式子化简一下,然后直接拿线段树来维护即可. Code: // luogu-judger-enabl ...

- SpringBoot2.2发行版新特性

Spring Framework升级 SpringBoot2.2的底层Spring Framework版本升级为5.2. JMX默认禁用 默认情况下不再启用JMX. 可以使用配置属性spring.jm ...

- (77)一文了解Redis

为什么我们做分布式使用Redis? 绝大部分写业务的程序员,在实际开发中使用 Redis 的时候,只会 Set Value 和 Get Value 两个操作,对 Redis 整体缺乏一个认知.这里对 ...

- vue树形菜单

vue树形菜单 由于项目原因,没有使用ui框架上的树形菜单,所以自己动手并参考大佬的代码写了一个树形菜单的组件,话不多说,直接上代码.html代码js代码直接调用api 把请求到的数据直接赋值给per ...

- 为什么电脑连接不上FTP

我们对服务器的FTP状况有实时监控,一般问题都不在服务器端. 而且我们客服一般会第一时间测试下您空间FTP是否真的不能连接 99%绝大部分FTP连接不上的问题,都是客户那边的软件端或网络问题. 问题分 ...

- How to Create a Basic Plugin 如何写一个基础的jQuery插件

How to Create a Basic Plugin Sometimes you want to make a piece of functionality available throughou ...

- JavaScript apply

https://developer.mozilla.org/en-US/docs/Web/JavaScript/Reference/Global_Objects/Function/apply The ...

- WPF DevExpress Chart控件 界面绑定数据源,不通过C#代码进行绑定

<Grid x:Name="myGrid" Loaded="Grid_Loaded" DataContext="{Binding PartOne ...

- ASP.NET对路径"C:/......."的访问被拒绝 解决方法小结 [转载]

问题: 异常详细信息: System.UnauthorizedAccessException: 对路径“C:/Supermarket/output.pdf”的访问被拒绝. 解决方法: 一.在IIS中的 ...