Scrapy Command Line in Detail

Official documentation: https://doc.scrapy.org/en/latest/

Global commands:

Project-only commands: can only be run from inside a project directory

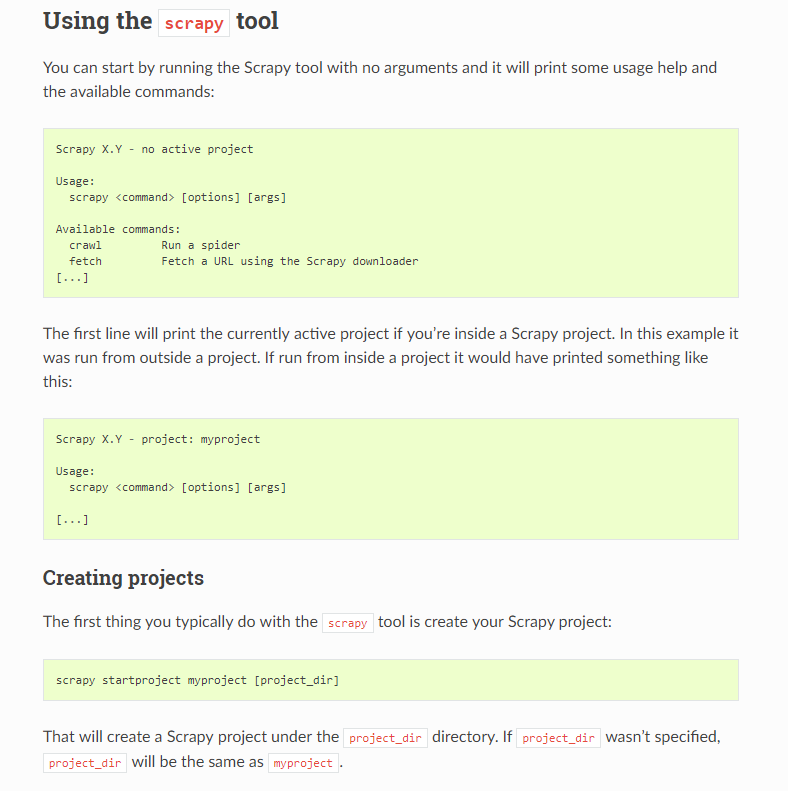

startproject

- Syntax: scrapy startproject <project_name> [project_dir]
- Requires project: no

Creates a new Scrapy project named project_name under the project_dir directory. If project_dir is not specified, it defaults to project_name.

Usage example:

$ scrapy startproject myproject
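The defaulting rule for project_dir can be sketched in a few lines of Python. This only illustrates the rule described above and is not Scrapy's own code; the helper name resolve_project_dir is hypothetical.

```python
from pathlib import Path
from typing import Optional


def resolve_project_dir(project_name: str, project_dir: Optional[str] = None) -> Path:
    """Hypothetical helper: mirror the documented default for project_dir."""
    # When no directory is given, the project name doubles as the directory.
    return Path(project_dir if project_dir is not None else project_name)


print(resolve_project_dir("myproject"))
print(resolve_project_dir("myproject", "crawler"))
```

The first call corresponds to the usage example above; the second corresponds to passing an explicit project_dir on the command line.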

genspider

- Syntax: scrapy genspider [-t template] <name> <domain>
- Requires project: no

Create a new spider in the current folder or in the current project’s spiders folder, if called from inside a project. The <name> parameter is set as the spider’s name, while <domain> is used to generate the allowed_domains and start_urls spider’s attributes.

Usage example:

$ scrapy genspider -l

Available templates:

basic

crawl

csvfeed

xmlfeed

$ scrapy genspider example example.com

Created spider 'example' using template 'basic'

$ scrapy genspider -t crawl scrapyorg scrapy.org

Created spider 'scrapyorg' using template 'crawl'

This is just a convenience shortcut command for creating spiders based on pre-defined templates, but certainly not the only way to create spiders. You can just create the spider source code files yourself, instead of using this command.
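To make the role of <name> and <domain> concrete, here is a rough sketch that fills in a spider skeleton using only the standard library. The template text is a simplified stand-in written for this example, not Scrapy's actual basic template, and render_spider is a hypothetical helper. The result is the kind of file you could just as well write by hand.

```python
from string import Template

# Simplified, assumed skeleton; Scrapy's real templates may differ.
SPIDER_TEMPLATE = Template('''\
import scrapy


class ${classname}(scrapy.Spider):
    name = "${name}"
    allowed_domains = ["${domain}"]
    start_urls = ["https://${domain}/"]

    def parse(self, response):
        pass
''')


def render_spider(name: str, domain: str) -> str:
    """Hypothetical helper: build spider source from a name and a domain."""
    classname = name.capitalize() + "Spider"
    return SPIDER_TEMPLATE.substitute(classname=classname, name=name, domain=domain)


source = render_spider("example", "example.com")
compile(source, "example.py", "exec")  # syntax check only; nothing is imported or run
print(source)
```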

crawl

- Syntax: scrapy crawl <spider>
- Requires project: yes

Start crawling using a spider.

Usage examples:

$ scrapy crawl myspider

[ ... myspider starts crawling ... ]

check

- Syntax: scrapy check [-l] <spider>
- Requires project: yes

Run contract checks.

Usage examples:

$ scrapy check -l

first_spider

* parse

* parse_item

second_spider

* parse

* parse_item

$ scrapy check

[FAILED] first_spider:parse_item

>>> 'RetailPricex' field is missing

[FAILED] first_spider:parse

>>> Returned 92 requests, expected 0..4
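The failures above come from contracts, which Scrapy reads from the docstrings of spider callbacks as lines beginning with @ (for example @url, @returns and @scrapes). The sketch below shows what such a docstring might look like and pulls out the contract lines with plain Python. The callback, its field name and the list_contracts helper are made up for illustration; this is not how Scrapy itself parses contracts.

```python
def parse_item(response):
    """Hypothetical callback with three contracts in its docstring.

    @url http://www.example.com/some/page.html
    @returns requests 0 4
    @scrapes RetailPrice
    """
    return []


def list_contracts(callback):
    """Hypothetical helper: collect (name, args) for each '@' line in a docstring."""
    contracts = []
    for line in (callback.__doc__ or "").splitlines():
        line = line.strip()
        if line.startswith("@"):
            name, _, args = line[1:].partition(" ")
            contracts.append((name, args.split()))
    return contracts


for name, args in list_contracts(parse_item):
    print(name, args)
```

Read this way, "Returned 92 requests, expected 0..4" is a violated @returns bound, and a missing-field message points at a field named in @scrapes that the callback did not populate.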

list

- Syntax: scrapy list
- Requires project: yes

List all available spiders in the current project. The output is one spider per line.

Usage example:

$ scrapy list

spider1

spider2

edit

- Syntax: scrapy edit <spider>
- Requires project: yes

Edit the given spider using the editor defined in the EDITOR environment variable or (if unset) the EDITOR setting.

This command is provided only as a convenience shortcut for the most common case; the developer is of course free to choose any tool or IDE to write and debug spiders.

Usage example:

$ scrapy edit spider1

fetch

- Syntax: scrapy fetch <url>
- Requires project: no

Downloads the given URL using the Scrapy downloader and writes the contents to standard output.

The interesting thing about this command is that it fetches the page the way the spider would download it. For example, if the spider has a USER_AGENT attribute which overrides the User Agent, it will use that one.

So this command can be used to “see” how your spider would fetch a certain page.

If used outside a project, no particular per-spider behaviour would be applied and it will just use the default Scrapy downloader settings.

Supported options:

- --spider=SPIDER: bypass spider autodetection and force use of specific spider
- --headers: print the response’s HTTP headers instead of the response’s body
- --no-redirect: do not follow HTTP 3xx redirects (default is to follow them); use this option when the link redirects

Usage examples:

$ scrapy fetch --nolog http://www.example.com/some/page.html

[ ... html content here ... ]

$ scrapy fetch --nolog --headers http://www.example.com/

{'Accept-Ranges': ['bytes'],

'Age': ['1263 '],

'Connection': ['close '],

'Content-Length': ['596'],

'Content-Type': ['text/html; charset=UTF-8'],

'Date': ['Wed, 18 Aug 2010 23:59:46 GMT'],

'Etag': ['"573c1-254-48c9c87349680"'],

'Last-Modified': ['Fri, 30 Jul 2010 15:30:18 GMT'],

'Server': ['Apache/2.2.3 (CentOS)']}

view

- Syntax: scrapy view <url>
- Requires project: no

Opens the given URL in a browser, as your Scrapy spider would “see” it. Sometimes spiders see pages differently from regular users, so this can be used to check what the spider “sees” and confirm it’s what you expect.

Supported options:

- --spider=SPIDER: bypass spider autodetection and force use of specific spider
- --no-redirect: do not follow HTTP 3xx redirects (default is to follow them)

Usage example:

$ scrapy view http://www.example.com/some/page.html

[ ... browser starts ... ]

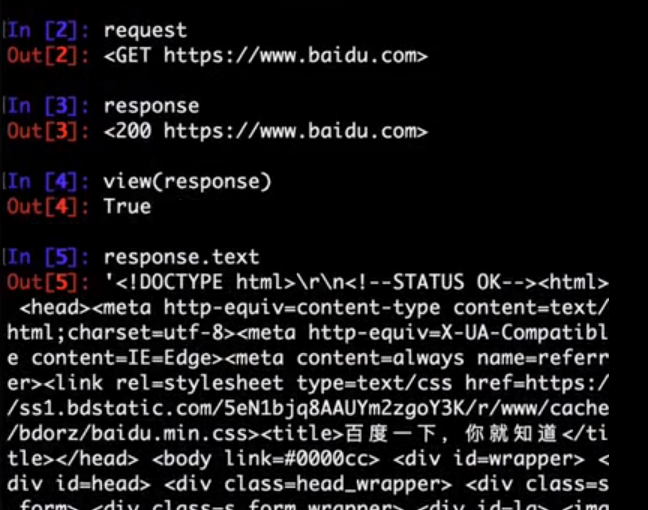

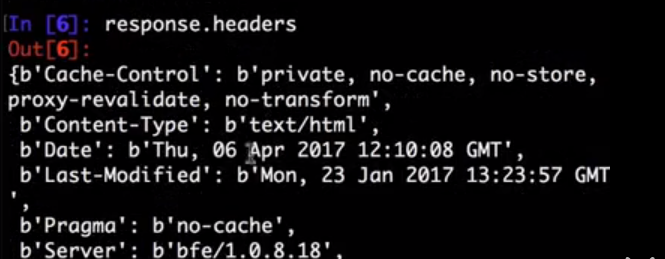

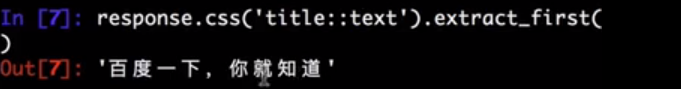

shell

- Syntax: scrapy shell [url]
- Requires project: no

Starts the Scrapy shell for the given URL, or an empty shell if no URL is given. Also supports UNIX-style local file paths, either relative with ./ or ../ prefixes or absolute file paths. See the Scrapy shell documentation for more info.

Supported options:

- --spider=SPIDER: bypass spider autodetection and force use of specific spider
- -c code: evaluate the code in the shell, print the result and exit
- --no-redirect: do not follow HTTP 3xx redirects (default is to follow them); this only affects the URL you may pass as argument on the command line; once you are inside the shell, fetch(url) will still follow HTTP redirects by default.

Usage example:

$ scrapy shell http://www.example.com/some/page.html

[ ... scrapy shell starts ... ]

$ scrapy shell --nolog http://www.example.com/ -c '(response.status, response.url)'

(200, 'http://www.example.com/')

# shell follows HTTP redirects by default

$ scrapy shell --nolog http://httpbin.org/redirect-to?url=http%3A%2F%2Fexample.com%2F -c '(response.status, response.url)'

(200, 'http://example.com/')

# you can disable this with --no-redirect

# (only for the URL passed as command line argument)

$ scrapy shell --no-redirect --nolog http://httpbin.org/redirect-to?url=http%3A%2F%2Fexample.com%2F -c '(response.status, response.url)'

(302, 'http://httpbin.org/redirect-to?url=http%3A%2F%2Fexample.com%2F')
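The httpbin.org URL in these examples carries its redirect target as a percent-encoded query parameter. If you need to build such a URL for your own tests, the standard library can do the encoding. A small sketch (the variable names are arbitrary):

```python
from urllib.parse import urlencode

target = "http://example.com/"
# urlencode percent-encodes ':' and '/' so the target survives as one query value.
redirect_url = "http://httpbin.org/redirect-to?" + urlencode({"url": target})
print(redirect_url)
```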

parse

- Syntax: scrapy parse <url> [options]
- Requires project: yes

Fetches the given URL and parses it with the spider that handles it, using the method passed with the --callback option, or parse if not given.

Supported options:

- --spider=SPIDER: bypass spider autodetection and force use of specific spider
- -a NAME=VALUE: set spider argument (may be repeated)
- --callback or -c: spider method to use as callback for parsing the response
- --meta or -m: additional request meta that will be passed to the callback request. This must be a valid JSON string. Example: --meta='{"foo" : "bar"}'
- --pipelines: process items through pipelines
- --rules or -r: use CrawlSpider rules to discover the callback (i.e. spider method) to use for parsing the response
- --noitems: don’t show scraped items
- --nolinks: don’t show extracted links
- --nocolour: avoid using pygments to colorize the output
- --depth or -d: depth level for which the requests should be followed recursively (default: 1)
- --verbose or -v: display information for each depth level

Usage example:

$ scrapy parse http://www.example.com/ -c parse_item

[ ... scrapy log lines crawling example.com spider ... ]

>>> STATUS DEPTH LEVEL 1 <<<

# Scraped Items ------------------------------------------------------------

[{'name': 'Example item',

'category': 'Furniture',

'length': '12 cm'}]

# Requests -----------------------------------------------------------------

[]
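Since the --meta option must be given a valid JSON string, it can be easier to generate the argument than to type it. The following sketch builds a scrapy parse command line with json.dumps and shlex.join (Python 3.8+). The meta value and callback name are the ones used in this section, and the command is only printed, not executed.

```python
import json
import shlex

meta = {"foo": "bar"}
argv = [
    "scrapy", "parse",
    "--meta", json.dumps(meta),  # serialise the dict to a JSON string
    "-c", "parse_item",
    "http://www.example.com/",
]
command = shlex.join(argv)  # quotes the JSON so a POSIX shell passes it as one argument
print(command)
```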

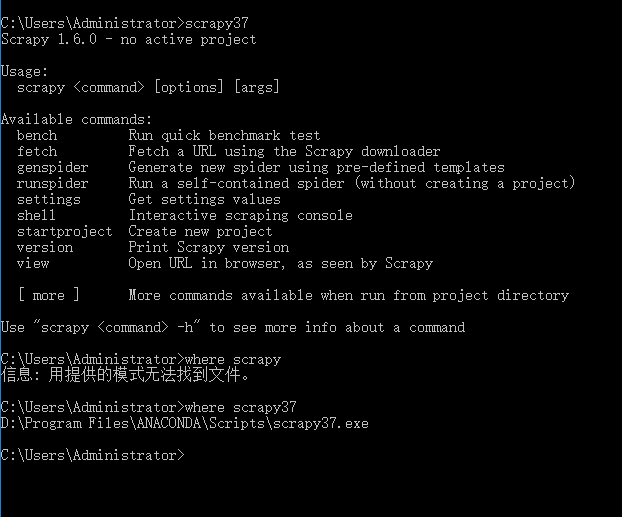

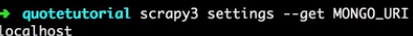

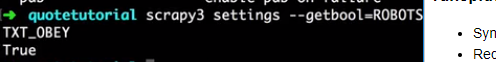

settings

- Syntax: scrapy settings [options]
- Requires project: no

Get the value of a Scrapy setting.

If used inside a project it’ll show the project setting value, otherwise it’ll show the default Scrapy value for that setting.

Example usage:

$ scrapy settings --get BOT_NAME

scrapybot

$ scrapy settings --get DOWNLOAD_DELAY

0

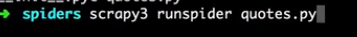

runspider

- Syntax: scrapy runspider <spider_file.py>
- Requires project: no (can be run globally, outside any project)

Run a spider self-contained in a Python file, without having to create a project.

Example usage:

$ scrapy runspider myspider.py

[ ... spider starts crawling ... ]

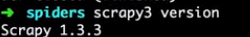

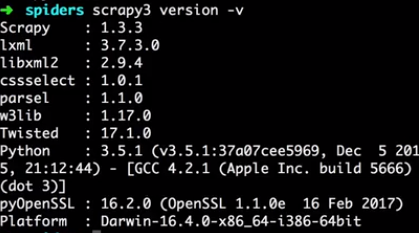

version

- Syntax: scrapy version [-v]
- Requires project: no

Prints the Scrapy version. If used with -v it also prints Python, Twisted and Platform info, which is useful for bug reports.

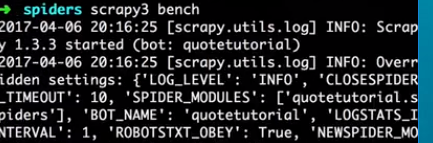

bench

New in version 0.17.

- Syntax: scrapy bench
- Requires project: no

Run a quick benchmark test. See the Benchmarking section of the Scrapy documentation for details.
