Fixing "<console>:14: error: not found: value spark import spark.implicits._ <console>:14: error: not found: value spark import spark.sql" when starting Spark Shell (illustrated walkthrough)

Without further ado, let's get straight to it!

Recently I've started digging further into the latest Spark release. I'm moving from spark-1.6.1, which I used regularly, to spark-2.2.0-bin-hadoop2.6.tgz.

Earlier blog post:

Installing Spark in Spark on YARN mode (spark-1.6.1-bin-hadoop2.6.tgz + hadoop-2.6.0.tar.gz) (master, slave1 and slave2) (recommended by the author)

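Here I'm testing with a single-node setup of spark-2.2.0-bin-hadoop2.6.tgz + hadoop-2.6.0.tar.gz.

I won't go over the single-node configuration files for hadoop-2.6.0 again.

Instead I focus on Spark on YARN, which is the mode I'm using.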

spark-defaults.conf

Left at its defaults, unchanged.

spark-env.sh

export JAVA_HOME=/home/spark/app/jdk1.8.0_60

export SCALA_HOME=/home/spark/app/scala-2.10.

export HADOOP_HOME=/home/spark/app/hadoop-2.6.0

export HADOOP_CONF_DIR=/home/spark/app/hadoop-2.6.0/etc/hadoop

export SPARK_MASTER_IP=192.168.80.218

export SPARK_WORKER_MEMORY=1G
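Spark on YARN uses the client-side Hadoop configuration in HADOOP_CONF_DIR to write to HDFS and to reach the YARN ResourceManager. A wrong path there is therefore worth ruling out early.

Below is a minimal sketch of such a check. It assumes the path from the spark-env.sh above, and it assumes core-site.xml, hdfs-site.xml and yarn-site.xml are the files your client configuration provides. The function name check_hadoop_conf_dir is hypothetical and is not part of Spark or Hadoop.

```shell
#!/usr/bin/env bash
# Hypothetical helper: check that a Hadoop client config directory looks usable
# for Spark on YARN. Assumes core-site.xml, hdfs-site.xml and yarn-site.xml
# are the files your cluster's client configuration provides.

check_hadoop_conf_dir() {
  local dir="$1"
  local missing=0
  local f

  if [[ ! -d "$dir" ]]; then
    echo "not a directory: $dir" >&2
    return 1
  fi

  # Report every missing file rather than stopping at the first one.
  for f in core-site.xml hdfs-site.xml yarn-site.xml; do
    if [[ ! -f "$dir/$f" ]]; then
      echo "missing: $dir/$f" >&2
      missing=1
    fi
  done

  return "$missing"
}

# When executed directly, check the directory given as $1, else $HADOOP_CONF_DIR
# (e.g. /home/spark/app/hadoop-2.6.0/etc/hadoop from spark-env.sh above).
if [[ "${BASH_SOURCE[0]}" == "$0" ]]; then
  conf_dir="${1:-${HADOOP_CONF_DIR:-}}"
  if [[ -z "$conf_dir" ]]; then
    echo "usage: $0 [hadoop-conf-dir]  (or export HADOOP_CONF_DIR)" >&2
  elif check_hadoop_conf_dir "$conf_dir"; then
    echo "OK: $conf_dir"
  fi
fi
```

The script only reports what it finds and changes nothing. If a file is missing, the fix belongs in the Hadoop installation, not in spark-env.sh.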

slaves

sparksinglenode

Problem details

The Hadoop processes were already running.

Then I ran:

[spark@sparksinglenode spark-2.2.0-bin-hadoop2.6]$ bin/spark-shell

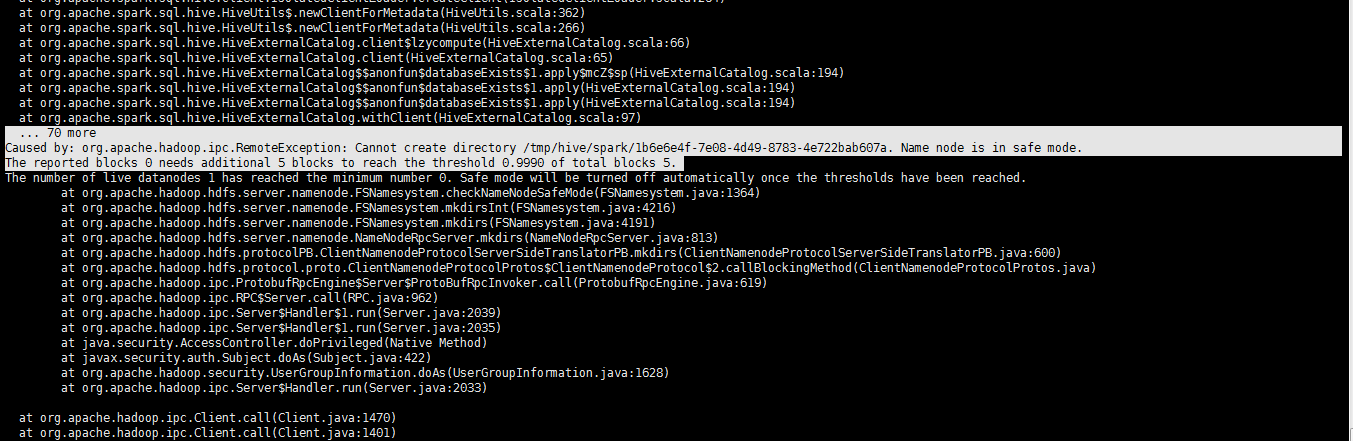

at org.apache.spark.sql.hive.HiveUtils$.newClientForMetadata(HiveUtils.scala:)

at org.apache.spark.sql.hive.HiveUtils$.newClientForMetadata(HiveUtils.scala:)

at org.apache.spark.sql.hive.HiveExternalCatalog.client$lzycompute(HiveExternalCatalog.scala:)

at org.apache.spark.sql.hive.HiveExternalCatalog.client(HiveExternalCatalog.scala:)

at org.apache.spark.sql.hive.HiveExternalCatalog$$anonfun$databaseExists$.apply$mcZ$sp(HiveExternalCatalog.scala:)

at org.apache.spark.sql.hive.HiveExternalCatalog$$anonfun$databaseExists$.apply(HiveExternalCatalog.scala:)

at org.apache.spark.sql.hive.HiveExternalCatalog$$anonfun$databaseExists$.apply(HiveExternalCatalog.scala:)

at org.apache.spark.sql.hive.HiveExternalCatalog.withClient(HiveExternalCatalog.scala:)

... more

Caused by: org.apache.hadoop.ipc.RemoteException: Cannot create directory /tmp/hive/spark/1b6e6e4f-7e08-4d49--4e722bab607a. Name node is in safe mode.

The reported blocks needs additional blocks to reach the threshold 0.9990 of total blocks .

The number of live datanodes has reached the minimum number . Safe mode will be turned off automatically once the thresholds have been reached.

at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.checkNameNodeSafeMode(FSNamesystem.java:)

at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.mkdirsInt(FSNamesystem.java:)

at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.mkdirs(FSNamesystem.java:)

at org.apache.hadoop.hdfs.server.namenode.NameNodeRpcServer.mkdirs(NameNodeRpcServer.java:)

at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolServerSideTranslatorPB.mkdirs(ClientNamenodeProtocolServerSideTranslatorPB.java:)

at org.apache.hadoop.hdfs.protocol.proto.ClientNamenodeProtocolProtos$ClientNamenodeProtocol$.callBlockingMethod(ClientNamenodeProtocolProtos.java)

at org.apache.hadoop.ipc.ProtobufRpcEngine$Server$ProtoBufRpcInvoker.call(ProtobufRpcEngine.java:)

at org.apache.hadoop.ipc.RPC$Server.call(RPC.java:)

at org.apache.hadoop.ipc.Server$Handler$.run(Server.java:)

at org.apache.hadoop.ipc.Server$Handler$.run(Server.java:)

at java.security.AccessController.doPrivileged(Native Method)

at javax.security.auth.Subject.doAs(Subject.java:)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:)

at org.apache.hadoop.ipc.Server$Handler.run(Server.java:)

at org.apache.hadoop.hdfs.DFSClient.mkdirs(DFSClient.java:)

at org.apache.hadoop.hdfs.DistributedFileSystem$.doCall(DistributedFileSystem.java:)

at org.apache.hadoop.hdfs.DistributedFileSystem$.doCall(DistributedFileSystem.java:)

at org.apache.hadoop.fs.FileSystemLinkResolver.resolve(FileSystemLinkResolver.java:)

at org.apache.hadoop.hdfs.DistributedFileSystem.mkdirsInternal(DistributedFileSystem.java:)

at org.apache.hadoop.hdfs.DistributedFileSystem.mkdirs(DistributedFileSystem.java:)

at org.apache.hadoop.hive.ql.session.SessionState.createPath(SessionState.java:)

at org.apache.hadoop.hive.ql.session.SessionState.createSessionDirs(SessionState.java:)

at org.apache.hadoop.hive.ql.session.SessionState.start(SessionState.java:)

... more

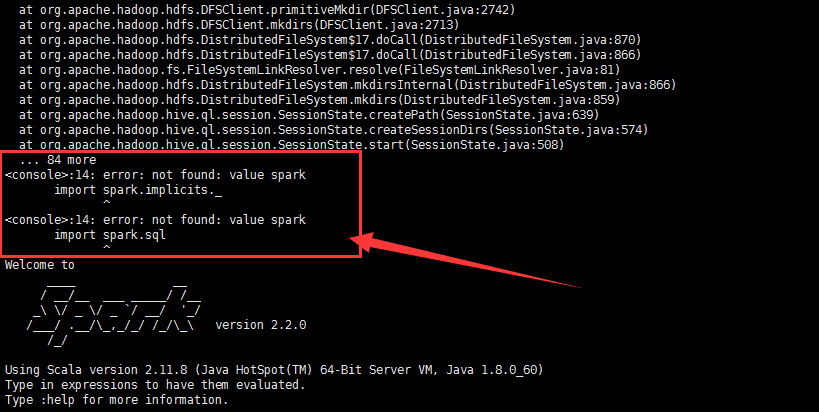

<console>:14: error: not found: value spark

import spark.implicits._

^

<console>:14: error: not found: value spark

import spark.sql

^

Welcome to

____ __

/ __/__ ___ _____/ /__

_\ \/ _ \/ _ `/ __/ '_/

/___/ .__/\_,_/_/ /_/\_\ version 2.2.

/_/ Using Scala version 2.11. (Java HotSpot(TM) -Bit Server VM, Java 1.8.0_60)

Type in expressions to have them evaluated.

Type :help for more information. scala>

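Solution

The root cause is the "Caused by" line above. The NameNode is still in safe mode, so Hive's SessionState cannot create its scratch directory under /tmp/hive on HDFS. As a result, Spark fails to create the SparkSession and the shell never defines the value spark. The automatic imports "import spark.implicits._" and "import spark.sql" then fail with "not found: value spark".

First confirm with jps that the HDFS daemons are running, then take the NameNode out of safe mode: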

[spark@sparksinglenode ~]$ jps

SecondaryNameNode

Jps

NameNode

ResourceManager

NodeManager

DataNode

[spark@sparksinglenode ~]$ hdfs dfsadmin -safemode leave

// :: WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

Safe mode is OFF

[spark@sparksinglenode ~]$

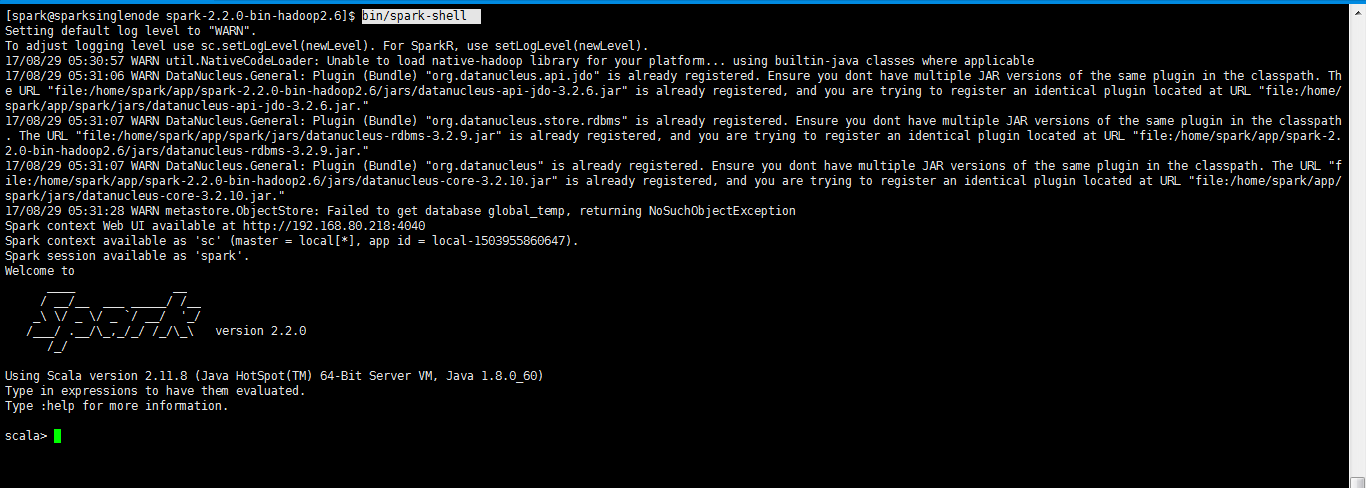
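Forcing safe mode off works here. However, the NameNode message above also says safe mode turns off automatically once enough blocks have been reported.

The sketch below assumes the hdfs CLI is on PATH and works in three steps:

1. Check the current state with hdfs dfsadmin -safemode get.
2. Wait a bounded time with hdfs dfsadmin -safemode wait.
3. Fall back to hdfs dfsadmin -safemode leave, the command used in this post, only if the wait runs out.

The function names and the 60-second default are hypothetical choices for illustration.

```shell
#!/usr/bin/env bash
# Hypothetical helper: get HDFS out of safe mode before starting spark-shell.
# Uses only "hdfs dfsadmin -safemode get|wait|leave"; assumes hdfs is on PATH.

# Map the text printed by "hdfs dfsadmin -safemode get" (e.g. "Safe mode is OFF")
# to ON, OFF or UNKNOWN. If several lines are printed, any ON line wins.
safemode_state() {
  if grep -q "Safe mode is ON" <<< "$1"; then
    echo ON
  elif grep -q "Safe mode is OFF" <<< "$1"; then
    echo OFF
  else
    echo UNKNOWN
  fi
}

ensure_safemode_off() {
  local wait_secs="${1:-60}"   # illustrative default, not a Hadoop setting
  local state

  state="$(safemode_state "$(hdfs dfsadmin -safemode get)")"
  if [[ "$state" == OFF ]]; then
    echo "HDFS safe mode is already OFF"
    return 0
  fi

  # Give the NameNode a chance to leave safe mode by itself once the
  # reported-block threshold is reached (coreutils timeout bounds the wait).
  if timeout "$wait_secs" hdfs dfsadmin -safemode wait; then
    return 0
  fi

  # Fall back to forcing it off, which is what this post does by hand.
  echo "still in safe mode after ${wait_secs}s; forcing it off" >&2
  hdfs dfsadmin -safemode leave
}

if [[ "${BASH_SOURCE[0]}" == "$0" ]]; then
  if command -v hdfs >/dev/null 2>&1; then
    ensure_safemode_off "${1:-60}"
  else
    echo "hdfs not found on PATH (is \$HADOOP_HOME/bin on PATH?)" >&2
  fi
fi
```

Forcing safe mode off does not bring back the blocks the NameNode was waiting for. If the threshold is never reached on its own, hdfs fsck / will report missing or corrupt blocks, which is worth checking before relying on the data.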

Running it again now succeeds:

[spark@sparksinglenode spark-2.2.0-bin-hadoop2.6]$ bin/spark-shell

Setting default log level to "WARN".

To adjust logging level use sc.setLogLevel(newLevel). For SparkR, use setLogLevel(newLevel).

// :: WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

// :: WARN DataNucleus.General: Plugin (Bundle) "org.datanucleus.api.jdo" is already registered. Ensure you dont have multiple JAR versions of the same plugin in the classpath. The URL "file:/home/spark/app/spark-2.2.0-bin-hadoop2.6/jars/datanucleus-api-jdo-3.2.6.jar" is already registered, and you are trying to register an identical plugin located at URL "file:/home/spark/app/spark/jars/datanucleus-api-jdo-3.2.6.jar."

// :: WARN DataNucleus.General: Plugin (Bundle) "org.datanucleus.store.rdbms" is already registered. Ensure you dont have multiple JAR versions of the same plugin in the classpath. The URL "file:/home/spark/app/spark/jars/datanucleus-rdbms-3.2.9.jar" is already registered, and you are trying to register an identical plugin located at URL "file:/home/spark/app/spark-2.2.0-bin-hadoop2.6/jars/datanucleus-rdbms-3.2.9.jar."

// :: WARN DataNucleus.General: Plugin (Bundle) "org.datanucleus" is already registered. Ensure you dont have multiple JAR versions of the same plugin in the classpath. The URL "file:/home/spark/app/spark-2.2.0-bin-hadoop2.6/jars/datanucleus-core-3.2.10.jar" is already registered, and you are trying to register an identical plugin located at URL "file:/home/spark/app/spark/jars/datanucleus-core-3.2.10.jar."

// :: WARN metastore.ObjectStore: Failed to get database global_temp, returning NoSuchObjectException

Spark context Web UI available at http://192.168.80.218:4040

Spark context available as 'sc' (master = local[*], app id = local-).

Spark session available as 'spark'.

Welcome to

____ __

/ __/__ ___ _____/ /__

_\ \/ _ \/ _ `/ __/ '_/

/___/ .__/\_,_/_/ /_/\_\ version 2.2.

/_/ Using Scala version 2.11. (Java HotSpot(TM) -Bit Server VM, Java 1.8.0_60)

Type in expressions to have them evaluated.

Type :help for more information. scala>

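Or, to run the shell on YARN instead of the default local[*] master shown above:

[spark@sparksinglenode spark-2.2.0-bin-hadoop2.6]$ bin/spark-shell --master yarn-client

Note that --master is the fixed option name for choosing the cluster manager. In Spark 2.x the yarn-client master URL is deprecated; the equivalent current form is bin/spark-shell --master yarn --deploy-mode client.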
