Hadoop 2.7.3 Fully Distributed Cluster Maintenance: Deployment

The test environment is as follows:

| IP | Host | JDK | Linux | Hadoop | Role |
| --- | --- | --- | --- | --- | --- |
| 172.16.101.55 | sht-sgmhadoopnn-01 | 1.8.0_111 | CentOS release 6.5 | hadoop-2.7.3 | NameNode, SecondaryNameNode, ResourceManager |
| 172.16.101.58 | sht-sgmhadoopdn-01 | 1.8.0_111 | CentOS release 6.5 | hadoop-2.7.3 | DataNode, NodeManager |
| 172.16.101.59 | sht-sgmhadoopdn-02 | 1.8.0_111 | CentOS release 6.5 | hadoop-2.7.3 | DataNode, NodeManager |
| 172.16.101.60 | sht-sgmhadoopdn-03 | 1.8.0_111 | CentOS release 6.5 | hadoop-2.7.3 | DataNode, NodeManager |

1. Software preparation

http://www-eu.apache.org/dist/hadoop/common/hadoop-2.7.3/hadoop-2.7.3.tar.gz

http://download.oracle.com/otn-pub/java/jdk/8u144-b01/090f390dda5b47b9b721c7dfaa008135/jdk-8u144-linux-x64.tar.gz

2. System environment preparation

2.1 Create the user and configure environment variables

- # useradd -r -u -g dba -G root -d /home/hduser hduser

- # echo 'admin123' | passwd --stdin hduser

- # cat /home/hduser/.bash_profile

- ..........

- export JAVA_HOME=/usr/java/jdk1.8.0_111

- export JRE_HOME=$JAVA_HOME/jre

- export HADOOP_HOME=/usr/local/hadoop-2.7.3

- export CLASSPATH=.:$JAVA_HOME/lib/tools.jar:$JAVA_HOME/lib/dt.jar:$JRE_HOME/lib

- export PATH=$JAVA_HOME/bin:$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$PATH:$HOME/bin

2.2 Unpack Hadoop and the JDK

- # tar -zxf jdk-8u144-linux-x64.tar.gz -C /usr/java/

- # tar -zxf hadoop-2.7.3.tar.gz -C /usr/local/

- # chown -R hduser:dba /usr/local/hadoop-2.7.3/

2.3 Set up hostname-to-IP resolution on every host

- # cat /etc/hosts

- 127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

- ::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

- 172.16.101.55 sht-sgmhadoopnn-01

- 172.16.101.58 sht-sgmhadoopdn-01

- 172.16.101.59 sht-sgmhadoopdn-02

- 172.16.101.60 sht-sgmhadoopdn-03
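
A missing or mistyped hosts entry on any node tends to surface later as a DataNode that cannot register. The following is a minimal sketch, not part of the original procedure, that checks whether each cluster hostname from the environment table has an entry in a hosts-format file. The function names `lookup_ip` and `check_cluster_hosts` are made up for this illustration.

```shell
# Print the IP that a hosts-format file maps to a hostname.
# Exit status is non-zero when the hostname has no entry.
lookup_ip() {
    awk -v name="$2" '
        $1 ~ /^#/ { next }    # skip comment lines
        {
            for (i = 2; i <= NF; i++)
                if ($i == name) { print $1; found = 1; exit }
        }
        END { exit (found ? 0 : 1) }
    ' "$1"
}

# Report every hostname and flag the ones missing from the hosts file.
check_cluster_hosts() {
    hosts_file="$1"
    shift
    rc=0
    for name in "$@"; do
        if ip=$(lookup_ip "$hosts_file" "$name"); then
            echo "$name -> $ip"
        else
            echo "$name -> MISSING" >&2
            rc=1
        fi
    done
    return "$rc"
}

# Example (run on every node):
# check_cluster_hosts /etc/hosts sht-sgmhadoopnn-01 sht-sgmhadoopdn-01 \
#     sht-sgmhadoopdn-02 sht-sgmhadoopdn-03
```

The check only reads the file; it does not test DNS or network reachability.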

2.4 Configure passwordless SSH trust between the NameNode and each DataNode

- $ ssh-keygen -t rsa

- $ ssh-copy-id hduser@sht-sgmhadoopnn-01

- $ ssh-copy-id hduser@sht-sgmhadoopdn-01

- $ ssh-copy-id hduser@sht-sgmhadoopdn-02

- $ ssh-copy-id hduser@sht-sgmhadoopdn-03
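
Before moving on, it can help to confirm that key-based login really works for every host, since the start scripts rely on it. The sketch below is an illustration under assumptions: `verify_ssh_trust` and the `SSH_CMD` override are hypothetical names introduced here, while `BatchMode` and `ConnectTimeout` are standard OpenSSH client options.

```shell
# Return 0 only if every host accepts key-based login without prompting.
# SSH_CMD is a hypothetical override for dry runs; it defaults to ssh.
verify_ssh_trust() {
    user="$1"
    shift
    rc=0
    for host in "$@"; do
        # BatchMode=yes makes ssh fail instead of asking for a password.
        if "${SSH_CMD:-ssh}" -o BatchMode=yes -o ConnectTimeout=5 "$user@$host" true; then
            echo "$host: ok"
        else
            echo "$host: key-based login failed" >&2
            rc=1
        fi
    done
    return "$rc"
}

# Example (run as hduser on the NameNode):
# verify_ssh_trust hduser sht-sgmhadoopnn-01 sht-sgmhadoopdn-01 \
#     sht-sgmhadoopdn-02 sht-sgmhadoopdn-03
```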

2.5 Synchronize time across the nodes

http://lythjq.blog.51cto.com/8902270/1603266

3. Hadoop configuration

3.1 Configure hadoop-env.sh

Change JAVA_HOME from

- export JAVA_HOME=${JAVA_HOME}

to

- export JAVA_HOME=/usr/java/jdk1.8.0_111

3.2 Configure core-site.xml

- <configuration>

- <property>

- <name>hadoop.tmp.dir</name>

- <value>/usr/local/hadoop/data</value>

- </property>

- <property>

- <name>fs.defaultFS</name>

- <value>hdfs://sht-sgmhadoopnn-01:9000</value>

- </property>

- <property>

- <name>hadoop.http.staticuser.user</name>

- <value>root</value>

- </property>

- <property>

- <name>fs.trash.interval</name>

- <value>1440</value>

- </property>

- <property>

- <name>fs.trash.checkpoint.interval</name>

- <value>0</value>

- </property>

- <property>

- <name>io.file.buffer.size</name>

- <value>65536</value>

- </property>

- <property>

- <name>hadoop.proxyuser.hduser.groups</name>

- <value>*</value>

- </property>

- <property>

- <name>hadoop.proxyuser.hduser.hosts</name>

- <value>*</value>

- </property>

- </configuration>
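
After editing a site file it is easy to lose track of which value a node actually carries. The following sketch reads one property from a Hadoop `*-site.xml` file with awk. It is an illustration, not a full XML parser: it assumes the `<name>` tag appears before its `<value>` tag, as in the listing above, and `get_prop` is a name made up here. On a node with Hadoop installed, `hdfs getconf -confKey fs.defaultFS` reports the effective value instead.

```shell
# Print the value of one property from a Hadoop *-site.xml file.
# Assumes <name> precedes <value> inside each <property> element.
get_prop() {
    awk -v want="<name>$2</name>" '
        index($0, want) { hit = 1 }            # found the requested name
        hit && match($0, /<value>.*<\/value>/) {
            # strip the 7-character <value> and 8-character </value> tags
            print substr($0, RSTART + 7, RLENGTH - 15)
            exit
        }
        /<\/property>/ { hit = 0 }             # property ended without a value
    ' "$1"
}

# Example:
# get_prop /usr/local/hadoop-2.7.3/etc/hadoop/core-site.xml fs.defaultFS
```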

3.2 Configure hdfs-site.xml

- <configuration>

- <property>

- <name>dfs.webhdfs.enabled</name>

- <value>true</value>

- </property>

- <property>

- <name>dfs.permissions.enabled</name>

- <value>false</value>

- </property>

- <property>

- <name>dfs.namenode.http-address</name>

- <value>172.16.101.55:50070</value>

- </property>

- <property>

- <name>dfs.namenode.https-address</name>

- <value>172.16.101.55:50470</value>

- </property>

- <property>

- <name>dfs.namenode.secondary.http-address</name>

- <value>172.16.101.55:50090</value>

- </property>

- <property>

- <name>dfs.namenode.secondary.https-address</name>

- <value>172.16.101.55:50091</value>

- </property>

- <property>

- <name>dfs.datanode.address</name>

- <value>0.0.0.0:50010</value>

- </property>

- <property>

- <name>dfs.datanode.ipc.address</name>

- <value>0.0.0.0:50020</value>

- </property>

- <property>

- <name>dfs.datanode.http.address</name>

- <value>0.0.0.0:50075</value>

- </property>

- <property>

- <name>dfs.datanode.https-address</name>

- <value>0.0.0.0:50475</value>

- </property>

- <property>

- <name>dfs.namenode.name.dir</name>

- <value>/usr/local/hadoop/data/dfs/name</value>

- </property>

- <property>

- <name>dfs.namenode.edits.dir</name>

- <value>${dfs.namenode.name.dir}</value>

- </property>

- <property>

- <name>dfs.datanode.data.dir</name>

- <value>/usr/local/hadoop/data/dfs/data</value>

- </property>

- <property>

- <name>dfs.namenode.checkpoint.dir</name>

- <value>/usr/local/hadoop/data/dfs/namesecondary</value>

- </property>

- <property>

- <name>dfs.namenode.checkpoint.period</name>

- <value>3600</value>

- </property>

- <property>

- <name>dfs.namenode.checkpoint.edits.dir</name>

- <value>/usr/local/hadoop/data/dfs/namesecondary</value>

- </property>

- <property>

- <name>dfs.replication</name>

- <value>3</value>

- </property>

- <property>

- <name>dfs.blocksize</name>

- <value>134217728</value>

- </property>

- <property>

- <name>dfs.datanode.handler.count</name>

- <value>100</value>

- </property>

- </configuration>
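
To make the `dfs.blocksize` and `dfs.replication` values above concrete, here is a small arithmetic sketch. The 1 GiB file size is an assumed example, and the helper names are invented for illustration. 134217728 bytes is 128 MiB.

```shell
# Number of HDFS blocks needed for a file (ceiling division).
block_count() {
    file_bytes="$1"
    block_bytes="$2"
    echo $(( ($file_bytes + $block_bytes - 1) / $block_bytes ))
}

# Raw bytes consumed across the cluster once every block is replicated.
raw_bytes() {
    file_bytes="$1"
    replication="$2"
    echo $(( $file_bytes * $replication ))
}

block_count 1073741824 134217728   # 1 GiB file, 128 MiB blocks: prints 8
raw_bytes 1073741824 3             # same file, dfs.replication=3: prints 3221225472
```

With three DataNodes and a replication factor of 3, every DataNode ends up holding a copy of every block.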

3.3 Configure mapred-site.xml

- <configuration>

- <property>

- <name>mapreduce.framework.name</name>

- <value>yarn</value>

- </property>

- <property>

- <name>mapreduce.jobtracker.http.address</name>

- <value>0.0.0.0:50030</value>

- </property>

- <property>

- <name>mapreduce.jobhistory.address</name>

- <value>0.0.0.0:10020</value>

- </property>

- <property>

- <name>mapreduce.jobhistory.webapp.address</name>

- <value>0.0.0.0:19888</value>

- </property>

- </configuration>

3.4 Configure yarn-site.xml

- <configuration>

- <property>

- <name>yarn.nodemanager.aux-services</name>

- <value>mapreduce_shuffle</value>

- </property>

- <property>

- <name>yarn.nodemanager.aux-services.mapreduce_shuffle.class</name>

- <value>org.apache.hadoop.mapred.ShuffleHandler</value>

- </property>

- <property>

- <name>yarn.resourcemanager.hostname</name>

- <value>172.16.101.55</value>

- <description>The hostname of the RM.</description>

- </property>

- <property>

- <name>yarn.resourcemanager.address</name>

- <value>${yarn.resourcemanager.hostname}:8032</value>

- </property>

- <property>

- <name>yarn.resourcemanager.scheduler.address</name>

- <value>${yarn.resourcemanager.hostname}:8030</value>

- </property>

- <property>

- <name>yarn.resourcemanager.webapp.address</name>

- <value>${yarn.resourcemanager.hostname}:8088</value>

- </property>

- <property>

- <name>yarn.resourcemanager.webapp.https.address</name>

- <value>${yarn.resourcemanager.hostname}:8090</value>

- </property>

- <property>

- <name>yarn.resourcemanager.resource-tracker.address</name>

- <value>${yarn.resourcemanager.hostname}:8031</value>

- </property>

- <property>

- <name>yarn.resourcemanager.admin.address</name>

- <value>${yarn.resourcemanager.hostname}:8033</value>

- </property>

- <property>

- <name>yarn.nodemanager.localizer.address</name>

- <value>0.0.0.0:8040</value>

- </property>

- <property>

- <name>yarn.nodemanager.webapp.address</name>

- <value>0.0.0.0:8042</value>

- </property>

- <property>

- <name>yarn.resourcemanager.connect.retry-interval.ms</name>

- <value>2000</value>

- </property>

- <property>

- <name>yarn.nodemanager.resource.memory-mb</name>

- <value>4096</value>

- </property>

- <property>

- <name>yarn.scheduler.minimum-allocation-mb</name>

- <value>1024</value>

- </property>

- <property>

- <name>yarn.scheduler.maximum-allocation-mb</name>

- <value>4096</value>

- </property>

- </configuration>
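
The three memory settings at the end of this file interact. As a rough sketch, assuming the default CapacityScheduler behaviour as I understand it (a request is rounded up to a multiple of the minimum allocation, and a request above the maximum is rejected), the arithmetic looks like this. The helper names are invented for illustration.

```shell
# Round a container memory request up to a multiple of the minimum allocation.
# Returns non-zero when the request exceeds the maximum allocation.
normalize_request_mb() {
    req="$1"
    min="$2"
    max="$3"
    if [ "$req" -gt "$max" ]; then
        echo "request of ${req} MB exceeds the maximum allocation of ${max} MB" >&2
        return 1
    fi
    n=$(( (($req + $min - 1) / $min) * $min ))
    if [ "$n" -lt "$min" ]; then
        n="$min"
    fi
    echo "$n"
}

# How many containers of a given size fit into one NodeManager's memory.
containers_per_node() {
    echo $(( $1 / $2 ))
}

normalize_request_mb 1500 1024 4096   # prints 2048
containers_per_node 4096 1024         # prints 4
```

With the values above, each NodeManager can host at most four 1024 MB containers, so the three worker nodes offer twelve such containers in total.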

3.5 Configure slaves

- sht-sgmhadoopdn-01

- sht-sgmhadoopdn-02

- sht-sgmhadoopdn-03

3.6 Sync the Hadoop configuration to the other nodes

- $ scp -r /usr/local/hadoop-2.7.3/* hduser@sht-sgmhadoopdn-01:/usr/local/hadoop-2.7.3/

- $ scp -r /usr/local/hadoop-2.7.3/* hduser@sht-sgmhadoopdn-02:/usr/local/hadoop-2.7.3/

- $ scp -r /usr/local/hadoop-2.7.3/* hduser@sht-sgmhadoopdn-03:/usr/local/hadoop-2.7.3/
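
The three copy commands follow the slaves file line by line, so they can be generated from it. The sketch below only prints the commands, which makes it safe to review first; `sync_commands` is a hypothetical helper name, and skipping `#` lines mirrors how I understand Hadoop's own scripts treat the slaves file.

```shell
# Print one scp command per host listed in a slaves file.
# Blank lines and lines starting with '#' are skipped.
sync_commands() {
    slaves_file="$1"
    src_dir="$2"
    user="$3"
    while IFS= read -r host || [ -n "$host" ]; do
        case "$host" in
            ''|'#'*) continue ;;
        esac
        echo "scp -r $src_dir/* $user@$host:$src_dir/"
    done < "$slaves_file"
}

# Review the commands first, then pipe them to sh to run them:
# sync_commands /usr/local/hadoop-2.7.3/etc/hadoop/slaves /usr/local/hadoop-2.7.3 hduser
# sync_commands /usr/local/hadoop-2.7.3/etc/hadoop/slaves /usr/local/hadoop-2.7.3 hduser | sh
```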

4. Format the NameNode on the master node

- $ hdfs namenode -format

- // :: INFO namenode.NameNode: STARTUP_MSG:

- /************************************************************

- STARTUP_MSG: Starting NameNode

- STARTUP_MSG: host = sht-sgmhadoopnn-01/172.16.101.55

- STARTUP_MSG: args = [-format]

- STARTUP_MSG: version = 2.7.3

- STARTUP_MSG: classpath = /usr/local/hadoop-2.7.3/etc/hadoop:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/api-util-1.0.0-M20.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/hadoop-auth-2.7.3.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/commons-httpclient-3.1.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/jackson-xc-1.9.13.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/slf4j-api-1.7.10.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/apacheds-i18n-2.0.0-M15.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/jackson-mapper-asl-1.9.13.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/jersey-json-1.9.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/apacheds-kerberos-codec-2.0.0-M15.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/jackson-jaxrs-1.9.13.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/jaxb-impl-2.2.3-1.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/java-xmlbuilder-0.4.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/jsr305-3.0.0.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/curator-recipes-2.7.1.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/jsp-api-2.1.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/jets3t-0.9.0.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/jersey-server-1.9.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/commons-net-3.1.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/commons-beanutils-core-1.8.0.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/avro-1.7.4.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/hadoop-annotations-2.7.3.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/commons-io-2.4.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/log4j-1.2.17.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/commons-lang-2.6.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/netty-3.6.2.Final.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/guava-11.0.2.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/httpcore-4.2.5.jar:/usr/local/hadoop-2.7.3/share/
hadoop/common/lib/commons-collections-3.2.2.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/commons-digester-1.8.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/commons-logging-1.1.3.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/junit-4.11.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/asm-3.2.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/xz-1.0.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/xmlenc-0.52.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/commons-codec-1.4.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/api-asn1-api-1.0.0-M20.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/jettison-1.1.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/jsch-0.1.42.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/jersey-core-1.9.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/commons-math3-3.1.1.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/jackson-core-asl-1.9.13.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/curator-framework-2.7.1.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/jetty-6.1.26.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/mockito-all-1.8.5.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/stax-api-1.0-2.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/curator-client-2.7.1.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/commons-beanutils-1.7.0.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/httpclient-4.2.5.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/gson-2.2.4.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/activation-1.1.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/jaxb-api-2.2.2.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/protobuf-java-2.5.0.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/htrace-core-3.1.0-incubating.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/servlet-api-2.5.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/zookeeper-3.4.6.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/jetty-util-6.1.26.jar:/usr/local/hadoop
-2.7.3/share/hadoop/common/lib/hamcrest-core-1.3.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/paranamer-2.3.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/commons-cli-1.2.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/commons-configuration-1.6.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/slf4j-log4j12-1.7.10.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/commons-compress-1.4.1.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/lib/snappy-java-1.0.4.1.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/hadoop-common-2.7.3.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/hadoop-common-2.7.3-tests.jar:/usr/local/hadoop-2.7.3/share/hadoop/common/hadoop-nfs-2.7.3.jar:/usr/local/hadoop-2.7.3/share/hadoop/hdfs:/usr/local/hadoop-2.7.3/share/hadoop/hdfs/lib/jackson-mapper-asl-1.9.13.jar:/usr/local/hadoop-2.7.3/share/hadoop/hdfs/lib/netty-all-4.0.23.Final.jar:/usr/local/hadoop-2.7.3/share/hadoop/hdfs/lib/xercesImpl-2.9.1.jar:/usr/local/hadoop-2.7.3/share/hadoop/hdfs/lib/jsr305-3.0.0.jar:/usr/local/hadoop-2.7.3/share/hadoop/hdfs/lib/jersey-server-1.9.jar:/usr/local/hadoop-2.7.3/share/hadoop/hdfs/lib/commons-io-2.4.jar:/usr/local/hadoop-2.7.3/share/hadoop/hdfs/lib/log4j-1.2.17.jar:/usr/local/hadoop-2.7.3/share/hadoop/hdfs/lib/commons-lang-2.6.jar:/usr/local/hadoop-2.7.3/share/hadoop/hdfs/lib/netty-3.6.2.Final.jar:/usr/local/hadoop-2.7.3/share/hadoop/hdfs/lib/guava-11.0.2.jar:/usr/local/hadoop-2.7.3/share/hadoop/hdfs/lib/commons-logging-1.1.3.jar:/usr/local/hadoop-2.7.3/share/hadoop/hdfs/lib/asm-3.2.jar:/usr/local/hadoop-2.7.3/share/hadoop/hdfs/lib/xmlenc-0.52.jar:/usr/local/hadoop-2.7.3/share/hadoop/hdfs/lib/commons-codec-1.4.jar:/usr/local/hadoop-2.7.3/share/hadoop/hdfs/lib/jersey-core-1.9.jar:/usr/local/hadoop-2.7.3/share/hadoop/hdfs/lib/leveldbjni-all-1.8.jar:/usr/local/hadoop-2.7.3/share/hadoop/hdfs/lib/jackson-core-asl-1.9.13.jar:/usr/local/hadoop-2.7.3/share/hadoop/hdfs/lib/jetty-6.1.26.jar:/usr/local/hadoop-2.7.3/share/hadoop/hdfs/lib/protobuf-java-2
.5.0.jar:/usr/local/hadoop-2.7.3/share/hadoop/hdfs/lib/htrace-core-3.1.0-incubating.jar:/usr/local/hadoop-2.7.3/share/hadoop/hdfs/lib/commons-daemon-1.0.13.jar:/usr/local/hadoop-2.7.3/share/hadoop/hdfs/lib/servlet-api-2.5.jar:/usr/local/hadoop-2.7.3/share/hadoop/hdfs/lib/xml-apis-1.3.04.jar:/usr/local/hadoop-2.7.3/share/hadoop/hdfs/lib/jetty-util-6.1.26.jar:/usr/local/hadoop-2.7.3/share/hadoop/hdfs/lib/commons-cli-1.2.jar:/usr/local/hadoop-2.7.3/share/hadoop/hdfs/hadoop-hdfs-2.7.3-tests.jar:/usr/local/hadoop-2.7.3/share/hadoop/hdfs/hadoop-hdfs-2.7.3.jar:/usr/local/hadoop-2.7.3/share/hadoop/hdfs/hadoop-hdfs-nfs-2.7.3.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/lib/jersey-client-1.9.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/lib/jackson-xc-1.9.13.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/lib/jackson-mapper-asl-1.9.13.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/lib/jersey-json-1.9.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/lib/jackson-jaxrs-1.9.13.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/lib/jaxb-impl-2.2.3-1.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/lib/zookeeper-3.4.6-tests.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/lib/jsr305-3.0.0.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/lib/jersey-server-1.9.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/lib/jersey-guice-1.9.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/lib/commons-io-2.4.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/lib/log4j-1.2.17.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/lib/commons-lang-2.6.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/lib/netty-3.6.2.Final.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/lib/guava-11.0.2.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/lib/commons-collections-3.2.2.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/lib/guice-3.0.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/lib/commons-logging-1.1.3.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/lib/asm-3.2.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/lib/guice-servlet-3.0.jar:/usr/local/hadoop-2.7.3/s
hare/hadoop/yarn/lib/xz-1.0.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/lib/commons-codec-1.4.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/lib/jettison-1.1.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/lib/javax.inject-1.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/lib/jersey-core-1.9.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/lib/leveldbjni-all-1.8.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/lib/jackson-core-asl-1.9.13.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/lib/jetty-6.1.26.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/lib/stax-api-1.0-2.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/lib/activation-1.1.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/lib/jaxb-api-2.2.2.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/lib/protobuf-java-2.5.0.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/lib/aopalliance-1.0.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/lib/servlet-api-2.5.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/lib/zookeeper-3.4.6.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/lib/jetty-util-6.1.26.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/lib/commons-cli-1.2.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/lib/commons-compress-1.4.1.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-server-resourcemanager-2.7.3.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-server-nodemanager-2.7.3.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-applications-unmanaged-am-launcher-2.7.3.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-server-sharedcachemanager-2.7.3.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-server-applicationhistoryservice-2.7.3.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-client-2.7.3.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-registry-2.7.3.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-common-2.7.3.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-applications-distributedshell-2.7.3.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-server-comm
on-2.7.3.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-api-2.7.3.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-server-tests-2.7.3.jar:/usr/local/hadoop-2.7.3/share/hadoop/yarn/hadoop-yarn-server-web-proxy-2.7.3.jar:/usr/local/hadoop-2.7.3/share/hadoop/mapreduce/lib/jackson-mapper-asl-1.9.13.jar:/usr/local/hadoop-2.7.3/share/hadoop/mapreduce/lib/jersey-server-1.9.jar:/usr/local/hadoop-2.7.3/share/hadoop/mapreduce/lib/jersey-guice-1.9.jar:/usr/local/hadoop-2.7.3/share/hadoop/mapreduce/lib/avro-1.7.4.jar:/usr/local/hadoop-2.7.3/share/hadoop/mapreduce/lib/hadoop-annotations-2.7.3.jar:/usr/local/hadoop-2.7.3/share/hadoop/mapreduce/lib/commons-io-2.4.jar:/usr/local/hadoop-2.7.3/share/hadoop/mapreduce/lib/log4j-1.2.17.jar:/usr/local/hadoop-2.7.3/share/hadoop/mapreduce/lib/netty-3.6.2.Final.jar:/usr/local/hadoop-2.7.3/share/hadoop/mapreduce/lib/guice-3.0.jar:/usr/local/hadoop-2.7.3/share/hadoop/mapreduce/lib/junit-4.11.jar:/usr/local/hadoop-2.7.3/share/hadoop/mapreduce/lib/asm-3.2.jar:/usr/local/hadoop-2.7.3/share/hadoop/mapreduce/lib/guice-servlet-3.0.jar:/usr/local/hadoop-2.7.3/share/hadoop/mapreduce/lib/xz-1.0.jar:/usr/local/hadoop-2.7.3/share/hadoop/mapreduce/lib/javax.inject-1.jar:/usr/local/hadoop-2.7.3/share/hadoop/mapreduce/lib/jersey-core-1.9.jar:/usr/local/hadoop-2.7.3/share/hadoop/mapreduce/lib/leveldbjni-all-1.8.jar:/usr/local/hadoop-2.7.3/share/hadoop/mapreduce/lib/jackson-core-asl-1.9.13.jar:/usr/local/hadoop-2.7.3/share/hadoop/mapreduce/lib/protobuf-java-2.5.0.jar:/usr/local/hadoop-2.7.3/share/hadoop/mapreduce/lib/aopalliance-1.0.jar:/usr/local/hadoop-2.7.3/share/hadoop/mapreduce/lib/hamcrest-core-1.3.jar:/usr/local/hadoop-2.7.3/share/hadoop/mapreduce/lib/paranamer-2.3.jar:/usr/local/hadoop-2.7.3/share/hadoop/mapreduce/lib/commons-compress-1.4.1.jar:/usr/local/hadoop-2.7.3/share/hadoop/mapreduce/lib/snappy-java-1.0.4.1.jar:/usr/local/hadoop-2.7.3/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.3.jar:/usr/local/hadoop-2.7.3/share/h
adoop/mapreduce/hadoop-mapreduce-client-core-2.7.3.jar:/usr/local/hadoop-2.7.3/share/hadoop/mapreduce/hadoop-mapreduce-client-jobclient-2.7.3-tests.jar:/usr/local/hadoop-2.7.3/share/hadoop/mapreduce/hadoop-mapreduce-client-hs-2.7.3.jar:/usr/local/hadoop-2.7.3/share/hadoop/mapreduce/hadoop-mapreduce-client-app-2.7.3.jar:/usr/local/hadoop-2.7.3/share/hadoop/mapreduce/hadoop-mapreduce-client-common-2.7.3.jar:/usr/local/hadoop-2.7.3/share/hadoop/mapreduce/hadoop-mapreduce-client-shuffle-2.7.3.jar:/usr/local/hadoop-2.7.3/share/hadoop/mapreduce/hadoop-mapreduce-client-jobclient-2.7.3.jar:/usr/local/hadoop-2.7.3/share/hadoop/mapreduce/hadoop-mapreduce-client-hs-plugins-2.7.3.jar:/usr/local/hadoop-2.7.3/contrib/capacity-scheduler/*.jar

- STARTUP_MSG: build = https://git-wip-us.apache.org/repos/asf/hadoop.git -r baa91f7c6bc9cb92be5982de4719c1c8af91ccff; compiled by 'root' on 2016-08-18T01:41Z

- STARTUP_MSG: java = 1.8.0_111

- ************************************************************/

- // :: INFO namenode.NameNode: registered UNIX signal handlers for [TERM, HUP, INT]

- // :: INFO namenode.NameNode: createNameNode [-format]

- // :: WARN common.Util: Path /usr/local/hadoop-2.7./data/dfs/name should be specified as a URI in configuration files. Please update hdfs configuration.

- // :: WARN common.Util: Path /usr/local/hadoop-2.7./data/dfs/name should be specified as a URI in configuration files. Please update hdfs configuration.

- Formatting using clusterid: CID-80464bc9-331a-4ebd-9c45-5c952ddaa4ef

- // :: INFO namenode.FSNamesystem: No KeyProvider found.

- // :: INFO namenode.FSNamesystem: fsLock is fair:true

- // :: INFO blockmanagement.DatanodeManager: dfs.block.invalidate.limit=

- // :: INFO blockmanagement.DatanodeManager: dfs.namenode.datanode.registration.ip-hostname-check=true

- // :: INFO blockmanagement.BlockManager: dfs.namenode.startup.delay.block.deletion.sec is set to :::00.000

- // :: INFO blockmanagement.BlockManager: The block deletion will start around Aug ::

- // :: INFO util.GSet: Computing capacity for map BlocksMap

- // :: INFO util.GSet: VM type = -bit

- // :: INFO util.GSet: 2.0% max memory MB = 17.8 MB

- // :: INFO util.GSet: capacity = ^ = entries

- // :: INFO blockmanagement.BlockManager: dfs.block.access.token.enable=false

- // :: INFO blockmanagement.BlockManager: defaultReplication =

- // :: INFO blockmanagement.BlockManager: maxReplication =

- // :: INFO blockmanagement.BlockManager: minReplication =

- // :: INFO blockmanagement.BlockManager: maxReplicationStreams =

- // :: INFO blockmanagement.BlockManager: replicationRecheckInterval =

- // :: INFO blockmanagement.BlockManager: encryptDataTransfer = false

- // :: INFO blockmanagement.BlockManager: maxNumBlocksToLog =

- // :: INFO namenode.FSNamesystem: fsOwner = hduser (auth:SIMPLE)

- // :: INFO namenode.FSNamesystem: supergroup = supergroup

- // :: INFO namenode.FSNamesystem: isPermissionEnabled = false

- // :: INFO namenode.FSNamesystem: HA Enabled: false

- // :: INFO namenode.FSNamesystem: Append Enabled: true

- // :: INFO util.GSet: Computing capacity for map INodeMap

- // :: INFO util.GSet: VM type = -bit

- // :: INFO util.GSet: 1.0% max memory MB = 8.9 MB

- // :: INFO util.GSet: capacity = ^ = entries

- // :: INFO namenode.FSDirectory: ACLs enabled? false

- // :: INFO namenode.FSDirectory: XAttrs enabled? true

- // :: INFO namenode.FSDirectory: Maximum size of an xattr:

- // :: INFO namenode.NameNode: Caching file names occuring more than times

- // :: INFO util.GSet: Computing capacity for map cachedBlocks

- // :: INFO util.GSet: VM type = -bit

- // :: INFO util.GSet: 0.25% max memory MB = 2.2 MB

- // :: INFO util.GSet: capacity = ^ = entries

- // :: INFO namenode.FSNamesystem: dfs.namenode.safemode.threshold-pct = 0.9990000128746033

- // :: INFO namenode.FSNamesystem: dfs.namenode.safemode.min.datanodes =

- // :: INFO namenode.FSNamesystem: dfs.namenode.safemode.extension =

- // :: INFO metrics.TopMetrics: NNTop conf: dfs.namenode.top.window.num.buckets =

- // :: INFO metrics.TopMetrics: NNTop conf: dfs.namenode.top.num.users =

- // :: INFO metrics.TopMetrics: NNTop conf: dfs.namenode.top.windows.minutes = ,,

- // :: INFO namenode.FSNamesystem: Retry cache on namenode is enabled

- // :: INFO namenode.FSNamesystem: Retry cache will use 0.03 of total heap and retry cache entry expiry time is millis

- // :: INFO util.GSet: Computing capacity for map NameNodeRetryCache

- // :: INFO util.GSet: VM type = -bit

- // :: INFO util.GSet: 0.029999999329447746% max memory MB = 273.1 KB

- // :: INFO util.GSet: capacity = ^ = entries

- // :: INFO namenode.FSImage: Allocated new BlockPoolId: BP--172.16.101.55-

- // :: INFO common.Storage: Storage directory /usr/local/hadoop-2.7./data/dfs/name has been successfully formatted.

- // :: INFO namenode.FSImageFormatProtobuf: Saving image file /usr/local/hadoop-2.7./data/dfs/name/current/fsimage.ckpt_0000000000000000000 using no compression

- // :: INFO namenode.FSImageFormatProtobuf: Image file /usr/local/hadoop-2.7./data/dfs/name/current/fsimage.ckpt_0000000000000000000 of size bytes saved in seconds.

- // :: INFO namenode.NNStorageRetentionManager: Going to retain images with txid >=

- // :: INFO util.ExitUtil: Exiting with status

- // :: INFO namenode.NameNode: SHUTDOWN_MSG:

- /************************************************************

- SHUTDOWN_MSG: Shutting down NameNode at sht-sgmhadoopnn-01/172.16.101.55

- ************************************************************/
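
Formatting is a one-time step: running it again on a live cluster discards the existing HDFS metadata. For unattended scripts, a guard along the following lines may be useful. It is a sketch that assumes a formatted name directory contains `current/VERSION`; `is_formatted` and `safe_format` are names invented here, and the directory to pass is the value of `dfs.namenode.name.dir`.

```shell
# Return 0 if the directory already holds a formatted NameNode image.
# Assumption: a formatted name directory contains current/VERSION.
is_formatted() {
    [ -f "$1/current/VERSION" ]
}

# Guarded format: refuse to run when metadata already exists.
safe_format() {
    name_dir="$1"
    if is_formatted "$name_dir"; then
        echo "refusing to format: $name_dir is already formatted" >&2
        return 1
    fi
    hdfs namenode -format
}

# Example, with the name directory from hdfs-site.xml:
# safe_format /usr/local/hadoop/data/dfs/name
```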

5. Start Hadoop

5.1 Start HDFS

- $ /usr/local/hadoop-2.7.3/sbin/start-dfs.sh

- Starting namenodes on [sht-sgmhadoopnn-01]

- sht-sgmhadoopnn-01: starting namenode, logging to /usr/local/hadoop-2.7.3/logs/hadoop-hduser-namenode-sht-sgmhadoopnn-01.out

- sht-sgmhadoopdn-: starting datanode, logging to /usr/local/hadoop-2.7.3/logs/hadoop-hduser-datanode-sht-sgmhadoopdn-.out

- sht-sgmhadoopdn-: starting datanode, logging to /usr/local/hadoop-2.7.3/logs/hadoop-hduser-datanode-sht-sgmhadoopdn-.out

- sht-sgmhadoopdn-: starting datanode, logging to /usr/local/hadoop-2.7.3/logs/hadoop-hduser-datanode-sht-sgmhadoopdn-.out

- Starting secondary namenodes [sht-sgmhadoopnn-01]

- sht-sgmhadoopnn-01: starting secondarynamenode, logging to /usr/local/hadoop-2.7.3/logs/hadoop-hduser-secondarynamenode-sht-sgmhadoopnn-01.out

5.2 Start YARN

- $ /usr/local/hadoop-2.7.3/sbin/start-yarn.sh

- starting yarn daemons

- starting resourcemanager, logging to /usr/local/hadoop-2.7.3/logs/yarn-hduser-resourcemanager-sht-sgmhadoopnn-01.out

- sht-sgmhadoopdn-: starting nodemanager, logging to /usr/local/hadoop-2.7.3/logs/yarn-hduser-nodemanager-sht-sgmhadoopdn-.out

- sht-sgmhadoopdn-: starting nodemanager, logging to /usr/local/hadoop-2.7.3/logs/yarn-hduser-nodemanager-sht-sgmhadoopdn-.out

- sht-sgmhadoopdn-: starting nodemanager, logging to /usr/local/hadoop-2.7.3/logs/yarn-hduser-nodemanager-sht-sgmhadoopdn-.out

5.3 Start the history server

- $ /usr/local/hadoop-2.7.3/sbin/mr-jobhistory-daemon.sh start historyserver

- starting historyserver, logging to /usr/local/hadoop-2.7.3/logs/mapred-hduser-historyserver-sht-sgmhadoopnn-01.out

5.4 Verify the status of each node

sht-sgmhadoopnn-01

- $ jps

- Jps

- JobHistoryServer

- NameNode

- ResourceManager

- SecondaryNameNode

sht-sgmhadoopdn-01

- $ jps

- DataNode

- Jps

- NodeManager

sht-sgmhadoopdn-02

- $ jps

- DataNode

- Jps

- NodeManager

sht-sgmhadoopdn-03

- $ jps

- NodeManager

- DataNode

- Jps
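
Reading four `jps` listings by eye gets tedious as the cluster grows. As a minimal sketch, the comparison against the roles in the environment table can be scripted. `missing_daemons` is a hypothetical helper; it compares the last field of each line, since `jps` prints the process ID followed by the main class name.

```shell
# Print each expected daemon that does not appear in the given jps output.
# The last field of every line is compared exactly, so NameNode does not
# match SecondaryNameNode.
missing_daemons() {
    jps_output="$1"
    shift
    for daemon in "$@"; do
        printf '%s\n' "$jps_output" \
            | awk -v d="$daemon" '$NF == d { found = 1 } END { exit (found ? 0 : 1) }' \
            || echo "$daemon"
    done
}

# On the master:
# missing_daemons "$(jps)" NameNode SecondaryNameNode ResourceManager JobHistoryServer
# On each worker:
# missing_daemons "$(jps)" DataNode NodeManager
```

Empty output means every expected daemon is running on that node.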

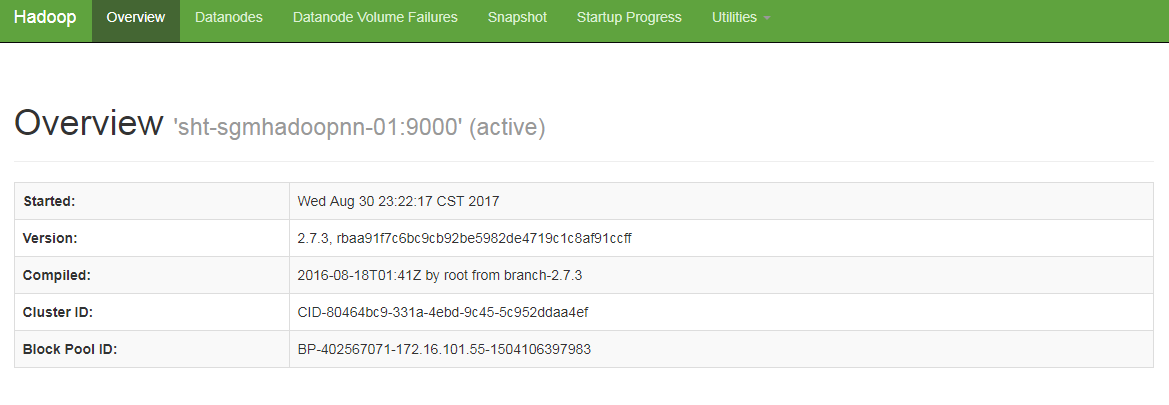

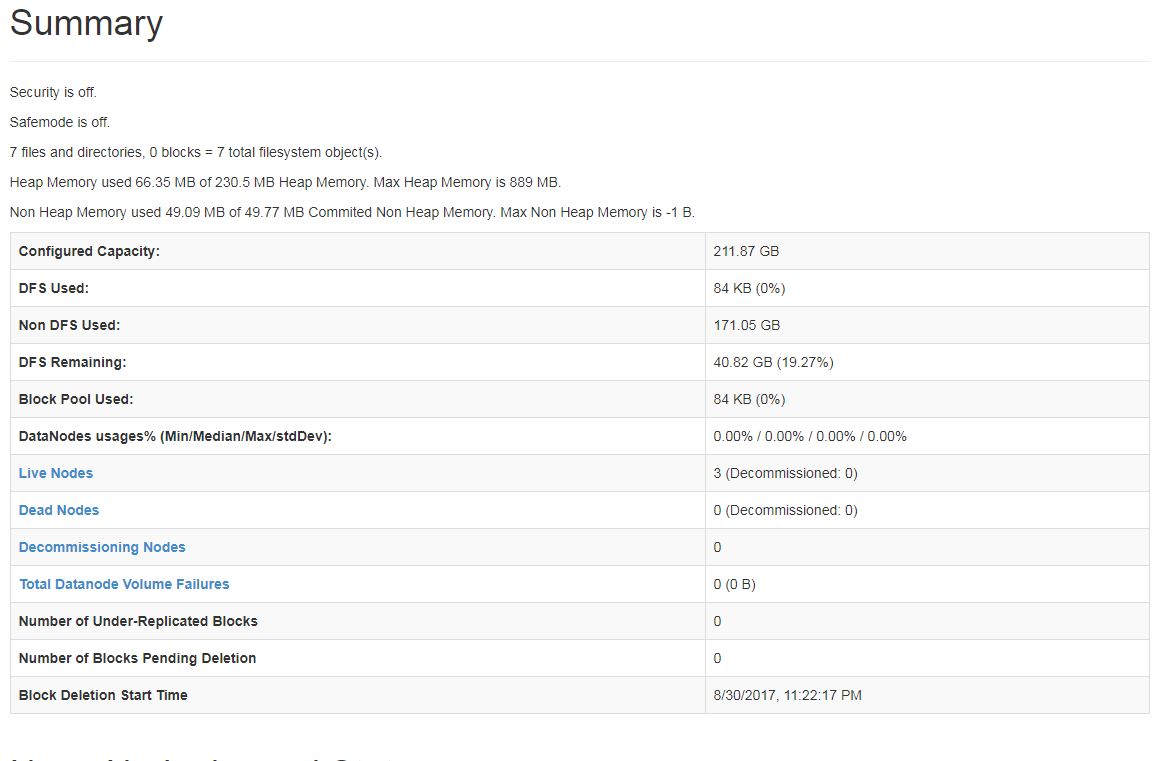

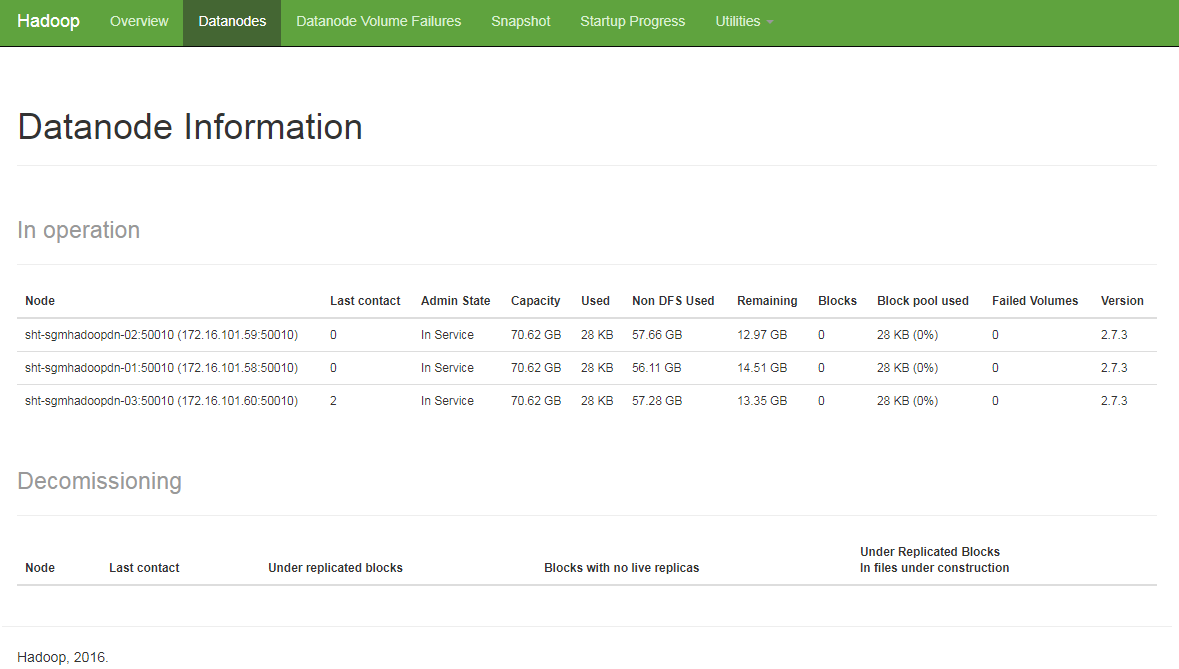

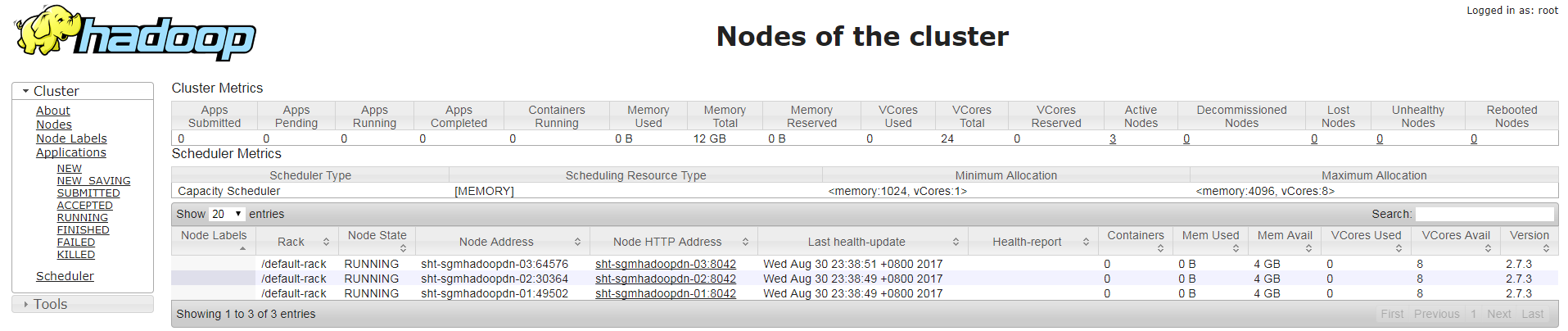

6. Check Hadoop's running status

6.1 HDFS status

http://172.16.101.55:50070

6.2 YARN status

http://172.16.101.55:8088
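
The same pages can be probed from a terminal. The sketch below lists the web UI addresses on the master, using the ports set in the configuration files above, and prints the HTTP status code that `curl` receives from each. `ui_urls` and `http_status` are names made up for this example; any HTTP response suggests the daemon's web server is listening.

```shell
# Print the web UI URLs for the daemons that run on the master host.
ui_urls() {
    host="$1"
    echo "http://$host:50070"   # NameNode (dfs.namenode.http-address)
    echo "http://$host:8088"    # ResourceManager (yarn.resourcemanager.webapp.address)
    echo "http://$host:19888"   # JobHistoryServer (mapreduce.jobhistory.webapp.address)
}

# Print the HTTP status code returned by a URL.
http_status() {
    curl -s -o /dev/null --max-time 5 -w '%{http_code}' "$1"
}

# Example:
# for url in $(ui_urls 172.16.101.55); do
#     echo "$url $(http_status "$url")"
# done
```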
