Hadoop Deployment: Pseudo-Distributed Mode


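Author: 尹正杰 (Yin Zhengjie)

Copyright notice: this is an original work. Reposting is not permitted, and violations will be pursued legally.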

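I. Download the JDK and Hadoop packages

JDK: http://www.oracle.com/technetwork/java/javase/downloads/jdk8-downloads-2133151.html

Hadoop: http://hadoop.apache.org/releases.html

Note: Hadoop does not ship an official Windows build, but the pseudo-distributed setup that runs on Linux can be ported to Windows by compiling Hadoop with Visual Studio (the 2015 version is recommended, and many tutorials are available online). Using that toolchain is outside the scope of this post.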

II. Install the Java environment

1>. Create the directory for unpacked software and give ownership of it to the regular user

[yinzhengjie@yinzhengjie ~]$ ll

total

-rw-rw-r--. yinzhengjie yinzhengjie Aug hadoop-2.7.3.tar.gz

-rw-rw-r--. yinzhengjie yinzhengjie May jdk-8u131-linux-x64.tar.gz

[yinzhengjie@yinzhengjie ~]$ sudo mkdir /soft

[sudo] password for yinzhengjie:

[yinzhengjie@yinzhengjie ~]$ sudo chown yinzhengjie:yinzhengjie /soft/

[yinzhengjie@yinzhengjie ~]$ ll /soft/ -d

drwxr-xr-x. yinzhengjie yinzhengjie May : /soft/

[yinzhengjie@yinzhengjie ~]$

2>. Unpack the JDK and create a symbolic link to it

[yinzhengjie@yinzhengjie ~]$ tar zxf jdk-8u131-linux-x64.tar.gz -C /soft/

[yinzhengjie@yinzhengjie ~]$ cd /soft/

[yinzhengjie@yinzhengjie soft]$ ll

total

drwxr-xr-x. yinzhengjie yinzhengjie Mar jdk1.8.0_131

[yinzhengjie@yinzhengjie soft]$ ln -s jdk1.8.0_131/ jdk

[yinzhengjie@yinzhengjie soft]$ ll

total

lrwxrwxrwx. yinzhengjie yinzhengjie May : jdk -> jdk1.8.0_131/

drwxr-xr-x. yinzhengjie yinzhengjie Mar jdk1.8.0_131

[yinzhengjie@yinzhengjie soft]$

3>. Set the Java environment variables

[yinzhengjie@yinzhengjie soft]$ sudo vi /etc/profile

[yinzhengjie@yinzhengjie soft]$ tail -3 /etc/profile

#Add by yinzhengjie

JAVA_HOME=/soft/jdk/

PATH=$PATH:$JAVA_HOME/bin

[yinzhengjie@yinzhengjie soft]$ . /etc/profile

[yinzhengjie@yinzhengjie soft]$

[yinzhengjie@yinzhengjie soft]$ java -version

java version "1.8.0_131"

Java(TM) SE Runtime Environment (build 1.8.0_131-b11)

Java HotSpot(TM) 64-Bit Server VM (build 25.131-b11, mixed mode)

[yinzhengjie@yinzhengjie soft]$
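The variables above are assigned without `export`. The `java` command is still found because `PATH` is already an exported variable, but child processes will not see `JAVA_HOME` itself unless it is exported. A minimal sketch of exporting both and checking the result, assuming a drop-in file (the file name `java-demo.sh` and the scratch directory are hypothetical stand-ins for something like `/etc/profile.d/`):

```shell
# Sketch: write an exported JAVA_HOME/PATH snippet to a scratch file and source it.
# The scratch directory stands in for /etc/profile.d/ (an assumption, not from the post).
demo_dir="$(mktemp -d)"
cat > "$demo_dir/java-demo.sh" <<'EOF'
# JDK symlink created in the previous step
export JAVA_HOME=/soft/jdk
export PATH="$PATH:$JAVA_HOME/bin"
EOF

# Load the snippet into the current shell, as `. /etc/profile` does in the post.
. "$demo_dir/java-demo.sh"

# A child process only sees variables that were exported.
child_view="$(sh -c 'printf "%s" "$JAVA_HOME"')"
echo "JAVA_HOME seen by a child process: $child_view"
```

Exporting matters later on, because the Hadoop start scripts run as child processes of the login shell.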

III. Install Hadoop

1>. Unpack Hadoop and create a symbolic link to it

[yinzhengjie@yinzhengjie ~]$ ll

total

-rw-rw-r--. yinzhengjie yinzhengjie Aug hadoop-2.7.3.tar.gz

-rw-rw-r--. yinzhengjie yinzhengjie May jdk-8u131-linux-x64.tar.gz

[yinzhengjie@yinzhengjie ~]$ tar zxf hadoop-2.7.3.tar.gz -C /soft/

[yinzhengjie@yinzhengjie ~]$ ln -s /soft/hadoop-2.7.3/ /soft/hadoop

[yinzhengjie@yinzhengjie ~]$ ll /soft/

total

lrwxrwxrwx. yinzhengjie yinzhengjie May : hadoop -> /soft/hadoop-2.7.3/

drwxr-xr-x. yinzhengjie yinzhengjie Aug hadoop-2.7.3

lrwxrwxrwx. yinzhengjie yinzhengjie May : jdk -> jdk1.8.0_131/

drwxr-xr-x. yinzhengjie yinzhengjie Mar jdk1.8.0_131

[yinzhengjie@yinzhengjie ~]$

2>. Set the Hadoop environment variables

[yinzhengjie@yinzhengjie ~]$ sudo vi /etc/profile

[sudo] password for yinzhengjie:

[yinzhengjie@yinzhengjie ~]$ tail -6 /etc/profile

#Add by yinzhengjie

JAVA_HOME=/soft/jdk/

PATH=$PATH:$JAVA_HOME/bin

#Add HADOOP_HOME

HADOOP_HOME=/soft/hadoop/

PATH=$PATH:$HADOOP_HOME/bin:$HADOOP_HOME/sbin

[yinzhengjie@yinzhengjie ~]$

[yinzhengjie@yinzhengjie ~]$ source /etc/profile

[yinzhengjie@yinzhengjie ~]$ grep JAVA_HOME /soft/hadoop/etc/hadoop/hadoop-env.sh | grep -v ^#

export JAVA_HOME=/soft/jdk/

[yinzhengjie@yinzhengjie ~]$
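The `grep` above shows the resulting setting, but not how `hadoop-env.sh` was edited. One way to make the change non-interactively is sketched here against a scratch copy. The sample file content and the temporary path are assumptions; on a real install the file would be `/soft/hadoop/etc/hadoop/hadoop-env.sh`.

```shell
# Sketch: point JAVA_HOME in hadoop-env.sh at the JDK symlink.
# Works on a scratch copy so it can be tried without a Hadoop install.
env_file="$(mktemp)"
cat > "$env_file" <<'EOF'
# The java implementation to use.
export JAVA_HOME=${JAVA_HOME}
EOF

# Rewrite the uncommented JAVA_HOME line; write to a new file, then replace the original.
sed 's|^export JAVA_HOME=.*|export JAVA_HOME=/soft/jdk/|' "$env_file" > "$env_file.new"
mv "$env_file.new" "$env_file"

# Same check as in the post: show the active (uncommented) setting.
grep JAVA_HOME "$env_file" | grep -v '^#'
```

Setting the path explicitly in `hadoop-env.sh` means the daemons find the JDK even when they are launched over SSH, where `/etc/profile` may not have been read.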

3>. Verify the installation (note: the current user needs write permission on the target directory. With no HDFS configured yet, Hadoop runs in local mode, which needs no running services and works directly on the Linux file system, so a regular user who tries to put a file straight into / will get an exception.)

[yinzhengjie@yinzhengjie ~]$ ll

total

-rw-rw-r--. yinzhengjie yinzhengjie Aug hadoop-2.7.3.tar.gz

-rw-rw-r--. yinzhengjie yinzhengjie May jdk-8u131-linux-x64.tar.gz

-rw-r--r--. yinzhengjie yinzhengjie May : test

[yinzhengjie@yinzhengjie ~]$ rm -rf test

[yinzhengjie@yinzhengjie ~]$

[yinzhengjie@yinzhengjie ~]$

[yinzhengjie@yinzhengjie ~]$ ll

total

-rw-rw-r--. yinzhengjie yinzhengjie Aug hadoop-2.7.3.tar.gz

-rw-rw-r--. yinzhengjie yinzhengjie May jdk-8u131-linux-x64.tar.gz

[yinzhengjie@yinzhengjie ~]$

[yinzhengjie@yinzhengjie ~]$ hdfs dfs -put hadoop-2.7.3.tar.gz /home/yinzhengjie/test

[yinzhengjie@yinzhengjie ~]$ tar zxf test

[yinzhengjie@yinzhengjie ~]$ ll

total

drwxr-xr-x. yinzhengjie yinzhengjie Aug hadoop-2.7.3

-rw-rw-r--. yinzhengjie yinzhengjie Aug hadoop-2.7.3.tar.gz

-rw-rw-r--. yinzhengjie yinzhengjie May jdk-8u131-linux-x64.tar.gz

-rw-r--r--. yinzhengjie yinzhengjie May : test

[yinzhengjie@yinzhengjie ~]$

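IV. Deploy Hadoop in pseudo-distributed mode

1>. Keep the configurations for all three modes side by side by making three copies of the original configuration directory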

[yinzhengjie@yinzhengjie ~]$ cd /soft/hadoop/etc/

[yinzhengjie@yinzhengjie etc]$ cp -r hadoop local

[yinzhengjie@yinzhengjie etc]$ cp -r hadoop pseudo

[yinzhengjie@yinzhengjie etc]$ cp -r hadoop full

[yinzhengjie@yinzhengjie etc]$ ll /soft/hadoop/etc/

total

drwxr-xr-x. yinzhengjie yinzhengjie May : full

drwxr-xr-x. yinzhengjie yinzhengjie May : hadoop

drwxr-xr-x. yinzhengjie yinzhengjie May : local

drwxr-xr-x. yinzhengjie yinzhengjie May : pseudo

[yinzhengjie@yinzhengjie etc]$

2>. Delete the hadoop directory and create a symbolic link named hadoop that points to the pseudo directory

[yinzhengjie@yinzhengjie etc]$ ll /soft/hadoop/etc/

total

drwxr-xr-x. yinzhengjie yinzhengjie May : full

drwxr-xr-x. yinzhengjie yinzhengjie May : hadoop

drwxr-xr-x. yinzhengjie yinzhengjie May : local

drwxr-xr-x. yinzhengjie yinzhengjie May : pseudo

[yinzhengjie@yinzhengjie etc]$

[yinzhengjie@yinzhengjie etc]$

[yinzhengjie@yinzhengjie etc]$ rm -rf hadoop/

[yinzhengjie@yinzhengjie etc]$ ln -s pseudo/ hadoop

[yinzhengjie@yinzhengjie etc]$ ll

total

drwxr-xr-x. yinzhengjie yinzhengjie May : full

lrwxrwxrwx. yinzhengjie yinzhengjie May : hadoop -> pseudo/

drwxr-xr-x. yinzhengjie yinzhengjie May : local

drwxr-xr-x. yinzhengjie yinzhengjie May : pseudo

[yinzhengjie@yinzhengjie etc]$
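With the three copies in place, switching modes later amounts to repointing the `hadoop` link. A minimal sketch, assuming a scratch directory in place of `/soft/hadoop/etc` (the helper name `use_mode` is hypothetical, not part of Hadoop):

```shell
# Sketch: switch the active configuration by repointing the `hadoop` symlink.
# A scratch directory stands in for /soft/hadoop/etc (assumption for the demo).
etc_dir="$(mktemp -d)"
mkdir "$etc_dir/local" "$etc_dir/pseudo" "$etc_dir/full"

# use_mode is a hypothetical helper name, not part of Hadoop.
use_mode() {
    mode="$1"
    if [ ! -d "$etc_dir/$mode" ]; then
        echo "unknown mode: $mode" >&2
        return 1
    fi
    # -s symbolic, -f replace an existing link, -n treat the old link as a file
    # instead of following it into its target directory
    ln -sfn "$mode" "$etc_dir/hadoop"
}

use_mode pseudo
readlink "$etc_dir/hadoop"
use_mode full
readlink "$etc_dir/hadoop"
```

The daemons should be stopped before the link is switched. Hadoop 2.x scripts also read the `HADOOP_CONF_DIR` environment variable, which may be an alternative to a symlink.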

3>. Edit the Hadoop configuration files

[yinzhengjie@yinzhengjie etc]$ cd /soft/hadoop/etc/hadoop/

[yinzhengjie@yinzhengjie hadoop]$

[yinzhengjie@yinzhengjie hadoop]$ more core-site.xml

<?xml version="1.0"?>

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://localhost/</value>

</property>

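</configuration>

<!-- core-site.xml: defines system-level parameters such as the HDFS URL, Hadoop's temporary directory, and the configuration used for rack awareness. Parameters defined here override the defaults in core-default.xml. -->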

[yinzhengjie@yinzhengjie hadoop]$ more hdfs-site.xml

<?xml version="1.0"?>

<configuration>

<property>

<name>dfs.replication</name>

<value>1</value>

</property>

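</configuration>

<!-- hdfs-site.xml: HDFS settings such as the number of file replicas, the block size, and whether permission checking is enforced. Parameters defined here override the defaults in hdfs-default.xml. -->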

[yinzhengjie@yinzhengjie hadoop]$

[yinzhengjie@yinzhengjie hadoop]$ more mapred-site.xml

<?xml version="1.0"?>

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

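</configuration>

<!-- mapred-site.xml: MapReduce settings such as the default number of reduce tasks and the default upper and lower memory limits for tasks. Parameters defined here override the defaults in mapred-default.xml. -->

[yinzhengjie@yinzhengjie hadoop]$ more yarn-site.xml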

<?xml version="1.0"?>

<configuration>

<property>

<name>yarn.resourcemanager.hostname</name>

<value>localhost</value>

</property>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

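</configuration>

<!-- yarn-site.xml: YARN settings such as the ResourceManager host and the NodeManager auxiliary services; mainly used for scheduler-level parameters. -->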

[yinzhengjie@yinzhengjie hadoop]$
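After editing the four files it helps to confirm that a property really carries the intended value. The sketch below writes a minimal `core-site.xml` to a scratch directory and reads one property back. The helper `get_prop` is hypothetical and relies on one tag per line, as in the files shown above; it is not a real XML parser.

```shell
# Sketch: write a minimal core-site.xml and read one property back.
# conf_dir is a scratch directory standing in for /soft/hadoop/etc/hadoop.
conf_dir="$(mktemp -d)"
cat > "$conf_dir/core-site.xml" <<'EOF'
<?xml version="1.0"?>
<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://localhost/</value>
  </property>
</configuration>
EOF

# get_prop FILE KEY: print the <value> that follows <name>KEY</name>.
# Hypothetical helper; assumes one tag per line.
get_prop() {
    awk -v key="$2" '
        index($0, "<name>" key "</name>") { found = 1; next }
        found && /<value>/ {
            sub(/.*<value>/, ""); sub(/<\/value>.*/, ""); print; exit
        }
    ' "$1"
}

get_prop "$conf_dir/core-site.xml" fs.defaultFS
```

On a machine with Hadoop installed, `hdfs getconf -confKey fs.defaultFS` should report the effective value, including defaults that the site files do not override.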

V. Configure passwordless SSH login

1>. Generate a public/private key pair on the local machine

[yinzhengjie@yinzhengjie ~]$ ssh-keygen -t rsa -P '' -f ~/.ssh/id_rsa

Generating public/private rsa key pair.

Created directory '/home/yinzhengjie/.ssh'.

Your identification has been saved in /home/yinzhengjie/.ssh/id_rsa.

Your public key has been saved in /home/yinzhengjie/.ssh/id_rsa.pub.

The key fingerprint is:

:f0:d6::d0::4d:4f:9c:c2:ae::8b:af:cf: yinzhengjie@yinzhengjie

The key's randomart image is:

+--[ RSA ]----+

| .. .o..o|

| ...oo.+.|

| . oo. . .|

| o . o . |

| + S o . |

| . + . o |

| . . |

| .o E |

| .+o. |

+-----------------+

[yinzhengjie@yinzhengjie ~]$

2>. Use ssh-copy-id to install the public key (the current user's password is required)

[yinzhengjie@yinzhengjie ~]$ ssh-copy-id yinzhengjie@localhost

/usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed

/usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys

yinzhengjie@localhost's password:

Number of key(s) added: 1

Now try logging into the machine, with: "ssh 'yinzhengjie@localhost'"

and check to make sure that only the key(s) you wanted were added.

[yinzhengjie@yinzhengjie ~]$

3>. Test the SSH connection

[yinzhengjie@yinzhengjie ~]$ cd /soft/

[yinzhengjie@yinzhengjie soft]$ ssh yinzhengjie@localhost

Last login: Fri May :: from localhost

[yinzhengjie@yinzhengjie ~]$ who

yinzhengjie pts/ -- : (localhost)

yinzhengjie pts/ -- : (172.30.100.1)

[yinzhengjie@yinzhengjie ~]$ exit

logout

Connection to localhost closed.

[yinzhengjie@yinzhengjie soft]$ who

yinzhengjie pts/ -- : (172.30.100.1)

[yinzhengjie@yinzhengjie soft]$
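The interactive login above confirms that the key works, but the Hadoop start scripts need SSH to succeed with no prompt at all. A minimal sketch of a non-interactive check (the helper name `check_passwordless` is hypothetical):

```shell
# Sketch: check whether key-based login to a host works without any prompt.
# check_passwordless is a hypothetical helper name.
check_passwordless() {
    host="$1"
    if ! command -v ssh >/dev/null 2>&1; then
        echo "ssh client not installed"
        return 2
    fi
    # BatchMode=yes makes ssh fail instead of asking for a password,
    # a passphrase, or a host key confirmation.
    if ssh -o BatchMode=yes -o ConnectTimeout=5 "$host" true </dev/null 2>/dev/null; then
        echo "passwordless login to $host works"
    else
        echo "passwordless login to $host is not ready (key, host key, or sshd issue)"
        return 1
    fi
}

# `|| true` keeps the script going when the check reports a failure.
result="$(check_passwordless localhost)" || true
echo "$result"
```

If the check fails even though the key was copied, a common cause is overly permissive modes on `~/.ssh` or `~/.ssh/authorized_keys`, which `sshd` rejects by default.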

VI. Format the HDFS file system

[yinzhengjie@yinzhengjie ~]$ hdfs namenode -format

// :: INFO namenode.NameNode: STARTUP_MSG:

/************************************************************

STARTUP_MSG: Starting NameNode

STARTUP_MSG: host = yinzhengjie/211.98.71.195

STARTUP_MSG: args = [-format]

STARTUP_MSG: version = 2.7.3

STARTUP_MSG: classpath = /soft/hadoop-2.7.3/etc/hadoop:/soft/hadoop-2.7.3/share/hadoop/common/lib/jaxb-impl-2.2.3-1.jar:(remaining jar entries under /soft/hadoop-2.7.3/share/hadoop omitted for brevity):/contrib/capacity-scheduler/*.jar

STARTUP_MSG: build = https://git-wip-us.apache.org/repos/asf/hadoop.git -r baa91f7c6bc9cb92be5982de4719c1c8af91ccff; compiled by 'root' on 2016-08-18T01:41Z

STARTUP_MSG: java = 1.8.0_131

************************************************************/

// :: INFO namenode.NameNode: registered UNIX signal handlers for [TERM, HUP, INT]

// :: INFO namenode.NameNode: createNameNode [-format]

Formatting using clusterid: CID-d8968c48-7cec-4e7f--ac533756fd3f

// :: INFO namenode.FSNamesystem: No KeyProvider found.

// :: INFO namenode.FSNamesystem: fsLock is fair:true

// :: INFO blockmanagement.DatanodeManager: dfs.block.invalidate.limit=

// :: INFO blockmanagement.DatanodeManager: dfs.namenode.datanode.registration.ip-hostname-check=true

// :: INFO blockmanagement.BlockManager: dfs.namenode.startup.delay.block.deletion.sec is set to :::00.000

// :: INFO blockmanagement.BlockManager: The block deletion will start around May ::

// :: INFO util.GSet: Computing capacity for map BlocksMap

// :: INFO util.GSet: VM type = -bit

// :: INFO util.GSet: 2.0% max memory MB = 17.8 MB

// :: INFO util.GSet: capacity = ^ = entries

// :: INFO blockmanagement.BlockManager: dfs.block.access.token.enable=false

// :: INFO blockmanagement.BlockManager: defaultReplication =

// :: INFO blockmanagement.BlockManager: maxReplication =

// :: INFO blockmanagement.BlockManager: minReplication =

// :: INFO blockmanagement.BlockManager: maxReplicationStreams =

// :: INFO blockmanagement.BlockManager: replicationRecheckInterval =

// :: INFO blockmanagement.BlockManager: encryptDataTransfer = false

// :: INFO blockmanagement.BlockManager: maxNumBlocksToLog =

// :: INFO namenode.FSNamesystem: fsOwner = yinzhengjie (auth:SIMPLE)

// :: INFO namenode.FSNamesystem: supergroup = supergroup

// :: INFO namenode.FSNamesystem: isPermissionEnabled = true

// :: INFO namenode.FSNamesystem: HA Enabled: false

// :: INFO namenode.FSNamesystem: Append Enabled: true

// :: INFO util.GSet: Computing capacity for map INodeMap

// :: INFO util.GSet: VM type = -bit

// :: INFO util.GSet: 1.0% max memory MB = 8.9 MB

// :: INFO util.GSet: capacity = ^ = entries

// :: INFO namenode.FSDirectory: ACLs enabled? false

// :: INFO namenode.FSDirectory: XAttrs enabled? true

// :: INFO namenode.FSDirectory: Maximum size of an xattr:

// :: INFO namenode.NameNode: Caching file names occuring more than times

// :: INFO util.GSet: Computing capacity for map cachedBlocks

// :: INFO util.GSet: VM type = -bit

// :: INFO util.GSet: 0.25% max memory MB = 2.2 MB

// :: INFO util.GSet: capacity = ^ = entries

// :: INFO namenode.FSNamesystem: dfs.namenode.safemode.threshold-pct = 0.9990000128746033

// :: INFO namenode.FSNamesystem: dfs.namenode.safemode.min.datanodes =

// :: INFO namenode.FSNamesystem: dfs.namenode.safemode.extension =

// :: INFO metrics.TopMetrics: NNTop conf: dfs.namenode.top.window.num.buckets =

// :: INFO metrics.TopMetrics: NNTop conf: dfs.namenode.top.num.users =

// :: INFO metrics.TopMetrics: NNTop conf: dfs.namenode.top.windows.minutes = ,,

// :: INFO namenode.FSNamesystem: Retry cache on namenode is enabled

// :: INFO namenode.FSNamesystem: Retry cache will use 0.03 of total heap and retry cache entry expiry time is millis

// :: INFO util.GSet: Computing capacity for map NameNodeRetryCache

// :: INFO util.GSet: VM type = -bit

// :: INFO util.GSet: 0.029999999329447746% max memory MB = 273.1 KB

// :: INFO util.GSet: capacity = ^ = entries

// :: INFO namenode.FSImage: Allocated new BlockPoolId: BP--211.98.71.195-

// :: INFO common.Storage: Storage directory /tmp/hadoop-yinzhengjie/dfs/name has been successfully formatted.

// :: INFO namenode.FSImageFormatProtobuf: Saving image file /tmp/hadoop-yinzhengjie/dfs/name/current/fsimage.ckpt_0000000000000000000 using no compression

// :: INFO namenode.FSImageFormatProtobuf: Image file /tmp/hadoop-yinzhengjie/dfs/name/current/fsimage.ckpt_0000000000000000000 of size bytes saved in seconds.

// :: INFO namenode.NNStorageRetentionManager: Going to retain images with txid >=

// :: INFO util.ExitUtil: Exiting with status

// :: INFO namenode.NameNode: SHUTDOWN_MSG:

/************************************************************

SHUTDOWN_MSG: Shutting down NameNode at yinzhengjie/211.98.71.195

************************************************************/

[yinzhengjie@yinzhengjie ~]$ echo $?

0

[yinzhengjie@yinzhengjie ~]$
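Formatting is a one-time step: running `hdfs namenode -format` again on a directory that is already in use discards the existing HDFS metadata. The log places the NameNode storage under `/tmp/hadoop-yinzhengjie/dfs/name`, so a guard along the following lines may be useful before formatting. It is a sketch on a scratch directory, and the helper name `needs_format` is hypothetical.

```shell
# Sketch: decide whether the NameNode storage directory still needs formatting.
# needs_format is a hypothetical helper; a formatted directory contains current/VERSION.
needs_format() {
    [ ! -f "$1/current/VERSION" ]
}

# Demo on a scratch directory; in the post the real path is /tmp/hadoop-yinzhengjie/dfs/name.
name_dir="$(mktemp -d)/dfs/name"
mkdir -p "$name_dir"

if needs_format "$name_dir"; then
    echo "not formatted yet: run 'hdfs namenode -format' once"
fi

# Simulate what a successful format leaves behind, then check again.
mkdir -p "$name_dir/current"
: > "$name_dir/current/VERSION"
if ! needs_format "$name_dir"; then
    echo "already formatted: formatting again would erase the HDFS metadata"
fi
```

Because the default storage location is under `/tmp`, which many systems clean on reboot, setting `hadoop.tmp.dir` in `core-site.xml` to a persistent directory is worth considering for anything beyond a quick test.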

VII. Start the Hadoop daemons (on first start you are asked interactively to confirm a host key; answer yes)

[yinzhengjie@yinzhengjie ~]$ start-all.sh

This script is Deprecated. Instead use start-dfs.sh and start-yarn.sh

Starting namenodes on [localhost]

localhost: starting namenode, logging to /soft/hadoop-2.7.3/logs/hadoop-yinzhengjie-namenode-yinzhengjie.out

localhost: starting datanode, logging to /soft/hadoop-2.7.3/logs/hadoop-yinzhengjie-datanode-yinzhengjie.out

Starting secondary namenodes [0.0.0.0]

The authenticity of host '0.0.0.0 (0.0.0.0)' can't be established.

ECDSA key fingerprint is 0e::8d::d8:2f::::::6a::e4:f8:.

Are you sure you want to continue connecting (yes/no)? yes

0.0.0.0: Warning: Permanently added '0.0.0.0' (ECDSA) to the list of known hosts.

0.0.0.0: starting secondarynamenode, logging to /soft/hadoop-2.7.3/logs/hadoop-yinzhengjie-secondarynamenode-yinzhengjie.out

starting yarn daemons

starting resourcemanager, logging to /soft/hadoop-2.7.3/logs/yarn-yinzhengjie-resourcemanager-yinzhengjie.out

localhost: starting nodemanager, logging to /soft/hadoop-2.7.3/logs/yarn-yinzhengjie-nodemanager-yinzhengjie.out

[yinzhengjie@yinzhengjie ~]$

[yinzhengjie@yinzhengjie ~]$ echo $?

0

[yinzhengjie@yinzhengjie ~]$
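The first line of the output notes that `start-all.sh` is deprecated in favour of `start-dfs.sh` and `start-yarn.sh`. A minimal sketch of starting the two layers separately follows. The `run` and `start_hadoop` helpers and the `DRY_RUN` switch are hypothetical, added so the sketch can be tried on a machine without Hadoop.

```shell
# Sketch: start HDFS and YARN separately, as the deprecation notice suggests.
# DRY_RUN=1 only prints the commands; change it to 0 on a real install.
DRY_RUN=1

run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "would run: $*"
    else
        "$@"
    fi
}

start_hadoop() {
    # HDFS first: NameNode, DataNode, SecondaryNameNode
    run start-dfs.sh || return 1
    # then YARN: ResourceManager, NodeManager
    run start-yarn.sh
}

start_output="$(start_hadoop)"
echo "$start_output"
```

Starting HDFS before YARN mirrors the order `start-all.sh` uses in the transcript above; the matching stop scripts are `stop-yarn.sh` and `stop-dfs.sh`.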

VIII. Verify the installation

1>. Check that all server-side daemons started correctly

[yinzhengjie@yinzhengjie ~]$ jps

SecondaryNameNode

ResourceManager

DataNode

NodeManager

NameNode

Jps

[yinzhengjie@yinzhengjie ~]$
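Reading the `jps` list by eye works for a single node, but the check can also be scripted. The sketch below reports which of the five daemons listed above are absent. The helper name `missing_daemons` is hypothetical, and the sample input uses made-up PIDs with DataNode deliberately left out.

```shell
# Sketch: report which of the five expected daemons are absent from `jps` output.
# missing_daemons is a hypothetical helper; it reads `jps` output on stdin.
missing_daemons() {
    jps_out="$(cat)"
    for daemon in NameNode DataNode SecondaryNameNode ResourceManager NodeManager; do
        # Compare the whole second field, so "NameNode" does not match
        # "SecondaryNameNode".
        if ! printf '%s\n' "$jps_out" | awk -v d="$daemon" '$2 == d { ok = 1 } END { exit !ok }'; then
            echo "$daemon"
        fi
    done
}

# Sample input with made-up PIDs and DataNode deliberately left out.
sample='2305 SecondaryNameNode
2450 ResourceManager
2738 NodeManager
2030 NameNode
3050 Jps'

missing="$(printf '%s\n' "$sample" | missing_daemons)"
echo "missing: ${missing:-none}"
```

On the server itself the helper would be fed real output with `jps | missing_daemons`; when a daemon is missing, its log file under the Hadoop `logs` directory is the first place to look.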

2>. Stop the firewall on the server

[yinzhengjie@yinzhengjie ~]$ systemctl status firewalld

● firewalld.service - firewalld - dynamic firewall daemon

Loaded: loaded (/usr/lib/systemd/system/firewalld.service; enabled; vendor preset: enabled)

Active: active (running) since Fri -- :: PDT; 58min ago

Main PID: (firewalld)

CGroup: /system.slice/firewalld.service

└─ /usr/bin/python -Es /usr/sbin/firewalld --nofork --nopid

[yinzhengjie@yinzhengjie ~]$ systemctl stop firewalld

==== AUTHENTICATING FOR org.freedesktop.systemd1.manage-units ===

Authentication is required to manage system services or units.

Authenticating as: root

Password:

==== AUTHENTICATION COMPLETE ===

[yinzhengjie@yinzhengjie ~]$ systemctl disable firewalld

==== AUTHENTICATING FOR org.freedesktop.systemd1.manage-unit-files ===

Authentication is required to manage system service or unit files.

Authenticating as: root

Password:

==== AUTHENTICATION COMPLETE ===

[yinzhengjie@yinzhengjie ~]$ systemctl status firewalld

● firewalld.service - firewalld - dynamic firewall daemon

Loaded: loaded (/usr/lib/systemd/system/firewalld.service; disabled; vendor preset: enabled)

Active: inactive (dead)

[yinzhengjie@yinzhengjie ~]$
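Disabling firewalld is the quickest route on a test machine. Where the firewall should stay on, opening only the web UI ports is an alternative. The sketch below just prints the commands rather than running them. The helper name `firewall_commands` is hypothetical, and the ports are the Hadoop 2.7.x defaults (50070 for the NameNode UI, 8088 for the ResourceManager UI), assumed unchanged here.

```shell
# Sketch: open only the web UI ports instead of turning firewalld off.
# 50070 (NameNode UI) and 8088 (ResourceManager UI) are the Hadoop 2.7.x defaults;
# adjust them if the ports were changed in hdfs-site.xml or yarn-site.xml.
# firewall_commands is a hypothetical helper that prints the commands to run.
firewall_commands() {
    for port in "$@"; do
        echo "sudo firewall-cmd --permanent --add-port=${port}/tcp"
    done
    # Permanent rules take effect only after a reload.
    echo "sudo firewall-cmd --reload"
}

fw_plan="$(firewall_commands 50070 8088)"
echo "$fw_plan"
```

Remote HDFS clients would need further ports opened (for example the NameNode RPC port), so this covers browser access to the UIs only.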

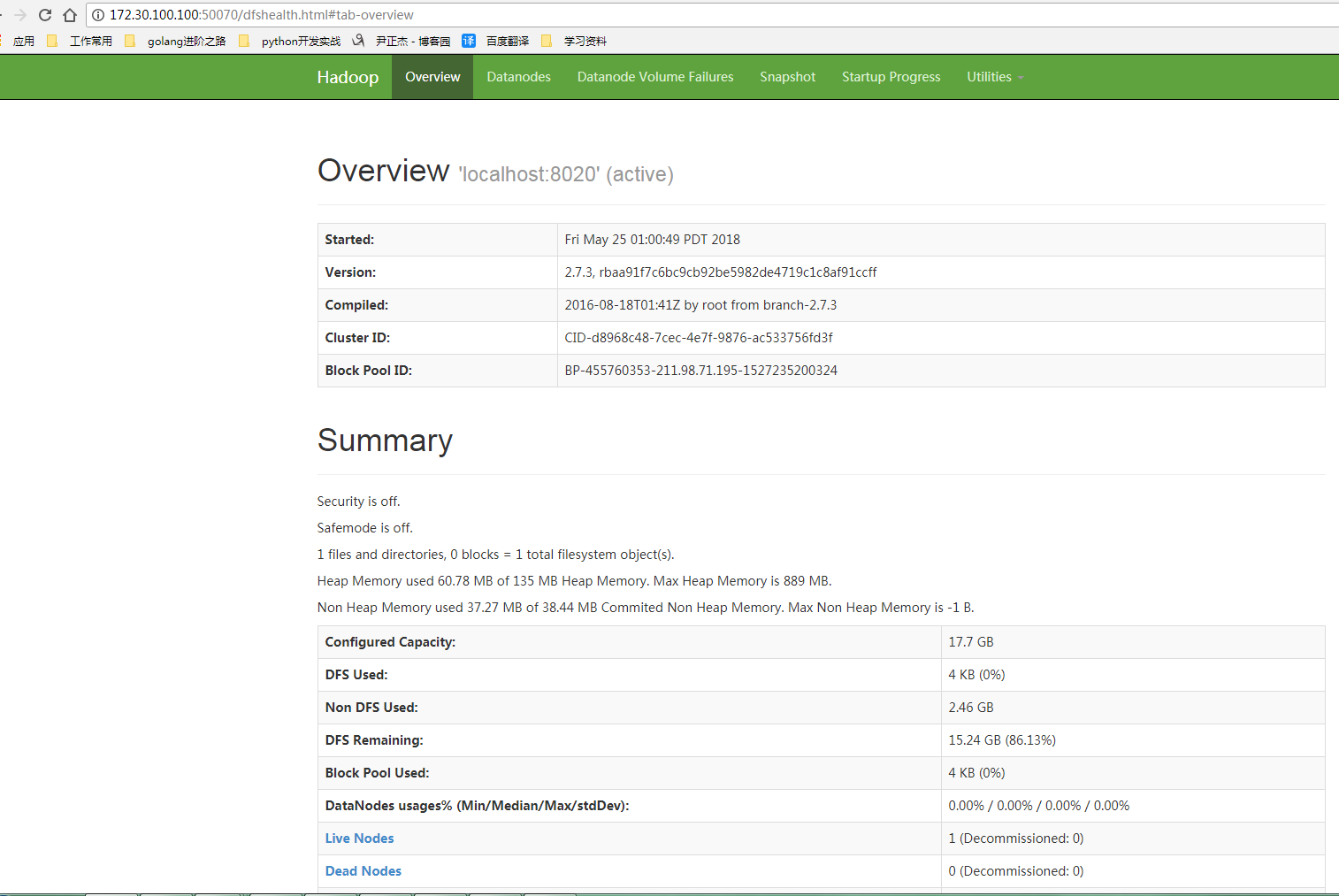

3>. From a client, check that the Hadoop web UI can be reached
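Besides opening the pages in a browser, the UIs can be probed from a shell. This is a minimal sketch, assuming the Hadoop 2.7.x default ports (50070 for the NameNode, 8088 for the ResourceManager). The `probe` helper and the `HADOOP_UI_HOST` variable are hypothetical names; the variable should hold the server's address when the check runs on another machine.

```shell
# Sketch: probe the web UIs from a client machine.
# probe and HADOOP_UI_HOST are hypothetical names; the host defaults to localhost.
HADOOP_UI_HOST="${HADOOP_UI_HOST:-localhost}"

probe() {
    # -s silent, -f treat HTTP errors as failure, --max-time bounds the wait
    if command -v curl >/dev/null 2>&1 && curl -sf --max-time 5 -o /dev/null "$1"; then
        echo "reachable: $1"
    else
        echo "unreachable: $1"
    fi
}

nn_status="$(probe "http://$HADOOP_UI_HOST:50070/")"
rm_status="$(probe "http://$HADOOP_UI_HOST:8088/")"
echo "$nn_status"
echo "$rm_status"
```

An "unreachable" result from a client while the same probe succeeds on the server usually points at the firewall step above or at name resolution on the client.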
