Processing an English Corpus with word2vec Word Vectors

Introduction to word2vec

Official word2vec site: https://code.google.com/p/word2vec/

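- word2vec is an open-source tool from Google. It learns vectors for words from an input corpus and can then compute the distance between any two words.

- It converts each term into a vector. This reduces the processing of text content to vector operations in a vector space, where vector similarity stands in for the semantic similarity between texts.

- The similarity that word2vec reports is the cosine of the angle between two word vectors. Cosine values range from -1 to 1; the larger the value, the more closely related the two words are.

- Word vectors: words represented with a Distributed Representation, often also called a "Word Representation" or "Word Embedding".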
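The bullets above describe similarity as a cosine value. Below is a minimal pure-Python sketch of that computation. The toy vectors are hypothetical, not trained embeddings. Cosine similarity is the dot product divided by the product of the two vector lengths, which is why it ranges from -1 (opposite directions) to 1 (same direction).

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between vectors u and v: dot(u, v) / (|u| * |v|)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


# Toy 3-dimensional vectors (hypothetical, for illustration only)
print(cosine_similarity([1.0, 2.0, 0.0], [2.0, 4.0, 0.0]))   # same direction, about 1.0
print(cosine_similarity([1.0, 0.0, 0.0], [0.0, 1.0, 0.0]))   # orthogonal
print(cosine_similarity([1.0, 0.0, 0.0], [-1.0, 0.0, 0.0]))  # opposite directions
```

For two trained word vectors, gensim's `similarity` returns this same quantity.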

Usage

Running and testing also require the files text8 and questions-words.txt. Corpus download: http://mattmahoney.net/dc/text8.zip
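questions-words.txt holds the analogy test set. Lines beginning with ':' name a section (for example ': capital-common-countries'). Every other line has four words meaning "a is to b as c is to d". The parser below is a minimal sketch; the function name is hypothetical and the sample lines only illustrate the layout.

```python
def parse_analogies(lines):
    """Parse questions-words.txt style lines into (section, a, b, c, d) tuples.

    Lines starting with ':' open a new section; every other non-empty line
    holds four words meaning "a is to b as c is to d".
    """
    section = None
    for line in lines:
        line = line.strip()
        if not line:
            continue
        if line.startswith(":"):
            section = line[1:].strip()
            continue
        a, b, c, d = line.split()
        yield section, a, b, c, d


# Illustrative lines in the questions-words.txt layout
sample = [
    ": capital-common-countries",
    "Athens Greece Baghdad Iraq",
    ": family",
    "boy girl brother sister",
]
for item in parse_analogies(sample):
    print(item)
```

In gensim 4.x, `model.wv.evaluate_word_analogies("questions-words.txt")` runs this evaluation directly. It returns an overall score together with per-section results.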

The text8 corpus is UTF-8 encoded and stored as a single line. Training information for it: training on 85026035 raw words (62529137 effective words) took 197.4s, 316692 effective words/s
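Because text8 is one line of about 17 million words, training code has to cut it into pieces. The sketch below streams a file in fixed-size blocks and emits pseudo-sentences of at most 10,000 words, which matches gensim's default `max_sentence_length` for `Text8Corpus`. The function name is hypothetical, and this is an illustration of the idea rather than gensim's code.

The two checks at the end use numbers from the training log in this article:

- 17,005,207 words cut into 10,000-word chunks give the 1,701 "sentences" reported.
- 5 passes (gensim's default number of epochs) over 17,005,207 words give the 85,026,035 raw words in the "training on" line.

```python
import io
import math


def chunk_words(stream, max_len=10000, read_size=8192):
    """Yield lists of at most max_len words from a (single-line) text stream."""
    sentence = []
    rest = ""
    while True:
        block = stream.read(read_size)
        words = (rest + block).split()
        if block and not block[-1].isspace() and words:
            # The last word may continue in the next block; hold it back.
            rest = words.pop()
        else:
            rest = ""
        for word in words:
            sentence.append(word)
            if len(sentence) == max_len:
                yield sentence
                sentence = []
        if not block:  # end of stream: any held-back word was flushed above
            break
    if sentence:
        yield sentence


demo = io.StringIO("anarchism originated as a term of abuse")
print(list(chunk_words(demo, max_len=3, read_size=5)))

# Cross-checking counts from the training log
print(math.ceil(17005207 / 10000))  # pseudo-sentences in text8
print(5 * 17005207)                  # raw words seen over 5 passes
```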

word2vec parameters explained

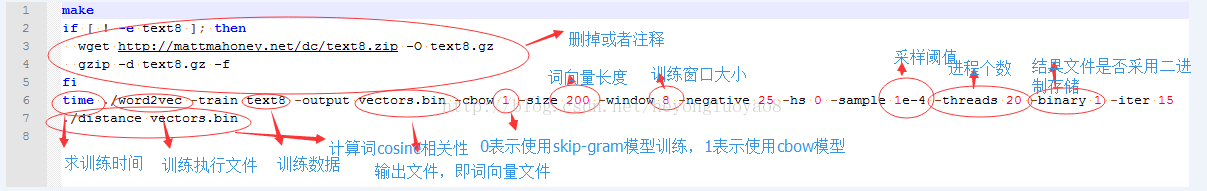

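-train the training data file

-output the output file, i.e. the vector for each word

-cbow whether to use the CBOW model: 0 selects skip-gram, 1 selects CBOW. In current versions of word2vec.c the default is 1 (CBOW). CBOW trains faster, while skip-gram tends to give somewhat better results.

-size the dimensionality of the output word vectors

-window the training window size. For example, 8 means each word considers up to 8 words before it and 8 words after it. The code also draws a random window for each word, so the effective window is at most the value set (default 5).

-negative the number of negative examples used for negative sampling (NEG); 0 disables it. The default is 5, and common values are 3 to 10.

-hs whether to use hierarchical softmax (HS): 0 means no, 1 means yes

-sample the threshold for subsampling. Words that occur more frequently in the training data are more likely to be randomly down-sampled (discarded). The default is 1e-3.

-binary whether to store the output file in binary: 0 means no (plain text, which can be opened and read), 1 means yes, i.e. the vectors.bin storage format

-alpha the starting learning rate (default 0.025 for skip-gram, 0.05 for CBOW)

-min-count the minimum frequency, default 5; words that occur fewer times than this threshold in the corpus are discarded

-classes the number of word clusters; the source code shows that the clustering uses k-means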
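Two of the mechanisms above are easier to see in code: the random shrinking of `-window` and the frequency-based down-sampling controlled by `-sample`. Below is a minimal pure-Python illustration. It assumes the keep-probability formula used in word2vec.c, (sqrt(f / sample) + 1) * sample / f, where f is the word's share of all training words. The function names are hypothetical, and this is not the tool's actual code.

```python
import math
import random


def keep_probability(count, total_words, sample=1e-3):
    """Probability that one occurrence of a word survives subsampling.

    Uses (sqrt(f / sample) + 1) * sample / f with f = count / total_words,
    capped at 1.0 (values >= 1 mean the word is always kept).
    """
    f = count / total_words
    return min(1.0, (math.sqrt(f / sample) + 1) * sample / f)


def context_pairs(words, window=5, rng=random):
    """Yield (center, context) pairs using a randomly shrunk window per position.

    For each center word an effective window b in [1, window] is drawn,
    so nearby words are paired with it more often than distant ones.
    """
    for i, center in enumerate(words):
        b = rng.randint(1, window)  # effective window size for this position
        for j in range(max(0, i - b), min(len(words), i + b + 1)):
            if j != i:
                yield center, words[j]


print(keep_probability(10000, 1_000_000))  # a word that makes up 1% of the corpus
print(list(context_pairs(["a", "b", "c", "d"], window=1)))
```

With sample=1e-3, a word that makes up 1% of the corpus keeps roughly 42% of its occurrences. Words at or below 0.1% are always kept.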

Code:

"""
Purpose: test gensim usage
Date: May 2, 2016 18:00:00
Assumes Python 3 and the gensim 4.x API (vector_size=..., model.wv.*).
"""
import logging

from gensim.models import KeyedVectors, word2vec


def main():
    logging.basicConfig(format='%(asctime)s : %(levelname)s : %(message)s', level=logging.INFO)

    # Load the corpus (text8 is a single line; Text8Corpus splits it into chunks)
    sentences = word2vec.Text8Corpus("data/text8")

    # Train 200-dimensional vectors. gensim's defaults are sg=0 (CBOW, matching
    # "sg=0" in the log below) and window=5; pass sg=1 for skip-gram.
    model = word2vec.Word2Vec(sentences, vector_size=200)

    # Similarity / relatedness of two words
    y1 = model.wv.similarity("woman", "man")
    print("Similarity between woman and man:", y1)
    print("--------\n")

    # Words most related to a given word
    y2 = model.wv.most_similar("good", topn=20)  # the 20 most related words
    print("Words most related to good:\n")
    for word, score in y2:
        print(word, score)
    print("--------\n")

    # Find analogies
    print(' "boy" is to "father" as "girl" is to ...? \n')
    y3 = model.wv.most_similar(positive=['girl', 'father'], negative=['boy'], topn=3)
    for word, score in y3:
        print(word, score)
    print("--------\n")

    more_examples = ["he his she", "big bigger bad", "going went being"]
    for example in more_examples:
        a, b, x = example.split()
        predicted = model.wv.most_similar(positive=[x, b], negative=[a])[0][0]
        print("'%s' is to '%s' as '%s' is to '%s'" % (a, b, x, predicted))
    print("--------\n")

    # Find the word that does not fit with the others
    y4 = model.wv.doesnt_match("breakfast cereal dinner lunch".split())
    print("Odd one out:", y4)
    print("--------\n")

    # Save the full model so it can be reused
    model.save("text8.model")
    # Corresponding way to load it:
    # model_2 = word2vec.Word2Vec.load("text8.model")

    # Store the word vectors in a format that C code can parse
    model.wv.save_word2vec_format("text8.model.bin", binary=True)
    # Corresponding way to load it:
    # model_3 = KeyedVectors.load_word2vec_format("text8.model.bin", binary=True)


if __name__ == "__main__":
    main()
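The gensim script above exposes the same settings as the C tool's flags, under different names. The hypothetical helper below maps the flags discussed earlier onto gensim `Word2Vec` keyword arguments. The argument names are assumed from gensim 4.x; older releases used `size=` and `iter=`. Note the inverted meaning: `-cbow 1` selects CBOW, whereas gensim selects skip-gram with `sg=1`.

```python
def c_flags_to_gensim(flags):
    """Translate word2vec.c command-line flags into gensim 4.x Word2Vec kwargs.

    Flags without a constructor equivalent (-train, -output, -binary, -classes)
    are skipped.
    """
    mapping = {
        "-size": ("vector_size", int),
        "-window": ("window", int),
        "-sample": ("sample", float),
        "-hs": ("hs", int),
        "-negative": ("negative", int),
        "-threads": ("workers", int),
        "-iter": ("epochs", int),
        "-min-count": ("min_count", int),
        "-alpha": ("alpha", float),
    }
    kwargs = {}
    for flag, value in flags.items():
        if flag == "-cbow":
            # word2vec.c: -cbow 1 = CBOW; gensim: sg=0 = CBOW, sg=1 = skip-gram
            kwargs["sg"] = 0 if int(value) == 1 else 1
        elif flag in mapping:
            name, convert = mapping[flag]
            kwargs[name] = convert(value)
    return kwargs


args = c_flags_to_gensim({"-cbow": "0", "-size": "200", "-window": "8",
                          "-negative": "25", "-hs": "0", "-sample": "1e-4",
                          "-binary": "1"})
print(args)
```

The resulting dict can be passed on as `word2vec.Word2Vec(sentences, **args)`. `-binary` is left out on purpose because it controls how the vectors are written. In gensim that is the `binary=` argument of `save_word2vec_format`.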

Output on Ubuntu 16.04

2016-5-2 18:56:19,332 : INFO : collecting all words and their counts

2016-5-2 18:56:19,334 : INFO : PROGRESS: at sentence #0, processed 0 words, keeping 0 word types

2016-5-2 18:56:27,431 : INFO : collected 253854 word types from a corpus of 17005207 raw words and 1701 sentences

2016-5-2 18:56:27,740 : INFO : min_count=5 retains 71290 unique words (drops 182564)

2016-5-2 18:56:27,740 : INFO : min_count leaves 16718844 word corpus (98% of original 17005207)

2016-5-2 18:56:27,914 : INFO : deleting the raw counts dictionary of 253854 items

2016-5-2 18:56:27,947 : INFO : sample=0.001 downsamples 38 most-common words

2016-5-2 18:56:27,947 : INFO : downsampling leaves estimated 12506280 word corpus (74.8% of prior 16718844)

2016-5-2 18:56:27,947 : INFO : estimated required memory for 71290 words and 200 dimensions: 149709000 bytes

2016-5-2 18:56:28,176 : INFO : resetting layer weights

2016-5-2 18:56:29,074 : INFO : training model with 3 workers on 71290 vocabulary and 200 features, using sg=0 hs=0 sample=0.001 negative=5

2016-5-2 18:56:29,074 : INFO : expecting 1701 sentences, matching count from corpus used for vocabulary survey

2016-5-2 18:56:30,086 : INFO : PROGRESS: at 0.86% examples, 531932 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:56:31,088 : INFO : PROGRESS: at 1.72% examples, 528872 words/s, in_qsize 5, out_qsize 0

2016-5-2 18:56:32,108 : INFO : PROGRESS: at 2.68% examples, 549248 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:56:33,113 : INFO : PROGRESS: at 3.47% examples, 534255 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:56:34,135 : INFO : PROGRESS: at 4.43% examples, 545575 words/s, in_qsize 5, out_qsize 0

2016-5-2 18:56:35,145 : INFO : PROGRESS: at 5.40% examples, 555220 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:56:36,147 : INFO : PROGRESS: at 6.34% examples, 560815 words/s, in_qsize 5, out_qsize 0

2016-5-2 18:56:37,155 : INFO : PROGRESS: at 7.28% examples, 564712 words/s, in_qsize 6, out_qsize 1

2016-5-2 18:56:38,172 : INFO : PROGRESS: at 8.24% examples, 568088 words/s, in_qsize 5, out_qsize 0

2016-5-2 18:56:39,169 : INFO : PROGRESS: at 9.19% examples, 570872 words/s, in_qsize 5, out_qsize 0

2016-5-2 18:56:40,191 : INFO : PROGRESS: at 10.16% examples, 573068 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:56:41,203 : INFO : PROGRESS: at 11.12% examples, 575184 words/s, in_qsize 5, out_qsize 1

2016-5-2 18:56:42,217 : INFO : PROGRESS: at 12.09% examples, 577227 words/s, in_qsize 5, out_qsize 0

2016-5-2 18:56:43,220 : INFO : PROGRESS: at 13.04% examples, 578418 words/s, in_qsize 5, out_qsize 1

2016-5-2 18:56:44,235 : INFO : PROGRESS: at 14.00% examples, 579574 words/s, in_qsize 5, out_qsize 1

2016-5-2 18:56:45,239 : INFO : PROGRESS: at 14.96% examples, 580577 words/s, in_qsize 6, out_qsize 2

2016-5-2 18:56:46,243 : INFO : PROGRESS: at 15.86% examples, 578374 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:56:47,252 : INFO : PROGRESS: at 16.70% examples, 574918 words/s, in_qsize 5, out_qsize 1

2016-5-2 18:56:48,256 : INFO : PROGRESS: at 17.66% examples, 576221 words/s, in_qsize 5, out_qsize 0

2016-5-2 18:56:49,258 : INFO : PROGRESS: at 18.61% examples, 577045 words/s, in_qsize 4, out_qsize 0

2016-5-2 18:56:50,260 : INFO : PROGRESS: at 19.54% examples, 576947 words/s, in_qsize 4, out_qsize 1

2016-5-2 18:56:51,261 : INFO : PROGRESS: at 20.47% examples, 577120 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:56:52,284 : INFO : PROGRESS: at 21.43% examples, 577251 words/s, in_qsize 5, out_qsize 1

2016-5-2 18:56:53,287 : INFO : PROGRESS: at 22.34% examples, 576556 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:56:54,308 : INFO : PROGRESS: at 23.20% examples, 574618 words/s, in_qsize 6, out_qsize 1

2016-5-2 18:56:55,306 : INFO : PROGRESS: at 24.15% examples, 575304 words/s, in_qsize 5, out_qsize 0

2016-5-2 18:56:56,329 : INFO : PROGRESS: at 25.09% examples, 575610 words/s, in_qsize 5, out_qsize 1

2016-5-2 18:56:57,333 : INFO : PROGRESS: at 26.04% examples, 576358 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:56:58,340 : INFO : PROGRESS: at 26.97% examples, 576745 words/s, in_qsize 5, out_qsize 0

2016-5-2 18:56:59,337 : INFO : PROGRESS: at 27.91% examples, 577161 words/s, in_qsize 5, out_qsize 0

2016-5-2 18:57:00,338 : INFO : PROGRESS: at 28.84% examples, 577303 words/s, in_qsize 5, out_qsize 0

2016-5-2 18:57:01,346 : INFO : PROGRESS: at 29.65% examples, 575087 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:57:02,353 : INFO : PROGRESS: at 30.55% examples, 574516 words/s, in_qsize 5, out_qsize 1

2016-5-2 18:57:03,356 : INFO : PROGRESS: at 31.36% examples, 572590 words/s, in_qsize 5, out_qsize 0

2016-5-2 18:57:04,371 : INFO : PROGRESS: at 32.10% examples, 569320 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:57:05,380 : INFO : PROGRESS: at 32.95% examples, 568088 words/s, in_qsize 5, out_qsize 0

2016-5-2 18:57:06,389 : INFO : PROGRESS: at 33.78% examples, 566886 words/s, in_qsize 6, out_qsize 1

2016-5-2 18:57:07,399 : INFO : PROGRESS: at 34.60% examples, 565345 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:57:08,418 : INFO : PROGRESS: at 35.51% examples, 564685 words/s, in_qsize 5, out_qsize 0

2016-5-2 18:57:09,432 : INFO : PROGRESS: at 36.39% examples, 564093 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:57:10,441 : INFO : PROGRESS: at 37.21% examples, 562778 words/s, in_qsize 5, out_qsize 1

2016-5-2 18:57:11,453 : INFO : PROGRESS: at 38.14% examples, 563163 words/s, in_qsize 6, out_qsize 1

2016-5-2 18:57:12,449 : INFO : PROGRESS: at 38.98% examples, 562072 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:57:13,461 : INFO : PROGRESS: at 39.88% examples, 561949 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:57:14,464 : INFO : PROGRESS: at 40.75% examples, 561493 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:57:15,482 : INFO : PROGRESS: at 41.60% examples, 560419 words/s, in_qsize 5, out_qsize 1

2016-5-2 18:57:16,503 : INFO : PROGRESS: at 42.40% examples, 558807 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:57:17,520 : INFO : PROGRESS: at 43.27% examples, 558287 words/s, in_qsize 5, out_qsize 0

2016-5-2 18:57:18,534 : INFO : PROGRESS: at 44.13% examples, 557685 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:57:19,538 : INFO : PROGRESS: at 44.93% examples, 556591 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:57:20,540 : INFO : PROGRESS: at 45.83% examples, 556881 words/s, in_qsize 5, out_qsize 0

2016-5-2 18:57:21,541 : INFO : PROGRESS: at 46.75% examples, 557341 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:57:22,553 : INFO : PROGRESS: at 47.69% examples, 557860 words/s, in_qsize 5, out_qsize 1

2016-5-2 18:57:23,557 : INFO : PROGRESS: at 48.51% examples, 557066 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:57:24,564 : INFO : PROGRESS: at 49.42% examples, 557201 words/s, in_qsize 5, out_qsize 0

2016-5-2 18:57:25,571 : INFO : PROGRESS: at 50.31% examples, 557231 words/s, in_qsize 5, out_qsize 1

2016-5-2 18:57:26,585 : INFO : PROGRESS: at 51.26% examples, 557820 words/s, in_qsize 6, out_qsize 1

2016-5-2 18:57:27,586 : INFO : PROGRESS: at 52.22% examples, 558455 words/s, in_qsize 4, out_qsize 0

2016-5-2 18:57:28,588 : INFO : PROGRESS: at 53.16% examples, 558932 words/s, in_qsize 6, out_qsize 1

2016-5-2 18:57:29,609 : INFO : PROGRESS: at 54.11% examples, 559389 words/s, in_qsize 5, out_qsize 0

2016-5-2 18:57:30,616 : INFO : PROGRESS: at 55.01% examples, 559415 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:57:31,642 : INFO : PROGRESS: at 55.87% examples, 558596 words/s, in_qsize 5, out_qsize 0

2016-5-2 18:57:32,647 : INFO : PROGRESS: at 56.78% examples, 558665 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:57:33,656 : INFO : PROGRESS: at 57.57% examples, 557526 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:57:34,660 : INFO : PROGRESS: at 58.39% examples, 556830 words/s, in_qsize 4, out_qsize 0

2016-5-2 18:57:35,664 : INFO : PROGRESS: at 59.31% examples, 557019 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:57:36,670 : INFO : PROGRESS: at 60.12% examples, 556187 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:57:37,683 : INFO : PROGRESS: at 60.94% examples, 555461 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:57:38,686 : INFO : PROGRESS: at 61.78% examples, 554836 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:57:39,705 : INFO : PROGRESS: at 62.54% examples, 553555 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:57:40,710 : INFO : PROGRESS: at 63.35% examples, 552863 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:57:41,719 : INFO : PROGRESS: at 64.12% examples, 551760 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:57:42,726 : INFO : PROGRESS: at 64.93% examples, 551152 words/s, in_qsize 5, out_qsize 0

2016-5-2 18:57:43,741 : INFO : PROGRESS: at 65.74% examples, 550535 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:57:44,743 : INFO : PROGRESS: at 66.51% examples, 549746 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:57:45,743 : INFO : PROGRESS: at 67.23% examples, 548498 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:57:46,773 : INFO : PROGRESS: at 67.98% examples, 547297 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:57:47,786 : INFO : PROGRESS: at 68.81% examples, 546808 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:57:48,792 : INFO : PROGRESS: at 69.58% examples, 546028 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:57:49,798 : INFO : PROGRESS: at 70.37% examples, 545344 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:57:50,807 : INFO : PROGRESS: at 71.19% examples, 545012 words/s, in_qsize 6, out_qsize 1

2016-5-2 18:57:51,802 : INFO : PROGRESS: at 72.09% examples, 545184 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:57:52,806 : INFO : PROGRESS: at 72.98% examples, 545315 words/s, in_qsize 5, out_qsize 0

2016-5-2 18:57:53,827 : INFO : PROGRESS: at 73.92% examples, 545714 words/s, in_qsize 5, out_qsize 0

2016-5-2 18:57:54,827 : INFO : PROGRESS: at 74.86% examples, 546256 words/s, in_qsize 5, out_qsize 0

2016-5-2 18:57:55,840 : INFO : PROGRESS: at 75.79% examples, 546379 words/s, in_qsize 5, out_qsize 0

2016-5-2 18:57:56,851 : INFO : PROGRESS: at 76.73% examples, 546823 words/s, in_qsize 5, out_qsize 0

2016-5-2 18:57:57,843 : INFO : PROGRESS: at 77.66% examples, 547189 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:57:58,847 : INFO : PROGRESS: at 78.50% examples, 546858 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:57:59,849 : INFO : PROGRESS: at 79.39% examples, 546959 words/s, in_qsize 5, out_qsize 0

2016-5-2 18:58:00,854 : INFO : PROGRESS: at 80.27% examples, 546954 words/s, in_qsize 5, out_qsize 1

2016-5-2 18:58:01,856 : INFO : PROGRESS: at 81.22% examples, 547394 words/s, in_qsize 3, out_qsize 0

2016-5-2 18:58:02,875 : INFO : PROGRESS: at 82.13% examples, 547429 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:58:03,888 : INFO : PROGRESS: at 83.07% examples, 547815 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:58:04,880 : INFO : PROGRESS: at 84.00% examples, 548153 words/s, in_qsize 5, out_qsize 0

2016-5-2 18:58:05,895 : INFO : PROGRESS: at 84.91% examples, 548428 words/s, in_qsize 5, out_qsize 0

2016-5-2 18:58:06,888 : INFO : PROGRESS: at 85.77% examples, 548357 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:58:07,901 : INFO : PROGRESS: at 86.64% examples, 548365 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:58:08,897 : INFO : PROGRESS: at 87.50% examples, 548265 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:58:09,902 : INFO : PROGRESS: at 88.42% examples, 548504 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:58:10,916 : INFO : PROGRESS: at 89.18% examples, 547765 words/s, in_qsize 5, out_qsize 0

2016-5-2 18:58:11,921 : INFO : PROGRESS: at 89.94% examples, 547006 words/s, in_qsize 5, out_qsize 0

2016-5-2 18:58:12,923 : INFO : PROGRESS: at 90.81% examples, 546992 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:58:13,930 : INFO : PROGRESS: at 91.72% examples, 547225 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:58:14,935 : INFO : PROGRESS: at 92.59% examples, 547187 words/s, in_qsize 5, out_qsize 0

2016-5-2 18:58:15,939 : INFO : PROGRESS: at 93.46% examples, 547133 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:58:16,944 : INFO : PROGRESS: at 94.18% examples, 546224 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:58:17,953 : INFO : PROGRESS: at 94.93% examples, 545497 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:58:18,959 : INFO : PROGRESS: at 95.70% examples, 544697 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:58:19,967 : INFO : PROGRESS: at 96.40% examples, 543702 words/s, in_qsize 5, out_qsize 0

2016-5-2 18:58:20,974 : INFO : PROGRESS: at 97.26% examples, 543612 words/s, in_qsize 5, out_qsize 0

2016-5-2 18:58:21,978 : INFO : PROGRESS: at 98.17% examples, 543801 words/s, in_qsize 5, out_qsize 0

2016-5-2 18:58:22,994 : INFO : PROGRESS: at 99.07% examples, 543908 words/s, in_qsize 4, out_qsize 2

2016-5-2 18:58:23,989 : INFO : PROGRESS: at 99.91% examples, 543692 words/s, in_qsize 6, out_qsize 0

2016-5-2 18:58:24,067 : INFO : worker thread finished; awaiting finish of 2 more threads

2016-5-2 18:58:24,083 : INFO : worker thread finished; awaiting finish of 1 more threads

2016-5-2 18:58:24,086 : INFO : worker thread finished; awaiting finish of 0 more threads

2016-5-2 18:58:24,086 : INFO : training on 85026035 raw words (62534095 effective words) took 115.0s, 543725 effective words/s

2016-5-2 18:58:24,086 : INFO : precomputing L2-norms of word weight vectors

Similarity between woman and man: 0.699695936218

--------

Words most related to good:

bad 0.721469461918

poor 0.567566931248

safe 0.534923613071

luck 0.518905758858

courage 0.510788619518

useful 0.498157411814

quick 0.497716665268

easy 0.497328162193

everyone 0.485905945301

pleasure 0.483758479357

true 0.482762247324

simple 0.480014979839

practical 0.479516804218

fair 0.479104012251

happy 0.476968646049

wrong 0.476797521114

reasonable 0.476701617241

you 0.475801795721

fun 0.472196519375

helpful 0.471719056368

--------

"boy" is to "father" as "girl" is to ...?

mother 0.76334130764

grandmother 0.690031766891

daughter 0.684129178524

--------

'he' is to 'his' as 'she' is to 'her'

'big' is to 'bigger' as 'bad' is to 'worse'

'going' is to 'went' as 'being' is to 'was'

--------

Odd one out: cereal

--------

2016-5-2 18:58:24,185 : INFO : saving Word2Vec object under text8.model, separately None

2016-5-2 18:58:24,185 : INFO : storing numpy array 'syn1neg' to text8.model.syn1neg.npy

2016-5-2 18:58:24,235 : INFO : not storing attribute syn0norm

2016-5-2 18:58:24,235 : INFO : storing numpy array 'syn0' to text8.model.syn0.npy

2016-5-2 18:58:24,278 : INFO : not storing attribute cum_table

2016-5-2 18:58:25,083 : INFO : storing 71290x200 projection weights into text8.model.bin
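The analogy and odd-one-out results above come from simple vector arithmetic. Below is a minimal pure-Python sketch of roughly what gensim's `most_similar` and `doesnt_match` do:

- For an analogy, combine unit-length vectors (+1 for each positive word, -1 for each negative word), then rank the remaining words by cosine similarity to that combination.
- For the odd one out, pick the word least similar to the mean of the unit vectors.

The tiny toy vectors are hypothetical and chosen only to keep the example readable. The function names mirror gensim's for clarity but are not its code.

```python
import math


def normalize(v):
    """Scale v to unit length."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def cosine(u, v):
    return sum(a * b for a, b in zip(normalize(u), normalize(v)))


def analogy(vectors, positive, negative, topn=1):
    """Rank words by cosine to sum(unit positive vectors) - sum(unit negative vectors)."""
    dim = len(next(iter(vectors.values())))
    target = [0.0] * dim
    signed = [(w, 1.0) for w in positive] + [(w, -1.0) for w in negative]
    for word, sign in signed:
        unit = normalize(vectors[word])
        target = [t + sign * u for t, u in zip(target, unit)]
    candidates = [w for w in vectors if w not in positive and w not in negative]
    ranked = sorted(candidates, key=lambda w: cosine(vectors[w], target), reverse=True)
    return ranked[:topn]


def doesnt_match(vectors, words):
    """Return the word least similar to the mean of the words' unit vectors."""
    units = [normalize(vectors[w]) for w in words]
    mean = [sum(col) / len(units) for col in zip(*units)]
    return min(words, key=lambda w: cosine(vectors[w], mean))


# Hypothetical toy vectors: axis 0 ~ male/female, axis 1 ~ child/parent
family = {"boy": [1.0, -1.0], "girl": [-1.0, -1.0], "father": [1.0, 1.0],
          "mother": [-1.0, 1.0], "man": [1.0, 0.5]}
# Hypothetical toy vectors: the meals share the last axis, cereal mostly does not
meals = {"breakfast": [0.1, 0.0, 1.0], "lunch": [0.0, 0.1, 1.0],
         "dinner": [0.1, 0.1, 1.0], "cereal": [1.0, 1.0, 0.3]}

print(analogy(family, positive=["girl", "father"], negative=["boy"]))
print(doesnt_match(meals, ["breakfast", "cereal", "dinner", "lunch"]))
```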

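Common corpus resources

Below are some good Chinese corpora that can be downloaded online for research and study.

(1) Chinese and English news corpora from the Institute of Automation, Chinese Academy of Sciences http://www.datatang.com/data/13484

The Chinese news classification corpus was collected from sections of Phoenix (ifeng), Sina, NetEase, Tencent, and other sites. The English news classification corpus is the ModApte version of Reuters-21578.

(2) Sogou Chinese news corpus http://www.sogou.com/labs/dl/c.html

Contains a large amount of Sohu news with the corresponding category labels. Versions of different sizes are available for download.

(3) Li Ronglu's Chinese corpus http://www.datatang.com/data/11968

About 240 MB compressed.

(4) Tan Songbo's Chinese text classification corpus http://www.datatang.com/data/11970

It contains top-level categories such as economy and sports, and each of these has subcategories; sports, for example, includes basketball and football. This makes it usable as a corpus for hierarchical classification, which is very practical. This URL (Tan Songbo's homepage) requires no download points: http://www.searchforum.org.cn/tansongbo/corpus1.PHP

(5) NetEase categorized text data http://www.datatang.com/data/11965

Contains 4,000 documents in six major categories, including sports and automobiles.

(6) Chinese text classification corpus http://www.datatang.com/data/11963

Contains corpus texts in categories such as Arts and Literature.

(7) More complete Sogou text classification corpus http://www.sogou.com/labs/dl/c.html

Text classification corpora released by Sogou Labs, with versions of different sizes available for free download.

(8) 2002 Chinese web page classification training set http://www.datatang.com/data/15021

In autumn 2002, the Tianwang group of the Network and Distributed Systems Laboratory at Peking University had dozens of students from different majors manually select web pages. The result was a new, large-scale sample set of Chinese web pages based on a hierarchical model. It contains 11,678 training pages and 3,630 test pages, spread across 11 top-level categories.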

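Common word segmentation tools

Segment the corpus into words and remove stop words. Common segmentation tools include:

StandardAnalyzer (Chinese and English), ChineseAnalyzer (Chinese), CJKAnalyzer (Chinese and English), IKAnalyzer (Chinese and English; also handles Korean and Japanese), paoding (Chinese), MMAnalyzer (Chinese and English), MMSeg4j (Chinese and English), imdict (Chinese and English), NLTK (Chinese and English), Jieba (Chinese and English).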
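For an English corpus, segmentation mostly comes down to tokenizing and filtering. Below is a minimal sketch with a hypothetical function name and a tiny illustrative stopword list; a real project would use a curated list such as NLTK's. It lowercases the text, keeps alphanumeric tokens, and drops stop words. The result is a list of token lists, which is the shape gensim's `Word2Vec` accepts as sentences.

```python
import re

# Tiny illustrative stopword list (hypothetical, not a standard list)
STOPWORDS = {"a", "an", "and", "is", "of", "the", "to"}


def tokenize(text, stopwords=STOPWORDS):
    """Lowercase text, keep alphanumeric tokens, and drop stop words."""
    tokens = re.findall(r"[a-z0-9]+", text.lower())
    return [t for t in tokens if t not in stopwords]


docs = [
    "The quick brown fox jumps over the lazy dog.",
    "word2vec is a tool to learn word vectors!",
]
sentences = [tokenize(d) for d in docs]  # one token list per sentence
print(sentences)
```

For Chinese text, the regular-expression step would be replaced by a segmenter such as Jieba (for example `jieba.lcut`).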

A demo corpus

Raw corpus http://pan.baidu.com/s/1nviuFc1

Training corpus http://pan.baidu.com/s/1kVEmNTd
