Installing Apache Spark on Ubuntu 16.04

Santosh Srinivas

on 07 Nov 2016, tagged on Apache Spark, Analytics, Data Mining

I've finally gotten around to a long-pending to-do item: playing with Apache Spark.

The following installation steps worked for me on Ubuntu 16.04.

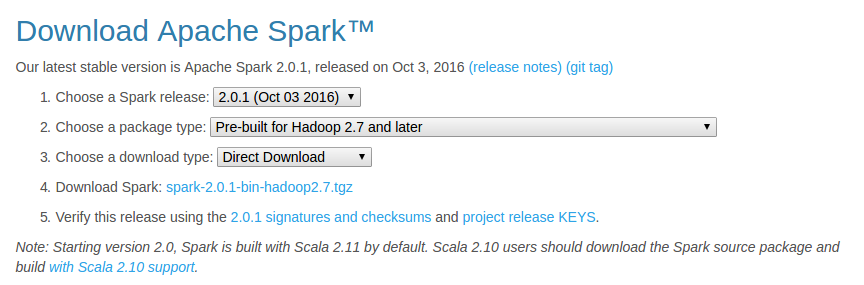

- Download the latest pre-built version from http://spark.apache.org/downloads.html

The options that worked for me were Spark 2.0.1, pre-built for Hadoop 2.7 (the archive name in the next step reflects this).

- Unzip and move Spark

cd ~/Downloads/

tar xzvf spark-2.0.1-bin-hadoop2.7.tgz

mv spark-2.0.1-bin-hadoop2.7/ spark

sudo mv spark/ /usr/lib/
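Before going further, it can help to confirm that the move left the files the later steps rely on in place. The following is only a minimal sketch: the helper name `missing_spark_files` and the list of files are my own choices for illustration, based on the paths this post uses (`bin/pyspark`, `bin/run-example`, `conf/spark-env.sh.template`).

```python
from pathlib import Path

# Files used later in this post, relative to the Spark directory.
# This list is an assumption for illustration, not an official manifest.
EXPECTED = ("bin/pyspark", "bin/run-example", "conf/spark-env.sh.template")


def missing_spark_files(spark_dir="/usr/lib/spark"):
    """Return the expected files that are absent under spark_dir."""
    root = Path(spark_dir)
    return [rel for rel in EXPECTED if not (root / rel).is_file()]


if __name__ == "__main__":
    missing = missing_spark_files()
    print("Spark layout looks complete" if not missing else f"Missing: {missing}")
```

An empty result suggests the archive was unpacked and moved as intended; anything listed as missing usually points to a different archive name or target directory.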

- Install SBT

As described on the sbt download page:

echo "deb https://dl.bintray.com/sbt/debian /" | sudo tee -a /etc/apt/sources.list.d/sbt.list

sudo apt-key adv --keyserver hkp://keyserver.ubuntu.com:80 --recv 2EE0EA64E40A89B84B2DF73499E82A75642AC823

sudo apt-get update

sudo apt-get install sbt

- Make sure Java is installed

Check with java -version. If it is not installed, install Java:

sudo apt-add-repository ppa:webupd8team/java

sudo apt-get update

sudo apt-get install oracle-java8-installer

- Configure Spark

cd /usr/lib/spark/conf/

cp spark-env.sh.template spark-env.sh

vi spark-env.sh

Add the following lines:

JAVA_HOME=/usr/lib/jvm/java-8-oracle

SPARK_WORKER_MEMORY=4g

- Configure IPv6

Disable IPv6 by opening /etc/sysctl.conf with sudo vi /etc/sysctl.conf and adding the lines below. Run sudo sysctl -p afterwards (or reboot) so the settings take effect.

net.ipv6.conf.all.disable_ipv6 = 1

net.ipv6.conf.default.disable_ipv6 = 1

net.ipv6.conf.lo.disable_ipv6 = 1
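To confirm the kernel has actually picked up these settings, the flags can be read back from `/proc/sys/net/ipv6/conf/<interface>/disable_ipv6`. This is a rough sketch only; the helper name `ipv6_disabled` and its parameters are hypothetical, and the `conf_root` argument exists mainly so the function can be pointed at a different directory.

```python
from pathlib import Path


def ipv6_disabled(conf_root="/proc/sys/net/ipv6/conf",
                  interfaces=("all", "default", "lo")):
    """Map each interface name to True/False, or None if its flag is unreadable."""
    status = {}
    for name in interfaces:
        flag = Path(conf_root) / name / "disable_ipv6"
        try:
            # The kernel exposes the flag as "1" (disabled) or "0" (enabled).
            status[name] = flag.read_text().strip() == "1"
        except OSError:
            # Missing or unreadable file: report None rather than guess.
            status[name] = None
    return status


if __name__ == "__main__":
    for name, disabled in ipv6_disabled().items():
        print(f"{name}: {disabled}")
```

All three entries reading `True` matches the configuration above; `False` means the setting has not been applied yet.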

- Configure .bashrc

I modified .bashrc in Sublime Text using subl ~/.bashrc and added the following lines:

export JAVA_HOME=/usr/lib/jvm/java-8-oracle

export SBT_HOME=/usr/share/sbt-launcher-packaging/bin/sbt-launch.jar

export SPARK_HOME=/usr/lib/spark

export PATH=$PATH:$JAVA_HOME/bin

export PATH=$PATH:$SBT_HOME/bin:$SPARK_HOME/bin:$SPARK_HOME/sbin

- Configure fish (optional, but I love the fish shell)

Modify config.fish using subl ~/.config/fish/config.fish and add the following lines:

#Credit: http://fishshell.com/docs/current/tutorial.html#tut_startup

set -x PATH $PATH /usr/lib/spark

set -x PATH $PATH /usr/lib/spark/bin

set -x PATH $PATH /usr/lib/spark/sbin
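Whichever shell is used, the new PATH only applies to terminals opened after the edit. As a quick sanity check, the sketch below reports which Spark launchers cannot be found on PATH. The function name `missing_spark_tools` and the list of tools are assumptions chosen for illustration; `shutil.which` is the standard-library lookup.

```python
import shutil


def missing_spark_tools(path=None,
                        tools=("pyspark", "spark-submit", "run-example")):
    """Return the tools that cannot be found on `path` (default: the current PATH)."""
    return [tool for tool in tools if shutil.which(tool, path=path) is None]


if __name__ == "__main__":
    missing = missing_spark_tools()
    print("Missing from PATH:", ", ".join(missing) if missing else "none")
```

If anything is reported missing, open a fresh terminal and check that the `bin` directory under `/usr/lib/spark` appears in `echo $PATH`.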

- Test Spark (should work in both fish and bash)

Run pyspark (available in /usr/lib/spark/bin/) and try it out.

For example:

>>> a = 5

>>> b = 3

>>> a+b

8

>>> print("Welcome to Spark")

Welcome to Spark

## type Ctrl-d to exit

Also try the built-in example runner: run-example org.apache.spark.examples.SparkPi
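For context, SparkPi estimates π by Monte Carlo sampling, as far as I understand it: random points are thrown into a square, and the fraction that lands inside the inscribed circle approximates π/4. The sketch below shows the same idea in plain Python without Spark; the name `estimate_pi` and its parameters are mine, not part of Spark. The real example distributes the sampling across the cluster and should print a line beginning with "Pi is roughly".

```python
import random


def estimate_pi(samples=100_000, seed=None):
    """Estimate pi from the share of random points that fall inside the unit circle."""
    rng = random.Random(seed)
    inside = 0
    for _ in range(samples):
        x = rng.uniform(-1.0, 1.0)
        y = rng.uniform(-1.0, 1.0)
        if x * x + y * y <= 1.0:
            inside += 1
    # The circle covers pi/4 of the enclosing square, so scale the hit ratio by 4.
    return 4.0 * inside / samples


if __name__ == "__main__":
    print(f"Pi is roughly {estimate_pi():.4f}")
```

The estimate is noisy and only gets closer to 3.14159 as the number of samples grows, so small differences between runs are expected.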

That's it! You are ready to rock on using Apache Spark!

Next, I plan to check out data analysis using R, as described in http://www.milanor.net/blog/wp-content/uploads/2016/11/interactiveDataAnalysiswithSparkR_v5.pdf
