Building batch and streaming applications with the Flink Table & SQL API (1): basic Table concepts

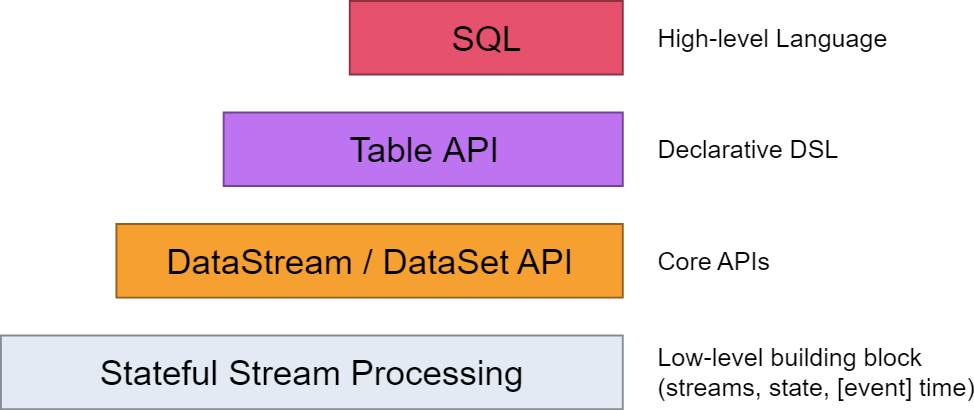

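From the official Flink documentation, we know that Flink's programming model has four layers: SQL is the highest-level API, the Table API sits in the middle, the DataStream/DataSet API is the core, and Stateful Stream Processing is the lowest-level abstraction.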

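Earlier posts in this series covered the lower layers:

"Flink DataSet API: usage and principles" introduced the DataSet API.

"Flink DataStream API: usage and principles" introduced the DataStream API.

"How to use timestamps in Flink: Watermark usage and principles" introduced Watermarks, a foundation of the low-level implementation.

"Flink window examples" introduced the concept of windows and how they are used.

"State and fault tolerance in Flink" introduced State and the checkpoint and savepoint fault-tolerance mechanisms.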

0. Basic concepts

0.1 TableEnvironment

TableEnvironment is the central concept of the Table API and SQL integration. It is responsible for:

1. Registering a Table in the internal catalog

2. Registering an external Catalog

3. Executing SQL queries

4. Registering a user-defined function (UDF)

5. Converting a DataStream or DataSet into a Table

6. Holding a reference to an ExecutionEnvironment or StreamExecutionEnvironment

Its Javadoc reads:

    /**
     * The base class for batch and stream TableEnvironments.
     *
     * <p>The TableEnvironment is a central concept of the Table API and SQL integration. It is
     * responsible for:
     *
     * <ul>
     *     <li>Registering a Table in the internal catalog</li>
     *     <li>Registering an external catalog</li>
     *     <li>Executing SQL queries</li>
     *     <li>Registering a user-defined scalar function. For the user-defined table and aggregate
     *     function, use the StreamTableEnvironment or BatchTableEnvironment</li>
     * </ul>
     */

0.2 Catalog

Catalog: all metadata about databases and tables is stored in Flink's internal Catalog structure. It holds every piece of Table-related metadata inside Flink, including table schemas and data source information.

    /**
     * This interface is responsible for reading and writing metadata such as database/table/views/UDFs
     * from a registered catalog. It connects a registered catalog and Flink's Table API.
     */
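As a rough mental model only, a catalog can be pictured as a registry that maps table names to their metadata. The sketch below is plain Java and is not Flink's Catalog interface: the class `CatalogSketch`, its methods, and the type names `STRING` and `BIGINT` are all hypothetical, chosen purely for illustration.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.Optional;

/** Toy in-memory catalog; a hypothetical model, not Flink's Catalog interface. */
class CatalogSketch {

    // table name -> (column name -> column type), kept in declaration order
    private final Map<String, Map<String, String>> tables = new LinkedHashMap<>();

    /** Stores a copy of the schema under the given name; rejects duplicate names. */
    void registerTable(String name, Map<String, String> schema) {
        if (tables.containsKey(name)) {
            throw new IllegalArgumentException("Table already registered: " + name);
        }
        tables.put(name, new LinkedHashMap<>(schema));
    }

    /** Looks up the schema of a registered table, if there is one. */
    Optional<Map<String, String>> getSchema(String name) {
        return Optional.ofNullable(tables.get(name));
    }

    public static void main(String[] args) {
        CatalogSketch catalog = new CatalogSketch();

        Map<String, String> schema = new LinkedHashMap<>();
        schema.put("word", "STRING");       // illustrative type names
        schema.put("frequency", "BIGINT");
        catalog.registerTable("WordCount", schema);

        System.out.println(catalog.getSchema("WordCount").orElse(null)); // {word=STRING, frequency=BIGINT}
        System.out.println(catalog.getSchema("Unknown").isPresent());    // false
    }
}
```

The real catalog also tracks databases, views, and UDFs, as the Javadoc above says; the sketch leaves those out to keep the lookup idea visible.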


0.3 TableSource

When using the Table API, an external data source can be registered directly as a table. The structure that describes such a source is called a TableSource.

    /**
     * Defines an external table with the schema that is provided by {@link TableSource#getTableSchema}.
     *
     * <p>The data of a {@link TableSource} is produced as a {@code DataSet} in case of a {@code BatchTableSource}
     * or as a {@code DataStream} in case of a {@code StreamTableSource}. The type of the produced
     * {@code DataSet} or {@code DataStream} is specified by the {@link TableSource#getProducedDataType()} method.
     *
     * <p>By default, the fields of the {@link TableSchema} are implicitly mapped by name to the fields of
     * the produced {@link DataType}. An explicit mapping can be defined by implementing the
     * {@link DefinedFieldMapping} interface.
     *
     * @param <T> The return type of the {@link TableSource}.
     */

0.4 TableSink

After the data has been processed, the results need to be written to external storage. The Table API has a corresponding sink module for this, called TableSink.

    /**
     * A {@link TableSink} specifies how to emit a table to an external
     * system or location.
     *
     * <p>The interface is generic such that it can support different storage locations and formats.
     *
     * @param <T> The return type of the {@link TableSink}.
     */

0.5 Table Connector

Since Flink 1.6, Flink has offered the concept of a Table Connector so that the Table API can connect to external systems through configuration and so that the same connectors can be used from the SQL Client. The main goal is to separate the definition of a TableSource or TableSink from its use.

A Table Connector wraps the various built-in TableSources and TableSinks into configurable components that both the Table API and the SQL Client can use.

    /**
     * Creates a table source and/or table sink from a descriptor.
     *
     * <p>Descriptors allow for declaring the communication to external systems in an
     * implementation-agnostic way. The classpath is scanned for suitable table factories that match
     * the desired configuration.
     *
     * <p>The following example shows how to read from a connector using a JSON format and
     * register a table source as "MyTable":
     *
     * <pre>
     * {@code
     *
     * tableEnv
     *   .connect(
     *     new ExternalSystemXYZ()
     *       .version("0.11"))
     *   .withFormat(
     *     new Json()
     *       .jsonSchema("{...}")
     *       .failOnMissingField(false))
     *   .withSchema(
     *     new Schema()
     *       .field("user-name", "VARCHAR").from("u_name")
     *       .field("count", "DECIMAL"))
     *   .registerSource("MyTable");
     * }
     * </pre>
     *
     * @param connectorDescriptor connector descriptor describing the external system
     */
    TableDescriptor connect(ConnectorDescriptor connectorDescriptor);
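One way to picture "the classpath is scanned for suitable table factories that match the desired configuration" is as a lookup over key/value properties. The sketch below is a toy model in plain Java, not Flink's implementation: `ToyFactory`, `find`, `props`, and the property keys `connector.type`, `connector.version`, and `format.type` are assumptions made for illustration. A factory is treated as matching when every property it requires appears in the descriptor with the same value.

```java
import java.util.Arrays;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.Optional;

/** Toy model of matching a descriptor against factories; not Flink code. */
class ConnectorMatchingSketch {

    /** Hypothetical stand-in for a table factory. */
    static final class ToyFactory {
        final String name;
        final Map<String, String> requiredContext;

        ToyFactory(String name, Map<String, String> requiredContext) {
            this.name = name;
            this.requiredContext = requiredContext;
        }

        /** True if every required key/value pair is present in the descriptor properties. */
        boolean matches(Map<String, String> properties) {
            return properties.entrySet().containsAll(requiredContext.entrySet());
        }
    }

    /** Returns the first factory whose required context is satisfied. */
    static Optional<ToyFactory> find(List<ToyFactory> factories, Map<String, String> properties) {
        return factories.stream().filter(f -> f.matches(properties)).findFirst();
    }

    /** Builds a map from alternating key, value arguments. */
    static Map<String, String> props(String... keyValues) {
        Map<String, String> map = new HashMap<>();
        for (int i = 0; i + 1 < keyValues.length; i += 2) {
            map.put(keyValues[i], keyValues[i + 1]);
        }
        return map;
    }

    public static void main(String[] args) {
        List<ToyFactory> factories = Arrays.asList(
            new ToyFactory("xyz-0.10", props("connector.type", "xyz", "connector.version", "0.10")),
            new ToyFactory("xyz-0.11", props("connector.type", "xyz", "connector.version", "0.11")));

        // What a descriptor like the one in the Javadoc above might boil down to.
        Map<String, String> descriptor = props(
            "connector.type", "xyz", "connector.version", "0.11", "format.type", "json");

        System.out.println(find(factories, descriptor).map(f -> f.name).orElse("no match")); // xyz-0.11
    }
}
```

Keeping the descriptor as plain properties is what would let the same connector definition be written in Java code or in a SQL Client configuration file.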

This post focuses on SQL and the Table API.

1. SQL

1.1 SQL on top of the DataSet API

Example:

    package org.apache.flink.table.examples.java;

    import org.apache.flink.api.java.DataSet;
    import org.apache.flink.api.java.ExecutionEnvironment;
    import org.apache.flink.table.api.Table;
    import org.apache.flink.table.api.java.BatchTableEnvironment;

    /**
     * Simple example that shows how the Batch SQL API is used in Java.
     *
     * <p>This example shows how to:
     *  - Convert DataSets to Tables
     *  - Register a Table under a name
     *  - Run a SQL query on the registered Table
     */
    public class WordCountSQL {

        // *************************************************************************
        //     PROGRAM
        // *************************************************************************

        public static void main(String[] args) throws Exception {

            // set up execution environment
            ExecutionEnvironment env = ExecutionEnvironment.getExecutionEnvironment();
            BatchTableEnvironment tEnv = BatchTableEnvironment.create(env);

            DataSet<WC> input = env.fromElements(
                new WC("Hello", 1),
                new WC("Ciao", 1),
                new WC("Hello", 1));

            // register the DataSet as table "WordCount"
            tEnv.registerDataSet("WordCount", input, "word, frequency");

            // run a SQL query on the Table and retrieve the result as a new Table
            Table table = tEnv.sqlQuery(
                "SELECT word, SUM(frequency) as frequency FROM WordCount GROUP BY word");

            DataSet<WC> result = tEnv.toDataSet(table, WC.class);

            result.print();
        }

        // *************************************************************************
        //     USER DATA TYPES
        // *************************************************************************

        /**
         * Simple POJO containing a word and its respective count.
         */
        public static class WC {
            public String word;
            public long frequency;

            // public constructor to make it a Flink POJO
            public WC() {}

            public WC(String word, long frequency) {
                this.word = word;
                this.frequency = frequency;
            }

            @Override
            public String toString() {
                return "WC " + word + " " + frequency;
            }
        }
    }
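To make the result of the query concrete, here is a minimal plain-Java sketch of the same aggregation. It does not use Flink at all: the class `WordCountSqlSketch` and the method `sumByWord` are illustrative names, and the Java stream pipeline only stands in for what `SELECT word, SUM(frequency) ... GROUP BY word` computes over the three input records.

```java
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.stream.Collectors;

/** Plain-Java model of the WordCount query; no Flink classes are involved. */
class WordCountSqlSketch {

    /** Mirrors the WC POJO from the example above. */
    static final class WC {
        final String word;
        final long frequency;

        WC(String word, long frequency) {
            this.word = word;
            this.frequency = frequency;
        }
    }

    /** Groups the rows by word and sums the frequency column of each group. */
    static Map<String, Long> sumByWord(List<WC> rows) {
        return rows.stream().collect(Collectors.groupingBy(
            wc -> wc.word,
            LinkedHashMap::new, // keep the words in first-seen order
            Collectors.summingLong(wc -> wc.frequency)));
    }

    public static void main(String[] args) {
        List<WC> input = Arrays.asList(
            new WC("Hello", 1),
            new WC("Ciao", 1),
            new WC("Hello", 1));

        // Same text format as WC.toString() in the Flink example.
        sumByWord(input).forEach((word, sum) -> System.out.println("WC " + word + " " + sum));
    }
}
```

Running the sketch prints `WC Hello 2` and then `WC Ciao 1`. The Flink job should produce the same two rows, though not necessarily in that order.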

Here, BatchTableEnvironment is described in its Javadoc as follows:

    /**
     * The {@link TableEnvironment} for a Java batch {@link ExecutionEnvironment} that works
     * with {@link DataSet}s.
     *
     * <p>A TableEnvironment can be used to:
     * <ul>
     *     <li>convert a {@link DataSet} to a {@link Table}</li>
     *     <li>register a {@link DataSet} in the {@link TableEnvironment}'s catalog</li>
     *     <li>register a {@link Table} in the {@link TableEnvironment}'s catalog</li>
     *     <li>scan a registered table to obtain a {@link Table}</li>
     *     <li>specify a SQL query on registered tables to obtain a {@link Table}</li>
     *     <li>convert a {@link Table} into a {@link DataSet}</li>
     *     <li>explain the AST and execution plan of a {@link Table}</li>
     * </ul>
     */

BatchTableSource:

    /** Defines an external batch table and provides access to its data.
     *
     * @param <T> Type of the {@link DataSet} created by this {@link TableSource}.
     */

BatchTableSink:

    /** Defines an external {@link TableSink} to emit a batch {@link Table}.
     *
     * @param <T> Type of {@link DataSet} that this {@link TableSink} expects and supports.
     */

1.2 SQL on top of the DataStream API

Example code:

    package org.apache.flink.table.examples.java;

    import org.apache.flink.streaming.api.datastream.DataStream;
    import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
    import org.apache.flink.table.api.Table;
    import org.apache.flink.table.api.java.StreamTableEnvironment;

    import java.util.Arrays;

    /**
     * Simple example for demonstrating the use of SQL on a Stream Table in Java.
     *
     * <p>This example shows how to:
     *  - Convert DataStreams to Tables
     *  - Register a Table under a name
     *  - Run a StreamSQL query on the registered Table
     *
     */
    public class StreamSQLExample {

        // *************************************************************************
        //     PROGRAM
        // *************************************************************************

        public static void main(String[] args) throws Exception {

            // set up execution environment
            StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
            StreamTableEnvironment tEnv = StreamTableEnvironment.create(env);

            DataStream<Order> orderA = env.fromCollection(Arrays.asList(
                new Order(1L, "beer", 3),
                new Order(1L, "diaper", 4),
                new Order(3L, "rubber", 2)));

            DataStream<Order> orderB = env.fromCollection(Arrays.asList(
                new Order(2L, "pen", 3),
                new Order(2L, "rubber", 3),
                new Order(4L, "beer", 1)));

            // convert DataStream to Table
            Table tableA = tEnv.fromDataStream(orderA, "user, product, amount");
            // register DataStream as Table
            tEnv.registerDataStream("OrderB", orderB, "user, product, amount");

            // union the two tables
            Table result = tEnv.sqlQuery("SELECT * FROM " + tableA + " WHERE amount > 2 UNION ALL " +
                "SELECT * FROM OrderB WHERE amount < 2");

            tEnv.toAppendStream(result, Order.class).print();

            env.execute();
        }

        // *************************************************************************
        //     USER DATA TYPES
        // *************************************************************************

        /**
         * Simple POJO.
         */
        public static class Order {
            public Long user;
            public String product;
            public int amount;

            public Order() {
            }

            public Order(Long user, String product, int amount) {
                this.user = user;
                this.product = product;
                this.amount = amount;
            }

            @Override
            public String toString() {
                return "Order{" +
                    "user=" + user +
                    ", product='" + product + '\'' +
                    ", amount=" + amount +
                    '}';
            }
        }
    }
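The following plain-Java sketch shows which rows the `UNION ALL` query selects from the two sample collections. It is only a model of the query's semantics and uses no Flink classes; `StreamSqlSketch` and `query` are illustrative names, and two in-memory lists stand in for the streams.

```java
import java.util.Arrays;
import java.util.List;
import java.util.stream.Collectors;
import java.util.stream.Stream;

/** Plain-Java model of the UNION ALL query; no Flink classes are involved. */
class StreamSqlSketch {

    /** Mirrors the Order POJO from the example above. */
    static final class Order {
        final Long user;
        final String product;
        final int amount;

        Order(Long user, String product, int amount) {
            this.user = user;
            this.product = product;
            this.amount = amount;
        }

        @Override
        public String toString() {
            return "Order{user=" + user + ", product='" + product + "', amount=" + amount + "}";
        }
    }

    /** Rows of A with amount > 2, followed by rows of B with amount < 2. */
    static List<Order> query(List<Order> orderA, List<Order> orderB) {
        return Stream.concat(
                orderA.stream().filter(o -> o.amount > 2),
                orderB.stream().filter(o -> o.amount < 2))
            .collect(Collectors.toList());
    }

    public static void main(String[] args) {
        List<Order> orderA = Arrays.asList(
            new Order(1L, "beer", 3),
            new Order(1L, "diaper", 4),
            new Order(3L, "rubber", 2));
        List<Order> orderB = Arrays.asList(
            new Order(2L, "pen", 3),
            new Order(2L, "rubber", 3),
            new Order(4L, "beer", 1));

        // Keeps (1, beer, 3) and (1, diaper, 4) from A, and (4, beer, 1) from B.
        query(orderA, orderB).forEach(System.out::println);
    }
}
```

Because the query only filters and unions rows, each result row is emitted once and never updated afterwards, which fits the use of `toAppendStream` in the Flink example.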

Here, StreamTableEnvironment is described in its Javadoc as follows:

    /**
     * The {@link TableEnvironment} for a Java {@link StreamExecutionEnvironment} that works with
     * {@link DataStream}s.
     *
     * <p>A TableEnvironment can be used to:
     * <ul>
     *     <li>convert a {@link DataStream} to a {@link Table}</li>
     *     <li>register a {@link DataStream} in the {@link TableEnvironment}'s catalog</li>
     *     <li>register a {@link Table} in the {@link TableEnvironment}'s catalog</li>
     *     <li>scan a registered table to obtain a {@link Table}</li>
     *     <li>specify a SQL query on registered tables to obtain a {@link Table}</li>
     *     <li>convert a {@link Table} into a {@link DataStream}</li>
     *     <li>explain the AST and execution plan of a {@link Table}</li>
     * </ul>
     */

StreamTableSource:

    /** Defines an external stream table and provides read access to its data.
     *
     * @param <T> Type of the {@link DataStream} created by this {@link TableSource}.
     */

StreamTableSink:

    /**
     * Defines an external stream table and provides write access to its data.
     *
     * @param <T> Type of the {@link DataStream} created by this {@link TableSink}.
     */

2. Table API

Example:

    package org.apache.flink.table.examples.java;

    import org.apache.flink.api.java.DataSet;
    import org.apache.flink.api.java.ExecutionEnvironment;
    import org.apache.flink.table.api.Table;
    import org.apache.flink.table.api.java.BatchTableEnvironment;

    /**
     * Simple example for demonstrating the use of the Table API for a Word Count in Java.
     *
     * <p>This example shows how to:
     *  - Convert DataSets to Tables
     *  - Apply group, aggregate, select, and filter operations
     */
    public class WordCountTable {

        // *************************************************************************
        //     PROGRAM
        // *************************************************************************

        public static void main(String[] args) throws Exception {
            ExecutionEnvironment env = ExecutionEnvironment.createCollectionsEnvironment();
            BatchTableEnvironment tEnv = BatchTableEnvironment.create(env);

            DataSet<WC> input = env.fromElements(
                new WC("Hello", 1),
                new WC("Ciao", 1),
                new WC("Hello", 1));

            Table table = tEnv.fromDataSet(input);

            Table filtered = table
                .groupBy("word")
                .select("word, frequency.sum as frequency")
                .filter("frequency = 2");

            DataSet<WC> result = tEnv.toDataSet(filtered, WC.class);

            result.print();
        }

        // *************************************************************************
        //     USER DATA TYPES
        // *************************************************************************

        /**
         * Simple POJO containing a word and its respective count.
         */
        public static class WC {
            public String word;
            public long frequency;

            // public constructor to make it a Flink POJO
            public WC() {}

            public WC(String word, long frequency) {
                this.word = word;
                this.frequency = frequency;
            }

            @Override
            public String toString() {
                return "WC " + word + " " + frequency;
            }
        }
    }

3. Data conversion

3.1 Converting between DataSet and Table

DataSet --> Table

By registration:

    // register the DataSet as table "WordCount"
    tEnv.registerDataSet("WordCount", input, "word, frequency");

By conversion:

    Table table = tEnv.fromDataSet(input);

Table --> DataSet

    DataSet<WC> result = tEnv.toDataSet(filtered, WC.class);

3.2 Converting between DataStream and Table

DataStream --> Table

By registration:

    tEnv.registerDataStream("OrderB", orderB, "user, product, amount");

By conversion:

    Table tableA = tEnv.fromDataStream(orderA, "user, product, amount");

Table --> DataStream

    DataStream<Order> resultStream = tEnv.toAppendStream(result, Order.class);

References

[1] https://ci.apache.org/projects/flink/flink-docs-release-1.8/concepts/programming-model.html

[2] Flink原理、实战与性能优化 (Flink: Principles, Practice, and Performance Optimization)
