review backpropagation

The goal of backpropagation is to compute the partial derivatives ∂C/∂w and ∂C/∂b of the cost function C with respect to any weight ww or bias b in the network.

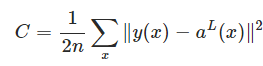

we use the quadratic cost function

two assumptions :

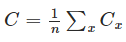

1: The first assumption we need is that the cost function can be written as an average

(case for the quadratic cost function)

(case for the quadratic cost function)

The reason we need this assumption is because what backpropagation actually lets us do is compute the partial derivatives

∂Cx/∂w and ∂Cx/∂b for a single training example. We then recover ∂C/∂w and ∂C/∂b by averaging over training examples. In

fact, with this assumption in mind, we'll suppose the training example x has been fixed, and drop the x subscript, writing the

cost Cx as C. We'll eventually put the x back in, but for now it's a notational nuisance that is better left implicit.

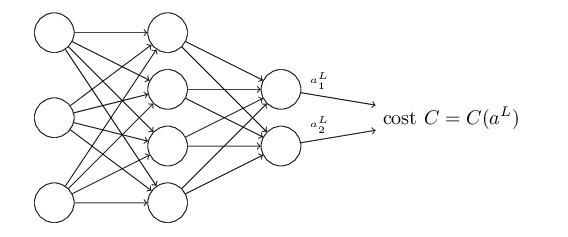

2: The cost function can be written as a function of the outputs from the neural network

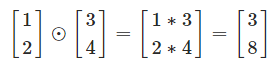

the Hadamard product

(s⊙t)j=sjtj(s⊙t)j=sjtj

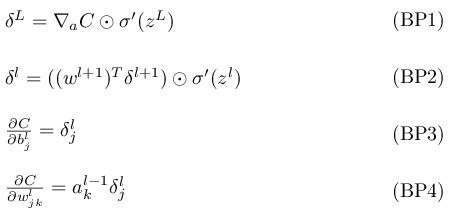

The four fundamental equations behind backpropagation

BP1

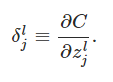

:the error in the jth neuron in the lth layer

:the error in the jth neuron in the lth layer

You might wonder why the demon is changing the weighted input zlj. Surely it'd be more natural to imagine the demon changing

the output activation alj, with the result that we'd be using ∂C/∂alj as our measure of error. In fact, if you do this things work out quite

similarly to the discussion below. But it turns out to make the presentation of backpropagation a little more algebraically complicated.

So we'll stick with δlj=∂C/∂zlj as our measure of error.

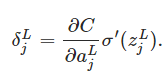

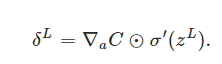

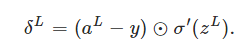

An equation for the error in the output layer, δL: The components of δL are given by

it's easy to rewrite the equation in a matrix-based form, as

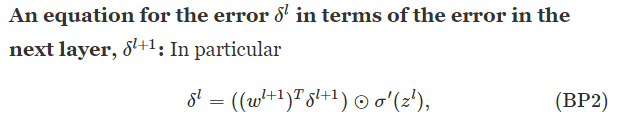

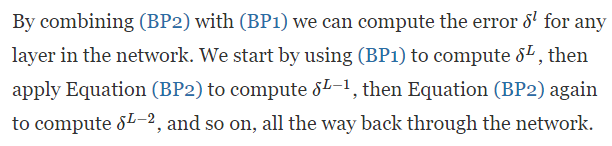

BP2

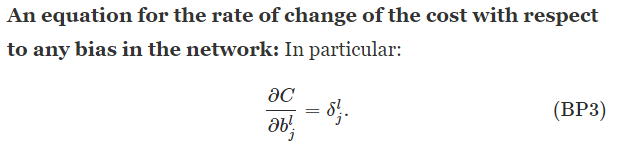

BP3

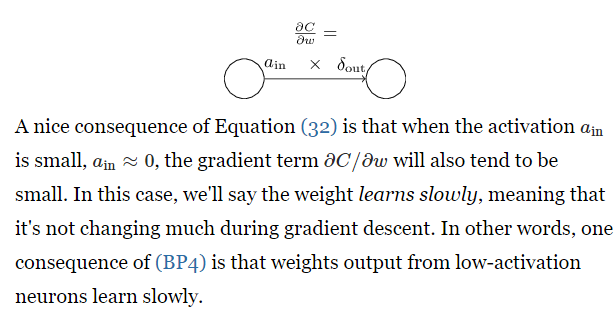

BP4

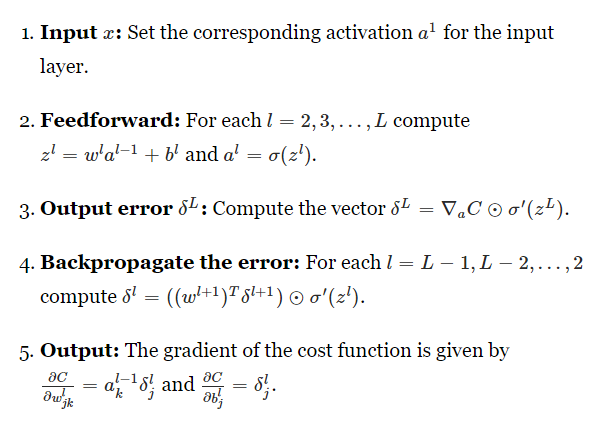

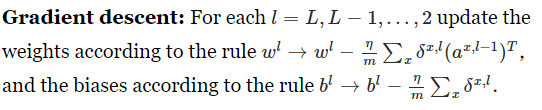

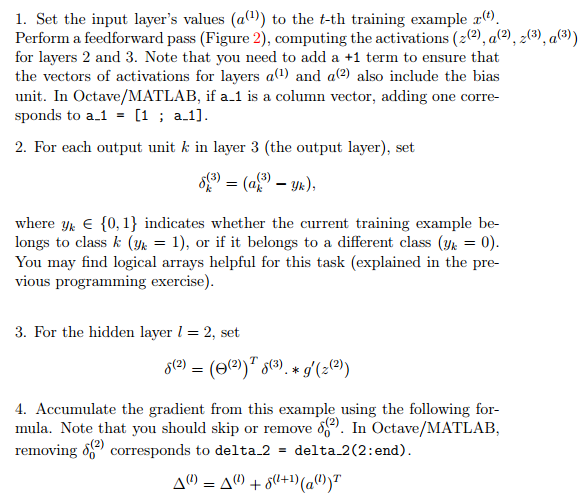

The backpropagation algorithm

Of course, to implement stochastic gradient descent in practice you also need an outer loop generating mini-batches

of training examples, and an outer loop stepping through multiple epochs of training. I've omitted those for simplicity.

reference: http://neuralnetworksanddeeplearning.com/chap2.html

------------------------------------------------------------------------------------------------

reference:Machine Learning byAndrew Ng

review backpropagation的更多相关文章

- (Review cs231n) Backpropagation and Neural Network

损失由两部分组成: 数据损失+正则化损失(data loss + regularization) 想得到损失函数关于权值矩阵W的梯度表达式,然后进性优化操作(损失相当于海拔,你在山上的位置相当于W,你 ...

- A review of learning in biologically plausible spiking neural networks

郑重声明:原文参见标题,如有侵权,请联系作者,将会撤销发布! Contents: ABSTRACT 1. Introduction 2. Biological background 2.1. Spik ...

- Deep Learning论文翻译(Nature Deep Review)

原论文出处:https://www.nature.com/articles/nature14539 by Yann LeCun, Yoshua Bengio & Geoffrey Hinton ...

- 我们是怎么做Code Review的

前几天看了<Code Review 程序员的寄望与哀伤>,想到我们团队开展Code Review也有2年了,结果还算比较满意,有些经验应该可以和大家一起分享.探讨.我们为什么要推行Code ...

- Code Review 程序员的寄望与哀伤

一个程序员,他写完了代码,在测试环境通过了测试,然后他把它发布到了线上生产环境,但很快就发现在生产环境上出了问题,有潜在的 bug. 事后分析,是生产环境的一些微妙差异,使得这种 bug 场景在线下测 ...

- AutoMapper:Unmapped members were found. Review the types and members below. Add a custom mapping expression, ignore, add a custom resolver, or modify the source/destination type

异常处理汇总-后端系列 http://www.cnblogs.com/dunitian/p/4523006.html 应用场景:ViewModel==>Mode映射的时候出错 AutoMappe ...

- Git和Code Review流程

Code Review流程1.根据开发任务,建立git分支, 分支名称模式为feature/任务名,比如关于API相关的一项任务,建立分支feature/api.git checkout -b fea ...

- 神经网络与深度学习(3):Backpropagation算法

本文总结自<Neural Networks and Deep Learning>第2章的部分内容. Backpropagation算法 Backpropagation核心解决的问题: ∂C ...

- 故障review的一些总结

故障review的一些总结 故障review的目的 归纳出现故障产生的原因 检查故障的产生是否具有普遍性,并尽可能的保证同类问题不在出现, 回顾故障的处理流程,并检查处理过程中所存在的问题.并确定此类 ...

随机推荐

- Java VM(虚拟机) 参数

-XX:PermSize/-XX:MaxPermSize,永久代内存: 1. 虚拟机参数:-ea,支持 assert 断言关键字 eclipse 默认是不开启此参数的,也就是虽然编译器支持 asser ...

- 洛谷 P1854 花店橱窗布置

题目描述 某花店现有F束花,每一束花的品种都不一样,同时至少有同样数量的花瓶,被按顺序摆成一行,花瓶的位置是固定的,从左到右按1到V顺序编号,V是花瓶的数目.花束可以移动,并且每束花用1到F的整数标识 ...

- keepalived之 ipvsadm-1.26-4(lvs)+ keepalived-1.2.24 安装

一.安装 LVS 前提:已经提前配置好本地 Yum 源 配置过程可参考> http://blog.csdn.net/zhang123456456/article/details/56690945 ...

- 动态规划算法(后附常见动态规划为题及Java代码实现)

一.基本概念 动态规划过程是:每次决策依赖于当前状态,又随即引起状态的转移.一个决策序列就是在变化的状态中产生出来的,所以,这种多阶段最优化决策解决问题的过程就称为动态规划. 二.基本思想与策略 基本 ...

- 用Azure上Cognitive Service的Face API识别人脸

Azure在China已经发布了Cognitive Service,包括人脸识别.计算机视觉识别和情绪识别等服务. 本文将介绍如何用Face API识别本地或URL的人脸. 一 创建Cognitive ...

- linux php相关命令

学习源头:http://www.cnblogs.com/myjavawork/articles/1869205.html php -m 查看php开启的相关模块 php -v 查看php的版本 运行直 ...

- ES之二:Elasticsearch原理

Elasticsearch是最近两年异军突起的一个兼有搜索引擎和NoSQL数据库功能的开源系统,基于Java/Lucene构建.最近研究了一下,感觉 Elasticsearch 的架构以及其开源的生态 ...

- win 7命令行大全

一.win+(X) 其中win不会不知道吧,X为代码! Win+L 锁定当前用户. Win+E 资源管理器. Win+R 运行. Win+G (Gadgets)顺序切换边栏小工具. Win+U ...

- java selenium webdriver第一讲 seleniumIDE

Selenium是ThoughtWorks公司,一个名为Jason Huggins的测试为了减少手工测试的工作量,自己实现的一套基于Javascript语言的代码库 使用这套库可以进行页面的交互操作, ...

- python 函数相关定义

1.为什么要使用函数? 减少代码的冗余 2.函数先定义后使用(相当于变量一样先定义后使用) 3.函数的分类: 内置函数:python解释器自带的,直接拿来用就行了 自定义函数:根据自己的需求自己定义的 ...