DeepLearning Intro - sigmoid and shallow NN

This is a series of Machine Learning summary note. I will combine the deep learning book with the deeplearning open course . Any feedback is welcomed!

First let's go through some basic NN concept using Bernoulli classification problem as an example.

Activation Function

1.Bernoulli Output

1. Definition

When dealing with Binary classification problem, what activavtion function should we use in the output layer?

Basically given \(x \in R^n\), How to get \(P(y=1|x)\) ?

2. Loss function

Let $\hat{y} = P(y=1|x) $, We would expect following output

\[P(y|x) = \begin{cases}

\hat{y} & \quad when & y= 1\\

1-\hat{y} & \quad when & y= 0\\

\end{cases}

\]

Above can be simplified as

\[P(y|x)= \hat{y}^{y}(1-\hat{y})^{1-y}\]

Therefore, the maximum likelihood of m training samples will be

\[\theta_{ML} = argmax\prod^{m}_{i=1}P(y^i|x^i)\]

As ususal we take the log of above function and get following. Actually for gradient descent log has other advantages, which we will discuss later.

\[log(\theta_{ML}) = argmax\sum^{m}_{i=1} ylog(\hat{y})+(1-y)log(1-\hat{y}) \]

And the cost function for optimization is following

\[J(w,b) = \sum ^{m}_{i=1}L(y^i,\hat{y}^i)= -\sum^{m}_{i=1}ylog(\hat{y})+(1-y)log(1-\hat{y})\]

The cost function is the sum of loss from m training samples, which measures the performance of classification algo.

And yes here it is exactly the negative of log likelihood. While Cost function can be different from negative log likelihood, when we apply regularization. But here let's start with simple version.

So here comes our next problem, how can we get 1-dimension $ log(\hat{y})$, given input \(x\), which is n-dimension vector ?

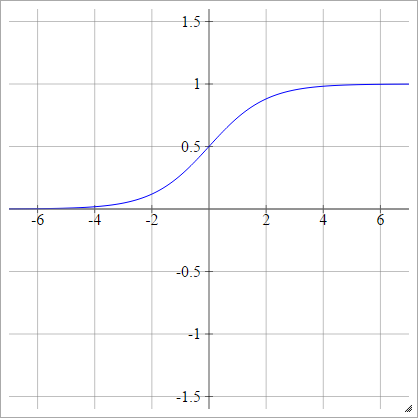

3. Activtion function - Sigmoid

Let \(h\) denotes the output from the previous hidden layer that goes into the final output layer. And a linear transformation is applied to \(h\) before activation function.

Let \(z = w^Th +b\)

The assumption here is

\[log(\hat{y}) = \begin{cases}

z & \quad when & y= 1\\

0 & \quad when & y= 0\\

\end{cases}

\]

Above can be simplified as

\[log(\hat{y}) = yz\quad \to \quad \hat{y} = exp(yz)\]

This is an unnormalized distribution of \(\hat{y}\). Because \(y\) denotes probability, we need to further normalize it to $ [0,1]$.

\[\hat{y} = \frac{exp(yz)} {\sum^1_{y=0}exp(yz)} \\

=\frac{exp(z)}{1+exp(z)}\\

\quad \quad \quad \quad = \frac{1}{1+exp(-z)} = \sigma(z)

\]

Bingo! Here we go - Sigmoid Function: \(\sigma(z) = \frac{1}{1+exp(-z)}\)

\[p(y|x) = \begin{cases}

\sigma(z) & \quad when & y= 1\\

1-\sigma(z) & \quad when & y= 0\\

\end{cases}

\]

Sigmoid function has many pretty cool features like following:

\[ 1- \sigma(x) = \sigma(-x) \\

\frac{d}{dx} \sigma(x) = \sigma(x)(1-\sigma(x)) \\

\quad \quad = \sigma(x)\sigma(-x)

\]

Using the first feature above, we can further simply the bernoulli output into following:

\[p(y|x) = \sigma((2y-1)z)\]

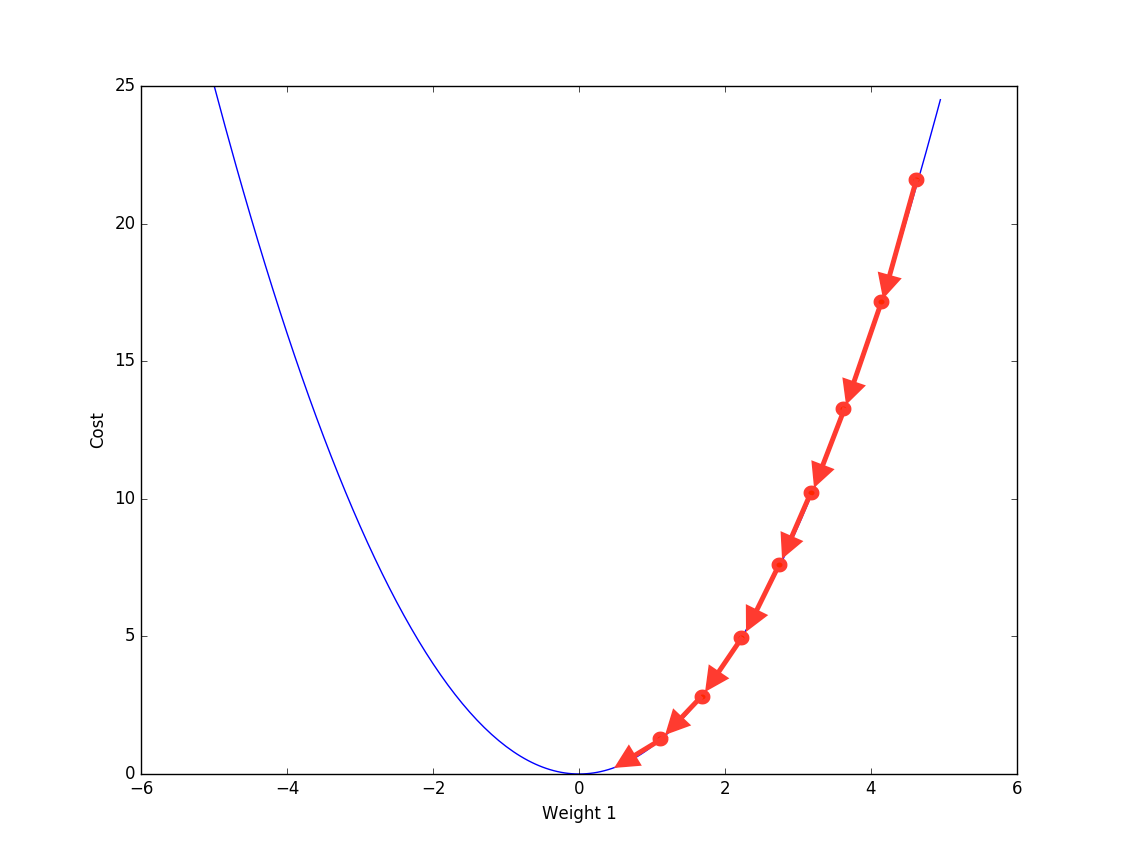

4. gradient descent and back propagation

Now we have target cost fucntion to optimize. How does the NN learn from training data? The answer is -- Back Propagation.

Actually back propagation is not some fancy method that is designed for Neural Network. When training sample is big, we can use back propagation to train linear regerssion too.

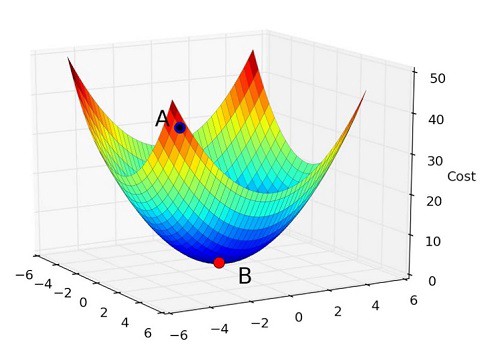

Back Propogation is iteratively using the partial derivative of cost function to update the parameter, in order to reach local optimum.

$ \

Looping \quad m \quad samples :\

w= w - \frac{\partial J(w,b)}{\partial w} \

b= b - \frac{\partial J(w,b)}{\partial b}

$

Bascically, for each training sample \((x,y)\), we compare the \(y\) with \(\hat{y}\) from output layer. Get the difference, and compute which part of difference is from which parameter( by partial derivative). And then update the parameter accordingly.

And the derivative of sigmoid function can be calcualted using chaining method:

For each training sample, let \(\hat{y}=a = \sigma(z)\)

\[ \frac{\partial L(a,y)}{\partial w} =

\frac{\partial L(a,y)}{\partial a} \cdot

\frac{\partial a}{\partial z} \cdot

\frac{\partial z}{\partial w}\]

Where

1.$\frac{\partial L(a,y)}{\partial a}

=-\frac{y}{a} + \frac{1-y}{1-a} $

Given loss function is

\(L(a,y) = -(ylog(a) + (1-y)log(1-a))\)

2.\(\frac{\partial a}{\partial z} = \sigma(z)(1-\sigma(z)) = a(1-a)\).

See above for sigmoid features.

3.\(\frac{\partial z}{\partial w} = x\)

Put them together we get :

\[ \frac{\partial L(a,y)}{\partial w} = (a-y)x\]

This is exactly the update we will have from each training sample \((x,y)\) to the parameter \(w\).

5. Entire work flow.

Summarizing everything. A 1-layer binary classification neural network is trained as following:

- Forward propagation: From \(x\), we calculate \(\hat{y}= \sigma(z)\)

- Calculate the cost function \(J(w,b)\)

- Back propagation: update parameter \((w,b)\) using gradient descent.

- keep doing above until the cost function stop improving (improment < certain threshold)

6. what's next?

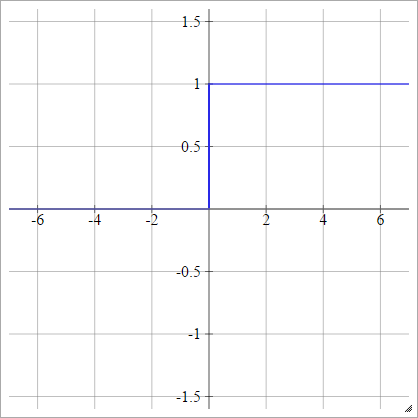

When NN has more than 1 layer, there will be hidden layers in between. And to get non-linear transformation of x, we also need different types of activation function for hidden layer.

However sigmoid is rarely used as hidden layer activation function for following reasons

- vanishing gradient descent

the reason we can't use [left] as activation function is because the gradient is 0 when \(z>1 ,z <0\).

Sigmoid only solves this problem partially. Becuase \(gradient \to 0\), when \(z>1 ,z <0\).

| \(p(y=1\|x)= max\{0,min\{1,z\}\}\) | \(p(y=1\|x)= \sigma(z)\) |

|---|---|

|

|

- non-zero centered

To be continued

Reference

- Ian Goodfellow, Yoshua Bengio, Aaron Conrville, "Deep Learning"

- Deeplearning.ai https://www.deeplearning.ai/

DeepLearning Intro - sigmoid and shallow NN的更多相关文章

- Sigmoid function in NN

X = [ones(m, ) X]; temp = X * Theta1'; t = size(temp, ); temp = [ones(t, ) temp]; h = temp * Theta2' ...

- Deeplearning - Overview of Convolution Neural Network

Finally pass all the Deeplearning.ai courses in March! I highly recommend it! If you already know th ...

- DeepLearning - Regularization

I have finished the first course in the DeepLearnin.ai series. The assignment is relatively easy, bu ...

- DeepLearning - Forard & Backward Propogation

In the previous post I go through basic 1-layer Neural Network with sigmoid activation function, inc ...

- Pytorch_第六篇_深度学习 (DeepLearning) 基础 [2]---神经网络常用的损失函数

深度学习 (DeepLearning) 基础 [2]---神经网络常用的损失函数 Introduce 在上一篇"深度学习 (DeepLearning) 基础 [1]---监督学习和无监督学习 ...

- 0802_转载-nn模块中的网络层介绍

0802_转载-nn 模块中的网络层介绍 目录 一.写在前面 二.卷积运算与卷积层 2.1 1d 2d 3d 卷积示意 2.2 nn.Conv2d 2.3 转置卷积 三.池化层 四.线性层 五.激活函 ...

- Neural Networks and Deep Learning

Neural Networks and Deep Learning This is the first course of the deep learning specialization at Co ...

- 关于BP算法在DNN中本质问题的几点随笔 [原创 by 白明] 微信号matthew-bai

随着deep learning的火爆,神经网络(NN)被大家广泛研究使用.但是大部分RD对BP在NN中本质不甚清楚,对于为什这么使用以及国外大牛们是什么原因会想到用dropout/sigmoid ...

- Pytorch实现UNet例子学习

参考:https://github.com/milesial/Pytorch-UNet 实现的是二值汽车图像语义分割,包括 dense CRF 后处理. 使用python3,我的环境是python3. ...

随机推荐

- Python 学习笔记(九)Python元组和字典(二)

什么是字典 字典是另一种可变容器模型,且可存储任意类型对象. 字典的每个键值 key=>value 对用冒号 : 分割,每个键值对之间用逗号 , 分割,整个字典包括在花括号 {} 中 键必须是唯 ...

- Oracle 常用脚本

ORACLE 默认用户名密码 sys/change_on_install SYSDBA 或 SYSOPER 不能以 NORMAL 登录,可作为默认的系统管理员 system/manager SYSDB ...

- 使用泛型与不使用泛型的Map的遍历

https://www.cnblogs.com/fqfanqi/p/6187085.html

- Xcode9.2 添加iOS11.2以下旧版本模拟器

问题起源 由于手边项目需要适配到iOS7, 但是手边的测试机都被更新到最新版本,所以有些潜在的bug,更不发现不了.最近就是有个用户提出一个bug,而且是致命的,app直接闪退.app闪退,最常见的无 ...

- ES5拓展

一.JSON拓展 1.JSON.parse(str,fun):将JSON字符串转为js对象 两个参数:str表示要处理的字符串:fun处理函数,函数有两个参数,属性名.属性值 // 定义json字符串 ...

- 【C】数据类型和打印(print)

char -128 ~ 127 (1 Byte) unsigned char 0 ~ 255 (1 Byte) short -32768 ~ 32767 (2 Bytes) unsigned sho ...

- centos7下使用n grok编译服务端和客户端穿透内网

(发现博客园会屏蔽一些标题中的关键词,比如ngrok.内网穿透,原因不知,所以改了标题才能正常访问,) 有时候想在自己电脑.路由器或者树莓派上搭建一些web.vpn等服务让自己用,但是自己的电脑一般没 ...

- 爬取猫眼TOP100

学完正则的一个小例子就是爬取猫眼排行榜TOP100的所有电影信息 看一下网页结构: 可以看出要爬取的信息在<dd>标签和</dd>标签中间 正则表达式如下: pattern ...

- Python(9-18天总结)

day9:函数:def (形参): 函数体 函数名(实参)形参:在函数声明位置的变量 1. 位置参数 2. 默认值参数 3. 混合 位置, 默认值 4. 动态传参, *args 动态接收位置参数, * ...

- Python 爬虫 七夕福利

祝大家七夕愉快 妹子图 import requests from lxml import etree import os def headers(refere):#图片的下载可能和头部的referer ...