Spark, Part 3: Cluster Mode Overview

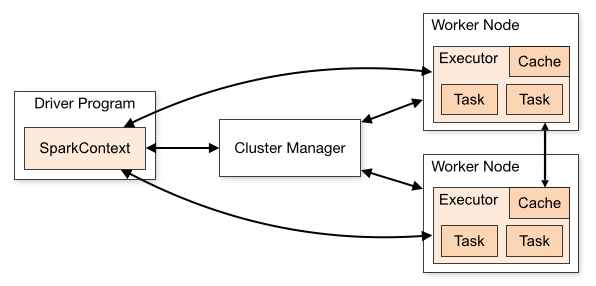

Spark applications run as independent sets of processes on a cluster, coordinated by the SparkContext object in your main program (called the driver program).

Specifically, to run on a cluster, the SparkContext can connect to several types of cluster managers (either Spark’s own standalone cluster manager, Mesos or YARN), which allocate resources across applications. Once connected, Spark acquires executors on nodes in the cluster, which are processes that run computations and store data for your application. Next, it sends your application code (defined by JAR or Python files passed to SparkContext) to the executors. Finally, SparkContext sends tasks to the executors to run.

There are several useful things to note about this architecture:

1. Each application gets its own executor processes, which stay up for the duration of the whole application and run tasks in multiple threads. This has the benefit of isolating applications from each other, on both the scheduling side (each driver schedules its own tasks) and executor side (tasks from different applications run in different JVMs). However, it also means that data cannot be shared across different Spark applications (instances of SparkContext) without writing it to an external storage system.

2. Spark is agnostic to the underlying cluster manager. As long as it can acquire executor processes, and these communicate with each other, it is relatively easy to run it even on a cluster manager that also supports other applications (e.g. Mesos/YARN).

3. The driver program must listen for and accept incoming connections from its executors throughout its lifetime (e.g., see spark.driver.port in the network config section). As such, the driver program must be network addressable from the worker nodes.

4. Because the driver schedules tasks on the cluster, it should be run close to the worker nodes, preferably on the same local area network. If you’d like to send requests to the cluster remotely, it’s better to open an RPC to the driver and have it submit operations from nearby than to run a driver far away from the worker nodes.
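The isolation described in point 1 can be illustrated with a toy model. This is not Spark code: `ToyApplication` is a hypothetical class that uses Python thread pools as stand-ins for executor processes (real executors are separate JVMs on worker nodes). It only shows the shape of the idea: each application owns its executors, and its driver schedules tasks onto them and onto nothing else.

```python
from concurrent.futures import ThreadPoolExecutor


class ToyApplication:
    """Toy stand-in for one Spark application: a driver plus its own executors."""

    def __init__(self, name, num_executors=2, threads_per_executor=2):
        self.name = name
        # Each "executor" is a private thread pool that runs tasks in
        # multiple threads. Nothing here is shared with other applications.
        self.executors = [
            ThreadPoolExecutor(max_workers=threads_per_executor)
            for _ in range(num_executors)
        ]

    def run_job(self, func, partitions):
        """Act as the driver: send one task per partition to this app's executors."""
        futures = [
            # Round-robin placement; an assumption of this sketch, not Spark's policy.
            self.executors[i % len(self.executors)].submit(func, part)
            for i, part in enumerate(partitions)
        ]
        # Collect results in partition order.
        return [f.result() for f in futures]

    def stop(self):
        """Release the executors when the application ends."""
        for executor in self.executors:
            executor.shutdown()


app = ToyApplication("partition-sums")
partial_sums = app.run_job(sum, [[1, 2], [3, 4], [5]])
app.stop()
```

A second `ToyApplication` would get its own pools and could not see `partial_sums` unless the first application wrote it somewhere external, which mirrors the data-sharing limitation noted above.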

Applications can be submitted using the spark-submit script. See the application submission guide.
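As a rough sketch of what a submission looks like, the helper below assembles a spark-submit command line without running it. The `--master`, `--deploy-mode`, and `--conf` options are standard spark-submit options; the helper name `build_spark_submit`, the file names, and the master URL are placeholders invented for this example.

```python
import shlex


def build_spark_submit(app_path, master, deploy_mode="client", conf=None, app_args=()):
    """Assemble a spark-submit argument list. Nothing is executed here."""
    cmd = ["spark-submit", "--master", master, "--deploy-mode", deploy_mode]
    # Each Spark property is passed as its own --conf key=value pair.
    for key, value in sorted((conf or {}).items()):
        cmd += ["--conf", f"{key}={value}"]
    cmd.append(app_path)    # the application JAR or Python file
    cmd.extend(app_args)    # arguments handed to the application itself
    return cmd


# Placeholder values: adjust the master URL, port, and file names to your cluster.
cmd = build_spark_submit(
    "my_app.py",
    master="spark://master-host:7077",
    deploy_mode="client",
    conf={"spark.driver.port": "40000"},
    app_args=["input.txt"],
)
print(shlex.join(cmd))
```

The resulting list could be passed to `subprocess.run(cmd, check=True)` on a machine where spark-submit is on the `PATH`. Pinning `spark.driver.port` is optional; it is shown only because a fixed port can make it easier to satisfy the requirement that the driver be reachable from the worker nodes.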

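Each driver program has a web UI (on port 4040 by default) that displays information about running tasks, executors, and storage usage. It can be accessed at http://<driver-node>:4040. See Monitoring and Instrumentation.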
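For scripting against the UI, a small helper can build the address from the driver host. The function names below are made up for this sketch. The `/api/v1/applications` path comes from Spark's monitoring documentation (the REST API served by a running application's UI), not from the text above, so check it against your Spark version.

```python
def driver_ui_url(driver_host, port=4040):
    """Return the base URL of the driver's web UI (4040 is the default port)."""
    return f"http://{driver_host}:{port}"


def rest_applications_url(driver_host, port=4040):
    """Return the URL of the REST endpoint that lists applications on that UI."""
    return f"{driver_ui_url(driver_host, port)}/api/v1/applications"


# "driver-node" is a placeholder host name.
print(driver_ui_url("driver-node"))
print(rest_applications_url("driver-node"))
```

The port is a parameter because the UI does not always end up on 4040, for example when the port is configured differently or already taken on that host.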

Spark gives control over resource allocation both across applications and within an application. See Job Scheduling.

Glossary

| Term | Meaning |
|---|---|
| Application | User program built on Spark. Consists of a driver program and executors on the cluster. |
| Application jar | A jar containing the user's Spark application. In some cases users will want to create an "uber jar" containing their application along with its dependencies. The user's jar should never include Hadoop or Spark libraries; however, these will be added at runtime. |
| Driver program | The process running the main() function of the application and creating the SparkContext. |
| Cluster manager | An external service for acquiring resources on the cluster (e.g. standalone manager, Mesos, YARN). |
| Deploy mode | Distinguishes where the driver process runs. In "cluster" mode, the framework launches the driver inside of the cluster. In "client" mode, the submitter launches the driver outside of the cluster. |
| Worker node | Any node that can run application code in the cluster. |
| Executor | A process launched for an application on a worker node, that runs tasks and keeps data in memory or disk storage across them. Each application has its own executors. |
| Task | A unit of work that will be sent to one executor. |
| Job | A parallel computation consisting of multiple tasks that gets spawned in response to a Spark action. |
| Stage | Each job gets divided into smaller sets of tasks called stages that depend on each other (similar to the map and reduce stages in MapReduce). |
