Scrapy notes collection

Read closely: http://scrapy-chs.readthedocs.io/zh_CN/latest/index.html

Contents

TinyScrapy (custom framework)

Supplement

- Stats collector

- log

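Introduction to Scrapy

Scrapy is an application framework written for crawling websites and extracting structured data. It can be used in a range of programs such as data mining, information processing, and archiving historical data.

It was originally designed for page scraping (more precisely, web scraping), but it can also be used to fetch data returned by APIs (for example Amazon Associates Web Services) or as a general-purpose web crawler. Scrapy is broadly applicable: data mining, monitoring, and automated testing.

Scrapy uses the Twisted asynchronous networking library to handle network communication. The overall architecture is roughly as follows.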

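Scrapy Engine

The engine controls the flow of data between all components of the system and triggers events when certain actions occur. See the data flow description below for details.

Scheduler

The scheduler receives requests from the engine and enqueues them, so that it can hand them back when the engine asks for them later.

Downloader

The downloader fetches pages and hands them to the engine, which in turn hands them to the spiders.

Spiders

Spiders are classes written by Scrapy users to parse responses and extract items (the scraped items) or additional URLs to follow. Each spider handles one specific site (or a few). See Spiders for more.

Item Pipeline

The Item Pipeline processes items once the spiders have extracted them. Typical tasks are cleansing, validation, and persistence (for example, storing the item in a database). See Item Pipeline for more.

Downloader middlewares

Downloader middlewares are specific hooks that sit between the engine and the downloader. They process requests on their way from the engine to the downloader and responses on their way back from the downloader to the engine. They provide a convenient mechanism for extending Scrapy by plugging in custom code. See Downloader Middleware for more.

Spider middlewares

Spider middlewares are specific hooks that sit between the engine and the spiders. They process spider input (responses) and output (items and requests). They provide a convenient mechanism for extending Scrapy by plugging in custom code. See Spider Middleware for more.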

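Data flow in Scrapy is controlled by the execution engine and goes as follows:

- The engine opens a website (opens a domain), locates the spider that handles it, and asks that spider for the first URL(s) to crawl.

- The engine gets the first URLs to crawl from the spider and schedules them in the scheduler as Requests.

- The engine asks the scheduler for the next URL to crawl.

- The scheduler returns the next URL to the engine, and the engine forwards it to the downloader through the downloader middlewares (request direction).

- Once the page finishes downloading, the downloader generates a Response for it and sends it to the engine through the downloader middlewares (response direction).

- The engine receives the Response from the downloader and sends it to the spider through the spider middlewares (input direction).

- The spider processes the Response and returns scraped Items and new Requests (to follow) to the engine.

- The engine sends the scraped Items (returned by the spider) to the Item Pipeline and the Requests (returned by the spider) to the scheduler.

- The process repeats (from step 2) until there are no more requests in the scheduler, and the engine closes the site.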
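The loop described in these steps can be imitated with a few lines of plain Python. This is only a toy model under stated assumptions: `FAKE_WEB`, `download`, `parse`, and `run_engine` are hypothetical names made up for illustration, nothing here touches the network or Scrapy itself, and the real engine is asynchronous and routes everything through middlewares.

```python
from collections import deque

# Hypothetical stand-in for the network: URL -> (page text, links on that page).
FAKE_WEB = {
    "http://example.com/": ("home", ["http://example.com/a", "http://example.com/b"]),
    "http://example.com/a": ("page a", ["http://example.com/b"]),
    "http://example.com/b": ("page b", []),
}


def download(url):
    """Downloader: turn a request (here just a URL) into a response dict."""
    text, links = FAKE_WEB[url]
    return {"url": url, "text": text, "links": links}


def parse(response):
    """Spider callback: yield one item per page plus the follow-up requests."""
    yield {"item": response["text"]}
    for link in response["links"]:
        yield {"request": link}


def run_engine(start_urls):
    scheduler = deque(start_urls)    # pending requests, first in first out
    seen = set(start_urls)           # simple duplicate filter
    pipeline_output = []             # what the item pipeline received
    while scheduler:                 # repeat until no requests remain
        url = scheduler.popleft()    # engine asks the scheduler for the next request
        response = download(url)     # engine -> downloader -> engine
        for result in parse(response):               # engine -> spider
            if "item" in result:
                pipeline_output.append(result["item"])   # items go to the pipeline
            elif result["request"] not in seen:
                seen.add(result["request"])
                scheduler.append(result["request"])      # requests go back to the scheduler
    return pipeline_output


items = run_engine(["http://example.com/"])
print(items)
```

Each page yields exactly one item here, and the `seen` set keeps the shared link from being fetched twice.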

Installation

- Linux

- pip3 install scrapy

- Windows

- a. pip3 install wheel

- b. Download Twisted from http://www.lfd.uci.edu/~gohlke/pythonlibs/#twisted

- c. Change into the download directory and run pip3 install Twisted-17.1.0-cp35-cp35m-win_amd64.whl

- d. pip3 install scrapy

- e. Download and install pywin32 from https://sourceforge.net/projects/pywin32/files/ or run pip install pywin32

Installation (Linux, Windows)

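Basic commands

- 1. scrapy startproject <project name>

- - Creates a project directory in the current directory (similar to Django)

- 2. scrapy genspider [-t template] <name> <domain>

- - Creates a spider

- For example:

- scrapy genspider -t basic oldboy oldboy.com

- scrapy genspider -t xmlfeed autohome autohome.com.cn

- PS:

- List all templates: scrapy genspider -l

- Show a template's content: scrapy genspider -d <template name>

- 3. scrapy list

- - Lists the spiders in the project

- 4. scrapy crawl <spider name>

- - Runs a single spider

- scrapy crawl xxx --nolog

- 5. scrapy shell: enters the interactive shell

Basic commands (create a project, run a spider; similar to Django)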

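- Global commands

- startproject: creates a project

- genspider: scrapy genspider [-t template] <name> <domain> generates a spider; -l lists the templates, -t picks a template, name is the spider name, domain is the site's domain

- settings: shows settings

- runspider: runs a spider from a standalone Python file, no project required

- shell: scrapy shell [url] opens an interactive shell, handy for debugging

- --spider=SPIDER: bypasses spider autodetection and forces the given spider

- -c: evaluates the code, prints the result, and exits:

- $ scrapy shell --nolog http://www.example.com/ -c '(response.status, response.url)'

- (200, 'http://www.example.com/')

- --no-redirect: does not follow redirects

- --nolog: does not print the log

- response.status: the response status code

- response.url

- response.text; response.body: the response as text; the response as bytes

- view(response): opens the locally downloaded page in a browser, useful for analysing the page (for example, elements that are not static)

- fetch: shows how the spider would fetch a page; common options:

- --spider=SPIDER: bypasses spider autodetection and forces the given spider

- --headers: shows the response headers

- --no-redirect: does not follow redirects

- view: same as view in the interactive shell

- version

- Project commands

- crawl: scrapy crawl <spider> starts crawling with the given spider (set ROBOTSTXT_OBEY = False in the settings file if the crawl should not be blocked by robots.txt)

- check: scrapy check [-l] <spider> runs the spider's contract checks

- list: lists the spiders

- edit: edits a spider from the command line (not much use)

- parse: scrapy parse <url> [options] fetches the URL and parses it with the given callback (a way to verify that a callback works)

- --callback or -c: the callback to use

- bench: runs a quick benchmark of crawl performance

Commands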

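Project structure and spider overview

- project_name/

  - scrapy.cfg

  - project_name/

    - __init__.py

    - items.py # defines Items, similar to Django's Model

    - pipelines.py # defines the persistence classes

    - settings.py # settings

    - spiders/ # directory holding all custom spiders

      - __init__.py

      - spider1.py

      - spider2.py

      - spider3.py

- File descriptions:

- scrapy.cfg: the project's top-level configuration (the settings that actually affect crawling live in settings.py)

- items.py: templates for storing data, used to structure it, like Django's Model

- pipelines: data-processing behaviour, typically persisting the structured data

- settings.py: configuration file, e.g. recursion depth, concurrency, download delay

- spiders: spider directory; create files here and write the crawl rules

Project structure and description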

Example:

- import scrapy

- class XiaoHuarSpider(scrapy.spiders.Spider):

-     name = "xiaohuar"  # spider name (required)

-     allowed_domains = ["xiaohuar.com"]  # allowed domains

-     start_urls = [

-         "http://www.xiaohuar.com/hua/",  # start URL

-     ]

-     def parse(self, response):

-         # Callback invoked with the result of fetching each start URL

-         pass

spider1.py

- import sys,os

- import io

- sys.stdout=io.TextIOWrapper(sys.stdout.buffer,encoding='gb18030')

Windows console encoding

A simple usage example

- import hashlib

- import scrapy

- from scrapy.http import Request

- class DigSpider(scrapy.Spider):

-     # Spider name; the crawl command starts the spider by this name

-     name = "dig"

-     # Allowed domains

-     allowed_domains = ["chouti.com"]

-     # Start URLs

-     start_urls = [

-         'http://dig.chouti.com/',

-     ]

-     # Maps MD5(url) -> url for pages that have already been requested

-     has_request_set = {}

-     def parse(self, response):

-         print(response.url)

-         # Legacy spelling: HtmlXPathSelector(response).select(...); response.xpath(...) is the current equivalent

-         page_list = response.xpath(r'//div[@id="dig_lcpage"]//a[re:test(@href, "/all/hot/recent/\d+")]/@href').extract()

-         for page in page_list:

-             page_url = 'http://dig.chouti.com%s' % page

-             key = self.md5(page_url)

-             if key not in self.has_request_set:

-                 self.has_request_set[key] = page_url

-                 yield Request(url=page_url, method='GET', callback=self.parse)

-     @staticmethod

-     def md5(val):

-         return hashlib.md5(val.encode('utf-8')).hexdigest()

spider1.py

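To run this spider, change into the project directory in a terminal and run:

scrapy crawl dig --nolog

The key points of the code above:

- Request is the class that wraps a request; yielding one from a callback tells Scrapy to go on and fetch that URL

- The selector (HtmlXPathSelector in older Scrapy, response.xpath() in current versions) structures the HTML and provides the selection functions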
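The duplicate check in the spider above boils down to "hash the URL, look the hash up in a dict". A minimal sketch of that idea in isolation, with hypothetical helper names `url_key` and `filter_new` that are not part of Scrapy:

```python
import hashlib


def url_key(url):
    """Return the hex MD5 digest of a URL, used as a compact dedup key."""
    return hashlib.md5(url.encode("utf-8")).hexdigest()


def filter_new(urls, seen):
    """Yield only URLs whose key is not yet in `seen`, recording each one yielded."""
    for url in urls:
        key = url_key(url)
        if key in seen:
            continue
        seen[key] = url
        yield url


seen = {}
first = list(filter_new([
    "http://dig.chouti.com/all/hot/recent/2",
    "http://dig.chouti.com/all/hot/recent/3",
    "http://dig.chouti.com/all/hot/recent/2",   # repeated within the same page
], seen))
second = list(filter_new(["http://dig.chouti.com/all/hot/recent/3"], seen))
print(first, second)
```

Scrapy's scheduler already filters duplicate requests by default, so a hand-rolled set like this mostly serves to show the mechanism.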

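Selectors

- nodename: selects all nodes with that name

- /: selects from the root node

- //: selects matching nodes anywhere in the document below the current node, regardless of their position

- .: selects the current node

- ..: selects the parent of the current node

- @: selects attributes

- *: matches any element node

- @*: matches any attribute node

- node(): matches a node of any kind

Common path expressions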
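A few of these path expressions can be tried without Scrapy, using the standard library's `xml.etree.ElementTree`. Note the assumptions: ElementTree implements only a subset of XPath (no `contains()`, no `re:test`, and `@attr` works only inside predicates), so this sketch reads attributes with `.get()`. The markup is a cut-down, well-formed variant of the sample HTML used in these notes.

```python
import xml.etree.ElementTree as ET

doc = ET.fromstring(
    "<body>"
    "<ul>"
    "<li class='item-0'><a id='i1' href='link.html'>first item</a></li>"
    "<li class='item-1'><a id='i2' href='llink.html'>second item</a></li>"
    "</ul>"
    "<div><a href='llink2.html'>third item</a></div>"
    "</body>"
)

# //  : every <a> below the current node, at any depth
all_hrefs = [a.get("href") for a in doc.findall(".//a")]

# [@id] : only the <a> elements that carry an id attribute
ids = [a.get("id") for a in doc.findall(".//a[@id]")]

# [@id='i1'] : attribute equality
first_text = doc.find(".//a[@id='i1']").text

# ./ul/li/a : an explicit path relative to the current node
list_hrefs = [a.get("href") for a in doc.findall("./ul/li/a")]

# .. : the parent of each matched <a>
parent_tags = [p.tag for p in doc.findall(".//a/..")]

print(all_hrefs, ids, first_text, list_hrefs, parent_tags)
```

Inside a spider the same expressions would go through `response.xpath(...)`, which supports full XPath 1.0 plus the `re:` extension functions.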

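- .class, e.g. .color: selects all elements with class="color"

- #id, e.g. #info: selects all elements with id="info"

- *: selects all elements

- element, e.g. p: selects all p elements

- element,element, e.g. div,p: selects all div elements and all p elements

- element element, e.g. div p: selects all p elements inside div elements

- [attribute], e.g. [target]: selects all elements that have a target attribute

- [attribute=value], e.g. [target=_blank]: selects all elements with target="_blank"

CSS selectors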

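- contains: a[contains(@href, "link")] matches a tags whose href contains "link"

- starts-with: a[starts-with(@href, "link")] matches a tags whose href starts with "link"

- re:test: a[re:test(@id, "i\d+")] matches a tags whose id matches the pattern i\d+

- ...

Advanced usage (XPath functions)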

- Without extract...(): the result is a selector object

- extract(): extracts the matches as a list

- extract_first(): extracts the first match

Extraction

- #!/usr/bin/env python

- # -*- coding:utf-8 -*-

- from scrapy.selector import Selector  # HtmlXPathSelector is the legacy API; Selector replaces it

- from scrapy.http import HtmlResponse

- html = """<!DOCTYPE html>

- <html>

- <head lang="en">

- <meta charset="UTF-8">

- <title></title>

- </head>

- <body>

- <ul>

- <li class="item-"><a id='i1' href="link.html">first item</a></li>

- <li class="item-0"><a id='i2' href="llink.html">first item</a></li>

- <li class="item-1"><a href="llink2.html">second item<span>vv</span></a></li>

- </ul>

- <div><a href="llink2.html">second item</a></div>

- </body>

- </html>

- """

- response = HtmlResponse(url='http://example.com', body=html,encoding='utf-8')

- # hxs = HtmlXPathSelector(response)

- # print(hxs)

- # hxs = Selector(response=response).xpath('//a') # 查找整个html中所有a标签

- # print(hxs)

- # hxs = Selector(response=response).xpath('//a[2]') # 查找整个html中所有a标签的第二个元素

- # print(hxs)

- # hxs = Selector(response=response).xpath('//a[@id]') # 查找整个html中具有id的所有a标签

- # print(hxs)

- # hxs = Selector(response=response).xpath('//a[@id="i1"]') # 查找整个html中id为i1的a标签

- # print(hxs)

- # hxs = Selector(response=response).xpath('//a[@href="link.html"][@id="i1"]') # 查找整个html中href为link.html以及id为i1的a标签

- # print(hxs)

- # hxs = Selector(response=response).xpath('//a[contains(@href, "link")]') # 查找整个html中href包含link字段的所有a标签

- # print(hxs)

- # hxs = Selector(response=response).xpath('//a[starts-with(@href, "link")]') # 查找整个html中href以link开头的所有a标签

- # print(hxs)

- # hxs = Selector(response=response).xpath('//a[re:test(@id, "i\d+")]') # 查找整个html中id格式为i\d+的所有a标签

- # print(hxs)

- # hxs = Selector(response=response).xpath('//a[re:test(@id, "i\d+")]/text()').extract() # 查找整个html中id格式为i\d+的所有a标签的text值,并提出为string的列表

- # print(hxs)

- # hxs = Selector(response=response).xpath('//a[re:test(@id, "i\d+")]/@href').extract() # 查找整个html中id格式为i\d+的所有a标签的href值,并提出为string的列表

- # print(hxs)

- # hxs = Selector(response=response).xpath('/html/body/ul/li/a/@href').extract() # 查找response里,路径为/html/body/ul/li/a的href值,并提出为string的列表

- # print(hxs)

- # hxs = Selector(response=response).xpath('//body/ul/li/a/@href').extract_first()查找response的后代里,路径为body/ul/li/a的href值,并提出第一个值

- # print(hxs)

- # ul_list = Selector(response=response).xpath('//body/ul/li')

- # for item in ul_list:

- # v = item.xpath('./a/span') # 相对路径

- # # 或

- # # v = item.xpath('a/span')

- # # 或

- # # v = item.xpath('*/a/span')

- # print(v)

示例

- # 找div,class=part2的标签,获取share-linkid属性

- hxs = Selector(response)

- linkid_list = hxs.xpath("//div[@class='part2']/@share-linkid").extract()

- # print(linkid_list)

之前做的真实示例

补充:

- '''

- In [326]: text="""

- ...: <div>

- ...: <a>1a</a>

- ...: <p>2p</p>

- ...: <p>3p</p>

- ...: </div>"""

- '''

- # CSS

- # Counts over the full list of child nodes, starting from the first child; the tag must match as well

- In [332]: sel.css('a:nth-child(1)').extract()

- Out[332]: ['<a>1a</a>']

- # Counts over the full list of child nodes, starting from the last child; the tag must match as well

- In [333]: sel.css('a:nth-last-child(1)').extract()

- Out[333]: []

- In [340]: sel.css('a:first-child').extract()

- Out[340]: ['<a>1a</a>']

- In [341]: sel.css('a:last-child').extract()

- Out[341]: []

- # Replacing -child with -of-type counts only within the filtered list of children of that tag

- # (this statement is still to be verified)

- # XPath

- In [345]: sel.xpath('//div/*').extract()

- Out[345]: ['<a>1a</a>', '<p>2p</p>', '<p>3p</p>']

- In [346]: sel.xpath('//div/node()').extract()

- Out[346]: ['\n ', '<a>1a</a>', '\n ', '<p>2p</p>', '\n ', '<p>3p</p>', '\n']

- In [356]: sel.xpath('//div/node()[1]').extract() # includes plain text nodes

- Out[356]: ['\n ']

- In [352]: sel.xpath('//div/p[last()]').extract()

- Out[352]: ['<p>3p</p>']

- In [353]: sel.xpath('//div/p[last()-1]').extract()

- Out[353]: ['<p>2p</p>']

Selecting the n-th child: CSS and XPath

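- To exclude nodes that have a given attribute, write //tbody/tr[not(@class)]

- To exclude nodes that have either of two attributes, write //tbody/tr[not(@class or @id)]

- To exclude a particular attribute value, write //tbody/tr[not(@class='xxx')]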

not

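- 1. Nodes around a node:

- Ancestors of the current node:

- //*[title=""]/ancestor::*

- Parent of the current node:

- //*[title=""]/parent::*

- //*[title=""]/..

- All nodes before the start tag of the current node: /preceding::*

- All nodes after the end tag of the current node: /following::*

- Siblings after the current node: /following-sibling::*

- Siblings before the current node: /preceding-sibling::*

- All descendants of the current node (children, grandchildren, ...): /descendant::*

- Containing a given text: span[contains(text(), "given text")]

- The last child element: //div[@class='box-nav']/a[last()]

- 2. Selecting a sibling that precedes the tag containing a given text

- The HTML is:

- <div class="tittle_x F_Left">

- <a href="http://www.ccidnet.com/">首页</a>

- <em>></em>

- <a href="http://www.ccidnet.com/news/">新闻</a>

- <em>></em>

- <a href="http://www.ccidnet.com/news/focus/">焦点直击</a>

- <em>></em>

- <a href="#">正文 </a>

- </div>

- Given the code above, to pick "焦点直击", the link just before "正文", as the category, write:

- Category: //div[@class='tittle_x F_Left']/a[contains(text(),'正文')]/preceding-sibling::a[1]

- 3. Selecting the untagged text next to a given node

- For example, select the text "这是文本。" that comes before class="bb":

- <span>

- <span class="aa">文本</span>

- 这是文本。

- <a class="bb">文本</a>

- </span>

- This can be written as: //span[@class='aa']/following::text()[1]

- ---------------------

- Author: 那个南墙

- Source: CSDN

- Original: https://blog.csdn.net/baidu_38414830/article/details/70325232

- Copyright notice: this is the blogger's original article; please include a link to it when reposting.

Relationship selection: ancestors, parents, descendants, siblings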

- Getting the text of all the child tags of an element

- xpath("string(.)")

- response.xpath("//div[@class='tpc_content do_not_catch']")[0].xpath("string(.)").extract_first()

- # This one returns a list of the element's own text nodes

- response.xpath("//div[@class='tpc_content do_not_catch']")[0].xpath("text()").extract()

- Getting the text of a single tag

- css("::text")

- xpath("xxxx/text()")

Getting the text of multiple child tags

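- 1. CSS

- 1.1 Getting an attribute value:

- tag::attr(attribute)

- Example: response.css('base::attr(href)')

- 1.2 Getting the element content:

- tag::text

- Example: response.css('title::text')

- 1.3 When several tags share the same name, add a condition in [ ]:

- tag[]::attr(attribute)

- Example: response.css('a[href*=image]::attr(href)')

- 2. XPath

- 2.1 Getting an attribute value:

- //tag/@attribute

- Example: response.xpath('//base/@href')

- 2.2 Getting the element content:

- //tag/text()

- Example: response.xpath('//title/text()')

- 2.3 When several tags share the same name, add a condition in [ ]:

- //tag[contains(@attribute, "value")]/@attribute

- Example: response.xpath('//div[@id="images"]/a/text()')

- Note: because the whole expression is wrapped in single quotes here, the value of id has to use double quotes; using single quotes inside as well keeps raising a syntax error in the scrapy shell

Supplement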

- XPath offers no native way to look up a class, but there is a neat answer on Stack Overflow:

- This selector should work but will be more efficient if you replace it with your suited markup:

- //*[contains(@class, 'Test')]

- But this will also match cases like class="Testvalue" or class="newTest", so use:

- //*[contains(concat(' ', @class, ' '), ' Test ')]

- If you wished to be really certain that it will match correctly, you could also use the normalize-space function to clean up stray whitespace characters around the class name (as mentioned by @Terry)

- //*[contains(concat(' ', normalize-space(@class), ' '), ' Test ')]

- Note that in all these versions, the * should best be replaced by whatever element name you actually wish to match, unless you wish to search each and every element in the document for the given condition.

Some functions
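The difference between the three class-matching expressions is easiest to see on concrete attribute values. The sketch below re-creates each predicate as an ordinary Python function (hypothetical names, written for illustration only); whitespace collapsing via `str.split()` approximates what `normalize-space()` does.

```python
def contains_substring(class_attr, name):
    """Mirror of contains(@class, name): a plain substring test."""
    return name in class_attr


def has_token(class_attr, name):
    """Mirror of contains(concat(' ', @class, ' '), ' name ')."""
    return f" {name} " in f" {class_attr} "


def has_token_normalized(class_attr, name):
    """Same token test after collapsing whitespace, like normalize-space(@class)."""
    normalized = " ".join(class_attr.split())
    return f" {name} " in f" {normalized} "


for value in ["Test", "Testvalue", "newTest", "big Test box", "big\tTest\n"]:
    print(
        repr(value),
        contains_substring(value, "Test"),
        has_token(value, "Test"),
        has_token_normalized(value, "Test"),
    )
```

The substring test accepts every value, the token test rejects `Testvalue` and `newTest`, and only the normalized variant still accepts the value whose class names are separated by a tab and a newline.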

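Data formatting and persistence

Scraped data can be processed directly in parse. It can also be formatted with an Item and handed to the pipelines for persistence.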

- import hashlib

- import scrapy

- from scrapy.http import Request

- from ..items import XiaoHuarItem

- class XiaoHuarSpider(scrapy.Spider):

-     # Spider name; the crawl command starts the spider by this name

-     name = "xiaohuar"

-     # Allowed domains

-     allowed_domains = ["xiaohuar.com"]

-     start_urls = [

-         "http://www.xiaohuar.com/list-1-1.html",

-     ]

-     # custom_settings = {

-     #     'ITEM_PIPELINES': {

-     #         'spider1.pipelines.JsonPipeline': 100

-     #     }

-     # }

-     # Maps MD5(url) -> url for pages that have already been requested

-     has_request_set = {}

-     def parse(self, response):

-         # Parse the page: yield an item for every entry that matches the rules (picture, name, school),

-         # then find the pagination links and follow them, level by level.

-         # response.xpath(...) is the current equivalent of the legacy HtmlXPathSelector(response).select(...)

-         items = response.xpath('//div[@class="item_list infinite_scroll"]/div')

-         for item in items:

-             src = item.xpath('.//div[@class="img"]/a/img/@src').extract_first()

-             name = item.xpath('.//div[@class="img"]/span/text()').extract_first()

-             school = item.xpath('.//div[@class="img"]/div[@class="btns"]/a/text()').extract_first()

-             url = "http://www.xiaohuar.com%s" % src

-             yield XiaoHuarItem(name=name, school=school, url=url)

-         # extract() turns the selectors into plain strings so they can be hashed

-         urls = response.xpath(r'//a[re:test(@href, "http://www.xiaohuar.com/list-1-\d+.html")]/@href').extract()

-         for url in urls:

-             key = self.md5(url)

-             if key not in self.has_request_set:

-                 self.has_request_set[key] = url

-                 yield Request(url=url, method='GET', callback=self.parse)

-     @staticmethod

-     def md5(val):

-         return hashlib.md5(val.encode('utf-8')).hexdigest()

spiders/xiaohuar.py

- import scrapy

- class XiaoHuarItem(scrapy.Item):

- name = scrapy.Field()

- school = scrapy.Field()

- url = scrapy.Field()

items.py

- import json

- import os

- import requests

- class JsonPipeline(object):

- def __init__(self):

- self.file = open('xiaohua.txt', 'w')

- def process_item(self, item, spider):

- v = json.dumps(dict(item), ensure_ascii=False)

- self.file.write(v)

- self.file.write('\n')

- self.file.flush()

- return item

- class FilePipeline(object):

- def __init__(self):

- if not os.path.exists('imgs'):

- os.makedirs('imgs')

- def process_item(self, item, spider):

- response = requests.get(item['url'], stream=True)

- file_name = '%s_%s.jpg' % (item['name'], item['school'])

- with open(os.path.join('imgs', file_name), mode='wb') as f:

- f.write(response.content)

- return item

pipelines.py

- ITEM_PIPELINES = {

- 'spider1.pipelines.JsonPipeline': 100,

- 'spider1.pipelines.FilePipeline': 300,

- }

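- # The integer after each entry determines the run order: items pass through the pipelines from the lowest number to the highest. By convention the numbers stay in the 0-1000 range.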

settings.py
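As a quick illustration of that ordering rule, sorting the setting by its values gives the sequence in which an item would visit the pipelines. This is only a sketch of the rule, not how Scrapy loads the setting internally; the dict is deliberately written in the "wrong" order to show that only the numbers matter.

```python
ITEM_PIPELINES = {
    "spider1.pipelines.FilePipeline": 300,
    "spider1.pipelines.JsonPipeline": 100,
}

# Lower numbers run first, regardless of the order the dict was written in.
run_order = sorted(ITEM_PIPELINES, key=ITEM_PIPELINES.get)

for position, path in enumerate(run_order, start=1):
    print(position, path, ITEM_PIPELINES[path])
```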

A pipeline can do more than that, as follows:

- from scrapy.exceptions import DropItem

- class CustomPipeline(object):

- def __init__(self,v):

- self.value = v

- def process_item(self, item, spider):

- # 操作并进行持久化

- # return表示会被后续的pipeline继续处理

- return item

- # 表示将item丢弃,不会被后续pipeline处理

- # raise DropItem()

- @classmethod

- def from_crawler(cls, crawler):

- """

- 初始化时候,用于创建pipeline对象

- :param crawler:

- :return:

- """

- val = crawler.settings.getint('MMMM')

- return cls(val)

- def open_spider(self,spider):

- """

- 爬虫开始执行时,调用

- :param spider:

- :return:

- """

- print('')

- def close_spider(self,spider):

- """

- 爬虫关闭时,被调用

- :param spider:

- :return:

- """

- print('')

自定义pipeline
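The open_spider / process_item / close_spider lifecycle can be exercised without running a crawl, because a pipeline is an ordinary class with those method names. The sketch below makes a few assumptions for the sake of a self-contained demo: `JsonLinesPipeline` and its `stream_factory` argument are hypothetical, the "spider" is just `None`, and the output goes to an in-memory buffer instead of a file.

```python
import io
import json


class JsonLinesPipeline:
    """Writes each item as one JSON line; shaped like a Scrapy item pipeline."""

    def __init__(self, stream_factory):
        # stream_factory is a hypothetical hook so the demo can write to memory;
        # in a project it could be lambda: open('items.jl', 'w', encoding='utf-8')
        self.stream_factory = stream_factory
        self.stream = None

    def open_spider(self, spider):
        # Acquire the resource once, when the spider starts.
        self.stream = self.stream_factory()

    def process_item(self, item, spider):
        self.stream.write(json.dumps(dict(item), ensure_ascii=False) + "\n")
        return item  # returning the item lets later pipelines process it too

    def close_spider(self, spider):
        # Only flushed here so the buffer stays readable; a real file would be closed.
        self.stream.flush()


buffer = io.StringIO()
pipeline = JsonLinesPipeline(lambda: buffer)
pipeline.open_spider(spider=None)
pipeline.process_item({"name": "a", "school": "x"}, spider=None)
pipeline.process_item({"name": "b", "school": "y"}, spider=None)
pipeline.close_spider(spider=None)
lines = buffer.getvalue().splitlines()
print(lines)
```

Opening the output in open_spider and releasing it in close_spider, rather than in `__init__`, ties the resource to the crawl and guarantees it is released when the spider finishes.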

中间件

- class SpiderMiddleware(object):

- def process_spider_input(self,response, spider):

- """

- 下载完成,执行,然后交给parse处理

- :param response:

- :param spider:

- :return:

- """

- pass

- def process_spider_output(self,response, result, spider):

- """

- spider处理完成,返回时调用

- :param response:

- :param result:

- :param spider:

- :return: 必须返回包含 Request 或 Item 对象的可迭代对象(iterable)

- """

- return result

- def process_spider_exception(self,response, exception, spider):

- """

- Called when an exception is raised

- :param response:

- :param exception:

- :param spider:

- :return: None to let the following middlewares handle the exception; or an iterable of Request or Item objects, which are handed to the scheduler or the pipeline

- """

- return None

- def process_start_requests(self,start_requests, spider):

- """

- 爬虫启动时调用

- :param start_requests:

- :param spider:

- :return: 包含 Request 对象的可迭代对象

- """

- return start_requests

爬虫中间件

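- class DownMiddleware1(object):

-     def process_request(self, request, spider):

-         """

-         Called for every request that needs downloading, through the process_request of each downloader middleware

-         :param request:

-         :param spider:

-         :return:

-             None: continue with the following middlewares and download;

-             Response object: stop calling process_request and start calling process_response

-             Request object: stop the middleware chain and send the Request back to the scheduler

-             raise IgnoreRequest: stop calling process_request and start calling process_exception

-         """

-         pass

-     def process_response(self, request, response, spider):

-         """

-         Called with the response once the download has finished

-         :param request:

-         :param response:

-         :param spider:

-         :return:

-             Response object: passed on to the process_response of the other middlewares

-             Request object: stop the middleware chain; the request is rescheduled for download

-             raise IgnoreRequest: Request.errback is called

-         """

-         print('response1')

-         return response

-     def process_exception(self, request, exception, spider):

-         """

-         Called when a download handler or a process_request() (of a downloader middleware) raises an exception

-         :param request:

-         :param exception:

-         :param spider:

-         :return:

-             None: let the following middlewares handle the exception;

-             Response object: stop calling the remaining process_exception methods

-             Request object: stop the middleware chain; the request is rescheduled for download

-         """

-         return None

Downloader middleware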

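- First, to be clear:

- Requests are issued by the engine, not by the spider

- The engine takes URLs from the spider and hands them to the scheduler for deduplication; it also takes one task from the scheduler's queue and gives it to the downloader

- When the download finishes, the downloader returns the response to the engine

- First, process_request(request, spider)

- When many middlewares are configured, they run in the order given in settings, from the smallest number to the largest

- If two middlewares have the same priority, both of them run. (No firm conclusion was reached about their relative order.)

- Even if a middleware is misconfigured, for example a proxy middleware that deliberately sets a wrong IP, the middleware chain is not interrupted; in other words, Scrapy cannot check whether what a proxy middleware does is valid.

- With the deliberately wrong proxy in place, the downloader still attempted the task; the request was then handled according to another middleware, RetryMiddleware (by default, the task is abandoned after three consecutive failures)

- When the request fails for the first time, the retry still passes through the configured middlewares again.

- The first component to emit the error signal is not the engine but the scraper, the piece that connects the engine, the spider, and the downloader; only then does the engine emit an error signal

- (Key point) Every task, that is every request, passes through all the configured middlewares before reaching the downloader, whatever the circumstances

- Then, process_response(request, response, spider)

- Because responses travel from the downloader to the engine, they pass through the middlewares in exactly the reverse order of requests

- That is, from the largest number to the smallest

- ---------------------

- Author: fiery_heart

- Source: CSDN

- Original: https://blog.csdn.net/fiery_heart/article/details/82229871

- Copyright notice: this is the blogger's original article; please include a link to it when reposting.

Summary of downloader middlewares (reposted)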
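The ordering rule in this summary (ascending numbers for process_request, descending for process_response) can be written down as a tiny simulation. The middleware names `mw_a`, `mw_b`, `mw_c` and the `simulate` helper are hypothetical, and the sketch only covers the plain success path where every hook returns None or passes the response along.

```python
# Hypothetical middleware names mapped to their priority numbers.
DOWNLOADER_MIDDLEWARES = {"mw_b": 500, "mw_a": 100, "mw_c": 900}


def simulate(middlewares):
    """Return the order in which the hooks fire for one successful download."""
    ordered = sorted(middlewares, key=middlewares.get)
    trace = [f"{name}.process_request" for name in ordered]            # small -> large
    trace.append("downloader")
    trace += [f"{name}.process_response" for name in reversed(ordered)]  # large -> small
    return trace


trace = simulate(DOWNLOADER_MIDDLEWARES)
print("\n".join(trace))
```

The middleware with the smallest number is the first to see the request and the last to see the response, which is why it sits "closest to the engine".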

- from scrapy import signals

- class MyMiddleware(object):

-     # Not all methods need to be defined. If a method is not defined,

-     # scrapy acts as if the spider middleware does not modify the

-     # passed objects.

-     def __init__(self):

-         # Number of items scraped so far (replaces the undefined global counter)

-         self.item_count = 0

-     @classmethod

-     def from_crawler(cls, crawler):

-         # This method is used by Scrapy to create your spiders.

-         s = cls()

-         crawler.signals.connect(s.spider_opened, signal=signals.spider_opened)

-         crawler.signals.connect(s.item_scraped, signal=signals.item_scraped)

-         crawler.signals.connect(s.spider_closed, signal=signals.spider_closed)

-         crawler.signals.connect(s.spider_error, signal=signals.spider_error)

-         crawler.signals.connect(s.spider_idle, signal=signals.spider_idle)

-         return s

-     # Sent when a spider starts crawling. Typically used to allocate per-spider resources, but it can do anything.

-     def spider_opened(self, spider):

-         spider.logger.info('pa chong kai shi le: %s' % spider.name)

-         print('start','')

-     def item_scraped(self, item, response, spider):

-         self.item_count += 1

-     # Sent when a spider is closed. It can be used to release the per-spider resources reserved in spider_opened.

-     def spider_closed(self, spider, reason):

-         print('-------------------------------all over------------------------------------------')

-         print(spider.name,' closed')

-     # Sent when a spider callback produces an error (for example, raises an exception).

-     def spider_error(self, failure, response, spider):

-         code = response.status

-         print('spider error')

-     # Sent when a spider goes idle, which means it has no further:

-     #   requests waiting to be downloaded

-     #   requests scheduled

-     #   items being processed in the item pipeline

-     def spider_idle(self, spider):

-         for i in range(10):

-             print(spider.name)

- '''

- start 1

- jiandan

- jiandan

- jiandan

- jiandan

- jiandan

- jiandan

- jiandan

- jiandan

- jiandan

- jiandan

- -------------------------------all over------------------------------------------

- jiandan closed

- '''

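Using signals in a middleware

Custom commands

- Create a directory with any name at the same level as spiders, e.g. commands

- Create a crawlall.py file in it (the file name becomes the name of the custom command)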

- from scrapy.commands import ScrapyCommand

- from scrapy.utils.project import get_project_settings

- class Command(ScrapyCommand):

- requires_project = True

- def syntax(self):

- return '[options]'

- def short_desc(self):

- return 'Runs all of the spiders'

- def run(self, args, opts):

- spider_list = self.crawler_process.spider_loader.list()  # older Scrapy versions spelled this crawler_process.spiders.list()

- for name in spider_list:

- self.crawler_process.crawl(name, **opts.__dict__)

- self.crawler_process.start()

crawlall.py

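- Add COMMANDS_MODULE = '<project name>.<directory name>' to settings.py

- Run the command in the project directory: scrapy crawlall

Custom extensions (involving signals)

When writing a custom extension, signals are used to register the chosen actions at specific points

- Signals: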

- engine_started = object()

- engine_stopped = object()

- spider_opened = object()

- spider_idle = object()

- spider_closed = object()

- spider_error = object()

- request_scheduled = object()

- request_dropped = object()

- response_received = object()

- response_downloaded = object()

- item_scraped = object()

- item_dropped = object()

- Usage

- crawler.signals.connect(ext.spider_opened, signal=signals.spider_opened) # when the spider opens, run the user-defined spider_opened method of this class

Signal types and usage
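The connect-then-send pattern behind these signals is a plain observer registry. The sketch below is a toy model meant to show why each signal is just a unique `object()` and what connecting a handler amounts to; the `SignalManager` class here is written for illustration and is not Scrapy's implementation, although its `connect(handler, signal=...)` call shape mirrors the usage line above.

```python
class SignalManager:
    """Toy signal registry: maps a signal object to the handlers connected to it."""

    def __init__(self):
        self._handlers = {}

    def connect(self, handler, signal):
        self._handlers.setdefault(signal, []).append(handler)

    def send(self, signal, **kwargs):
        # Call every handler registered for this signal, in connection order.
        return [handler(**kwargs) for handler in self._handlers.get(signal, [])]


# Signals are unique sentinel objects; identity is all that matters.
spider_opened = object()
spider_closed = object()

log = []
signals = SignalManager()
signals.connect(lambda spider: log.append(f"open {spider}"), signal=spider_opened)
signals.connect(lambda spider: log.append(f"close {spider}"), signal=spider_closed)

signals.send(spider_opened, spider="demo")
signals.send(spider_closed, spider="demo")
print(log)
```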

- from scrapy import signals

- class MyExtension(object):

- def __init__(self, value):

- self.value = value

- @classmethod

- def from_crawler(cls, crawler):

- val = crawler.settings.getint('MMMM')

- ext = cls(val)

- crawler.signals.connect(ext.spider_opened, signal=signals.spider_opened)

- crawler.signals.connect(ext.spider_closed, signal=signals.spider_closed)

- return ext

- def spider_opened(self, spider):

- print('open')

- def spider_closed(self, spider):

- print('close')

自定义扩展

Using signals in an extension

As with middlewares, first create a .py file (any name) in the project directory, then register the extension in the settings file.

- # -*- coding:utf-8 -*-

- from scrapy import signals

- class MyExtension(object):

- def __init__(self,**kwargs):

- self.__dict__.update(kwargs)

- @classmethod

- def from_crawler(cls, crawler):

- ext = cls(a=1,b=2,x=11,y=12)

- crawler.signals.connect(ext.spider_opened, signal=signals.spider_opened)

- crawler.signals.connect(ext.spider_closed, signal=signals.spider_closed)

- return ext

- def spider_opened(self, spider):

- print('open')

- print(self.a)

- print(self.b)

- def spider_closed(self, spider):

- print('close')

- print(self.x)

- print(self.y)

<project name>/extensions.py

- EXTENSIONS = {

- # 'scrapy.extensions.telnet.TelnetConsole': None,

- "cl.extensions.MyExtension":200,

- }

settings.py

- open

- 1

- 2

- close

- 11

- 12

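Note 1: signals can also be used in middlewares; see the middleware section.

Note 2: signals can be combined with the stats collector; see the later notes.

Avoiding repeated visits (deduplication)

- By default Scrapy deduplicates with scrapy.dupefilters.RFPDupeFilter. The related settings are:

- DUPEFILTER_CLASS = 'scrapy.dupefilters.RFPDupeFilter'

- DUPEFILTER_DEBUG = False

- JOBDIR = "directory for the log of visited requests, e.g. /root/" # the final path is /root/requests.seen

Default configuration in Scrapy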

- class RepeatUrl:

- def __init__(self):

- self.visited_url = set()

- @classmethod

- def from_settings(cls, settings):

- """

- 初始化时,调用

- :param settings:

- :return:

- """

- return cls()

- def request_seen(self, request):

- """

- 检测当前请求是否已经被访问过

- :param request:

- :return: True表示已经访问过;False表示未访问过

- """

- if request.url in self.visited_url:

- return True

- self.visited_url.add(request.url)

- return False

- def open(self):

- """

- 开始爬去请求时,调用

- :return:

- """

- print('open replication')

- def close(self, reason):

- """

- 结束爬虫爬取时,调用

- :param reason:

- :return:

- """

- print('close replication')

- def log(self, request, spider):

- """

- 记录日志

- :param request:

- :param spider:

- :return:

- """

- print('repeat', request.url)

自定义URL去重操作
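Comparing raw URLs, as the filter above does, treats `?a=1&b=2` and `?b=2&a=1` as different pages. A common refinement is to compare a fingerprint of a canonical form instead. The sketch below assumes one particular canonicalisation (sorted query parameters, fragment dropped, HTTP method included); the `fingerprint` function and `FingerprintFilter` class are hypothetical, and `request_seen` here takes a method and a URL rather than a Scrapy Request object.

```python
import hashlib
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def fingerprint(method, url):
    """Hash the method plus a canonical URL (sorted query, fragment removed)."""
    parts = urlsplit(url)
    query = urlencode(sorted(parse_qsl(parts.query, keep_blank_values=True)))
    canonical = urlunsplit((parts.scheme, parts.netloc, parts.path, query, ""))
    return hashlib.sha1(f"{method.upper()} {canonical}".encode("utf-8")).hexdigest()


class FingerprintFilter:
    """Same idea as RepeatUrl, but keyed on fingerprints instead of raw URLs."""

    def __init__(self):
        self.seen = set()

    def request_seen(self, method, url):
        fp = fingerprint(method, url)
        if fp in self.seen:
            return True      # already visited
        self.seen.add(fp)
        return False         # first visit


dupefilter = FingerprintFilter()
results = [
    dupefilter.request_seen("GET", "http://example.com/list?page=1&sort=hot"),
    dupefilter.request_seen("GET", "http://example.com/list?sort=hot&page=1#top"),
    dupefilter.request_seen("POST", "http://example.com/list?page=1&sort=hot"),
]
print(results)
```

The second request differs only in parameter order and fragment, so it counts as seen; the POST to the same URL does not.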

Settings explained

http://scrapy-chs.readthedocs.io/zh_CN/latest/topics/settings.html

- # -*- coding: utf-8 -*-

- # Scrapy settings for step8_king project

- #

- # For simplicity, this file contains only settings considered important or

- # commonly used. You can find more settings consulting the documentation:

- #

- # http://doc.scrapy.org/en/latest/topics/settings.html

- # http://scrapy.readthedocs.org/en/latest/topics/downloader-middleware.html

- # http://scrapy.readthedocs.org/en/latest/topics/spider-middleware.html

- # 1. 爬虫名称

- BOT_NAME = 'step8_king'

- # 2. 爬虫应用路径

- SPIDER_MODULES = ['step8_king.spiders']

- NEWSPIDER_MODULE = 'step8_king.spiders'

- # Crawl responsibly by identifying yourself (and your website) on the user-agent

- # 3. 客户端 user-agent请求头

- # USER_AGENT = 'step8_king (+http://www.yourdomain.com)'

- # Obey robots.txt rules

- # 4. 禁止爬虫配置

- # ROBOTSTXT_OBEY = False

- # Configure maximum concurrent requests performed by Scrapy (default: 16)

- # 5. 并发请求数

- # CONCURRENT_REQUESTS = 4

- # Configure a delay for requests for the same website (default: 0)

- # See http://scrapy.readthedocs.org/en/latest/topics/settings.html#download-delay

- # See also autothrottle settings and docs

- # 6. 延迟下载秒数

- # DOWNLOAD_DELAY = 2

- # The download delay setting will honor only one of:

- # 7. 单域名访问并发数,并且延迟下次秒数也应用在每个域名

- # CONCURRENT_REQUESTS_PER_DOMAIN = 2

- # 单IP访问并发数,如果有值则忽略:CONCURRENT_REQUESTS_PER_DOMAIN,并且延迟下次秒数也应用在每个IP

- # CONCURRENT_REQUESTS_PER_IP = 3

- # Disable cookies (enabled by default)

- # 8. 是否支持cookie,cookiejar进行操作cookie

- # COOKIES_ENABLED = True

- # COOKIES_DEBUG = True

- # Disable Telnet Console (enabled by default)

- # 9. Telnet用于查看当前爬虫的信息,操作爬虫等...

- # 使用telnet ip port ,然后通过命令操作

- # TELNETCONSOLE_ENABLED = True

- # TELNETCONSOLE_HOST = '127.0.0.1'

- # TELNETCONSOLE_PORT = [6023,]

- # 10. 默认请求头

- # Override the default request headers:

- # DEFAULT_REQUEST_HEADERS = {

- # 'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8',

- # 'Accept-Language': 'en',

- # }

- # Configure item pipelines

- # See http://scrapy.readthedocs.org/en/latest/topics/item-pipeline.html

- # 11. 定义pipeline处理请求

- # ITEM_PIPELINES = {

- # 'step8_king.pipelines.JsonPipeline': 700,

- # 'step8_king.pipelines.FilePipeline': 500,

- # }

- # 12. 自定义扩展,基于信号进行调用

- # Enable or disable extensions

- # See http://scrapy.readthedocs.org/en/latest/topics/extensions.html

- # EXTENSIONS = {

- # # 'step8_king.extensions.MyExtension': 500,

- # }

- # 13. Maximum crawl depth allowed; the current depth can be read from meta; 0 means no limit

- # DEPTH_LIMIT = 3

- # 14. Crawl order: 0 means depth-first, LIFO (default); 1 means breadth-first, FIFO

- # Last in, first out: depth-first

- # DEPTH_PRIORITY = 0

- # SCHEDULER_DISK_QUEUE = 'scrapy.squeues.PickleLifoDiskQueue'

- # SCHEDULER_MEMORY_QUEUE = 'scrapy.squeues.LifoMemoryQueue'

- # First in, first out: breadth-first

- # DEPTH_PRIORITY = 1

- # SCHEDULER_DISK_QUEUE = 'scrapy.squeues.PickleFifoDiskQueue'

- # SCHEDULER_MEMORY_QUEUE = 'scrapy.squeues.FifoMemoryQueue'

- # 15. 调度器队列

- # SCHEDULER = 'scrapy.core.scheduler.Scheduler'

- # from scrapy.core.scheduler import Scheduler

- # 16. 访问URL去重

- # DUPEFILTER_CLASS = 'step8_king.duplication.RepeatUrl'

- # Enable and configure the AutoThrottle extension (disabled by default)

- # See http://doc.scrapy.org/en/latest/topics/autothrottle.html

- """

- 17. AutoThrottle algorithm

-     from scrapy.extensions.throttle import AutoThrottle

-     How the delay is adjusted:

-     1. Read the minimum delay: DOWNLOAD_DELAY

-     2. Read the maximum delay: AUTOTHROTTLE_MAX_DELAY

-     3. Set the initial download delay: AUTOTHROTTLE_START_DELAY

-     4. When a request finishes downloading, take its latency, i.e. the time between opening the connection and receiving the response headers

-     5. Combine it with AUTOTHROTTLE_TARGET_CONCURRENCY:

-         target_delay = latency / self.target_concurrency

-         new_delay = (slot.delay + target_delay) / 2.0 # slot.delay is the previous delay

-         new_delay = max(target_delay, new_delay)

-         new_delay = min(max(self.mindelay, new_delay), self.maxdelay)

-         slot.delay = new_delay

- """

- # 开始自动限速

- # AUTOTHROTTLE_ENABLED = True

- # The initial download delay

- # 初始下载延迟

- # AUTOTHROTTLE_START_DELAY = 5

- # The maximum download delay to be set in case of high latencies

- # 最大下载延迟

- # AUTOTHROTTLE_MAX_DELAY = 10

- # The average number of requests Scrapy should be sending in parallel to each remote server

- # Average number of requests sent in parallel to each remote server

- # AUTOTHROTTLE_TARGET_CONCURRENCY = 1.0

- # Enable showing throttling stats for every response received:

- # 是否显示

- # AUTOTHROTTLE_DEBUG = True
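The delay update quoted in item 17 is easy to follow with numbers plugged in. The function below is a direct transcription of those four formula lines into a standalone helper; `next_delay` and its parameter names are made up for this sketch, the latencies are invented sample values, and any extra conditions the real extension applies are not modelled.

```python
def next_delay(current_delay, latency, target_concurrency, min_delay, max_delay):
    """One AutoThrottle-style update step, following the formula in the notes."""
    target_delay = latency / target_concurrency
    new_delay = (current_delay + target_delay) / 2.0   # average with the previous delay
    new_delay = max(target_delay, new_delay)           # never go below the target
    return min(max(min_delay, new_delay), max_delay)   # clamp to [min_delay, max_delay]


delay = 5.0          # plays the role of AUTOTHROTTLE_START_DELAY
history = []
for latency in [0.4, 0.4, 0.4, 30.0]:   # three fast responses, then a very slow one
    delay = next_delay(delay, latency, target_concurrency=1.0, min_delay=2.0, max_delay=10.0)
    history.append(delay)

print(history)
```

With fast responses the delay decays toward the configured minimum, and a single very slow response pushes it straight to the maximum.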

- # Enable and configure HTTP caching (disabled by default)

- # See http://scrapy.readthedocs.org/en/latest/topics/downloader-middleware.html#httpcache-middleware-settings

- """

- 18. 启用缓存

- 目的用于将已经发送的请求或相应缓存下来,以便以后使用

- from scrapy.downloadermiddlewares.httpcache import HttpCacheMiddleware

- from scrapy.extensions.httpcache import DummyPolicy

- from scrapy.extensions.httpcache import FilesystemCacheStorage

- """

- # 是否启用缓存策略

- # HTTPCACHE_ENABLED = True

- # 缓存策略:所有请求均缓存,下次在请求直接访问原来的缓存即可

- # HTTPCACHE_POLICY = "scrapy.extensions.httpcache.DummyPolicy"

- # 缓存策略:根据Http响应头:Cache-Control、Last-Modified 等进行缓存的策略

- # HTTPCACHE_POLICY = "scrapy.extensions.httpcache.RFC2616Policy"

- # 缓存超时时间

- # HTTPCACHE_EXPIRATION_SECS = 0

- # 缓存保存路径

- # HTTPCACHE_DIR = 'httpcache'

- # 缓存忽略的Http状态码

- # HTTPCACHE_IGNORE_HTTP_CODES = []

- # 缓存存储的插件

- # HTTPCACHE_STORAGE = 'scrapy.extensions.httpcache.FilesystemCacheStorage'

- """

- 19. Proxies

-     from scrapy.downloadermiddlewares.httpproxy import HttpProxyMiddleware

-     Option 1: use the default middleware, which reads the proxy from environment variables

-         os.environ

-         {

-             http_proxy:http://root:woshiniba@192.168.11.11:9999/

-             https_proxy:http://192.168.11.11:9999/

-         }

-     Option 2: use a custom downloader middleware

-     import base64

-     import random

-     class ProxyMiddleware(object):

-         PROXIES = [

-             {'ip_port': '111.11.228.75:80', 'user_pass': ''},

-             {'ip_port': '120.198.243.22:80', 'user_pass': ''},

-             {'ip_port': '111.8.60.9:8123', 'user_pass': ''},

-             {'ip_port': '101.71.27.120:80', 'user_pass': ''},

-             {'ip_port': '122.96.59.104:80', 'user_pass': ''},

-             {'ip_port': '122.224.249.122:8088', 'user_pass': ''},

-         ]

-         def process_request(self, request, spider):

-             proxy = random.choice(self.PROXIES)

-             request.meta['proxy'] = "http://%s" % proxy['ip_port']

-             # An empty user_pass means the proxy needs no authentication

-             if proxy['user_pass']:

-                 encoded_user_pass = base64.b64encode(proxy['user_pass'].encode('utf-8'))

-                 request.headers['Proxy-Authorization'] = b'Basic ' + encoded_user_pass

-                 print("**************ProxyMiddleware have pass************" + proxy['ip_port'])

-             else:

-                 print("**************ProxyMiddleware no pass************" + proxy['ip_port'])

-     DOWNLOADER_MIDDLEWARES = {

-         'step8_king.middlewares.ProxyMiddleware': 500,

-     }

- """
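The only non-obvious part of the proxy middleware is the Proxy-Authorization header, which is "Basic " followed by the Base64 of `user:password`. A standalone sketch of that step, where `proxy_settings` is a hypothetical helper and the credentials are placeholders:

```python
import base64


def proxy_settings(ip_port, user_pass=""):
    """Return (proxy_url, headers) for a 'host:port' proxy and optional 'user:password'."""
    headers = {}
    if user_pass:  # empty string means no authentication
        token = base64.b64encode(user_pass.encode("utf-8")).decode("ascii")
        headers["Proxy-Authorization"] = "Basic " + token
    return "http://%s" % ip_port, headers


url, headers = proxy_settings("192.168.11.11:9999", "root:secret")
anon_url, anon_headers = proxy_settings("111.11.228.75:80")
print(url, headers)
print(anon_url, anon_headers)
```

In a middleware the URL would go into `request.meta['proxy']` and the header into `request.headers`.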

- """

- 20. Https访问

- Https访问时有两种情况:

- 1. 要爬取网站使用的可信任证书(默认支持)

- DOWNLOADER_HTTPCLIENTFACTORY = "scrapy.core.downloader.webclient.ScrapyHTTPClientFactory"

- DOWNLOADER_CLIENTCONTEXTFACTORY = "scrapy.core.downloader.contextfactory.ScrapyClientContextFactory"

- 2. 要爬取网站使用的自定义证书

- DOWNLOADER_HTTPCLIENTFACTORY = "scrapy.core.downloader.webclient.ScrapyHTTPClientFactory"

- DOWNLOADER_CLIENTCONTEXTFACTORY = "step8_king.https.MySSLFactory"

- # https.py

- from scrapy.core.downloader.contextfactory import ScrapyClientContextFactory

- from twisted.internet.ssl import (optionsForClientTLS, CertificateOptions, PrivateCertificate)

- class MySSLFactory(ScrapyClientContextFactory):

- def getCertificateOptions(self):

- from OpenSSL import crypto

- v1 = crypto.load_privatekey(crypto.FILETYPE_PEM, open('/Users/wupeiqi/client.key.unsecure', mode='r').read())

- v2 = crypto.load_certificate(crypto.FILETYPE_PEM, open('/Users/wupeiqi/client.pem', mode='r').read())

- return CertificateOptions(

- privateKey=v1, # PKey object

- certificate=v2, # X509 object

- verify=False,

- method=getattr(self, 'method', getattr(self, '_ssl_method', None))

- )

- Other:

- Related classes

- scrapy.core.downloader.handlers.http.HttpDownloadHandler

- scrapy.core.downloader.webclient.ScrapyHTTPClientFactory

- scrapy.core.downloader.contextfactory.ScrapyClientContextFactory

- Related settings

- DOWNLOADER_HTTPCLIENTFACTORY

- DOWNLOADER_CLIENTCONTEXTFACTORY

- """

- """

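- 21. Spider middleware

- class SpiderMiddleware(object):

- def process_spider_input(self,response, spider):

- '''

- Called once the download has finished, before the response is passed to parse().

- :param response:

- :param spider:

- :return: None to continue processing; raise an exception to abort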

- '''

- pass

- def process_spider_output(self,response, result, spider):

- '''

- Called with the results the spider returns after it has processed the response.

- :param response:

- :param result:

- :param spider:

- :return: must return an iterable of Request or Item objects

- '''

- return result

- def process_spider_exception(self,response, exception, spider):

- '''

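- Called when an exception is raised.

- :param response:

- :param exception:

- :param spider:

- :return: None to let the remaining middlewares handle the exception; or an iterable of Request or Item objects, which is passed on to the scheduler or the pipeline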

- '''

- return None

- def process_start_requests(self,start_requests, spider):

- '''

- Called when the spider starts.

- :param start_requests:

- :param spider:

- :return: an iterable of Request objects

- '''

- return start_requests

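- Built-in spider middlewares (module paths as in Scrapy releases before 1.0; from 1.0 on the modules live under scrapy.spidermiddlewares):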

- 'scrapy.contrib.spidermiddleware.httperror.HttpErrorMiddleware': 50,

- 'scrapy.contrib.spidermiddleware.offsite.OffsiteMiddleware': 500,

- 'scrapy.contrib.spidermiddleware.referer.RefererMiddleware': 700,

- 'scrapy.contrib.spidermiddleware.urllength.UrlLengthMiddleware': 800,

- 'scrapy.contrib.spidermiddleware.depth.DepthMiddleware': 900,

- """

- # from scrapy.contrib.spidermiddleware.referer import RefererMiddleware

- # Enable or disable spider middlewares

- # See http://scrapy.readthedocs.org/en/latest/topics/spider-middleware.html

- SPIDER_MIDDLEWARES = {

- # 'step8_king.middlewares.SpiderMiddleware': 543,

- }
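The `process_spider_output` hook described above receives whatever the spider callback produced and must return an iterable. Below is a minimal sketch of such a hook, exercised without Scrapy; the class name `DropEmptyItemsMiddleware`, the `title` field and the stand-in `fake_parse` callback are assumptions made for illustration.

```python
class DropEmptyItemsMiddleware:
    """Hypothetical spider middleware: drops dict items that have no 'title'."""

    def process_spider_output(self, response, result, spider):
        # Pass requests and complete items through unchanged; because this
        # method is a generator, it returns an iterable as the hook requires.
        for obj in result:
            if isinstance(obj, dict) and not obj.get("title"):
                continue  # discard the incomplete item
            yield obj


def fake_parse(response):
    # Stand-in for a spider callback, used only to exercise the hook.
    yield {"title": "first"}
    yield {"title": ""}
    yield {"title": "second"}


middleware = DropEmptyItemsMiddleware()
kept = list(middleware.process_spider_output(None, fake_parse(None), None))
print(kept)
```

To use a class like this in a project, it would be registered in `SPIDER_MIDDLEWARES` in the same way as the commented-out entry above.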

- """

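- 22. Downloader middleware

- class DownMiddleware1(object):

- def process_request(self, request, spider):

- '''

- Called for every request that needs to be downloaded; the request passes through the process_request of each downloader middleware in turn.

- :param request:

- :param spider:

- :return:

- None: continue with the remaining middlewares and then download;

- Response object: stop calling process_request and start calling process_response;

- Request object: stop the middleware chain and hand the Request back to the scheduler;

- raise IgnoreRequest: stop calling process_request and start calling process_exception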

- '''

- pass

- def process_response(self, request, response, spider):

- '''

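- Called with the response returned by the downloader, before it reaches the engine.

- :param request:

- :param response:

- :param spider:

- :return:

- Response object: passed on to the process_response of the next middleware

- Request object: stop the middleware chain; the request is rescheduled for download

- raise IgnoreRequest: Request.errback is called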

- '''

- print('response1')

- return response

- def process_exception(self, request, exception, spider):

- '''

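- Called when a download handler or a process_request() (of a downloader middleware) raises an exception.

- :param request:

- :param exception:

- :param spider:

- :return:

- None: let the remaining middlewares handle the exception;

- Response object: stop calling further process_exception methods

- Request object: stop the middleware chain; the request is rescheduled for download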

- '''

- return None

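- Default downloader middlewares (module paths and priorities as in Scrapy releases before 1.0; from 1.0 on the modules live under scrapy.downloadermiddlewares)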

- {

- 'scrapy.contrib.downloadermiddleware.robotstxt.RobotsTxtMiddleware': 100,

- 'scrapy.contrib.downloadermiddleware.httpauth.HttpAuthMiddleware': 300,

- 'scrapy.contrib.downloadermiddleware.downloadtimeout.DownloadTimeoutMiddleware': 350,

- 'scrapy.contrib.downloadermiddleware.useragent.UserAgentMiddleware': 400,

- 'scrapy.contrib.downloadermiddleware.retry.RetryMiddleware': 500,

- 'scrapy.contrib.downloadermiddleware.defaultheaders.DefaultHeadersMiddleware': 550,

- 'scrapy.contrib.downloadermiddleware.redirect.MetaRefreshMiddleware': 580,

- 'scrapy.contrib.downloadermiddleware.httpcompression.HttpCompressionMiddleware': 590,

- 'scrapy.contrib.downloadermiddleware.redirect.RedirectMiddleware': 600,

- 'scrapy.contrib.downloadermiddleware.cookies.CookiesMiddleware': 700,

- 'scrapy.contrib.downloadermiddleware.httpproxy.HttpProxyMiddleware': 750,

- 'scrapy.contrib.downloadermiddleware.chunked.ChunkedTransferMiddleware': 830,

- 'scrapy.contrib.downloadermiddleware.stats.DownloaderStats': 850,

- 'scrapy.contrib.downloadermiddleware.httpcache.HttpCacheMiddleware': 900,

- }

- """

- # from scrapy.contrib.downloadermiddleware.httpauth import HttpAuthMiddleware

- # Enable or disable downloader middlewares

- # See http://scrapy.readthedocs.org/en/latest/topics/downloader-middleware.html

- # DOWNLOADER_MIDDLEWARES = {

- # 'step8_king.middlewares.DownMiddleware1': 100,

- # 'step8_king.middlewares.DownMiddleware2': 500,

- # }
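The return-value rules of `process_request` and `process_response` are easier to follow with a toy dispatcher. The sketch below is a simplified model and not Scrapy's implementation: requests and responses are plain strings, `fetch`, `CacheMiddleware` and `TagMiddleware` are hypothetical names, and the Request-return and exception paths are left out.

```python
class CacheMiddleware:
    """Hypothetical middleware that answers from a dict instead of downloading."""

    def __init__(self, cache):
        self.cache = cache

    def process_request(self, request, spider):
        # None means "keep going"; anything else short-circuits the download.
        return self.cache.get(request)

    def process_response(self, request, response, spider):
        return response


class TagMiddleware:
    """Hypothetical middleware that marks every response it sees."""

    def process_request(self, request, spider):
        return None

    def process_response(self, request, response, spider):
        return response + " [tagged]"


def fetch(request, middlewares, download, spider=None):
    response = None
    for mw in middlewares:                 # request direction
        response = mw.process_request(request, spider)
        if response is not None:
            break                          # a response was produced: skip the download
    if response is None:
        response = download(request)
    for mw in reversed(middlewares):       # response direction
        response = mw.process_response(request, response, spider)
    return response


mws = [CacheMiddleware({"http://a": "cached a"}), TagMiddleware()]
hit = fetch("http://a", mws, download=lambda r: "downloaded " + r)
miss = fetch("http://b", mws, download=lambda r: "downloaded " + r)
print(hit, "|", miss)
```

The cached request never reaches the download function, yet it still passes through every `process_response`, which mirrors the rule stated in the docstrings above.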

- settings

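Explanation of settings.py parameters

Supplement: some settings not covered here are described at https://www.cnblogs.com/Chenjiabing/p/6907251.html

- Pausing and resuming a crawl

- A crawl that stops halfway because of an unhandled error normally cannot continue from where it stopped. Scrapy can persist the crawl state to disk, so that a restarted spider picks up from the point of interruption. Note that if the crawl relies on cookies for a temporary login session, those cookies may have expired by the time the crawl is resumed.

- Set JOBDIR=<directory> in settings.py, where the value is the directory that stores the state. The directory must not be shared: it holds the state of a single run of a single spider. To start again from scratch instead of from the point of interruption, delete the directory.

- Alternatively, pass the setting when starting the spider from the terminal: scrapy crawl somespider -s JOBDIR=crawls/somespider-1. Stop the crawl with Ctrl+C and resume it by running the same command again.

Pausing and resuming a crawl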
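Because a job directory must belong to exactly one spider and one run, it can help to derive its name rather than type it by hand. This is a small sketch for settings.py; the helper `jobdir_for` and its naming scheme are assumptions, and only the setting name `JOBDIR` comes from Scrapy.

```python
import os


def jobdir_for(spider_name, run=1):
    """Build a job directory that is private to one spider and one run."""
    return os.path.join("crawls", "%s-%d" % (spider_name, run))


# settings.py fragment: Scrapy reads the state directory from JOBDIR.
JOBDIR = jobdir_for("somespider")
print(JOBDIR)
```

Incrementing `run` gives a fresh directory, which has the same effect as deleting the old one and starting over.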

- class xxxSpider(scrapy.Spider):

- name = 'xxx'

- '''omitted'''

- def parse(self, response):

- print(response.url)

- print(self.settings["ABCD"])

Other

To be added

TinyScrapy

Understanding the principles: Twisted step by step

- from twisted.web.client import getPage, defer

- from twisted.internet import reactor

- def all_done(arg):

- reactor.stop()

- def callback(contents):

- print(contents)

- deferred_list = []

- url_list = ['http://www.bing.com', 'http://www.baidu.com', ]

- for url in url_list:

- deferred = getPage(bytes(url, encoding='utf8'))

- deferred.addCallback(callback) # callback run when this single deferred completes

- deferred_list.append(deferred)

- dlist = defer.DeferredList(deferred_list)

- dlist.addBoth(all_done) # callback run once every deferred has returned a result

- reactor.run()

1. Run several URL fetches; each runs its own callback on success, and a final callback runs when the whole job is done

- from twisted.web.client import getPage, defer

- from twisted.internet import reactor

- def all_done(arg):

- reactor.stop()

- def onedone(response):

- print(response)

- @defer.inlineCallbacks

- def task(url):

- deferred = getPage(bytes(url, encoding='utf8'))

- deferred.addCallback(onedone)

- yield deferred

- deferred_list = []

- url_list = ['http://www.bing.com', 'http://www.baidu.com', ]

- for url in url_list:

- deferred = task(url) # the two operations below are wrapped in task(); each task still runs its own callback

- # deferred = getPage(url)

- # deferred.addCallback(onedone)

- deferred_list.append(deferred)

- dlist = defer.DeferredList(deferred_list)

- dlist.addBoth(all_done) # callback run once every deferred has returned a result

- reactor.run()

2. Wrap each task and its callback in a single function

- from twisted.web.client import getPage, defer

- from twisted.internet import reactor

- def all_done(arg):

- reactor.stop()

- def onedone(response):

- print(response)

- @defer.inlineCallbacks

- def task():

- deferred2 = getPage(bytes("http://www.baidu.com", encoding='utf8'))

- deferred2.addCallback(onedone)

- yield deferred2

- deferred1 = getPage(bytes("http://www.google.com", encoding='utf8')) # an unreachable URL, so its callback never runs

- deferred1.addCallback(onedone)

- yield deferred1

- ret = task()

- ret.addBoth(all_done) # callback run once every deferred has returned a result

- reactor.run()

3. One of the tasks requests an unreachable URL, so the callback of that task is never run

- from twisted.web.client import getPage, defer

- from twisted.internet import reactor

- def all_done(arg):

- reactor.stop()

- def onedone(response):

- print(response)

- @defer.inlineCallbacks

- def task():

- deferred2 = getPage(bytes("http://www.baidu.com", encoding='utf8'))

- deferred2.addCallback(onedone)

- yield deferred2

- stop_deferred = defer.Deferred() # a deferred that never fires on its own; unless its callback is fired manually, the program blocks here

- stop_deferred.callback("aasdfsdf") # fire the callback manually

- yield stop_deferred

- ret = task()

- ret.addBoth(all_done) # callback run once every deferred has returned a result

- reactor.run()

4. Manually fire the callback of a blocked task

- from twisted.web.client import getPage, defer

- from twisted.internet import reactor

- running_list = []

- stop_deferred = None

- def all_done(arg):

- reactor.stop()

- def onedone(response,url):

- print(response)

- running_list.remove(url) # remove the URL finished by this callback from the list of running tasks

- def check_empty(response):

- if not running_list: # if the list of running tasks is empty, manually fire the blocked deferred

- stop_deferred.callback(None)

- @defer.inlineCallbacks

- def open_spider(url): # the crawl task is wrapped here

- deferred2 = getPage(bytes(url, encoding='utf8')) # start the task

- deferred2.addCallback(onedone, url) # callback

- deferred2.addCallback(check_empty) # check whether the running list is empty; if so, fire the blocked deferred manually

- yield deferred2

- @defer.inlineCallbacks

- def stop(url):

- global stop_deferred # the deferred that blocks until it is fired manually

- stop_deferred = defer.Deferred()

- yield stop_deferred

- @defer.inlineCallbacks

- def task(url):

- yield open_spider(url) # start the crawl task

- yield stop(url) # start the blocking task

- running_list.append("http://www.baidu.com")

- ret = task("http://www.baidu.com")

- ret.addBoth(all_done)

- reactor.run()

5. Watch the list of running tasks and fire the blocked deferred manually at the right moment

- from twisted.web.client import getPage, defer

- from twisted.internet import reactor

- class ExecutionEngine(object):

- def __init__(self):

- self.stop_deferred = None

- self.running_list = []

- def onedone(self,response,url):

- print(response)

- self.running_list.remove(url) # remove the URL finished by this callback from the list of running tasks

- def check_empty(self,response):

- if not self.running_list: # watch the list of running tasks; if it is empty, manually fire the blocked deferred

- self.stop_deferred.callback(None)

- @defer.inlineCallbacks

- def open_spider(self,url): # crawl task

- deferred2 = getPage(bytes(url, encoding='utf8'))

- deferred2.addCallback(self.onedone, url) # add the callback

- deferred2.addCallback(self.check_empty) # add a second callback that watches the list of running tasks

- yield deferred2

- @defer.inlineCallbacks

- def stop(self,url):

- self.stop_deferred = defer.Deferred() # blocking task

- yield self.stop_deferred

- @defer.inlineCallbacks

- def task(url):

- engine = ExecutionEngine()

- engine.running_list.append(url) # add the new URL to the list of running tasks

- yield engine.open_spider(url)

- yield engine.stop(url)

- def all_done(arg):

- reactor.stop()

- if __name__ == '__main__':

- ret = task("http://www.baidu.com")

- ret.addBoth(all_done)

- reactor.run()

6. Wrap step 5 in a class

Building TinyScrapy

- #!/usr/bin/env python

- # -*- coding:utf-8 -*-

- from twisted.web.client import getPage, defer

- from twisted.internet import reactor

- import queue

- class Request(object):

- def __init__(self, url, callback):

- self.url = url

- self.callback = callback

- class Scheduler(object):

- def __init__(self, engine):

- self.q = queue.Queue()

- self.engine = engine

- def enqueue_request(self, request):

- """

- :param request:

- :return:

- """

- self.q.put(request)

- def next_request(self):

- try:

- req = self.q.get(block=False)

- except Exception as e:

- req = None

- return req

- def size(self):

- return self.q.qsize()

- class ExecutionEngine(object):

- def __init__(self):

- self._closewait = None

- self.running = True

- self.start_requests = None

- self.scheduler = Scheduler(self)

- self.inprogress = set()

- def check_empty(self, response):

- if not self.running:

- self._closewait.callback('......')

- def _next_request(self):

- while self.start_requests:

- try:

- request = next(self.start_requests)

- except StopIteration:

- self.start_requests = None

- else:

- self.scheduler.enqueue_request(request)

- print(len(self.inprogress), self.scheduler.size())

- while len(self.inprogress) < 5 and self.scheduler.size() > 0: # at most 5 concurrent downloads

- request = self.scheduler.next_request()

- if not request:

- break

- self.inprogress.add(request)

- d = getPage(bytes(request.url, encoding='utf-8'))

- d.addBoth(self._handle_downloader_output, request)

- d.addBoth(lambda x, req: self.inprogress.remove(req), request)

- d.addBoth(lambda x: self._next_request())

- if len(self.inprogress) == 0 and self.scheduler.size() == 0:

- self._closewait.callback(None)

- def _handle_downloader_output(self, response, request):

- """

- Take the downloaded content, run the request's callback, and enqueue any requests the callback yields

- :param response:

- :param request:

- :return:

- """

- import types

- gen = request.callback(response)

- if isinstance(gen, types.GeneratorType):

- for req in gen:

- self.scheduler.enqueue_request(req)

- @defer.inlineCallbacks

- def start(self):

- self._closewait = defer.Deferred()

- yield self._closewait

- @defer.inlineCallbacks

- def open_spider(self, start_requests):

- self.start_requests = start_requests

- yield None

- reactor.callLater(0, self._next_request)

- @defer.inlineCallbacks

- def crawl(start_requests):

- engine = ExecutionEngine()

- start_requests = iter(start_requests)

- yield engine.open_spider(start_requests)

- yield engine.start()

- def _stop_reactor(_=None):

- reactor.stop()

- def callback(response):

- print(response) # terminal callback: yields no further requests

- def parse(response):

- for i in range(10):

- yield Request("http://dig.chouti.com/all/hot/recent/%s" % i, callback)

- if __name__ == '__main__':

- start_requests = [Request("http://www.baidu.com", parse), Request("http://www.baidu1.com", parse), ]

- ret = crawl(start_requests)

- ret.addBoth(_stop_reactor)

- reactor.run()

Stripped-down version, to make the flow easier to follow
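The Scheduler in the listing above catches a bare `Exception` around the non-blocking `get`. A narrower variant, sketched here with the standard library only, catches `queue.Empty`, the exception `queue.Queue` raises when nothing is available. The engine reference is dropped and plain URL strings stand in for Request objects; both are simplifications for illustration.

```python
import queue


class Scheduler:
    """FIFO request queue with a non-blocking next_request()."""

    def __init__(self):
        self.q = queue.Queue()

    def enqueue_request(self, request):
        self.q.put(request)

    def next_request(self):
        try:
            return self.q.get_nowait()
        except queue.Empty:
            return None  # nothing queued right now

    def size(self):
        return self.q.qsize()


scheduler = Scheduler()
scheduler.enqueue_request("http://example.com/1")
scheduler.enqueue_request("http://example.com/2")
drained = [scheduler.next_request() for _ in range(3)]
print(drained)
```

Catching only `queue.Empty` keeps unrelated errors visible instead of silently turning them into "no request available".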

- #!/usr/bin/env python

- # -*- coding:utf-8 -*-

- from twisted.web.client import getPage, defer

- from twisted.internet import reactor

- import queue

- class Response(object):

- def __init__(self, body, request):

- self.body = body

- self.request = request

- self.url = request.url

- @property

- def text(self):

- return self.body.decode('utf-8')

- class Request(object):

- def __init__(self, url, callback=None):

- self.url = url

- self.callback = callback

- class Scheduler(object):

- def __init__(self, engine):

- self.q = queue.Queue()

- self.engine = engine

- def enqueue_request(self, request):

- self.q.put(request)

- def next_request(self):

- try:

- req = self.q.get(block=False)

- except Exception as e:

- req = None

- return req

- def size(self):

- return self.q.qsize()

- class ExecutionEngine(object):

- def __init__(self):

- self._closewait = None

- self.running = True

- self.start_requests = None

- self.scheduler = Scheduler(self)

- self.inprogress = set()

- def check_empty(self, response):

- if not self.running:

- self._closewait.callback('......')

- def _next_request(self):

- while self.start_requests:

- try:

- request = next(self.start_requests)

- except StopIteration:

- self.start_requests = None

- else:

- self.scheduler.enqueue_request(request)

- while len(self.inprogress) < 5 and self.scheduler.size() > 0: # at most 5 concurrent downloads

- request = self.scheduler.next_request()

- if not request:

- break

- self.inprogress.add(request)

- d = getPage(bytes(request.url, encoding='utf-8'))

- d.addBoth(self._handle_downloader_output, request)

- d.addBoth(lambda x, req: self.inprogress.remove(req), request)

- d.addBoth(lambda x: self._next_request())

- if len(self.inprogress) == 0 and self.scheduler.size() == 0:

- self._closewait.callback(None)

- def _handle_downloader_output(self, body, request):

- """

- Take the downloaded content, run the request's callback, and enqueue any requests the callback yields

- :param response:

- :param request:

- :return:

- """

- import types

- response = Response(body, request)

- func = request.callback or self.spider.parse

- gen = func(response)

- if isinstance(gen, types.GeneratorType):

- for req in gen:

- self.scheduler.enqueue_request(req)

- @defer.inlineCallbacks

- def start(self):

- self._closewait = defer.Deferred()

- yield self._closewait

- @defer.inlineCallbacks

- def open_spider(self, spider, start_requests):

- self.start_requests = start_requests

- self.spider = spider

- yield None

- reactor.callLater(0, self._next_request)

- class Crawler(object):

- def __init__(self, spidercls):

- self.spidercls = spidercls

- self.spider = None

- self.engine = None

- @defer.inlineCallbacks

- def crawl(self):

- self.engine = ExecutionEngine()

- self.spider = self.spidercls()

- start_requests = iter(self.spider.start_requests())

- yield self.engine.open_spider(self.spider, start_requests)

- yield self.engine.start()

- class CrawlerProcess(object):

- def __init__(self):

- self._active = set()

- self.crawlers = set()

- def crawl(self, spidercls, *args, **kwargs):

- crawler = Crawler(spidercls)

- self.crawlers.add(crawler)

- d = crawler.crawl(*args, **kwargs)

- self._active.add(d)

- return d

- def start(self):

- dl = defer.DeferredList(self._active)

- dl.addBoth(self._stop_reactor)

- reactor.run()

- def _stop_reactor(self, _=None):

- reactor.stop()

- class Spider(object):

- def start_requests(self):

- for url in self.start_urls:

- yield Request(url)

- class ChoutiSpider(Spider):

- name = "chouti"

- start_urls = [

- 'http://dig.chouti.com/',

- ]

- def parse(self, response):

- print(response.text)

- class CnblogsSpider(Spider):

- name = "cnblogs"

- start_urls = [

- 'http://www.cnblogs.com/',

- ]

- def parse(self, response):

- print(response.text)

- if __name__ == '__main__':

- spider_cls_list = [ChoutiSpider, CnblogsSpider]

- crawler_process = CrawlerProcess()

- for spider_cls in spider_cls_list:

- crawler_process.crawl(spider_cls)

- crawler_process.start()

TinyScrapy
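The engine loop above has three moving parts: a queue of pending requests, a bounded set of in-progress downloads, and a shutdown condition that triggers when both are empty. The sketch below restates that loop with `asyncio` from the standard library in place of Twisted, so it is an analogy rather than a port. `fake_download` performs no network I/O, and all names and URLs are assumptions made for illustration.

```python
import asyncio

MAX_CONCURRENCY = 5  # same limit as the engine above


async def fake_download(url):
    # Stand-in for the network call; no real HTTP request is made.
    await asyncio.sleep(0)
    return "body of %s" % url


async def fetch(url):
    return url, await fake_download(url)


async def crawl(start_urls, parse):
    pending = list(start_urls)   # plays the role of the scheduler queue
    seen = set(pending)
    in_progress = set()          # tasks that are currently downloading
    peak = 0
    # Shutdown condition: nothing queued and nothing in progress.
    while pending or in_progress:
        # Top up to the concurrency limit.
        while pending and len(in_progress) < MAX_CONCURRENCY:
            in_progress.add(asyncio.ensure_future(fetch(pending.pop(0))))
        peak = max(peak, len(in_progress))
        done, in_progress = await asyncio.wait(
            in_progress, return_when=asyncio.FIRST_COMPLETED
        )
        for task in done:
            url, body = task.result()
            for new_url in parse(url, body):   # callback output is enqueued
                if new_url not in seen:
                    seen.add(new_url)
                    pending.append(new_url)
    return seen, peak


def parse(url, body):
    # Only the start page yields follow-up URLs in this toy example.
    if url == "http://example.com/":
        return ["http://example.com/page/%d" % i for i in range(8)]
    return []


seen, peak = asyncio.run(crawl(["http://example.com/"], parse))
print(len(seen), peak)
```

The `seen` set is an addition that TinyScrapy does not have; without it, a callback that yields the same URL repeatedly would keep the loop alive forever.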

Examples

- # -*- coding: utf-8 -*-

- import scrapy

- from scrapy.http import Request

- from scrapy.selector import Selector

- class ChoutiSpider(scrapy.Spider):

- name = 'chouti'

- allowed_domains = ['chouti.com']

- start_urls = ['http://chouti.com/']

- cookie_dict = {}

- def start_requests(self):

- for url in self.start_urls:

- yield Request(url, dont_filter=True,callback=self.parse)

- def parse(self,response):

- from scrapy.http.cookies import CookieJar

- cookie_jar = CookieJar() # object that wraps the cookies

- cookie_jar.extract_cookies(response,response.request) # read the cookies from the response

- for k, v in cookie_jar._cookies.items():

- for i, j in v.items():

- for m, n in j.items():

- self.cookie_dict[m] = n.value

- from urllib.parse import urlencode

- post_dict = {

- "phone":"8618xxxxxxxxxx",

- "password":"xxxx",

- "oneMonth":1,

- }

- yield Request(

- url = "http://dig.chouti.com/login",

- method="POST",

- cookies=self.cookie_dict,

- body=urlencode(post_dict),

- headers={

- "Content-Type":"application/x-www-form-urlencoded;charset=UTF-8",

- },

- callback=self.parse2,

- )

- def parse2(self,response):

- # print(response.text)

- yield Request(

- url="http://dig.chouti.com",

- cookies=self.cookie_dict,

- callback=self.parse3,

- )

- def parse3(self,response):

- # find the div tags with class=part2 and read their share-linkid attribute

- hxs = Selector(response)

- linkid_list = hxs.xpath("//div[@class='part2']/@share-linkid").extract()

- # print(linkid_list)

- for linkid in linkid_list:

- base_url = "https://dig.chouti.com/link/vote?linksId={linkid}".format(linkid=linkid)

- yield Request(

- method="POST",

- url=base_url,

- cookies=self.cookie_dict,

- callback=self.parse4,

- )

- def parse4(self,response):

- print(response.text)

Upvoting every post on the first page of chouti.com

- # -*- coding: utf-8 -*-

- import scrapy

- from scrapy.http import Request

- from scrapy.selector import Selector

- class ChoutiSpider(scrapy.Spider):

- name = 'chouti'

- allowed_domains = ['chouti.com']

- start_urls = ['http://chouti.com/']

- cookie_dict = {}

- def start_requests(self):

- for url in self.start_urls:

- yield Request(url, dont_filter=True,callback=self.parse)

- def parse(self,response):

- from scrapy.http.cookies import CookieJar

- cookie_jar = CookieJar() # object that wraps the cookies

- cookie_jar.extract_cookies(response,response.request) # read the cookies from the response

- for k, v in cookie_jar._cookies.items():

- for i, j in v.items():

- for m, n in j.items():

- self.cookie_dict[m] = n.value

- from urllib.parse import urlencode

- post_dict = {

- "phone":"8618xxxxxxxxxx",

- "password":"xxxx",

- "oneMonth":1,

- }

- yield Request(

- url = "http://dig.chouti.com/login",

- method="POST",

- cookies=self.cookie_dict,

- body=urlencode(post_dict),

- headers={

- "Content-Type":"application/x-www-form-urlencoded;charset=UTF-8",

- },

- callback=self.parse2,

- )

- def parse2(self,response):

- # print(response.text)

- yield Request(

- url="http://dig.chouti.com",

- cookies=self.cookie_dict,

- callback=self.parse3,

- )

- def parse3(self,response):

- # find the div tags with class=part2 and read their share-linkid attribute

- hxs = Selector(response)

- linkid_list = hxs.xpath("//div[@class='part2']/@share-linkid").extract()

- # print(linkid_list)

- for linkid in linkid_list:

- # take each ID and upvote it

- base_url = "https://dig.chouti.com/link/vote?linksId={linkid}".format(linkid=linkid)

- yield Request(

- method="POST",

- url=base_url,

- cookies=self.cookie_dict,

- callback=self.parse4,

- )

- page_list = hxs.xpath("//div[@id='dig_lcpage']//a/@href").extract()

- for page in page_list:

- # /all/hot/recent/2

- page_url = "http://dig.chouti.com{}".format(page)

- yield Request(url=page_url,method="GET",callback=self.parse3)

- def parse4(self,response):

- print(response.text)

Upvoting all posts on chouti.com (follows every page, with no throttling)
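The pagination step above builds absolute URLs by string formatting, and the same page link usually appears on several pages. The sketch below shows both concerns with the standard library: `urljoin` resolves relative links, and a set removes repeats while keeping order. The helper name `absolute_page_urls` is an assumption. Inside a spider, `response.urljoin()` does the resolving, and Scrapy's duplicate filter drops repeated requests unless `dont_filter=True` is set.

```python
from urllib.parse import urljoin


def absolute_page_urls(base_url, hrefs):
    """Resolve hrefs against base_url, dropping duplicates but keeping order."""
    seen = set()
    urls = []
    for href in hrefs:
        url = urljoin(base_url, href)
        if url not in seen:
            seen.add(url)
            urls.append(url)
    return urls


pages = absolute_page_urls(
    "http://dig.chouti.com",
    ["/all/hot/recent/2", "/all/hot/recent/3", "/all/hot/recent/2"],
)
print(pages)
```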

Supplement

Sending POST requests

- import scrapy

- from scrapy.http import Request,FormRequest

- class mySpider(scrapy.Spider):

- # start_urls = ["http://www.example.com/"]

- def start_requests(self):

- url = 'http://www.renren.com/PLogin.do'

- # FormRequest is the Scrapy request class for sending form data with POST

- yield scrapy.FormRequest(

- url = url,

- formdata = {"email" : "xxx", "password" : "xxxxx"},

- callback = self.parse_page

- )

- def parse_page(self, response):

- # do something

- pass

Sending POST requests
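A form POST carries its fields URL-encoded in the request body, which is what the chouti example builds by hand with `urlencode` and what `FormRequest` produces from `formdata`. A minimal sketch of that encoding with the standard library, using placeholder field values:

```python
from urllib.parse import urlencode

formdata = {"email": "xxx", "password": "xxxxx"}

# The body of a form POST is the URL-encoded key/value pairs.
body = urlencode(formdata)
headers = {"Content-Type": "application/x-www-form-urlencoded"}
print(body)
```

Passing this `body` and `headers` to a plain `Request` with `method="POST"` sends the same form fields as the `FormRequest` call above.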

Stats collector

https://www.cnblogs.com/sufei-duoduo/p/5881385.html

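- Scrapy provides a convenient mechanism for collecting stats. Stats are stored as key/value pairs, and the values are mostly counters. The mechanism is called the Stats Collector and is available through the stats attribute of the Crawler API.

- The stats collector is always available, whether stats collection is enabled or not, so you can always import it in your own module and use its API (to increment a value or set a new stats key). This keeps stats collection simple: collecting a stat in your spider, a Scrapy extension, or any other code that uses the stats collector should take no more than one line of code.

- Another feature of the stats collector is that it is very efficient when enabled and extremely efficient (almost unnoticeable) when disabled.

- The stats collector keeps one stats table per spider. The table is opened automatically when the spider is opened and closed automatically when the spider is closed.

Available stats collector types

- Available stats collectors

- Besides the basic StatsCollector, Scrapy provides other collectors built on it. Select one with the STATS_CLASS setting. The default is MemoryStatsCollector.

- MemoryStatsCollector

- class scrapy.statscol.MemoryStatsCollector (scrapy.statscollectors.MemoryStatsCollector from Scrapy 1.0 on)

- A simple stats collector that keeps the stats of each spider's last crawl in memory after the spider is closed. The stats can be accessed through the spider_stats attribute, a dict keyed by spider name.

- This is the default stats collector in Scrapy.

- spider_stats

- A dict of dicts, keyed by spider name, holding the stats of each spider's last crawl.

- DummyStatsCollector

- class scrapy.statscol.DummyStatsCollector (scrapy.statscollectors.DummyStatsCollector from Scrapy 1.0 on)

- A stats collector that does nothing but is very efficient. Set it through STATS_CLASS to disable stats collection and save a little overhead. The cost of stats collection is, however, usually marginal compared with other Scrapy work such as parsing pages.

Stats collector types

Usage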

- # set a value:

- stats.set_value('hostname', socket.gethostname())

- # increment a value:

- stats.inc_value('pages_crawled')

- # set a value only if it is greater than the current one:

- stats.max_value('max_items_scraped', value)

- # set a value only if it is lower than the current one:

- stats.min_value('min_free_memory_percent', value)

- # get a value:

- >>> stats.get_value('pages_crawled')

- # get all stats:

- >>> stats.get_stats()

- {'pages_crawled': 1238, 'start_time': datetime.datetime(2009, 7, 14, 21, 47, 28, 977139)}

Common methods
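The semantics of the methods listed above can be tried without a running crawl. The class below is a toy in-memory model that reuses the method names from the listing; it is not Scrapy's implementation, and it omits the optional `spider` argument that the real methods accept.

```python
class TinyStats:
    """Toy key/value stats store mirroring the method names used above."""

    def __init__(self):
        self._stats = {}

    def set_value(self, key, value):
        self._stats[key] = value

    def get_value(self, key, default=None):
        return self._stats.get(key, default)

    def inc_value(self, key, count=1, start=0):
        self._stats[key] = self._stats.get(key, start) + count

    def max_value(self, key, value):
        # Keep the larger of the stored and the new value.
        self._stats[key] = max(self._stats.get(key, value), value)

    def min_value(self, key, value):
        # Keep the smaller of the stored and the new value.
        self._stats[key] = min(self._stats.get(key, value), value)

    def get_stats(self):
        return self._stats


stats = TinyStats()
stats.inc_value("pages_crawled")
stats.inc_value("pages_crawled")
stats.max_value("max_items_scraped", 10)
stats.max_value("max_items_scraped", 7)   # ignored: lower than the stored 10
stats.min_value("min_free_memory_percent", 40)
stats.min_value("min_free_memory_percent", 25)
print(stats.get_stats())
```

A key that was never set returns `None` from `get_value`, which matches the `item_scraped None` line in the sample output further down, where no item was scraped.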

- class ExtensionThatAccessStats(object):

- def __init__(self, stats):

- self.stats = stats

- @classmethod

- def from_crawler(cls, crawler):

- return cls(crawler.stats)

Usage in an extension

- """

- Extension for collecting core stats like items scraped and start/finish times

- """

- import datetime

- from scrapy import signals

- class CoreStats(object):

- def __init__(self, stats):

- self.stats = stats

- @classmethod

- def from_crawler(cls, crawler):

- o = cls(crawler.stats)

- crawler.signals.connect(o.spider_opened, signal=signals.spider_opened)

- crawler.signals.connect(o.spider_closed, signal=signals.spider_closed)

- crawler.signals.connect(o.item_scraped, signal=signals.item_scraped)

- crawler.signals.connect(o.item_dropped, signal=signals.item_dropped)

- crawler.signals.connect(o.response_received, signal=signals.response_received)

- return o

- def spider_opened(self, spider):

- print("haha")

- self.stats.set_value('start_time', datetime.datetime.utcnow(), spider=spider)

- def spider_closed(self, spider, reason):

- self.stats.set_value('finish_time', datetime.datetime.utcnow(), spider=spider)

- self.stats.set_value('finish_reason', reason, spider=spider)

- print("spider_closed","spider start time",self.stats.get_value("start_time"))

- print("spider_closed","item_scraped",self.stats.get_value("item_scraped_count"))

- print("spider_closed","response_received",self.stats.get_value("response_received_count"))

- print("spider_closed","spider finish time",self.stats.get_value("finish_time"))

- print("spider_closed","spider finish reason",self.stats.get_value("finish_reason"))

- def item_scraped(self, item, spider):

- self.stats.inc_value('item_scraped_count', spider=spider)

- def response_received(self, spider):

- self.stats.inc_value('response_received_count', spider=spider)

- def item_dropped(self, item, spider, exception):

- reason = exception.__class__.__name__

- self.stats.inc_value('item_dropped_count', spider=spider)

- self.stats.inc_value('item_dropped_reasons_count/%s' % reason, spider=spider)

- """

- haha

- spider_closed spider start time 2018-11-03 16:44:18.618151

- spider_closed item_scraped None

- spider_closed response_received 4

- spider_closed spider finish time 2018-11-03 16:44:21.951843

- spider_closed spider finish reason finished

- """

Example

log

https://scrapy-chs.readthedocs.io/zh_CN/latest/topics/logging.html#log-levels

- Logging

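- Scrapy provides a logging facility through the scrapy.log module. The current implementation is built on Twisted logging, but this may change in the future.

- The logging service must be started explicitly by calling scrapy.log.start() in order to capture top-level Scrapy log messages. On top of that, each crawler has its own independent log observer (attached automatically on creation) that receives the log messages of its spider.

- Log levels

- Scrapy provides 5 logging levels:

- CRITICAL - critical errors

- ERROR - regular errors

- WARNING - warning messages

- INFO - informational messages

- DEBUG - debugging messages

- How to set the log level

- Set the log level with the command line option --loglevel/-L or with the LOG_LEVEL setting.

- How to log messages

- The following example logs a message at the WARNING level: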

- from scrapy import log

- log.msg("This is a warning", level=log.WARNING)

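- Logging from Spiders

- The recommended way to log from a spider is the Spider log() method, which fills in the spider argument of scrapy.log.msg() automatically. All other arguments are passed straight to msg().

- The scrapy.log module

- scrapy.log.start(logfile=None, loglevel=None, logstdout=None)

- Starts the top-level Scrapy logger. It must be called before any top-level message is logged (that is, messages logged through the module-level msg() rather than Spider.log); otherwise the earlier messages are lost.

- Parameters:

- logfile (str): path of the file that receives the log output. If omitted, the LOG_FILE setting is used. If both are None, the log is written to standard error.

- loglevel: the minimum level to log. Available values: CRITICAL, ERROR, WARNING, INFO and DEBUG.

- logstdout (boolean): if True, all standard output (and errors) of your application is logged instead. For example, calling print 'hello' makes 'hello' appear in the Scrapy log. If omitted, the LOG_STDOUT setting is used.

- scrapy.log.msg(message, level=INFO, spider=None)

- Log a message

- Parameters:

- message (str): the message to log

- level: the log level of the message. See Log levels.

- spider (Spider object): the spider that logs the message. This parameter must be used when the message relates to a specific spider.

- scrapy.log.CRITICAL

- Log level for critical errors

- scrapy.log.ERROR

- Log level for errors

- scrapy.log.WARNING

- Log level for warnings

- scrapy.log.INFO

- Log level for informational messages (recommended for production deployments)

- scrapy.log.DEBUG

- Log level for debugging messages (recommended during development)

- Logging settings

- The following settings can be used to configure logging: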

- LOG_ENABLED

- LOG_ENCODING

- LOG_FILE

- LOG_LEVEL

- LOG_STDOUT

logging

Example

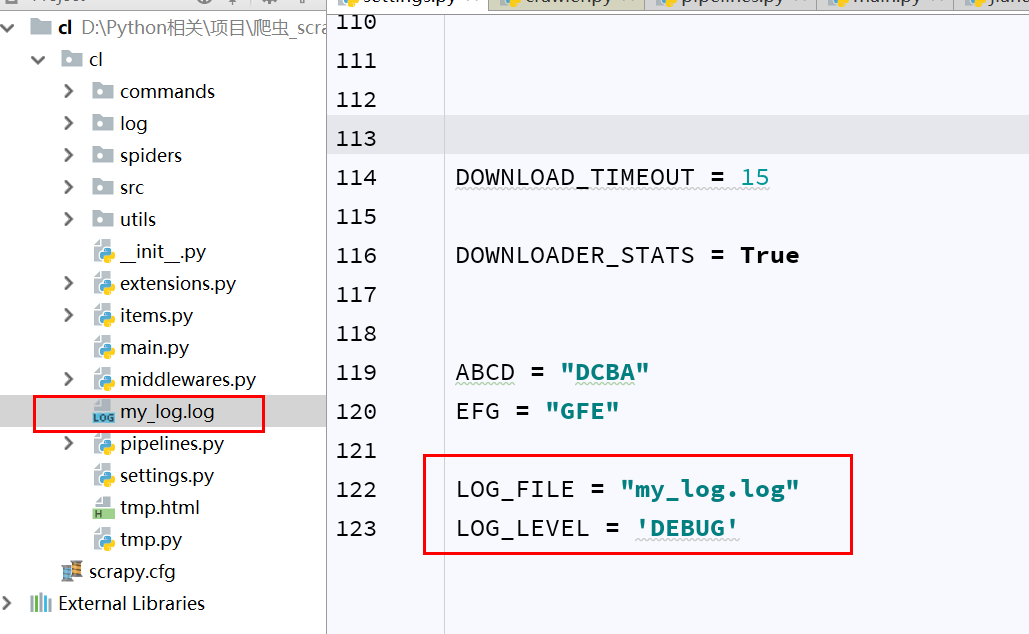

- LOG_FILE = "my_log.log"

- LOG_LEVEL = 'DEBUG'

settings.py

- class JiandanSpider(scrapy.Spider):

- """omitted"""

- def parse(self, response):

- import logging

- self.log("test scrapy log",level=logging.ERROR)

- self.log("test scrapy log")

spiders/xx.py
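The scrapy.log module documented above was deprecated in Scrapy 1.0, and since then Scrapy logs through Python's standard `logging` module. The sketch below shows level filtering with the standard library alone. The logger name `jiandan` and the `ListHandler` class are assumptions for illustration, and `setLevel` plays the role that `LOG_LEVEL` plays in settings.py.

```python
import logging


class ListHandler(logging.Handler):
    """Collects (level, message) pairs in memory so the result can be inspected."""

    def __init__(self):
        super().__init__()
        self.messages = []

    def emit(self, record):
        self.messages.append((record.levelname, record.getMessage()))


logger = logging.getLogger("jiandan")   # hypothetical logger name
handler = ListHandler()
logger.addHandler(handler)
logger.setLevel(logging.WARNING)        # comparable to LOG_LEVEL = 'WARNING'

logger.debug("not recorded: below the configured level")
logger.warning("This is a warning")
logger.error("test scrapy log")
print(handler.messages)
```

In a spider on Scrapy 1.0 or later, `self.logger` is a ready-made logger named after the spider, so `self.logger.warning(...)` can be used in place of a hand-built logger.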

References and reposted sources

http://www.cnblogs.com/wupeiqi/articles/6229292.html

http://scrapy-chs.readthedocs.io/zh_CN/latest/index.html
